Depalletizing Vision Parameter Adjustment Guide


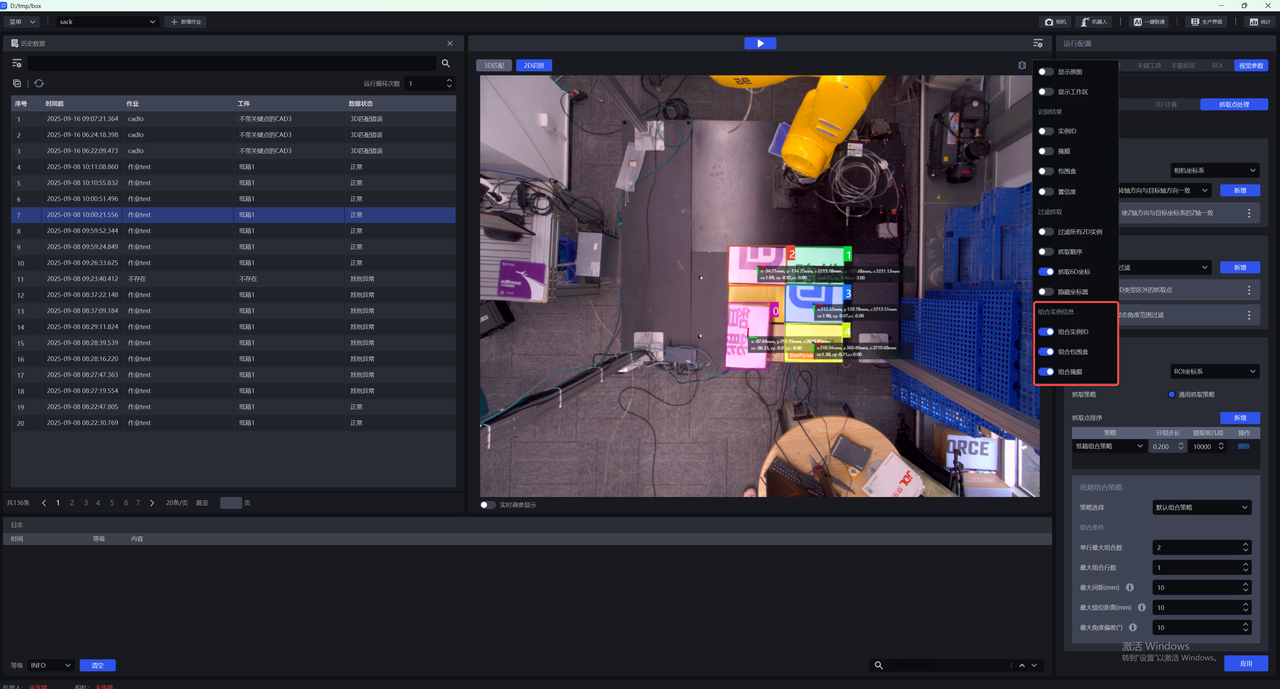

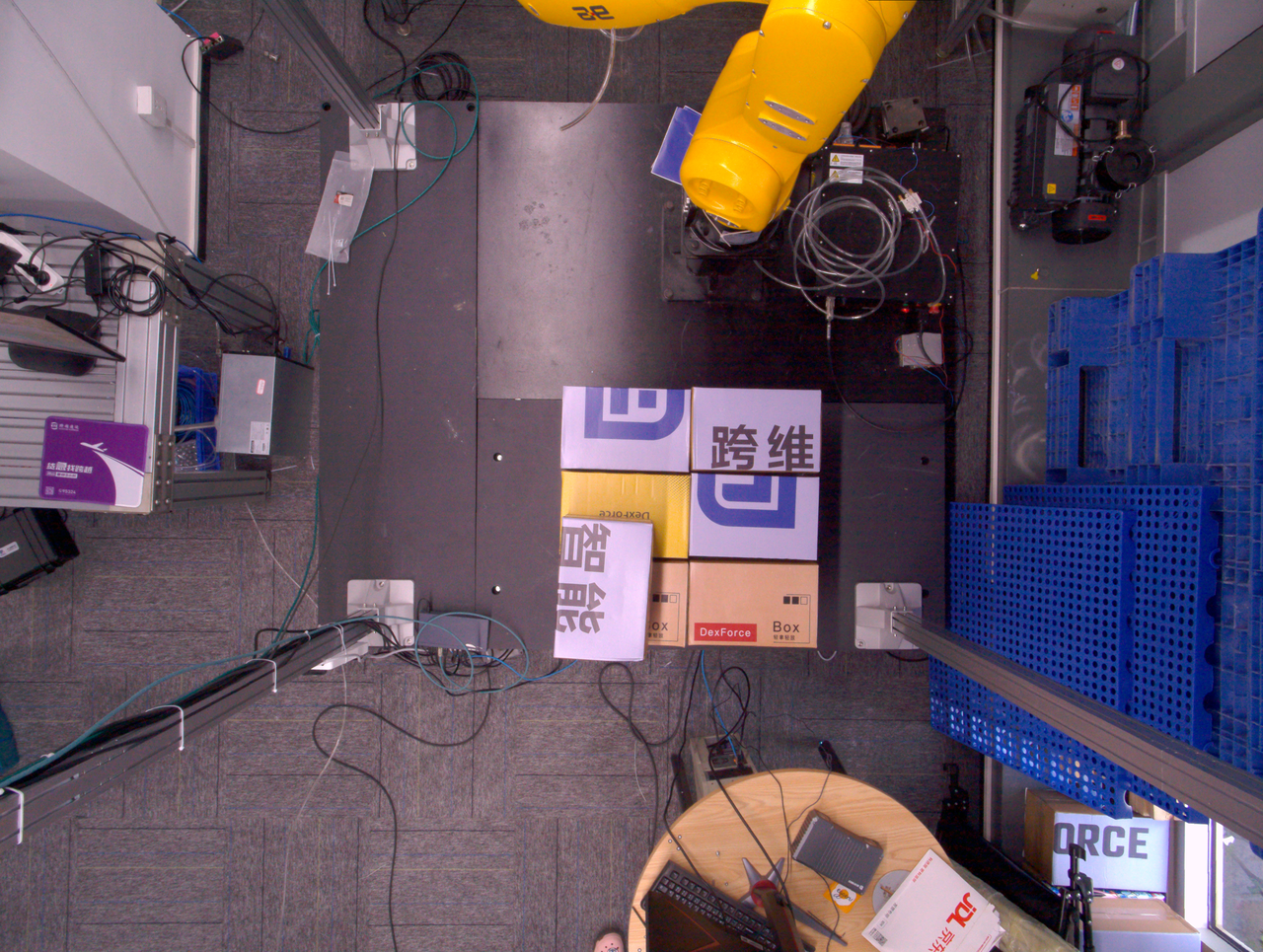

This chapter explains how to adjust vision parameters to suit the actual scene in depalletizing applications.

Getting Started:

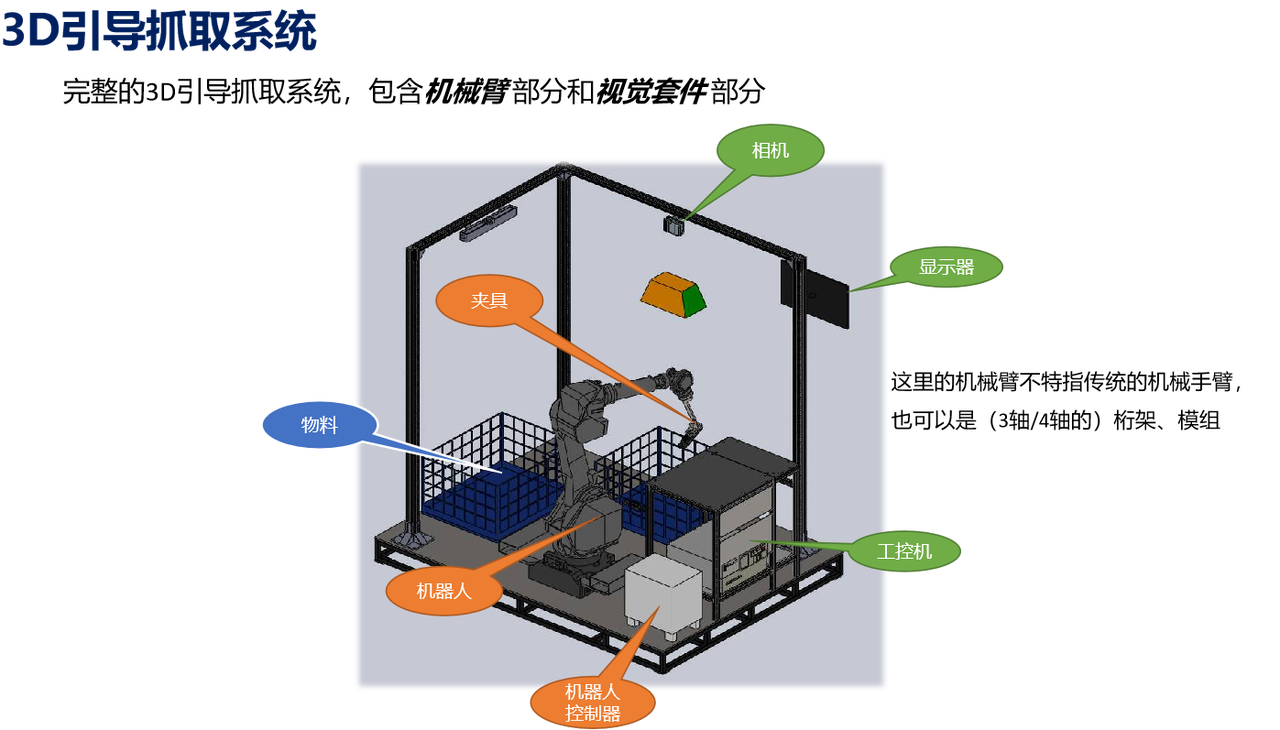

Background Introduction

Build a Project

(1) Create a new single-sack depalletizing, single-carton depalletizing, or carton mixed depalletizing project. The project name and project path can be customized, but the project name cannot contain Chinese characters.

Choose the project type according to the Target Object type: single-type sacks use single-sack depalletizing, single-type cartons use single-carton depalletizing, and multiple types of cartons use carton mixed depalletizing.

Optional functional options include Instance Segmentation optimization, vision classification, and pallet detection

(2) Camera and Robot configuration

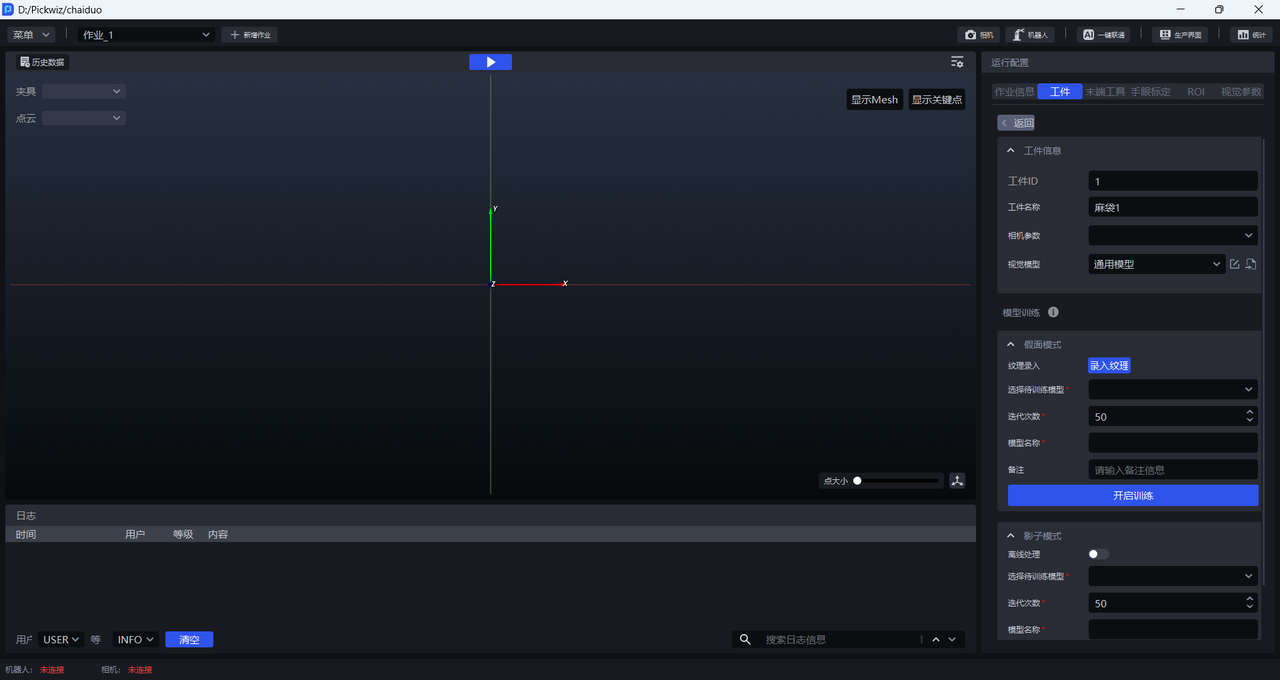

(3) Add Target Object

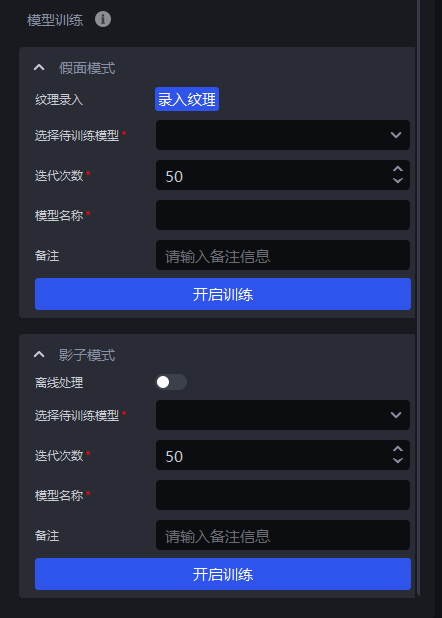

The vision model defaults to the general model that matches the project type: the general sack model for single-sack depalletizing, the general single-carton depalletizing model, or the general carton mixed depalletizing model. If the vision segmentation results are unsatisfactory, you can train the model yourself based on the actual scenario. There are two training methods, Mask mode and Shadow mode, and each takes about 1 hour. If there are few patterns on the sack surface, use Mask mode to improve recognition of specific textures. If there are many patterns on the sack surface and the Instance Segmentation success rate has already reached 95% but you want an even higher success rate, use Shadow mode.

Mask mode: Provide texture samples. Find data that was not recognized in the historical data and import the images from the instance\input folder. Select the target sack in each image, use at least 10 samples, then click Start Training and wait about 1 hour. See How to use mask mode.

Shadow mode: Shadow data refers to images with good vision segmentation results that are retained during production. Training with Shadow mode therefore requires a period of production first, so that shadow data exists before training begins. See How to use shadow mode.

(4) Add the Tool, hand-eye calibration, and ROI

(5) Functional options: Instance Segmentation optimization, pallet detection, vision classification

Instance Segmentation optimization: Optimizes model-generated instances and processes instance Mask.

Pallet detection: Enable this in scenarios where the pallet itself needs to be picked. After it is enabled, the pallet can be picked once normal Target Object picking is completed. See the Pallet detection guide.

Vision classification: Used to identify features such as different textures and different orientations of the same Target Object. See the Vision classification guide.

(6) Test data: historical data is provided for practice. When configuring the ROI, you can use the 2D images and 3D Point Cloud in the foreground\input folder of the historical data instead of capturing images with the Camera.

Sack data:

Carton data:

Vision Parameters

- 2D Recognition: identify and segment instances from the actual scenario

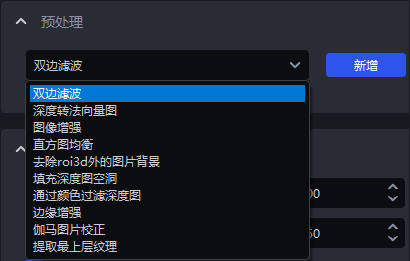

Preprocessing: process the 2D image before Instance Segmentation (edge enhancement)

Instance Segmentation: identify and segment instances (scale ratio & lower confidence threshold & auto enhancement)

Point Cloud generation: choose how the instance Point Cloud is generated, using the Mask or bounding box of either the segmented instances or the filtered instances

Instance filtering: filter the segmented instances

Instance sorting (cartons): sort instances; the depalletizing picking order follows the instance sorting order

- 3D calculation: calculate the pose of the instance in the camera coordinate system and generate Pick Points

Preprocessing: preprocess the 3D Point Cloud before calculating Pick Points (calculate self vertical pose, remove Point Cloud outliers)

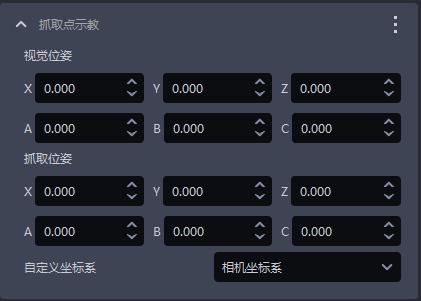

Pick Point generation: calculation methods for generating Pick Points (rectangle fitting, select coordinate system, neighborhood width, neighborhood length)

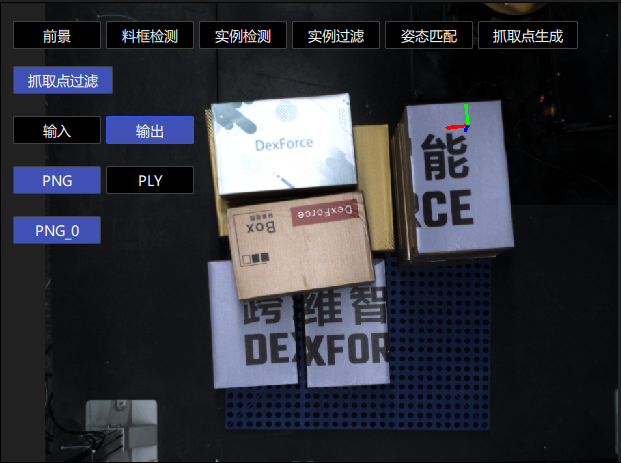

- Pick Point processing: filter, adjust, and sort Pick Points

Pick Point filtering: filter Pick Points

Pick Point adjustment: adjust Pick Points

1. 2D Recognition

This section mainly explains the functions related to preprocessing, Instance Segmentation, instance filtering, and instance sorting that affect 2D image recognition results, and provides parameter tuning suggestions.

1.1 Preprocessing

Preprocessing for 2D Recognition processes the 2D image before Instance Segmentation.

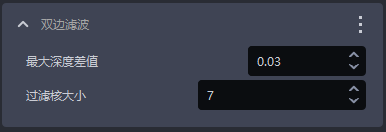

1.1.1 Bilateral Filtering

- Function

Image smoothing function based on bilateral filtering

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Maximum depth difference | Maximum depth difference for bilateral filtering | 0.03 | [0.01, 1] |

| Filter kernel size | Convolution kernel size for bilateral filtering | 7 | [1, 3000] |

1.1.2 Convert Depth to Normal Map

- Function

Calculate pixel Normals from the depth map and convert the image into a Normal map

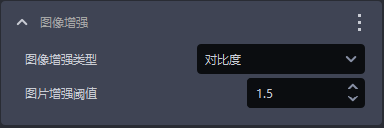
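One common way to derive a Normal map from a depth map is to take finite differences of the depth and normalize the resulting vector. The sketch below illustrates that general technique only; the function names, the `pixel_size` parameter, and the color mapping are assumptions, not the software's implementation.

```python
import math

def depth_to_normals(depth, pixel_size=1.0):
    """Estimate a unit normal per pixel from a depth grid (list of rows).

    Interior pixels use central differences; border pixels keep the
    straight-on normal (0, 0, 1). `pixel_size` is a hypothetical scale
    that converts pixel steps to the same unit as the depth values.
    """
    rows, cols = len(depth), len(depth[0])
    normals = [[(0.0, 0.0, 1.0)] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            dzdx = (depth[r][c + 1] - depth[r][c - 1]) / (2.0 * pixel_size)
            dzdy = (depth[r + 1][c] - depth[r - 1][c]) / (2.0 * pixel_size)
            nx, ny, nz = -dzdx, -dzdy, 1.0
            length = math.sqrt(nx * nx + ny * ny + nz * nz)
            normals[r][c] = (nx / length, ny / length, nz / length)
    return normals

def normal_to_rgb(normal):
    """Map each normal component from [-1, 1] to an 8-bit color channel."""
    return tuple(int(round((v + 1.0) * 127.5)) for v in normal)
```

A flat surface facing the camera maps to a uniform color, while tilted surfaces and edges shift in color, which is why a Normal map can make object boundaries easier to see.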

1.1.3 Image Enhancement

- Function

Common image enhancements such as saturation, contrast, brightness, and sharpness

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Image enhancement type | Enhance a certain element of the image | Contrast | Saturation, contrast, brightness, sharpness |

| Image enhancement threshold | How much to enhance a certain element of the image | 1.5 | [0.1, 100] |

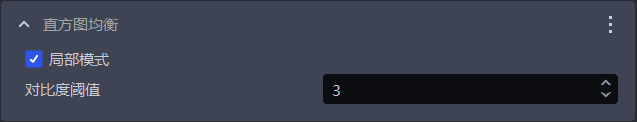

1.1.4 Histogram Equalization

- Function

Increase image contrast

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Local mode | Local or global histogram equalization. Select it for local histogram equalization; clear it for global histogram equalization | Selected | / |

| Contrast threshold | Contrast threshold | 3 | [1,1000] |

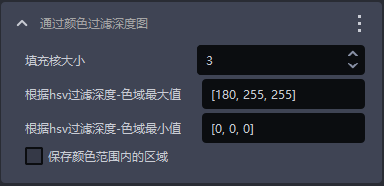
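For intuition, global histogram equalization (Local mode cleared) can be sketched as follows: gray levels are remapped through the cumulative histogram so that they spread over the full range. This is a generic textbook version with a hypothetical function name; the local variant and the Contrast threshold are not modeled here.

```python
def equalize_histogram(pixels, levels=256):
    """Globally equalize a flat list of integer gray values in [0, levels - 1]."""
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1
    # Cumulative count of pixels at or below each gray level.
    cdf, running = [], 0
    for count in hist:
        running += count
        cdf.append(running)
    cdf_min = next(c for c in cdf if c > 0)
    total = len(pixels)
    if total == cdf_min:
        # Only one gray level is present, so there is nothing to spread out.
        return list(pixels)
    scale = (levels - 1) / (total - cdf_min)
    lut = [max(0, round((c - cdf_min) * scale)) for c in cdf]
    return [lut[p] for p in pixels]

# A low-contrast patch (values 100..120) is stretched to the full range.
print(equalize_histogram([100, 100, 110, 120]))  # [0, 0, 128, 255]
```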

1.1.5 Filter Depth Map by Color

- Function

Filter the depth map according to color values

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Fill kernel size | Color fill size | 3 | [1,99] |

| Filter depth by HSV - maximum color range value | Maximum color value | [180,255,255] | [[0,0,0],[255,255,255]] |

| Filter depth by HSV - minimum color range value | Minimum color value | [0,0,0] | [[0,0,0],[255,255,255]] |

| Keep the area within the color range | Select it to keep the area within the color range; clear it to keep the area outside the color range | / | / |

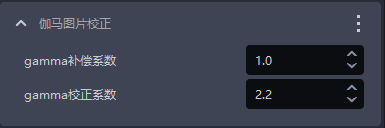

1.1.6 Gamma Image Correction

- Function

Gamma correction changes image brightness

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Gamma compensation coefficient | If this value is less than 1, the image becomes darker; if it is greater than 1, the image becomes brighter | 1 | [0.1,100] |

| Gamma correction coefficient | If this value is less than 1, the image becomes darker, which is suitable for overly bright images; if it is greater than 1, the image becomes brighter, which is suitable for overly dark images | 2.2 | [0.1,100] |

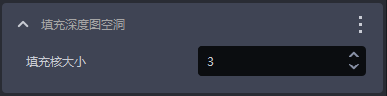
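The direction of the effect described in the table (values above 1 brighten, values below 1 darken) matches the usual power-law form of gamma correction with exponent 1/gamma. The sketch below assumes that form and models a single coefficient only; how the software combines the compensation and correction coefficients is not documented here.

```python
def gamma_correct(value, gamma=2.2):
    """Apply power-law gamma correction to one 8-bit channel value.

    Assumption: output = 255 * (input / 255) ** (1 / gamma), so gamma > 1
    brightens mid-tones and gamma < 1 darkens them.
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    normalized = value / 255.0
    return round(255.0 * normalized ** (1.0 / gamma))
```

Pure black (0) and pure white (255) are unchanged for any coefficient; only the mid-tones move, which is why gamma correction is useful for images that are too dark or too bright overall.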

1.1.7 Fill Holes in the Depth Map

- Function

Fill hollow areas in the depth map and smooth the filled depth map

- Application Scenario

Due to structural occlusion of the Target Object itself, uneven lighting, and similar issues, parts of the Target Object may be missing in the depth map

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Fill kernel size | Hole filling size | 3 | [1,99] |

The fill kernel size can only be an odd number

- Tuning

Adjust according to the detection results. If the filling is excessive, reduce the parameter; if the filling is insufficient, increase the parameter

- Example

1.1.8 Edge Enhancement

- Function

Set the edge portions of textures in the image to the Background color or to a color with a large contrast from the Background color, so as to highlight the edge information of the Target Object

- Application Scenario

The edges are unclear because Target Objects occlude or overlap each other

- Parameter Description

| Parameter | Description | Default value | Parameter range | Tuning suggestion |

|---|---|---|---|---|

| Normal Z-direction filtering threshold | Angle filtering threshold between the Normal of each point in the depth map and the positive Z-axis direction of the Camera coordinate system. If the angle between a point's Normal and the positive Z-axis direction of the Camera coordinate system is greater than this threshold, the color at the corresponding position in the 2D image will be set to the Background color or to a color with a large contrast from the Background color | 30 | [0,180] | For flat Target Object surfaces, this threshold can be stricter. For curved surfaces such as bags, increase it appropriately according to the degree of surface tilt |

| Background color | RGB color threshold of the Background color | 128 | [0,255] | |

| Automatically adjust contrast Background | Selected: the color of points in the 2D image whose angles are greater than the filtering threshold is set to a color with a large contrast from the Background color. Cleared: the color of those points is set to the Background color | Cleared | / | |

- Example

In a pile of bags, the bags occlude each other. Select Edge Enhancement to distinguish the edges of each bag, as shown below.

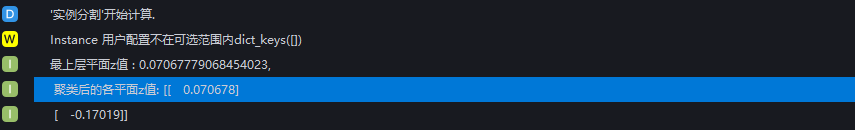

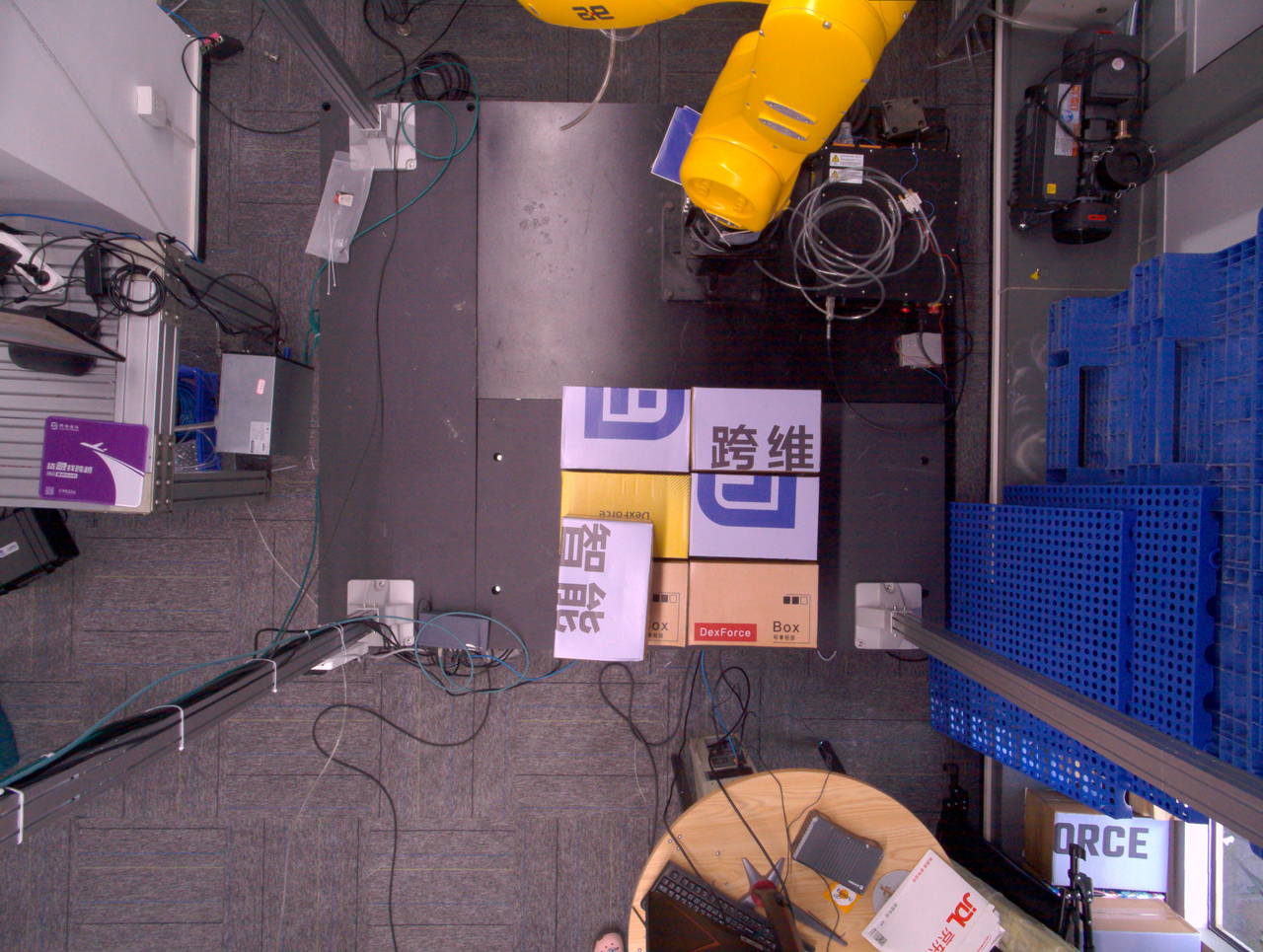
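The Normal Z-direction filtering rule can be illustrated with a short sketch: compute the angle between each Normal and the positive Z-axis of the Camera coordinate system, and repaint the pixel when the angle exceeds the threshold. The function names are hypothetical, and the choice of black or white as the "large contrast" color is an assumption made for illustration.

```python
import math

def angle_to_camera_z(normal):
    """Angle in degrees between a normal vector and the camera's +Z axis."""
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0:
        raise ValueError("normal must be non-zero")
    cos_angle = max(-1.0, min(1.0, nz / length))
    return math.degrees(math.acos(cos_angle))

def enhance_edges(gray, normals, threshold_deg=30.0, background=128,
                  auto_contrast=False):
    """Repaint pixels whose normal tilts more than threshold_deg from +Z.

    Assumption: with auto_contrast enabled, the fill color is whichever of
    black (0) or white (255) is farther from the Background color.
    """
    if auto_contrast:
        fill = 0 if background >= 128 else 255
    else:
        fill = background
    return [
        fill if angle_to_camera_z(n) > threshold_deg else g
        for g, n in zip(gray, normals)
    ]
```

With the default threshold of 30, a pixel on a face tilted 45 degrees is repainted, while a pixel on a face that looks straight at the camera keeps its original value. Raising the threshold for curved sacks keeps more of the sloped surface intact.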

1.1.9 Extract the Top-Layer Texture

- Function

Extract the texture of the topmost or bottommost Target Object layer, and set the other areas to the Background color or to a color with a large contrast from the Background color.

- Application Scenario

Applicable to single-carton depalletizing scenarios. Poor lighting conditions, similar colors and textures, tight stacking, interleaved stacking, or occlusion may make it difficult for the model to distinguish texture differences between the upper and lower carton layers, which easily leads to false detections.

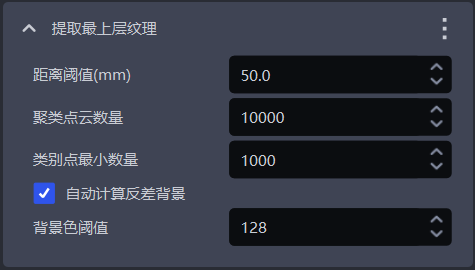

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit | Tuning suggestion |

|---|---|---|---|---|---|

| Distance threshold (mm) | If the distance between a point and the topmost plane (or bottommost plane) is lower than this threshold, the point is considered to be within the topmost plane (or bottommost plane) and should be kept. Otherwise, it is considered a lower-layer (or upper-layer) point, and its color is set to the Background color or to a color with a large contrast from the Background color | 50 | [0.1, 1000] | mm | Generally set to half of the carton height |

| Number of clustered Point Cloud points | Expected number of points participating in clustering, that is, the number of Point Cloud points sampled within the ROI 3D area | 10000 | [1,10000000] | / | The larger the number of clustered Point Cloud points, the lower the model inference speed but the higher the accuracy; the smaller the number of clustered Point Cloud points, the higher the inference speed but the lower the accuracy |

| Minimum number of points per category | Minimum point count used to filter categories | 1000 | [1, 10000000] | / | / |

| Automatically calculate contrast Background | Selected: areas outside the topmost (or bottommost) layer in the 2D image are set to a color with a large contrast from the Background color threshold. Cleared: those areas are set to the color corresponding to the Background color threshold | Selected | / | / | / |

| Background color threshold | RGB color threshold of the Background color | 128 | [0, 255] | / | / |

- Example

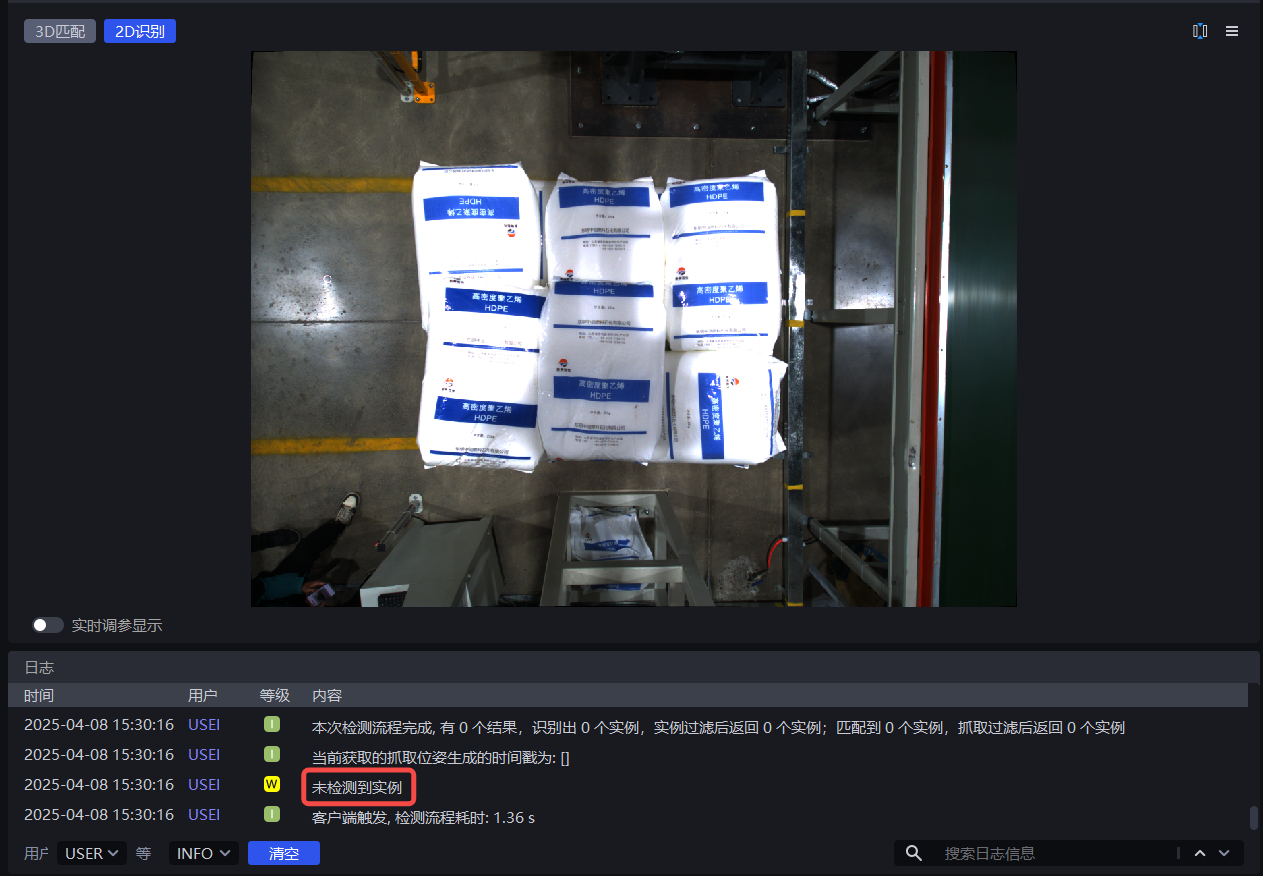

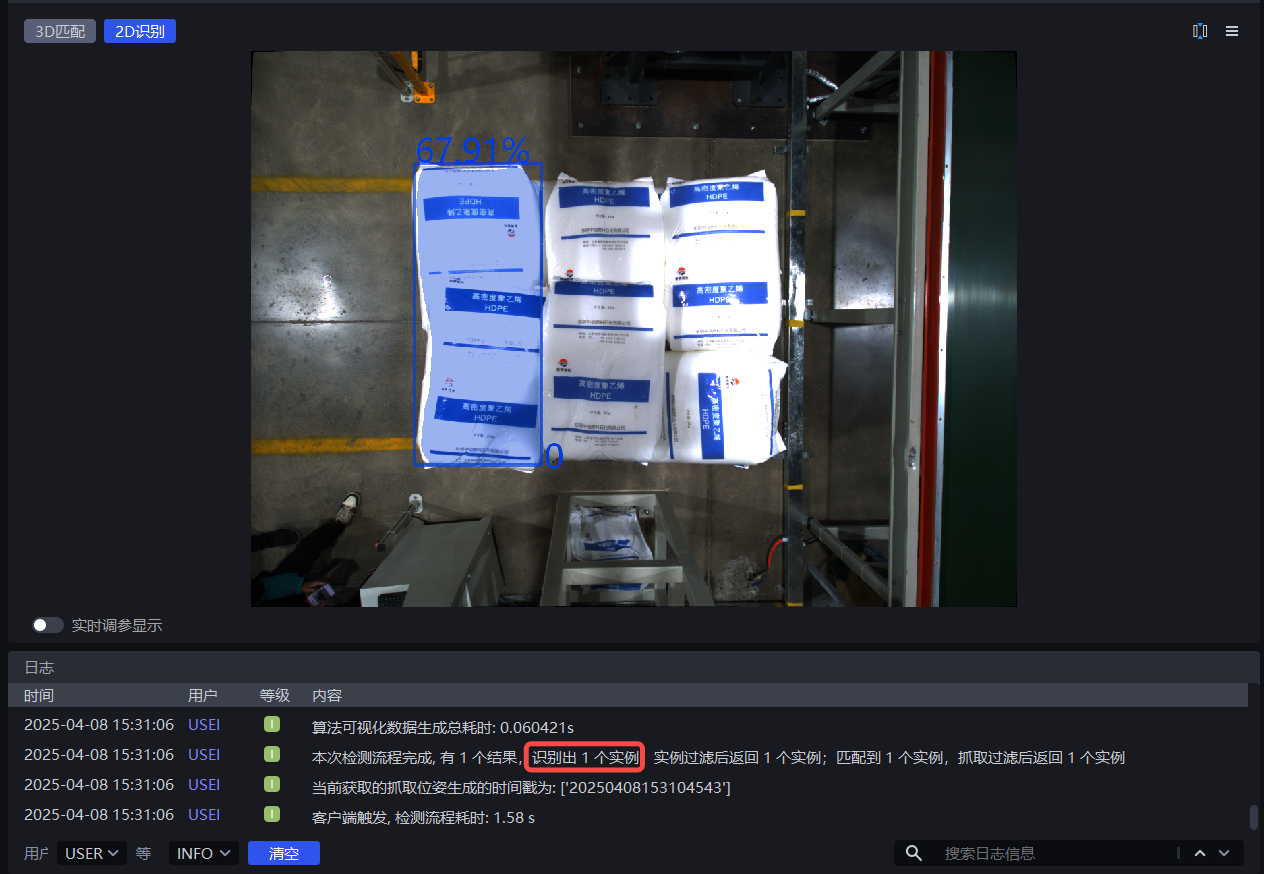

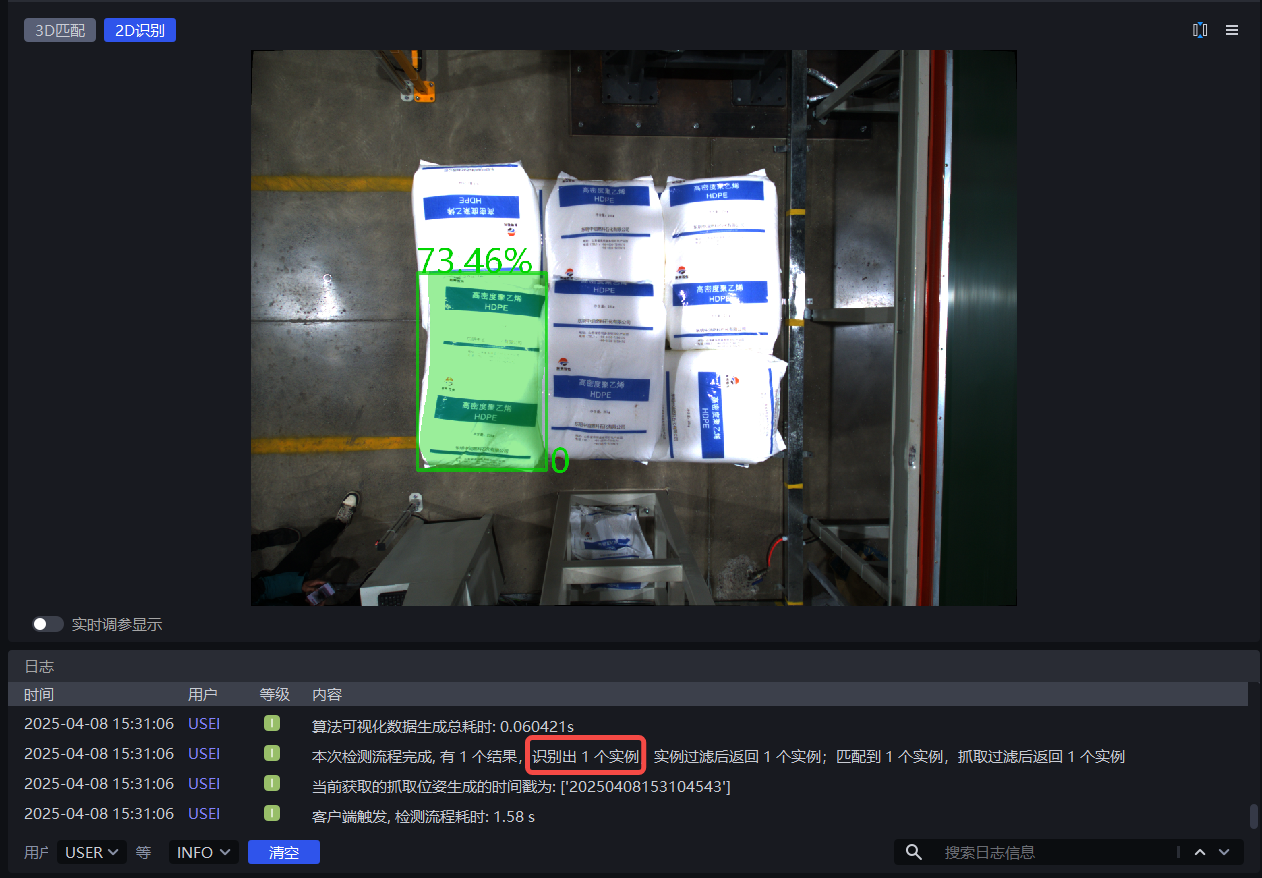

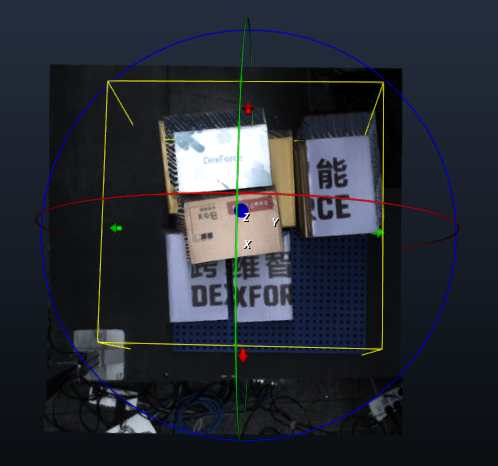

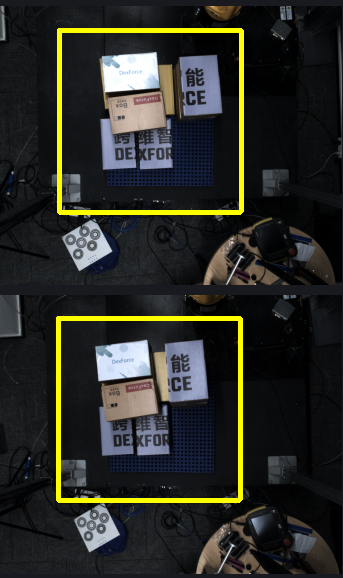
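The effect of the Distance threshold can be sketched as a simple split of points by their distance to the topmost plane. The sketch assumes the plane height is already known (the software finds it by clustering, which is not modeled here) and that points are (x, y, z) tuples in millimeters; the function name is hypothetical.

```python
def keep_top_layer(points, top_plane_z, distance_threshold_mm=50.0):
    """Split (x, y, z) points into (kept, background) by distance to a plane.

    A point is kept when its Z distance to the topmost plane is below the
    threshold; every other point would be repainted as background.
    """
    kept, background = [], []
    for point in points:
        if abs(point[2] - top_plane_z) < distance_threshold_mm:
            kept.append(point)
        else:
            background.append(point)
    return kept, background

# Hypothetical cartons 200 mm high: threshold is half the carton height.
points = [(0, 0, 1000), (10, 0, 1012), (0, 10, 1200), (10, 10, 1195)]
top, lower = keep_top_layer(points, top_plane_z=1000, distance_threshold_mm=100)
print(len(top), len(lower))  # 2 2
```

Setting the threshold to about half the carton height leaves room for sensor noise on the top layer while still excluding the layer beneath it.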

1.1.10 Remove the Image Background Outside ROI3D

- Function

Remove the background in the 2D image outside the ROI3D area

- Application Scenario

Excessive background noise in the image affects the detection result

- Parameter Description

| Parameter name | Description | Default value | Value range |

|---|---|---|---|

| Fill kernel size | Hole filling size | 5 | [1,99] |

| Number of iterations | Number of image dilation iterations | 1 | [1,99] |

| Automatically calculate contrast Background | Selected: areas outside the ROI in the 2D image are set to a color with a large contrast from the Background color threshold. Cleared: those areas are set to the color corresponding to the Background color threshold | Selected | / |

| Background color threshold | RGB color threshold of the Background color | 128 | [0,255] |

The fill kernel size can only be an odd number

- Tuning

If you need to remove more background noise from the image, reduce the fill kernel size

- Example

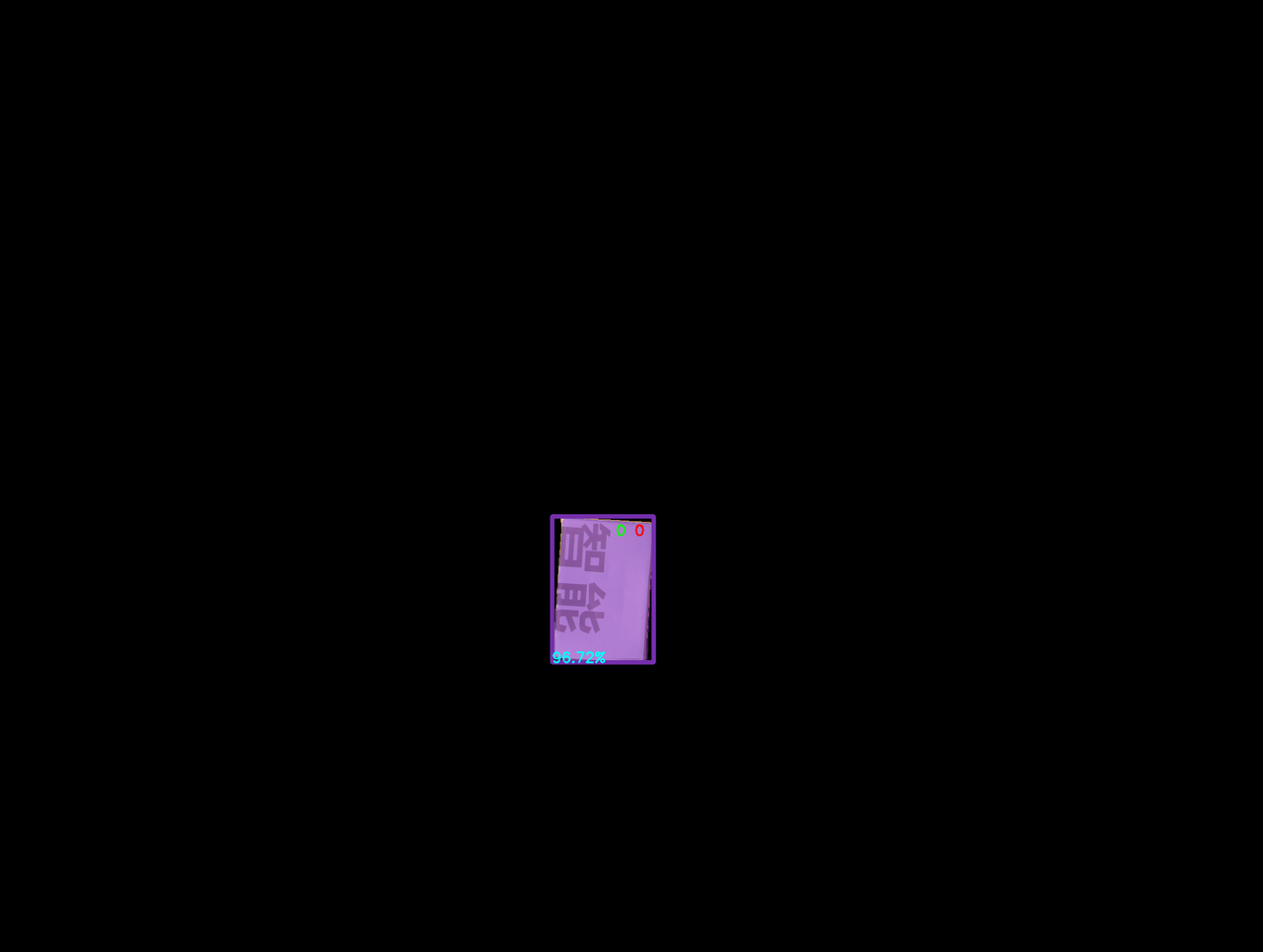

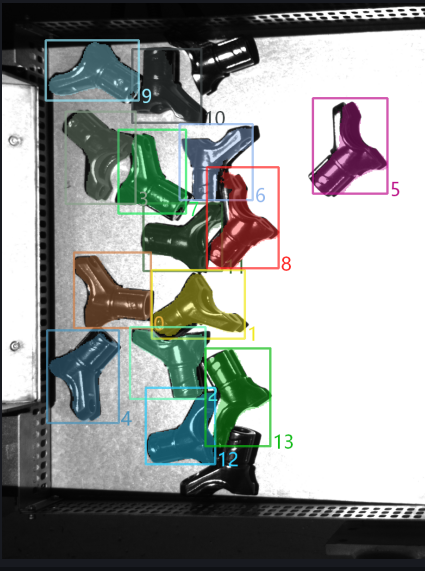

1.2 Instance Segmentation

1.2.1 Scaling Ratio

- Function

Scale the original image proportionally before inference to improve the accuracy and recall of 2D Recognition.

- Application Scenario

If the detection result is poor (for example, no instance is detected, missed recognition occurs, one bounding box covers multiple instances, or the bounding box does not fully cover the instance), this function should be adjusted.

- Parameter Description

Default value: 1.0

Value range: [0.01, 3.00]

Step size: 0.01

- Tuning

Run with the default value and view the detection result in the visualization window. If any of the problems listed under Application Scenario occurs, adjust the scaling ratio.

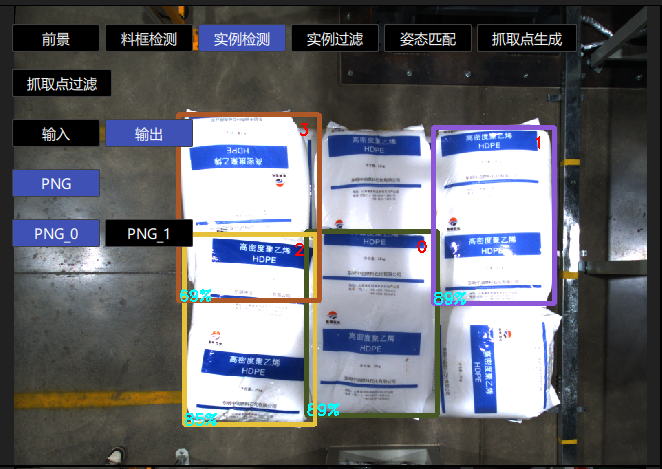

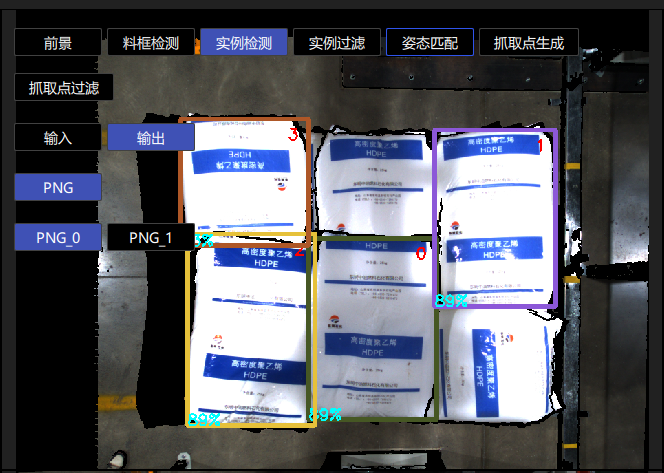

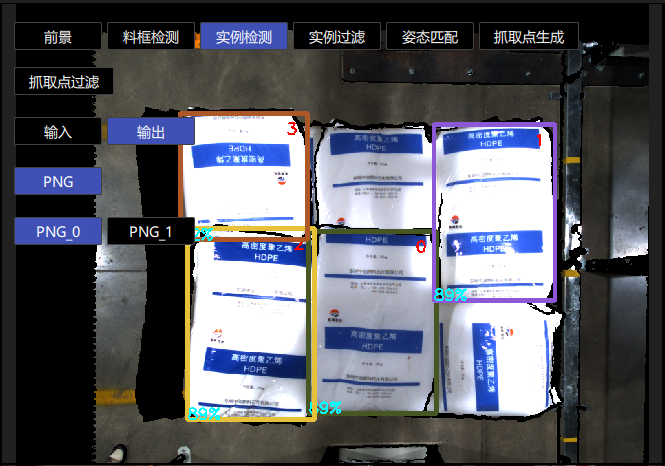

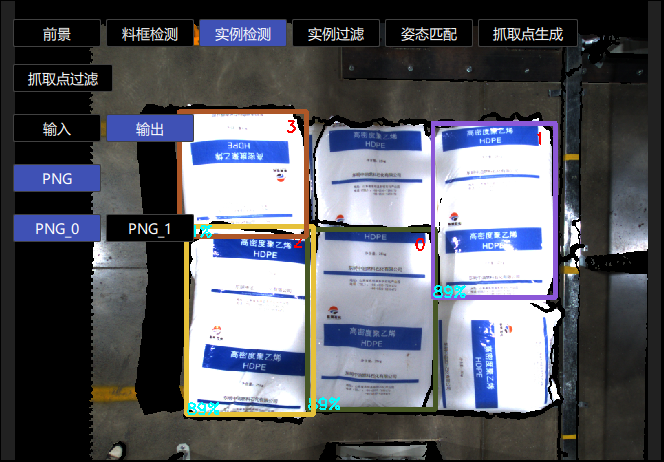

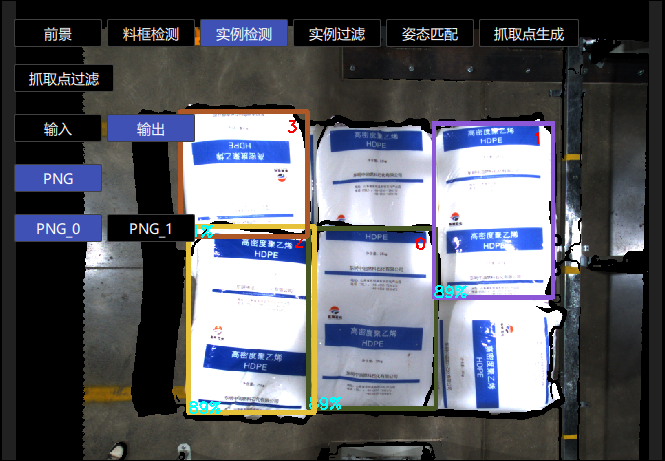

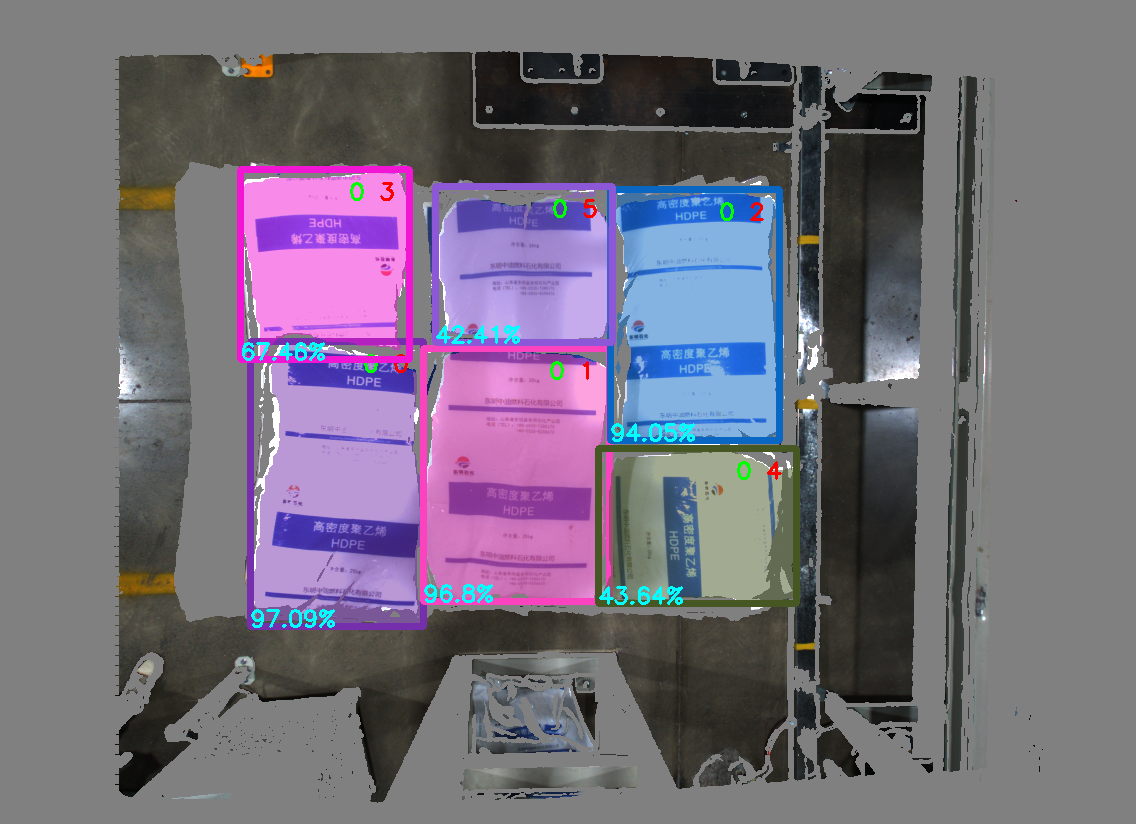

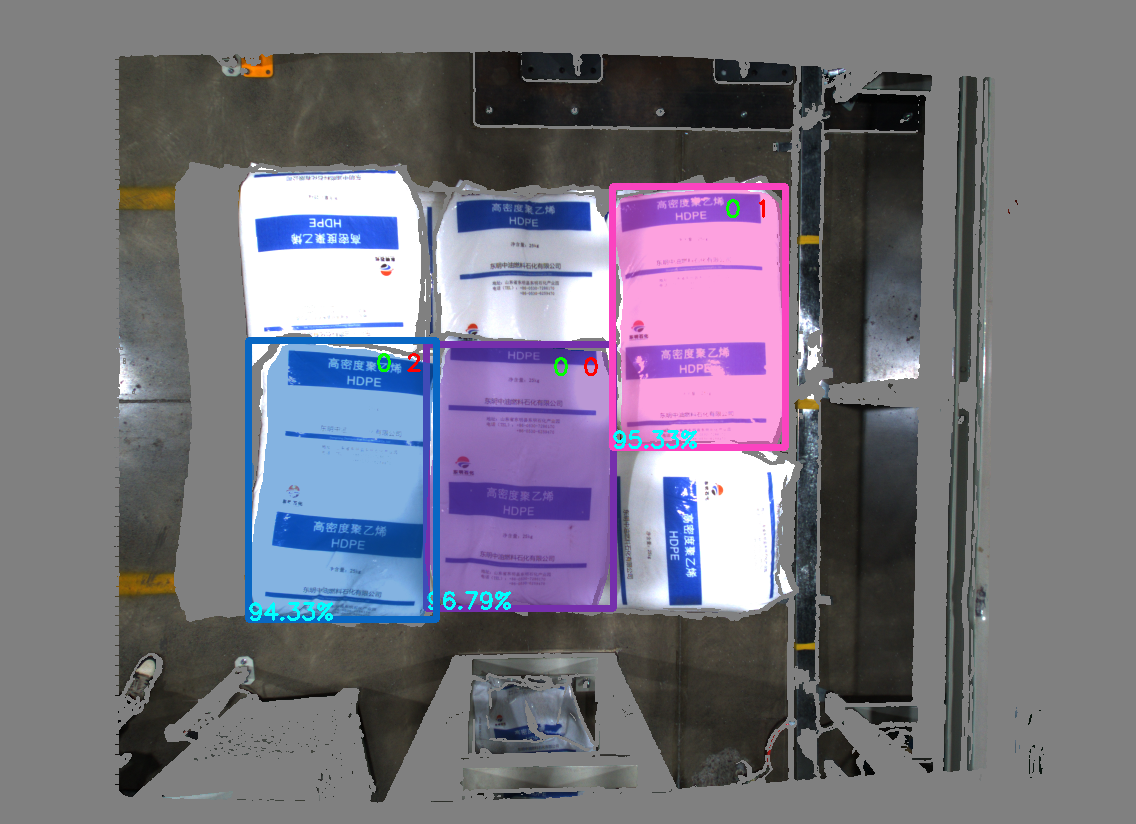

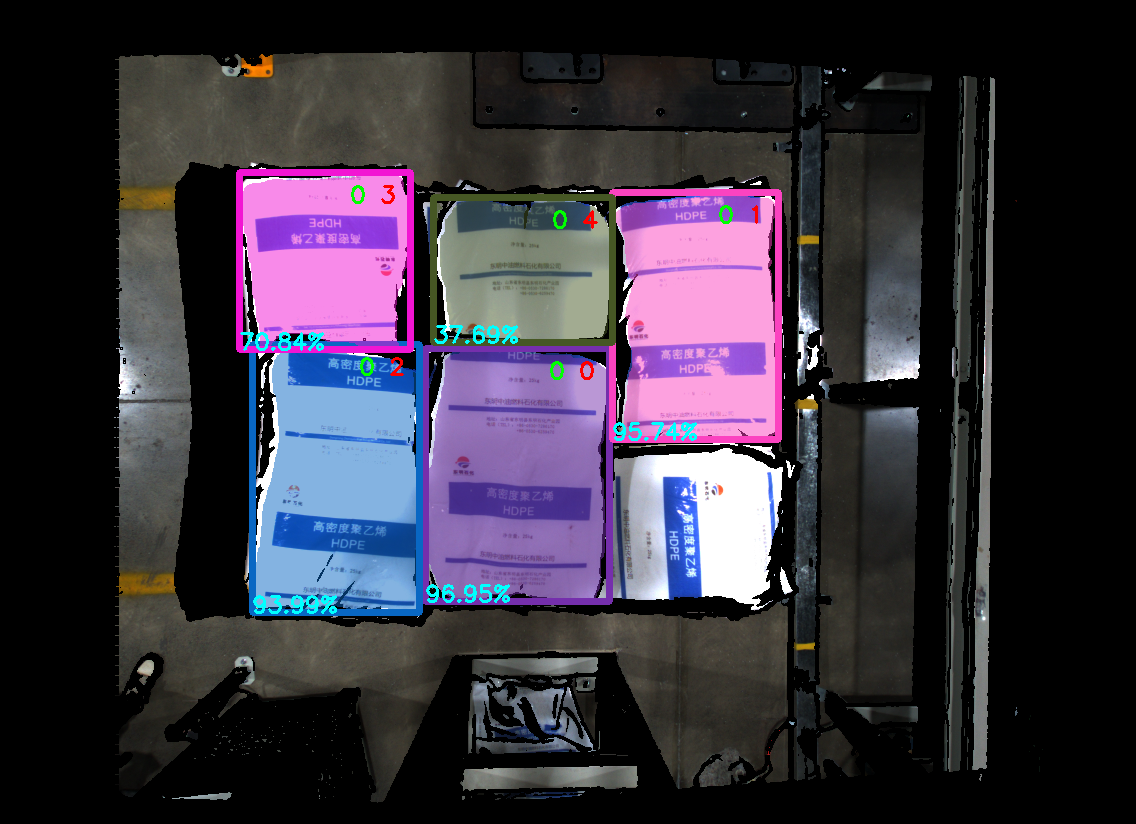

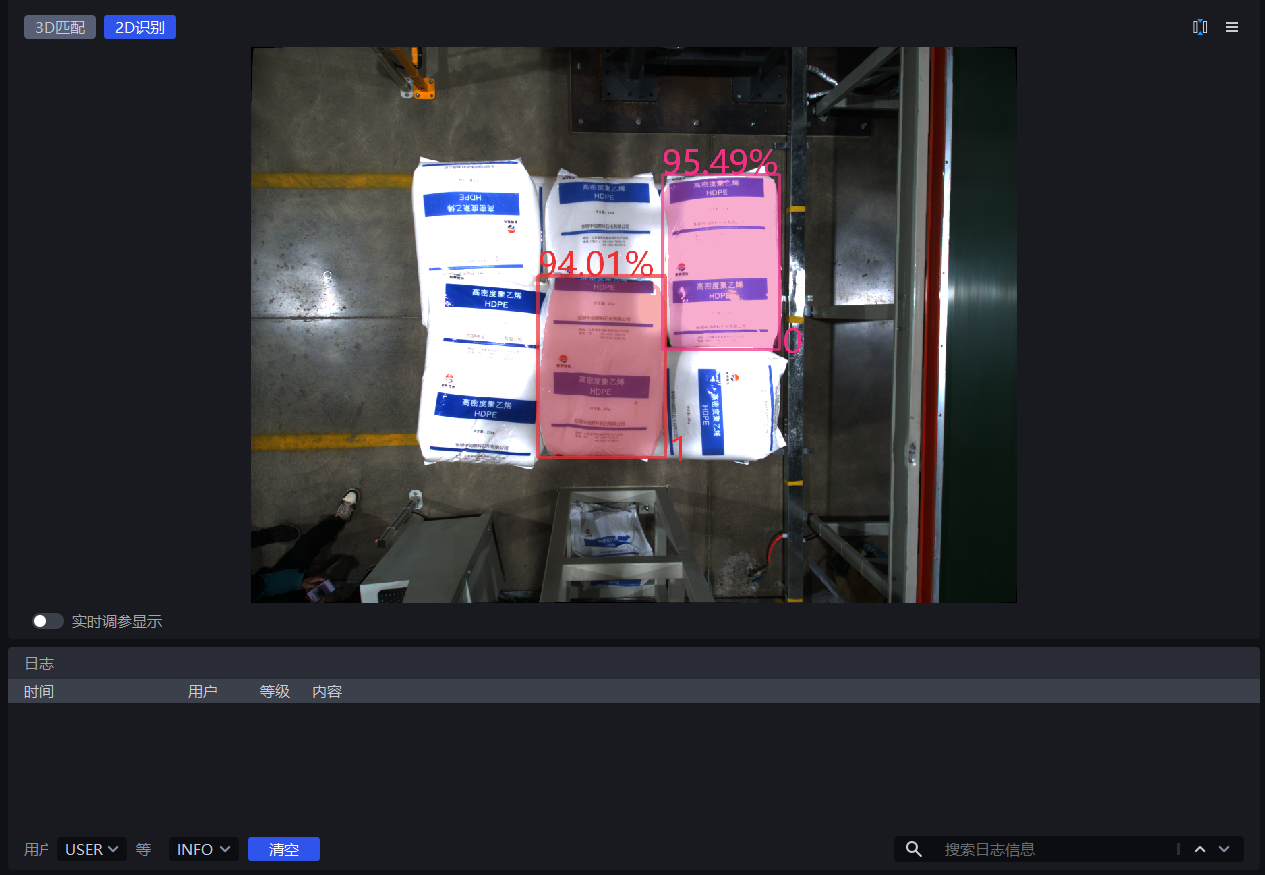

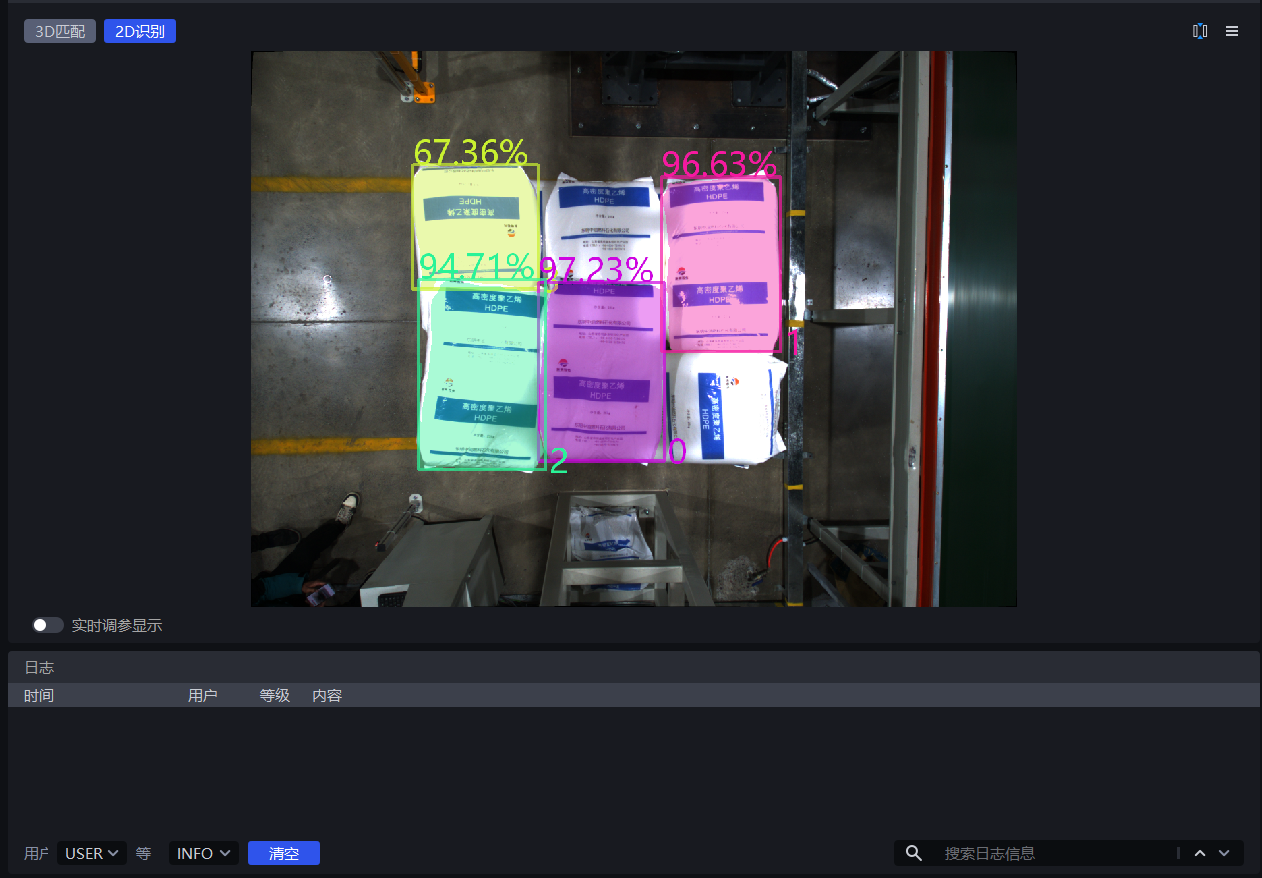

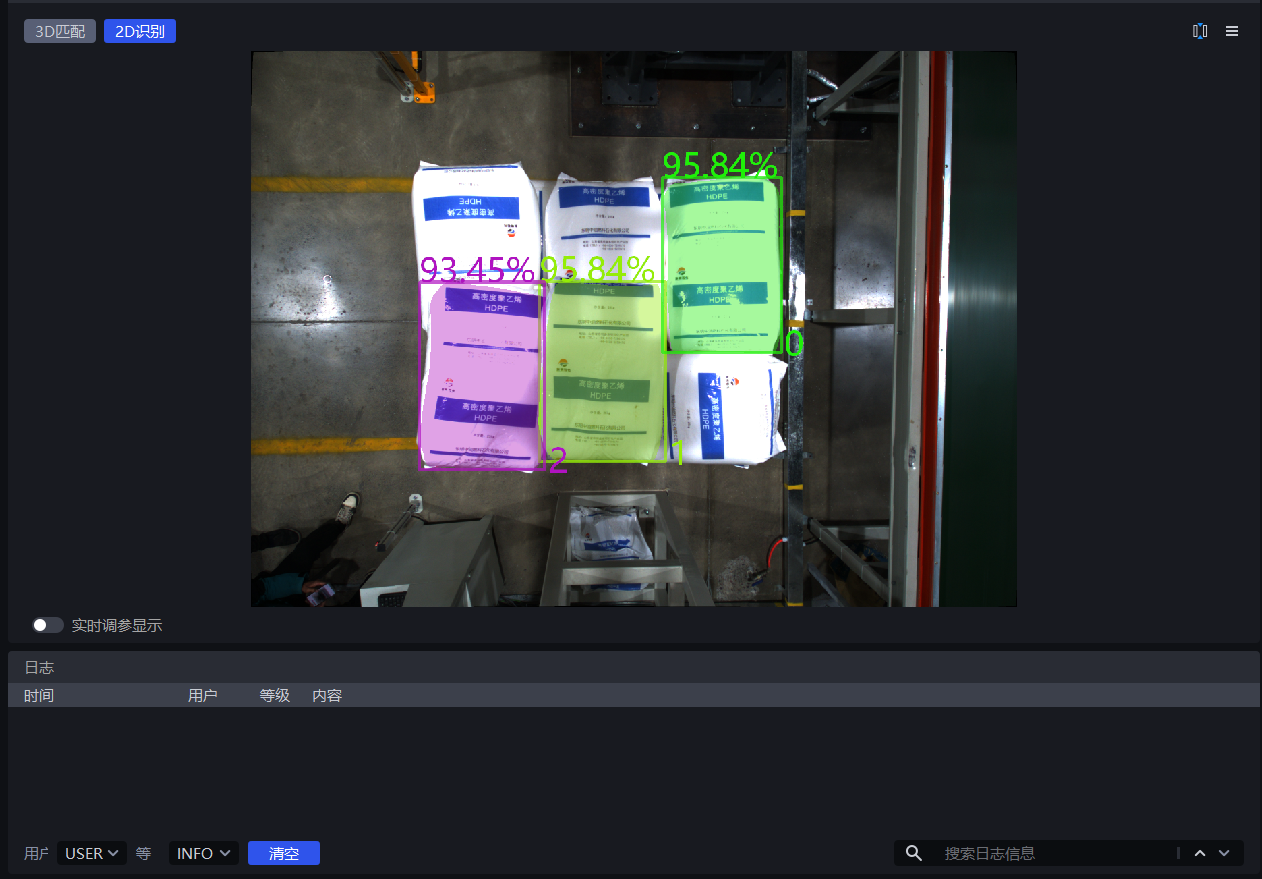

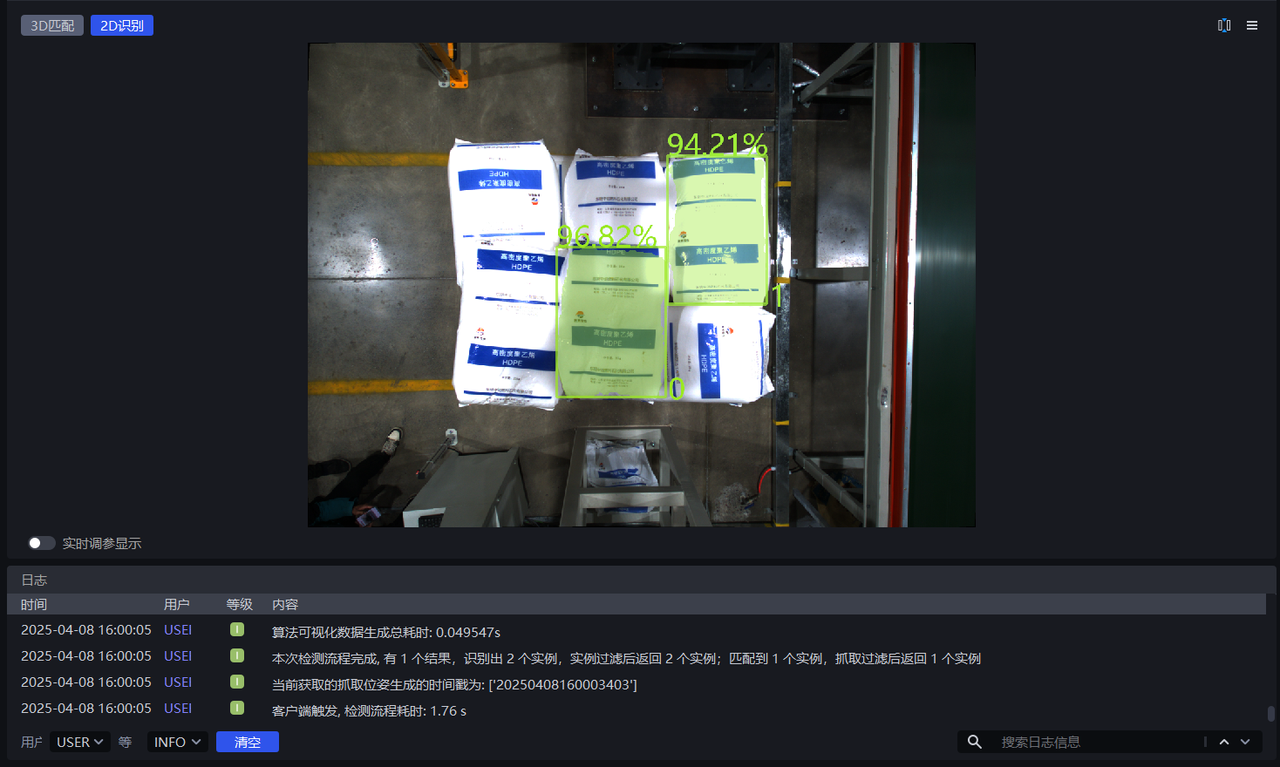

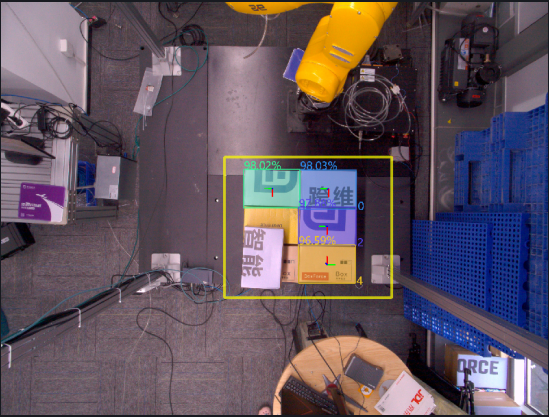

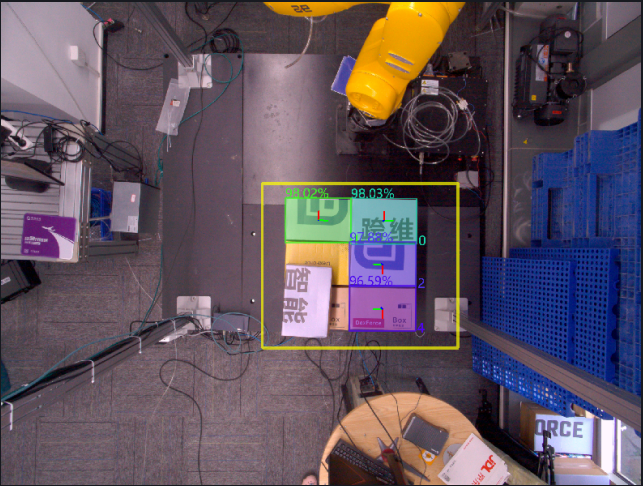

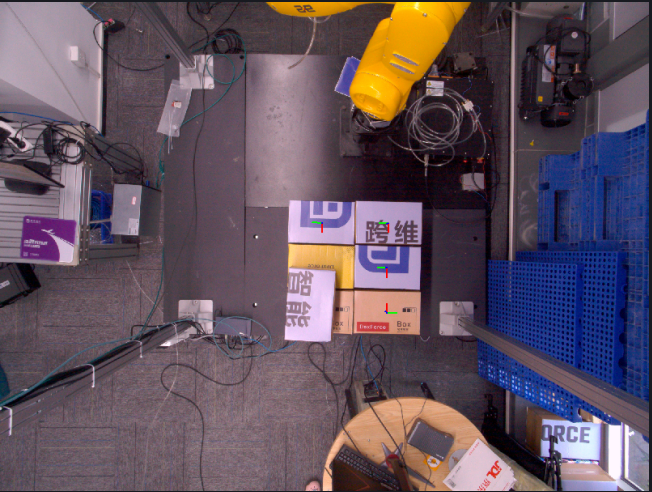

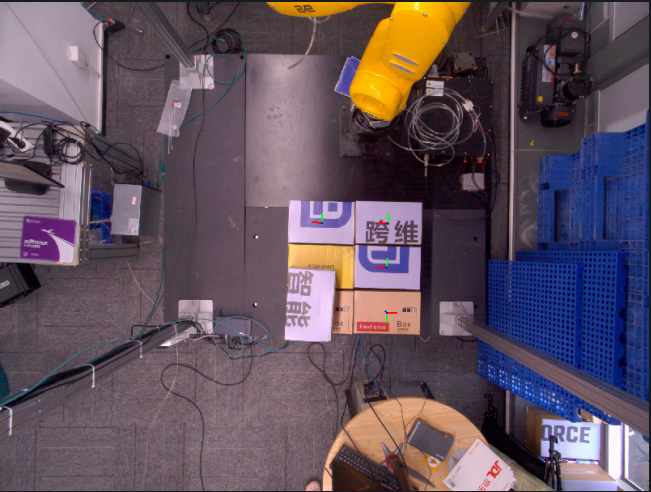

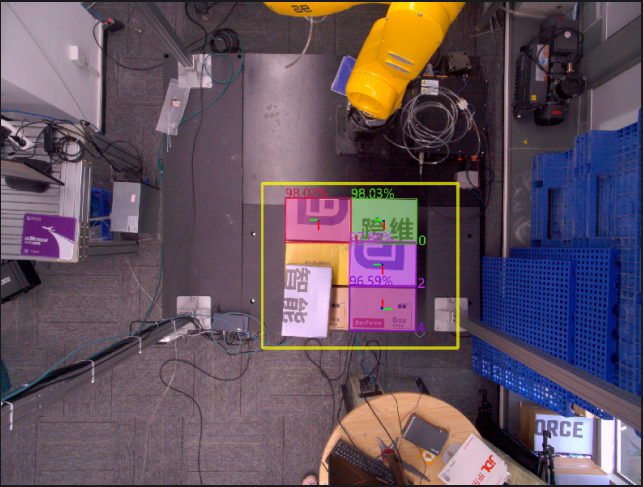

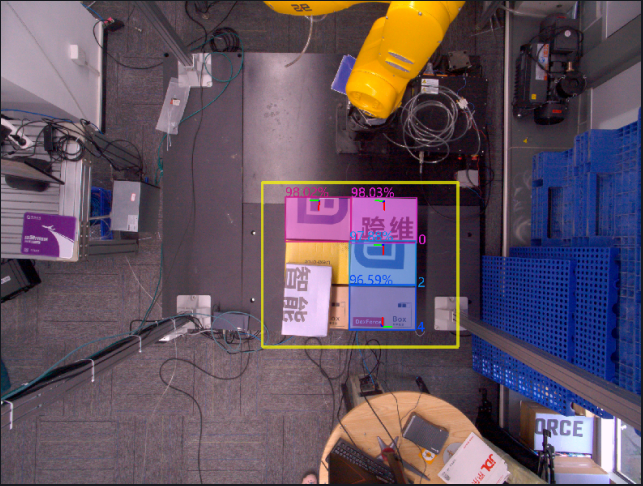

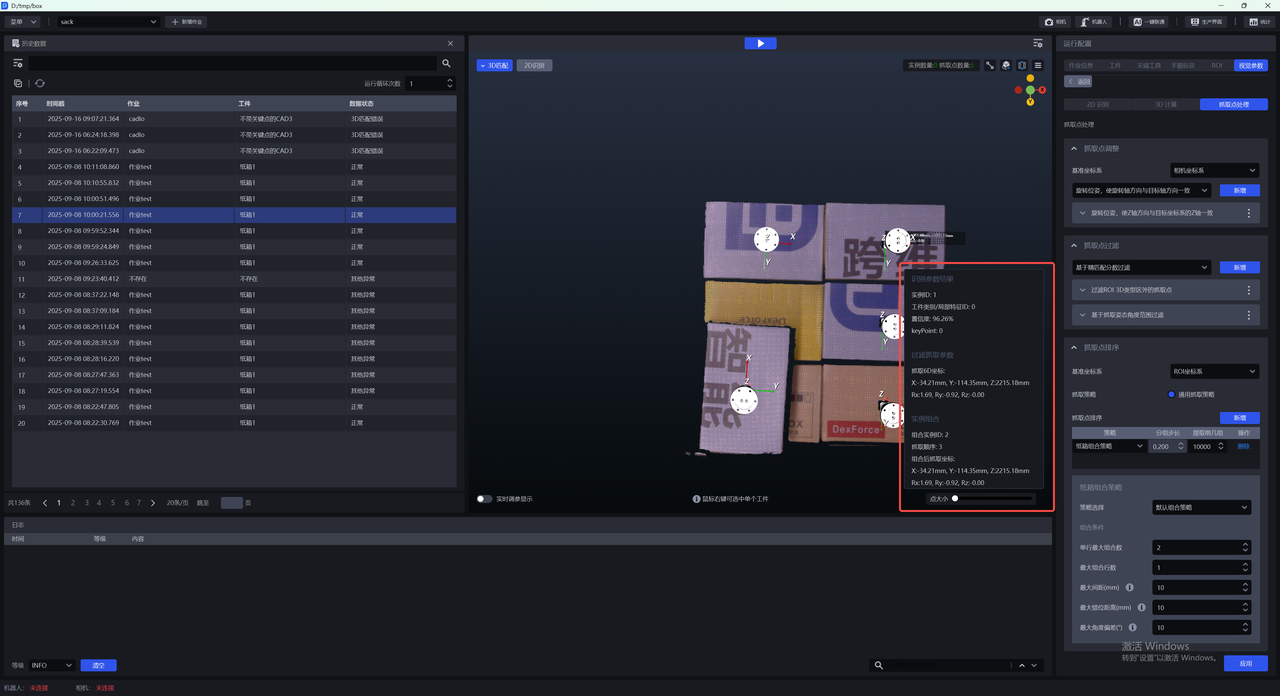

In 2D Recognition, the percentage shown on an instance is the Confidence score, and the number is the instance ID (the recognition order of the instance).

In 2D Recognition, the colored shadow on an instance is the Mask, and the rectangle surrounding the instance is the bounding box.

If no scaling ratio produces good detection results, adjust the ROI area instead.

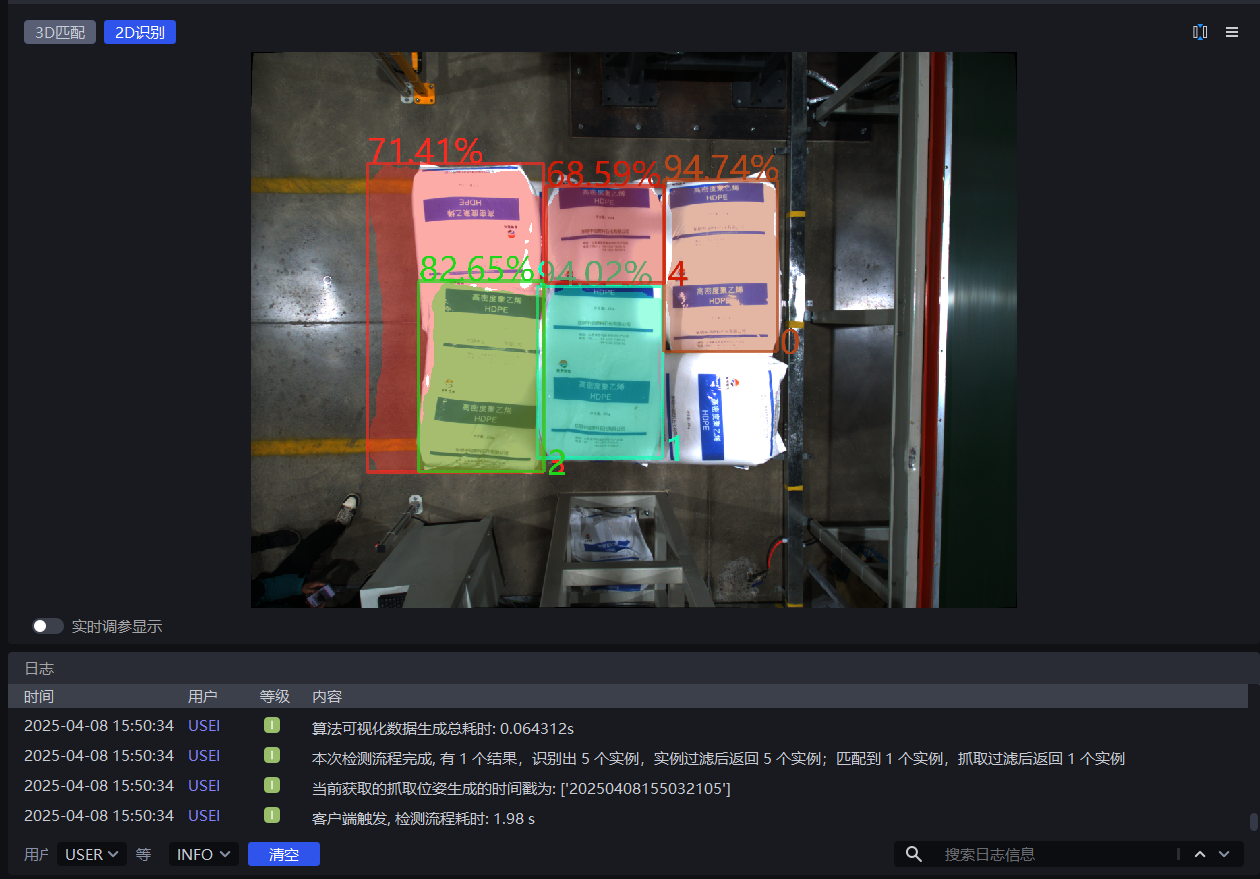

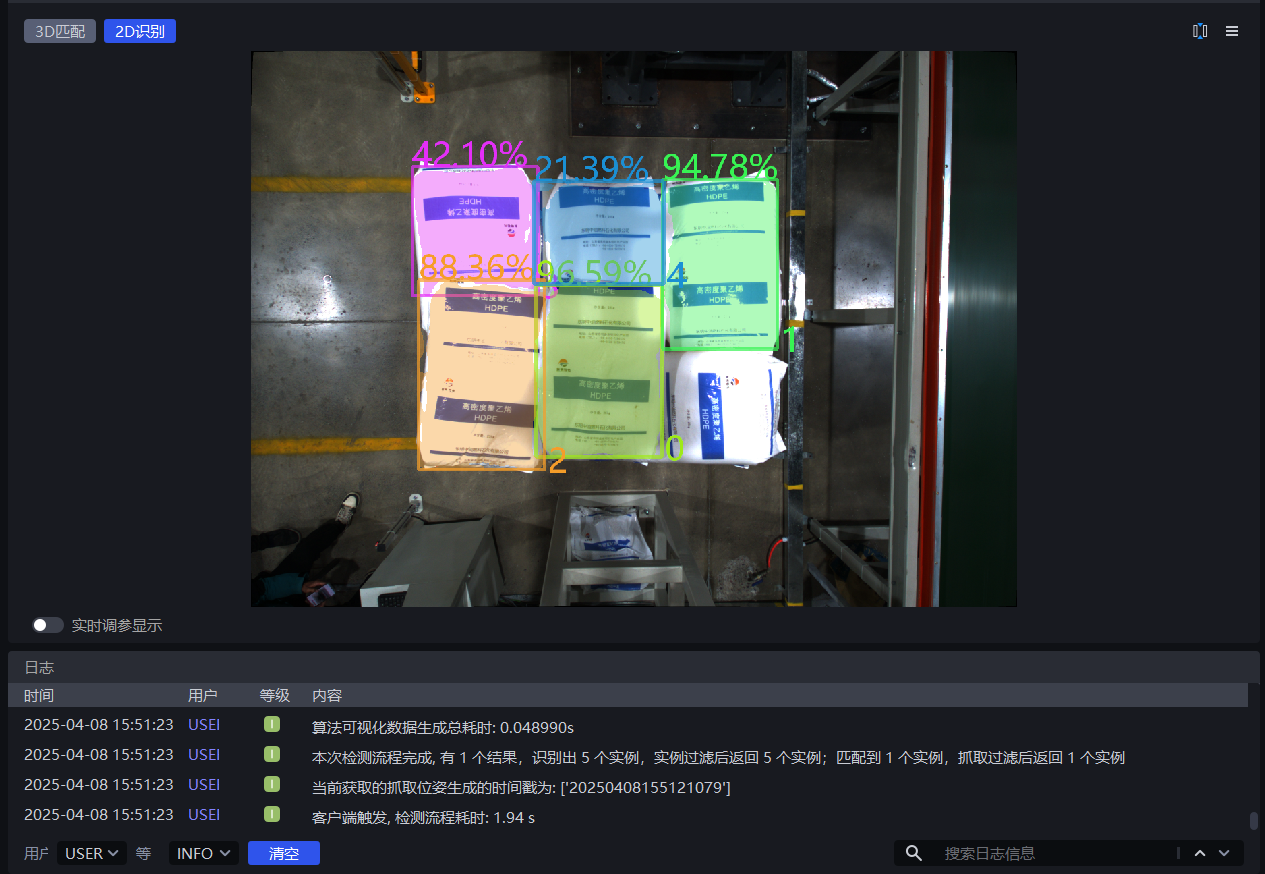

As shown below, the detection result improves significantly when the scaling ratio is 0.7, so 0.7 can be taken as the lower limit of the scaling ratio range

When the scaling ratio is 1.8, the detection result decreases significantly, so 1.8 can be taken as the upper limit of the scaling ratio range

1.2.2 Lower Confidence Threshold

- Function

Keep only recognition results whose deep learning model Confidence scores are higher than the lower Confidence threshold

- Application Scenario

Adjust this function when the detected instances do not match expectations, for example when too few instances or incorrect instances are returned

- Parameter Description

Default value: 0.5

Value range: [0.01, 1.00]

- Tuning

If too few instances are detected, reduce this threshold. A value that is too small may admit incorrect instances and reduce image recognition accuracy.

If incorrect instances are detected because the lower Confidence threshold is too small, increase the threshold to remove them. A value that is too large may filter out every detection result, so that nothing is output.

- Example

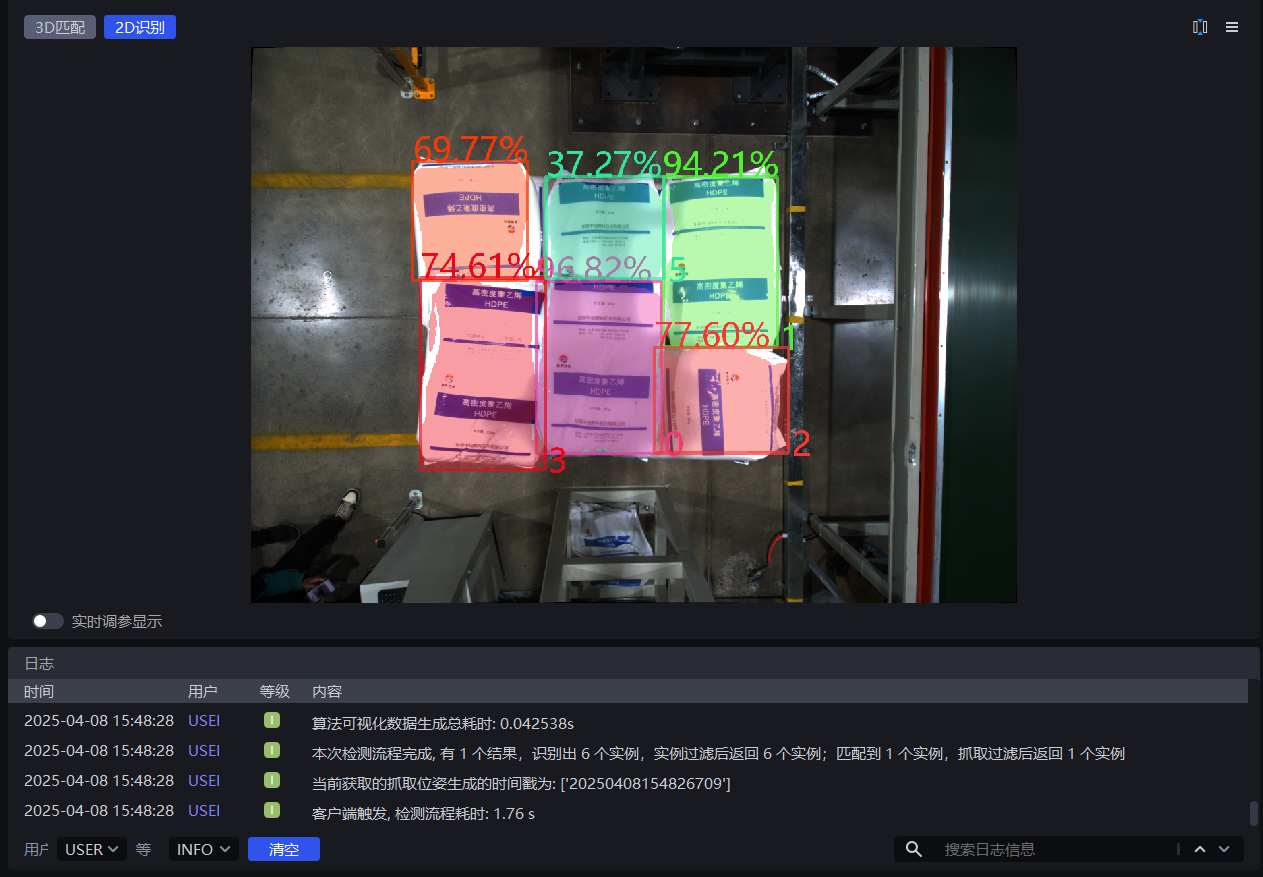

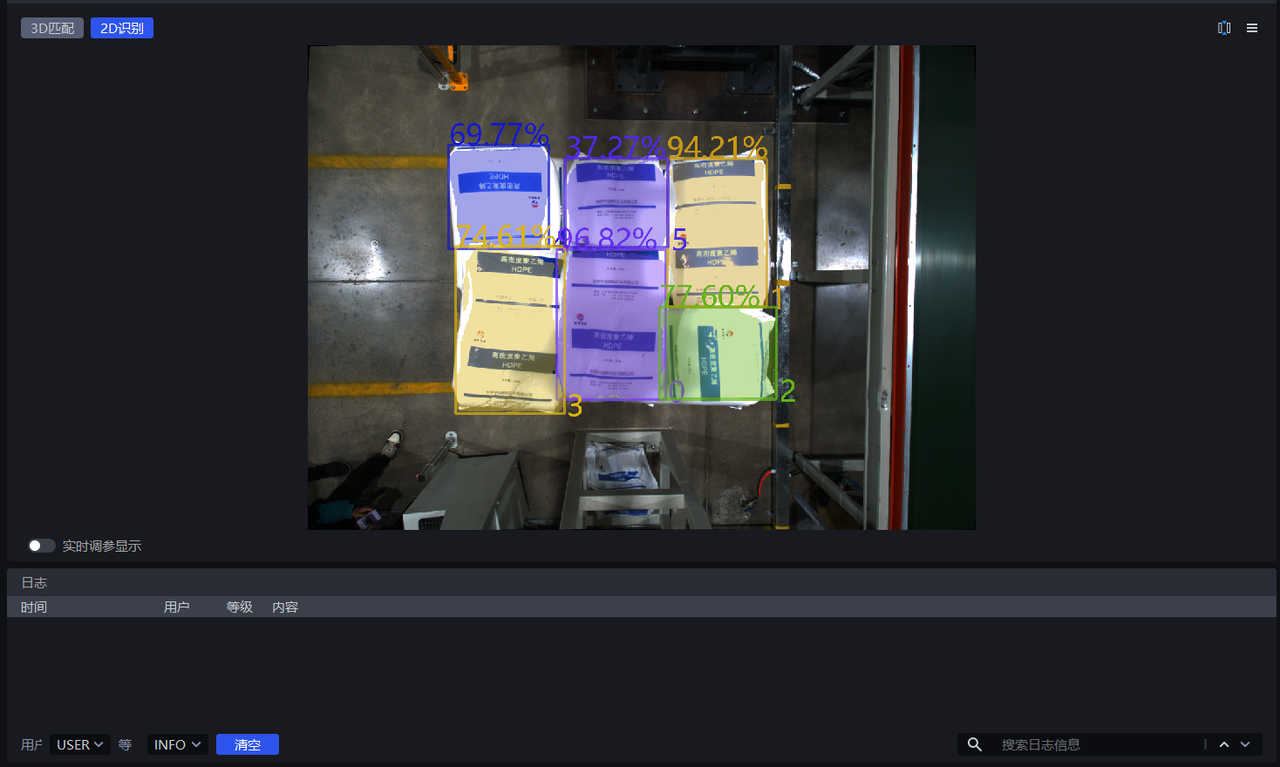

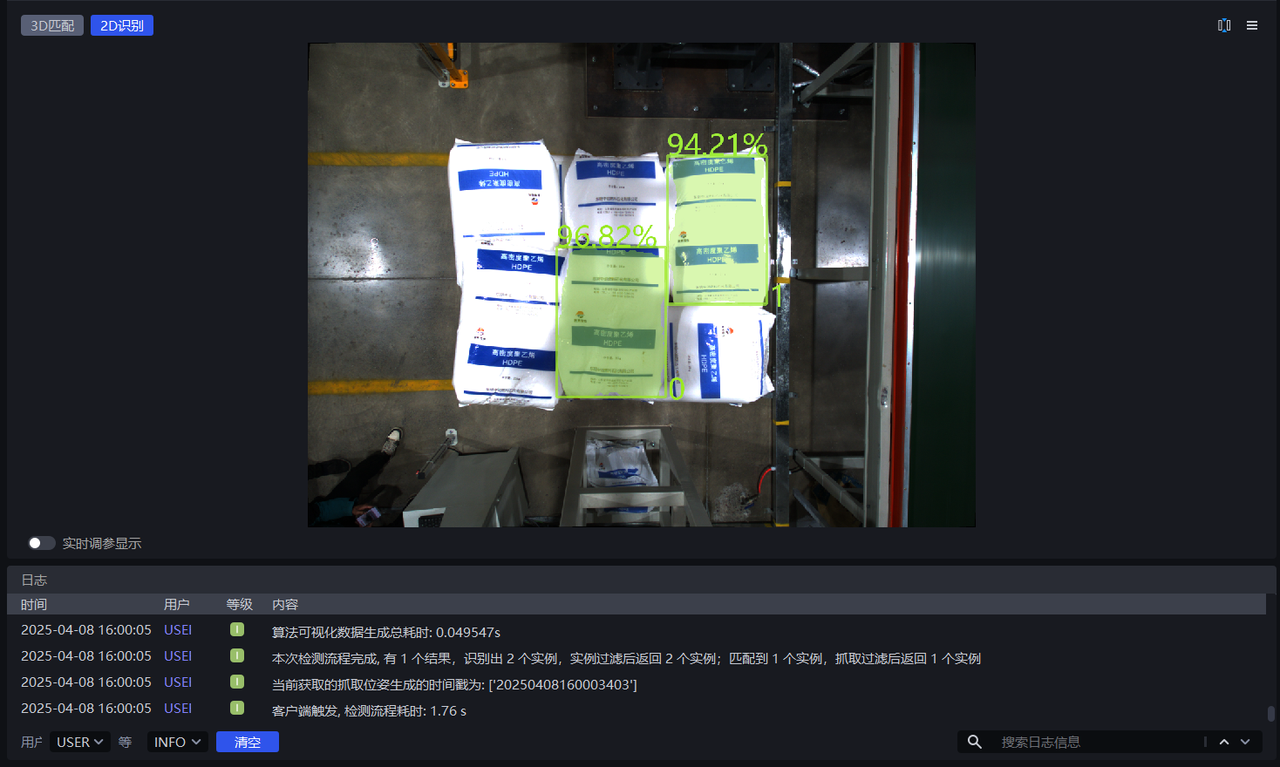

When the lower Confidence threshold is set to 0.5, the retained instance detection results are shown on the left below. When the lower Confidence threshold is set to 0.8, the retained instance detection results are shown on the right below. Instances with scores of 77.60%, 74.61%, and 69.77% (below 80%) are filtered out.
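The filtering in this example can be reproduced with a few lines. The three scores below 80% come from the example above; the two higher scores are made up for illustration, and the function name is hypothetical.

```python
def filter_by_confidence(scores, lower_threshold=0.5):
    """Return the Confidence scores strictly above the lower threshold."""
    return [s for s in scores if s > lower_threshold]

# 0.7760, 0.7461, and 0.6977 are from the example; 0.95 and 0.91 are invented.
scores = [0.95, 0.91, 0.7760, 0.7461, 0.6977]
print(len(filter_by_confidence(scores, 0.5)))  # 5
print(filter_by_confidence(scores, 0.8))       # [0.95, 0.91]
```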

1.2.3 Enable Auto Enhancement

- Function

Perform inference after combining all values from the input scaling ratios and rotation angles, then return all results that are greater than the set lower Confidence threshold. This can improve model inference accuracy, but it increases processing time.

- Application Scenario

Use this when a single scaling ratio cannot meet actual scenario requirements, resulting in incomplete detection, or when the object placement tilt is relatively large.

- Example

If Auto Enhancement - Scaling Ratio is set to [0.8, 0.9, 1.0] and Auto Enhancement - Rotation Angle is set to [0, 90.0], every scaling ratio is paired with every rotation angle. The model automatically generates 6 images internally for inference, then merges the 6 inference results and outputs the results that are greater than the lower Confidence threshold.
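The count of 6 is the Cartesian product of the two lists (3 scaling ratios times 2 rotation angles). A minimal sketch of how the combinations enumerate, with a hypothetical function name:

```python
from itertools import product

def enhancement_passes(scales, angles):
    """Return every (scaling ratio, rotation angle) pair to run inference on."""
    return list(product(scales, angles))

passes = enhancement_passes([0.8, 0.9, 1.0], [0, 90.0])
print(len(passes))  # 6
print(passes[0])    # (0.8, 0)
```

Because each added value multiplies the number of inference passes, processing time grows quickly; keeping both lists short limits the slowdown.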

Auto Enhancement - Scaling Ratio

- Function

Scale the original image multiple times and perform inference multiple times, then output the combined inference result

- Application Scenario

Use this when a single scaling ratio cannot meet actual scenario requirements, resulting in incomplete detection

- Parameter Description

Default value: [1.0]

Value range: each scaling ratio must be within [0.1, 3.0]

Multiple scaling ratios can be set, separated by half-width (ASCII) commas

- Tuning

Enter multiple scaling ratios from 1.2.1 Scaling Ratio that produced good detection results

Auto Enhancement - Rotation Angle

- Function

Rotate the original image multiple times and perform inference multiple times, then output the combined inference result

- Application Scenario

Use this when object placement deviates greatly from the coordinate axes

- Parameter Description

Default value: [0.0]

Value range: each rotation angle must be within [0, 360]

Multiple rotation angles can be set, separated by half-width (ASCII) commas

- Tuning

Adjust Auto Enhancement - Rotation Angle according to the object angle in the actual scenario. The tilt angle can be judged from bag patterns and bag opening shapes, or from carton edges and brand logos

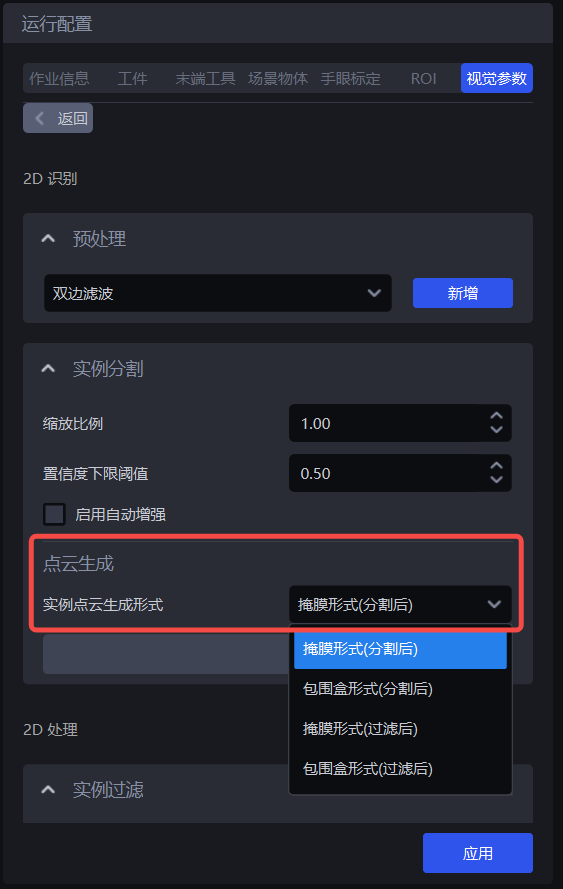

1.3 Point Cloud Generation

In depalletizing scenarios, instance Point Clouds are generally generated using Mask mode (after segmentation) and Mask mode (after filtering)

| Parameter | Option | Sub-parameter | Description |

|---|---|---|---|

| Instance Point Cloud generation mode | Mask mode (after segmentation) | / | Use the segmented instance Mask to generate the Point Cloud |

| | Bounding box mode (after segmentation) | Bounding box scaling ratio (after segmentation) | Use the segmented instance bounding box to generate the Point Cloud |

| | Bounding box mode (after segmentation) | Whether color is needed when generating the Point Cloud (after segmentation) | Whether the generated instance Point Cloud needs attached color |

| | Mask mode (after filtering) | / | Use the filtered instance Mask to generate the Point Cloud |

| | Bounding box mode (after filtering) | Bounding box scaling ratio (after filtering) | Use the filtered instance bounding box to generate the Point Cloud |

| | Bounding box mode (after filtering) | Whether color is needed when generating the Point Cloud (after filtering) | Whether the generated instance Point Cloud needs attached color |

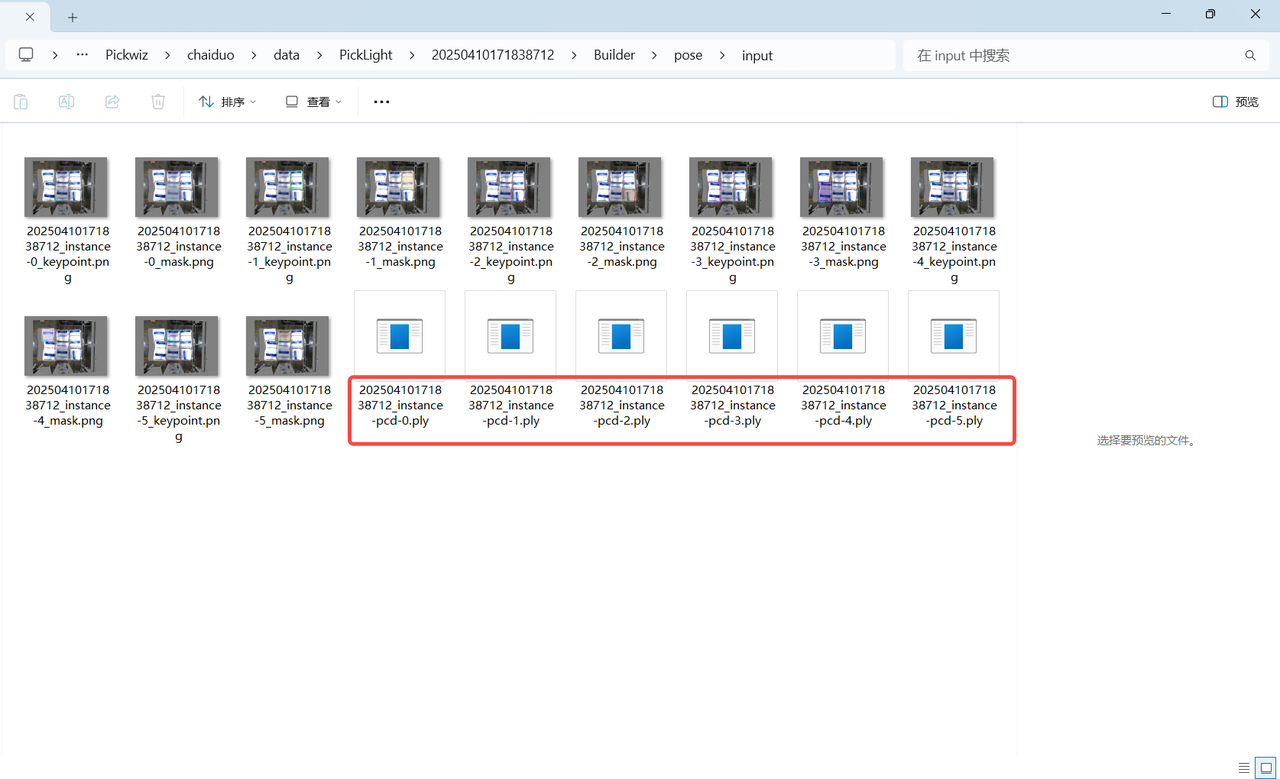

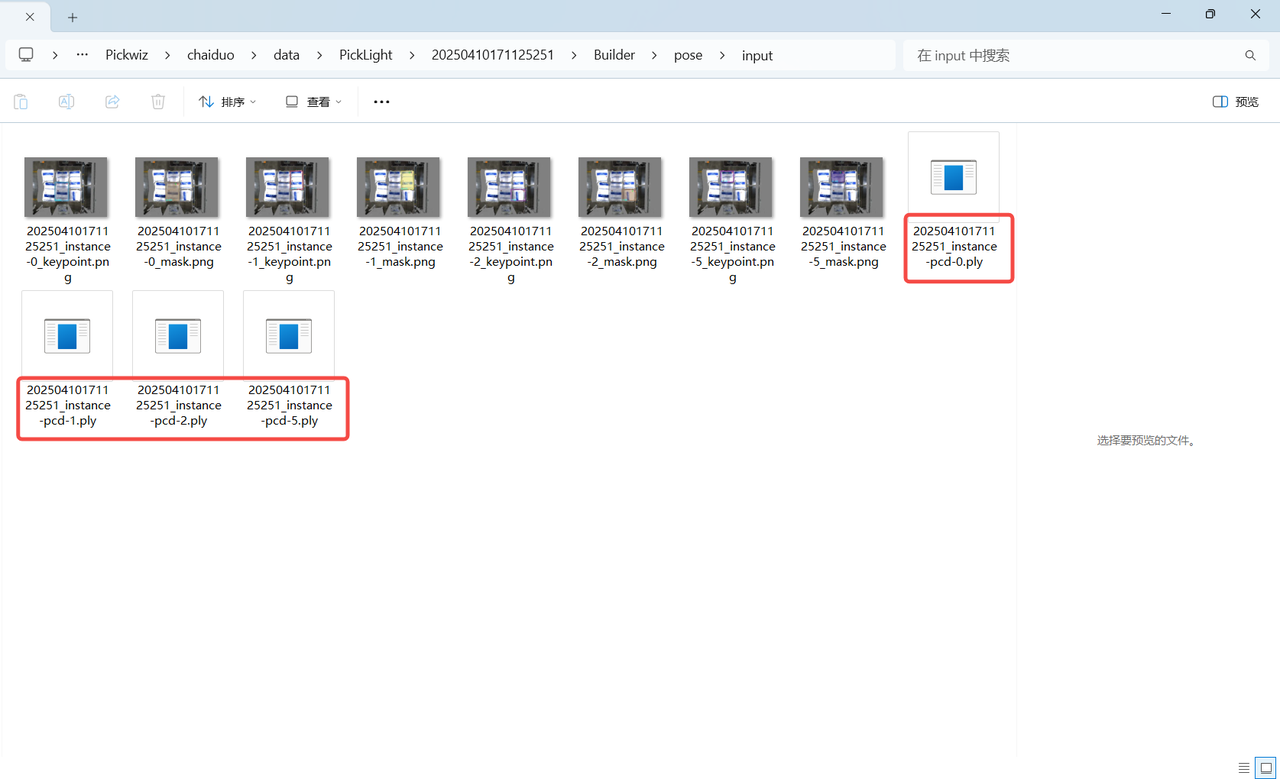

If acceleration is not required, there is no need to use the Instance filtering function. Use Mask mode (after segmentation) to generate the instance Point Cloud. The generated instance Point Cloud can be viewed in the \ProjectName\data\PickLight\HistoricalDataTimestamp\Builder\pose\input folder under the project storage folder.

If acceleration is required, use the Instance filtering function to filter instances first, then use Mask mode (after filtering) to generate the instance Point Cloud. The generated instance Point Cloud can be viewed in the same folder.

1.4 Instance Filtering

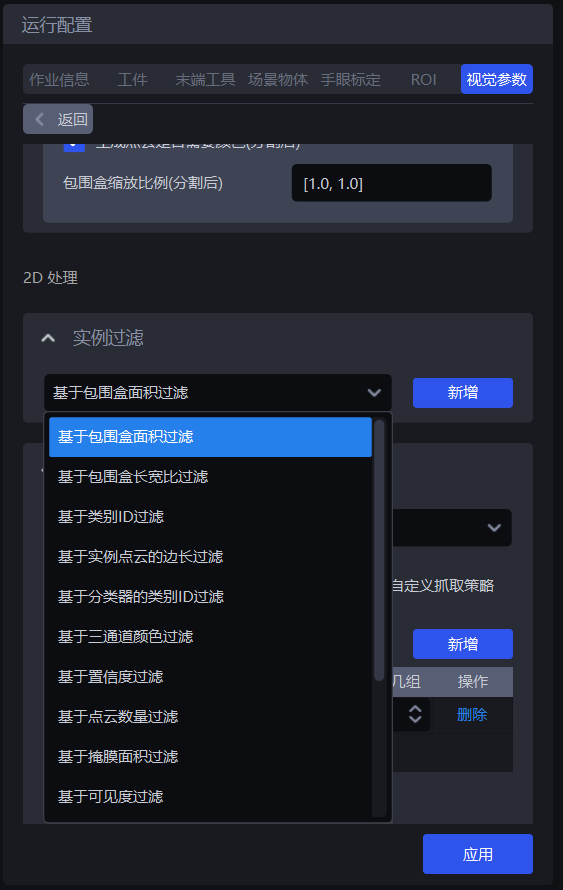

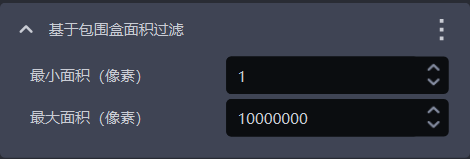

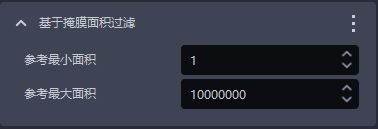

1.4.1 Filter Based on Bounding Box Area

- Function Description

Filter based on the pixel area of the bounding boxes of detected instances.

- Application Scenario

Applicable to scenarios where instance bounding box areas differ greatly. By setting upper and lower bounds for bounding box area, noise in the image can be filtered out, improving image recognition accuracy and avoiding additional processing time caused by noise.

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Minimum area (pixels) | This parameter sets the minimum filtering area of the bounding box. Instances whose bounding box area is lower than this value are filtered out | 1 | [1, 10000000] | pixels |

| Maximum area (pixels) | This parameter sets the maximum filtering area of the bounding box. Instances whose bounding box area is greater than this value are filtered out | 10000000 | [2, 10000000] | pixels |

- Example

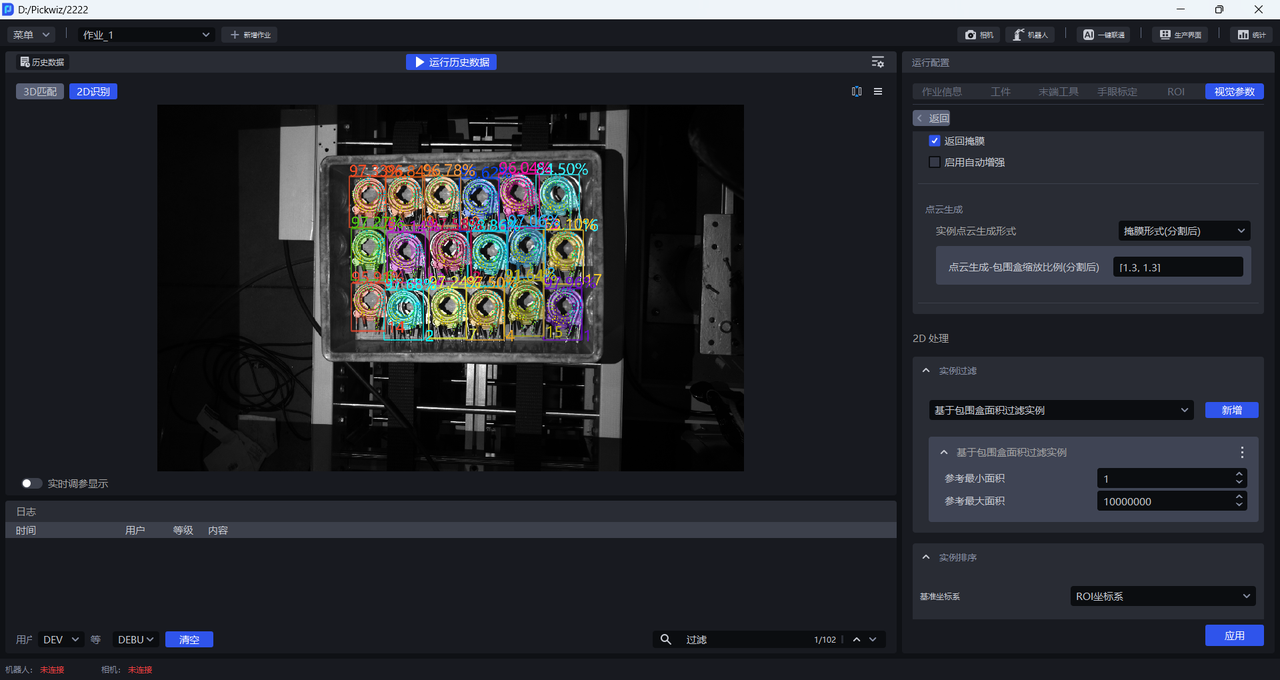

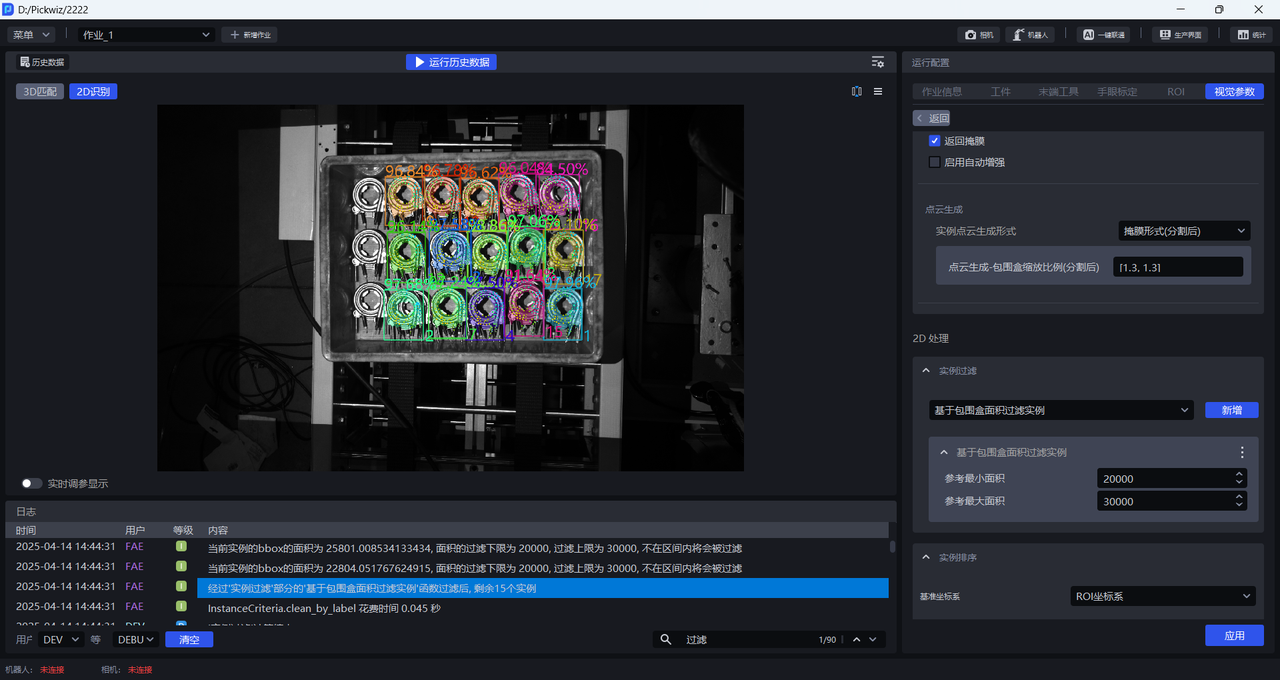

Run with the default values to view the bounding box area of each instance in the logs, as shown below.

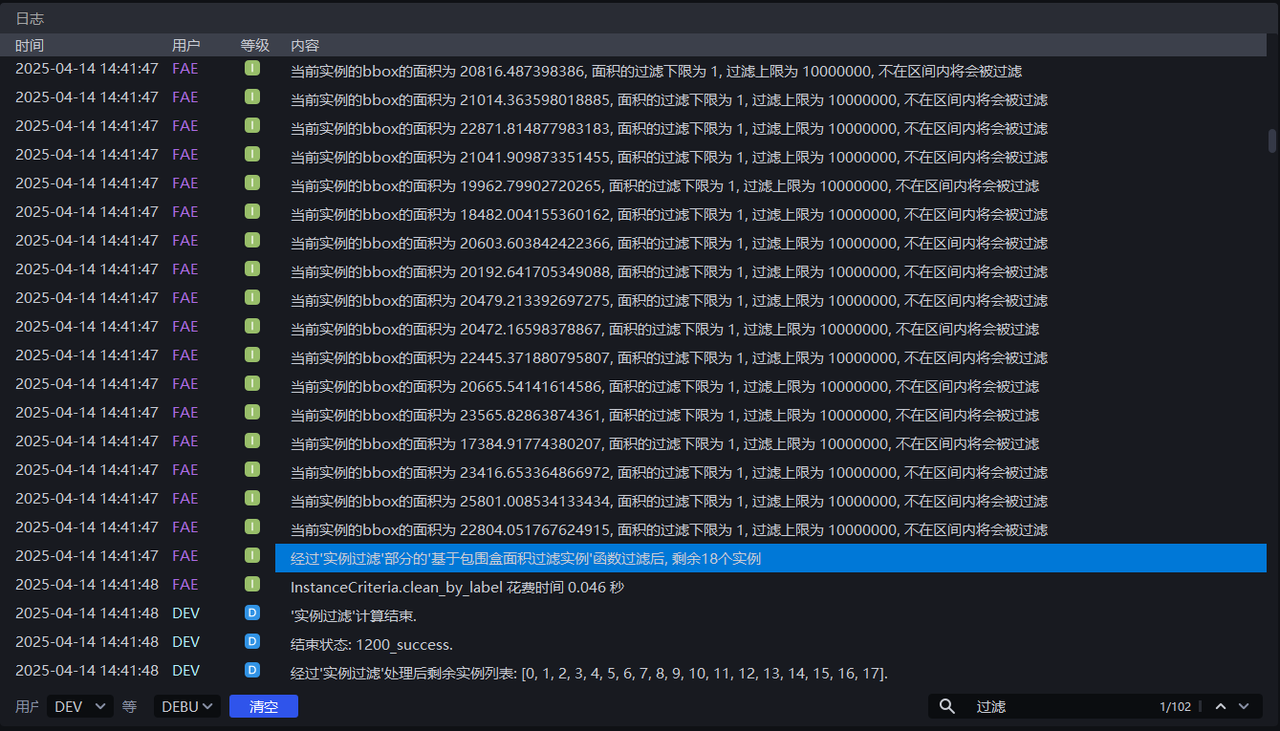

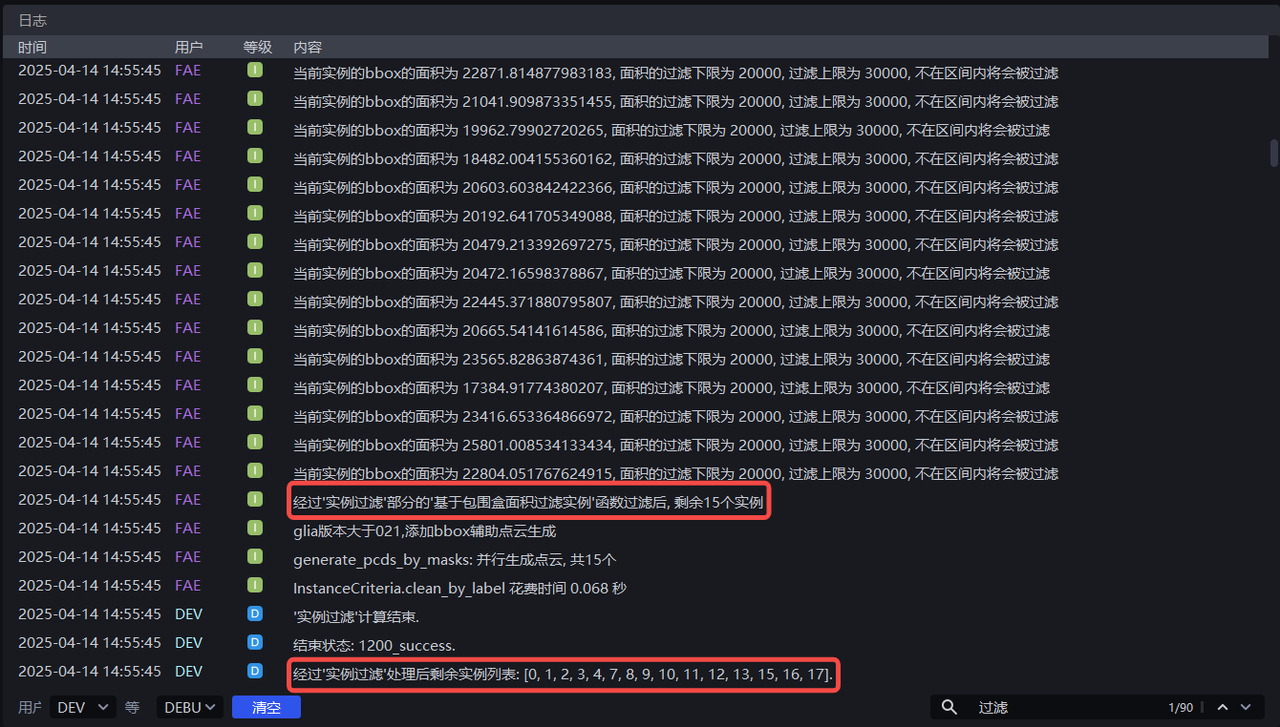

Adjust Minimum area and Maximum area according to the bounding box area of each instance. For example, if Minimum area is set to 20000 and Maximum area is set to 30000, instances whose pixel area is less than 20000 or greater than 30000 are filtered out. The instance filtering process can be viewed in the logs.

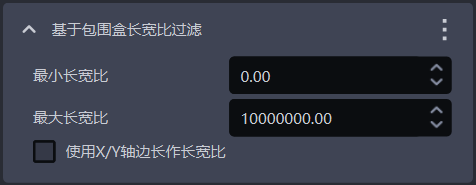
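The area rule from this example can be sketched as follows. Boxes are given as hypothetical (width, height) pairs in pixels, and treating both limits as inclusive is an assumption.

```python
def filter_by_bbox_area(boxes, min_area=1, max_area=10_000_000):
    """Keep (width, height) boxes whose pixel area lies in [min_area, max_area]."""
    return [(w, h) for (w, h) in boxes if min_area <= w * h <= max_area]

# Areas: 15000, 25000, 40000 pixels.
boxes = [(100, 150), (200, 125), (200, 200)]
print(filter_by_bbox_area(boxes, min_area=20000, max_area=30000))  # [(200, 125)]
```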

1.4.2 Filter Based on Bounding Box Aspect Ratio

- Function Description

Instances whose bounding box aspect ratios are outside the specified range are filtered out

- Application Scenario

Applicable to scenarios where the aspect ratios of instance bounding boxes differ greatly

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Minimum aspect ratio | Minimum aspect ratio of the bounding box. Instances whose bounding box aspect ratio is lower than this value are filtered out | 0 | [0, 10000000] |

| Maximum aspect ratio | Maximum aspect ratio of the bounding box. Instances whose bounding box aspect ratio is higher than this value are filtered out | 10000000 | [0, 10000000] |

| Use X/Y edge lengths as the aspect ratio | Cleared (default): the ratio of the longer edge to the shorter edge of the bounding box is used as the aspect ratio, which is suitable when the lengths of the long and short edges of the bounding box differ greatly. Selected: the ratio of the bounding box edge lengths on the X-axis/Y-axis in the pixel coordinate system is used as the aspect ratio, which is suitable when most normal instance bounding boxes have similar long-edge/short-edge ratios, but some abnormally recognized instance bounding boxes differ greatly in the ratio of their X-axis length to Y-axis length | Cleared | / |

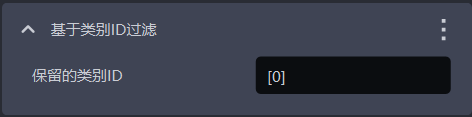
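The two aspect ratio definitions differ only in whether orientation matters. A short sketch, with a hypothetical function name, makes the difference concrete:

```python
def bbox_aspect_ratio(width, height, use_xy=False):
    """Aspect ratio of a bounding box in pixels.

    use_xy=False: longer edge / shorter edge (always >= 1).
    use_xy=True:  X-axis length / Y-axis length (depends on orientation).
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    if use_xy:
        return width / height
    return max(width, height) / min(width, height)

print(bbox_aspect_ratio(100, 200))               # 2.0
print(bbox_aspect_ratio(100, 200, use_xy=True))  # 0.5
```

A box that is recognized rotated by 90 degrees has the same long/short ratio as a correct one, but a different X/Y ratio, so the X/Y option can separate the two.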

1.4.3 Filter Instances Based on Category ID

- Function Description

Filter according to instance category

- Application Scenario

Applicable to scenarios where the incoming materials contain multiple types of Target Objects

- Parameter Description

| Parameter | Description | Default value |

|---|---|---|

| Retained category IDs | Retain instances whose category IDs are in the list; instances whose category IDs are not in the list are filtered out | [0] |

- Example

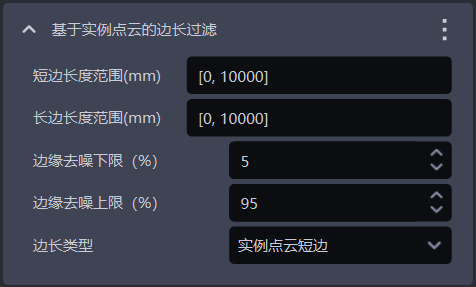

1.4.4 Filter Based on Instance Point Cloud Edge Length

- Function Description

Filter according to the long edge and short edge of the instance Point Cloud

- Application Scenario

Applicable to scenarios where instance Point Clouds differ greatly in distance on the X-axis or Y-axis. By setting the distance range of the instance Point Cloud, noise in the image can be filtered out, improving image recognition accuracy and avoiding additional time in subsequent processing caused by noise.

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Short-edge length range (mm) | Edge length range of the short edge of the Point Cloud | [0, 10000] | [0, 10000] | mm |

| Long-edge length range (mm) | Edge length range of the long edge of the Point Cloud | [0, 10000] | [0, 10000] | mm |

| Lower edge denoising limit (%) | Extract the lower percentage limit of X/Y values (Camera coordinate system) in the instance Point Cloud, and remove Point Cloud data outside the upper and lower limits to avoid noise affecting length calculation | 5 | [0, 100] | / |

| Upper edge denoising limit (%) | Extract the upper percentage limit of X/Y values (Camera coordinate system) in the instance Point Cloud, and remove Point Cloud data outside the upper and lower limits to avoid noise affecting length calculation | 95 | [0, 100] | / |

| Edge length type | Filter by the long edge and short edge of the instance Point Cloud. Instances whose long-edge or short-edge lengths are outside the range are filtered out | Instance Point Cloud short edge | Instance Point Cloud short edge; Instance Point Cloud long edge; Instance Point Cloud long and short edges | / |

- Example

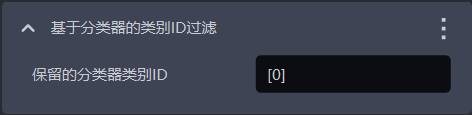
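The purpose of the denoising limits is easiest to see numerically: measuring the extent between the 5th and 95th percentiles ignores stray points that would otherwise inflate the edge length. The sketch below uses a simple nearest-rank percentile and hypothetical function names; the software's exact percentile method is not documented here.

```python
def trimmed_extent(values, lower_pct=5.0, upper_pct=95.0):
    """Extent of the values between two percentiles (nearest-rank style)."""
    ordered = sorted(values)
    last = len(ordered) - 1
    low = ordered[int(round(last * lower_pct / 100.0))]
    high = ordered[int(round(last * upper_pct / 100.0))]
    return high - low

def instance_edges(points, lower_pct=5.0, upper_pct=95.0):
    """Return (short_edge, long_edge) from the X and Y extents of the points."""
    x_len = trimmed_extent([p[0] for p in points], lower_pct, upper_pct)
    y_len = trimmed_extent([p[1] for p in points], lower_pct, upper_pct)
    return min(x_len, y_len), max(x_len, y_len)

# 100 evenly spaced X values (mm) plus one stray point at 5000 mm.
xs = list(range(100)) + [5000]
print(max(xs) - min(xs))   # 5000 (raw extent, dominated by the outlier)
print(trimmed_extent(xs))  # 90
```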

1.4.5 Category ID Filtering Based on Classifier

- Function Description

Filter instances based on classifier category IDs. Instances not in the reference categories are filtered out.

- Application Scenario

In multi-category Target Object scenarios, the vision model may detect multiple types of Target Objects, but the actual task may require only one category. In this case, this function can be used to filter out unnecessary Target Objects

- Parameter Description

The default value is [0], which means that instances with category ID 0 are retained by default, and instances whose category IDs are not in the list are filtered out.

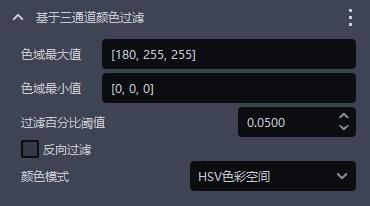

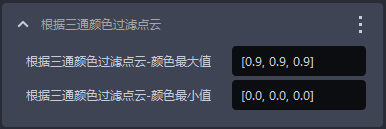

1.4.6 Filter Based on Three-Channel Color

- Function Description

Instances can be filtered out using three-channel color thresholds (HSV or RGB).

- Application Scenario

Applicable when the colors of incorrect instances and correct instances are clearly distinguishable.

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Maximum color range value | Maximum color value | [180,255,255] | [[0,0,0],[255,255,255]] |

| Minimum color range value | Minimum color value | [0,0,0] | [[0,0,0],[255,255,255]] |

| Filtering percentage threshold | Color pass-rate threshold | 0.05 | [0,1] |

| Reverse filtering | Select it to remove instances whose proportion outside the color range is lower than the threshold; clear it to remove instances whose proportion within the color range in the instance image is lower than the threshold | Cleared | / |

| Color mode | Color space selected for color filtering | HSV color space | RGB color space; HSV color space |

- Example

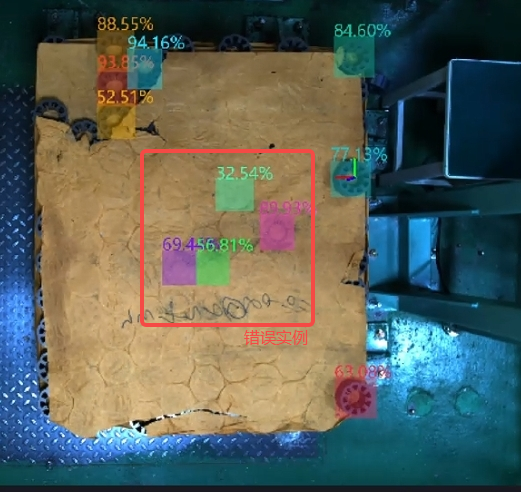

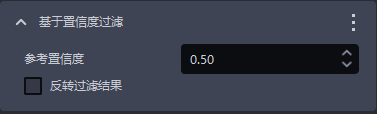

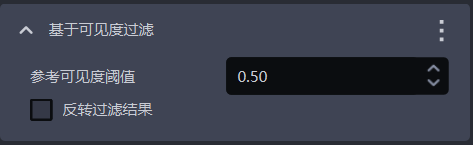
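The interaction between the color range, the Filtering percentage threshold, and Reverse filtering can be sketched as below. Pixels are three-channel tuples in whichever color space is selected; the function names and the inclusive comparisons are assumptions.

```python
def in_color_range(pixel, lower, upper):
    """True when every channel lies within its [lower, upper] bound."""
    return all(lo <= ch <= hi for ch, lo, hi in zip(pixel, lower, upper))

def keep_instance_by_color(pixels, lower=(0, 0, 0), upper=(180, 255, 255),
                           ratio_threshold=0.05, reverse=False):
    """Decide whether an instance survives three-channel color filtering.

    reverse=False: keep when the share of pixels inside the range reaches
    the threshold. reverse=True: keep when the share outside reaches it.
    """
    if not pixels:
        return False
    inside = sum(in_color_range(p, lower, upper) for p in pixels) / len(pixels)
    measured = (1.0 - inside) if reverse else inside
    return measured >= ratio_threshold
```

For example, if 10% of an instance's pixels fall inside the range, the instance is kept at the default threshold of 0.05 but removed at a threshold of 0.2.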

1.4.7 Filter Based on Confidence

- Function Description

Filter according to the Confidence score of the instance

- Application Scenario

Applicable to scenarios where instance Confidence values differ greatly

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Reference Confidence | Retain instances whose Confidence is greater than the threshold, and filter out instances whose Confidence is less than the threshold. | 0.5 | [0,1] |

| Invert filtering result | After inversion, retain instances whose Confidence is less than the threshold, and filter out instances whose Confidence is greater than the threshold. | Cleared | / |

- Example

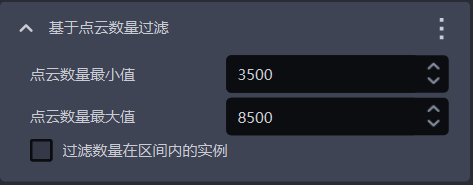

1.4.8 Filter Based on Point Cloud Quantity

- Function Description

Filter according to the number of downsampled instance Point Cloud points

- Application Scenario

Applicable when the instance Point Cloud contains a large amount of noise

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Minimum Point Cloud quantity | Minimum Point Cloud quantity | 3500 | [1, 10000000] |

| Maximum Point Cloud quantity | Maximum Point Cloud quantity | 8500 | [2, 10000000] |

| Filter instances whose quantity is within the interval | Select it to filter instances whose Point Cloud quantity is within the interval between the minimum and maximum values; clear it to filter instances whose Point Cloud quantity is outside the interval | Cleared | / |
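The interval option simply flips which side of the interval is removed. A minimal sketch, with a hypothetical function name and inclusive bounds assumed:

```python
def passes_point_count(count, minimum=3500, maximum=8500, filter_inside=False):
    """Return True when an instance with `count` points is retained.

    filter_inside=False: instances outside [minimum, maximum] are removed.
    filter_inside=True:  instances inside [minimum, maximum] are removed.
    """
    inside = minimum <= count <= maximum
    return (not inside) if filter_inside else inside

print(passes_point_count(5000))                      # True
print(passes_point_count(1200))                      # False
print(passes_point_count(5000, filter_inside=True))  # False
```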

1.4.9 Filter Based on Mask Area

- Function Description

Filter image masks based on the sum of the Mask pixels of detected instances (that is, the pixel area).

- Application Scenario

Applicable to scenarios where instance Mask areas differ greatly. By setting upper and lower limits for the Mask area, noise in the image Mask can be filtered out, improving image recognition accuracy and avoiding additional time in subsequent processing caused by noise.

- Parameter Description

| Parameter name | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Reference minimum area | This parameter sets the minimum filtering area of the Mask. Instances whose Mask area is lower than this value are filtered out | 1 | [1, 10000000] | pixels |

| Reference maximum area | This parameter sets the maximum filtering area of the Mask. Instances whose Mask area is higher than this value are filtered out | 10000000 | [2, 10000000] | pixels |

- Example

1.4.10 Filter Based on Visibility

- Function Description

Filter according to the visibility score of the instance

- Application Scenario

Applicable to scenarios where instance visibility differs greatly

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Reference visibility threshold | Retain instances whose visibility is greater than the threshold, and filter out instances whose visibility is less than the threshold. Visibility is used to judge how visible the instance is in the image. The more the Target Object is occluded, the lower the visibility. | 0.5 | [0,1] |

| Invert filtering result | After inversion, retain instances whose visibility is lower than the threshold, and filter out instances whose visibility is higher than the threshold. | Cleared | / |

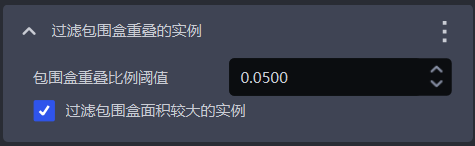

1.4.11 Filter Instances with Overlapping Bounding Boxes

- Function Description

Filter instances whose bounding boxes overlap

- Application Scenario

Applicable to scenarios where instance bounding boxes intersect each other

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Bounding box overlap ratio threshold | Threshold for the ratio of the overlapping area of bounding boxes to the area of the instance bounding box | 0.05 | [0, 1] |

| Filter the instance with the larger bounding box area | Select it to filter the instance with the larger area among two instances whose bounding boxes overlap; clear it to filter the instance with the smaller area among the two | Selected | / |

- Example

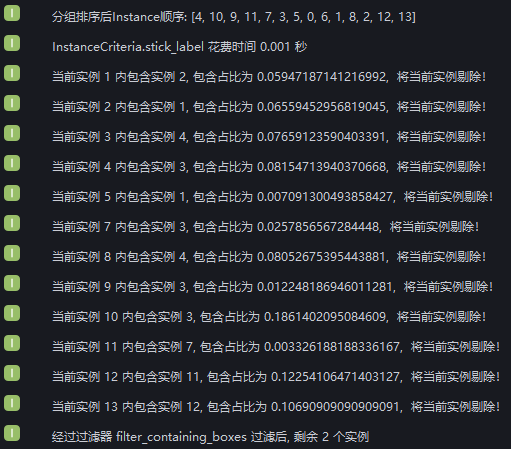

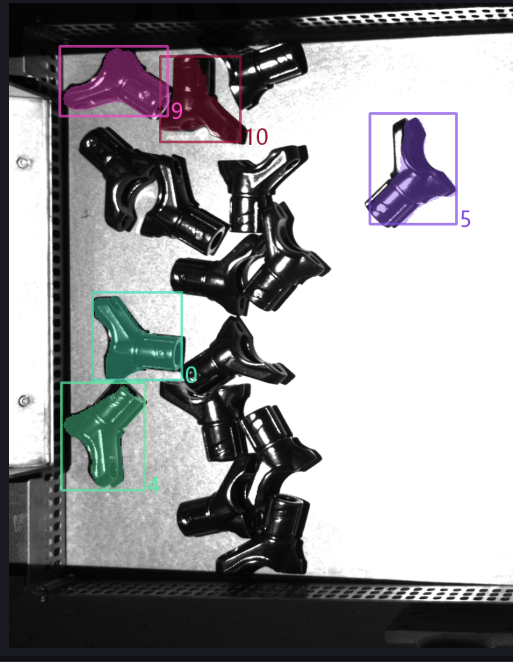

Add the Filter Instances with Overlapping Bounding Boxes function and run it with the default values. The logs show the bounding box overlap of each instance. Two instances remain after filtering.

The logs show that 12 instances are filtered out because of bounding box overlap, leaving 2 instances whose bounding boxes do not overlap

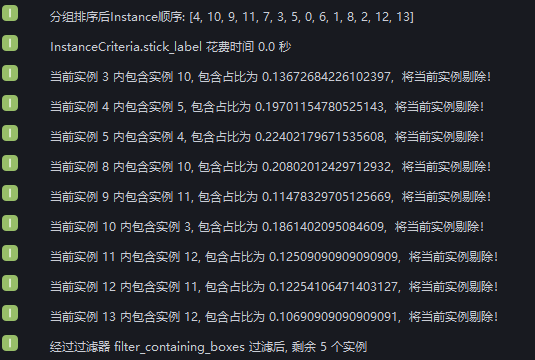

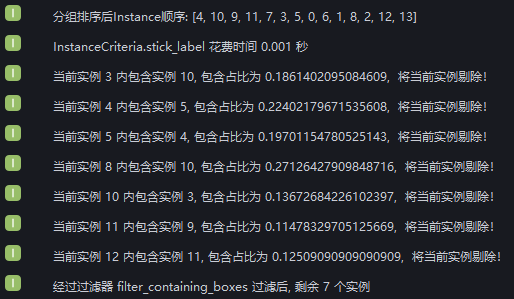

Set Bounding box overlap ratio threshold to 0.1 and select Filter the instance with the larger bounding box area. View the instance filtering process in the logs. Nine instances are filtered out because the ratio of the overlapping area to the instance bounding box area is greater than 0.1, three instances are retained because the ratio is less than 0.1, and two instances have non-overlapping bounding boxes.

Set Bounding box overlap ratio threshold to 0.1 and clear Filter the instance with the larger bounding box area. View the instance filtering process in the logs. The ratio of overlapping area to instance bounding box area is greater than 0.1 for 9 instances, but 2 of them are retained because their bounding box areas are smaller than those of the instances overlapping with them. Therefore, 7 instances are filtered out, 3 instances are retained because the ratio is less than 0.1, and 2 instances have non-overlapping bounding boxes.

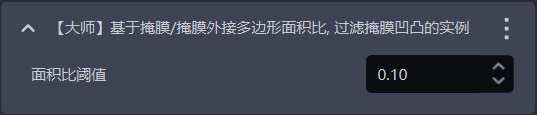
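
The ratio that the threshold is compared against can be sketched as follows. This is a minimal illustration, assuming axis-aligned boxes given as `(x_min, y_min, x_max, y_max)` in pixels; the function names are hypothetical and are not part of the software.

```python
def box_area(box):
    """Area of an axis-aligned box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return max(0.0, x_max - x_min) * max(0.0, y_max - y_min)


def overlap_ratio(box, other):
    """Overlapping area of the two boxes divided by the area of `box`."""
    intersection = box_area((
        max(box[0], other[0]),
        max(box[1], other[1]),
        min(box[2], other[2]),
        min(box[3], other[3]),
    ))
    area = box_area(box)
    return intersection / area if area > 0 else 0.0


# Two boxes that share a 10-pixel-wide strip.
small = (0, 0, 100, 100)
large = (90, 0, 290, 100)
ratio_small = overlap_ratio(small, large)  # overlap relative to the small box
ratio_large = overlap_ratio(large, small)  # overlap relative to the large box
```

Because the ratio is taken relative to each instance's own bounding box, the same overlap yields a higher ratio for the smaller box than for the larger one.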

1.4.12 [Advanced] Filter Instances with Concave/Convex Masks Based on the Mask / Mask Circumscribed Polygon Area Ratio

- Function Description

Calculate the area ratio of the Mask to the polygon circumscribed around the Mask. If the ratio is lower than the set threshold, the instance is filtered out

- Application Scenario

Applicable when the Target Object Mask has jagged edges or concave/convex irregularities.

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Area ratio threshold | Threshold for the Mask / convex hull area ratio. If it is lower than the set threshold, the instance is filtered out. | 0.1 | [0,1] |

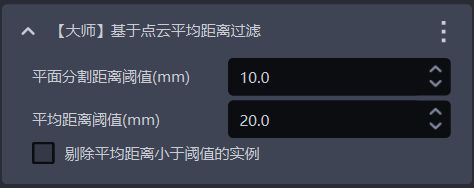
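
The area ratio can be illustrated with a small sketch. It assumes the Mask outline is available as an ordered list of `(x, y)` vertices, which is a simplification of a pixel Mask; all function names are hypothetical.

```python
def polygon_area(vertices):
    """Shoelace formula; vertices are (x, y) pairs in order around the outline."""
    total = 0.0
    count = len(vertices)
    for i in range(count):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % count]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices in counterclockwise order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def hull_area_ratio(outline):
    """Outline area divided by the area of its convex hull (1.0 for convex shapes)."""
    hull_area = polygon_area(convex_hull(outline))
    return polygon_area(outline) / hull_area if hull_area > 0 else 0.0


# An L-shaped outline has a concave corner, so its ratio is below 1.
l_shape = [(0, 0), (2, 0), (2, 1), (1, 1), (1, 2), (0, 2)]
ratio = hull_area_ratio(l_shape)
```

An instance would be filtered out when this ratio falls below the Area ratio threshold.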

1.4.13 [Advanced] Filter Based on the Average Point Cloud Distance

- Function Description

Filter based on the average distance from points in the Point Cloud to the fitted plane, removing uneven instance Point Clouds

- Application Scenario

Applicable to scenarios where the Point Cloud of a planar Target Object is bent

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Plane segmentation distance threshold (mm) | Extract a plane from the bent instance Point Cloud. Points whose distances to the plane are smaller than this threshold are regarded as points on the plane | 10 | [-1000, 1000] | mm |

| Average distance threshold (mm) | Average value of the distances from points in the instance Point Cloud to the extracted plane | 20 | [-1000, 1000] | mm |

| Remove instances whose average distance is less than the threshold | Select it to filter instances whose average distance from points to the extracted plane is less than the average distance threshold; clear it to filter instances whose average distance is greater than the average distance threshold | Cleared | / | / |

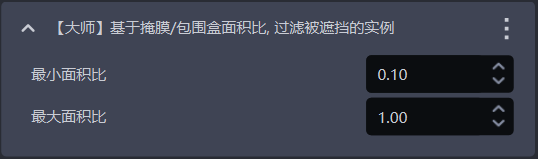
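
The sketch below illustrates the average-distance check. It assumes the plane has already been extracted and is given by the coefficients `(a, b, c, d)` of `a*x + b*y + c*z + d = 0`; how the software extracts the plane is not described here, and the function names are hypothetical.

```python
import math


def point_plane_distance(point, plane):
    """Unsigned distance from a point (x, y, z) to the plane a*x + b*y + c*z + d = 0."""
    a, b, c, d = plane
    x, y, z = point
    return abs(a * x + b * y + c * z + d) / math.sqrt(a * a + b * b + c * c)


def average_plane_distance(points, plane):
    """Mean distance from all points of an instance to the plane (mm)."""
    return sum(point_plane_distance(p, plane) for p in points) / len(points)


def is_filtered(points, plane, average_distance_threshold=20.0,
                remove_below_threshold=False):
    """Mirror the checkbox: remove instances below or above the threshold."""
    average = average_plane_distance(points, plane)
    if remove_below_threshold:
        return average < average_distance_threshold
    return average > average_distance_threshold


plane = (0.0, 0.0, 1.0, 0.0)  # the plane z = 0
flat = [(0, 0, 0.0), (10, 0, 1.0), (0, 10, -1.0), (10, 10, 2.0)]
bent = [(0, 0, 0.0), (10, 0, 30.0), (0, 10, 60.0), (10, 10, 30.0)]
```

With the default threshold of 20 mm and the checkbox cleared, the flat instance is kept and the bent instance is filtered out.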

1.4.14 [Advanced] Filter Occluded Instances Based on the Mask / Bounding Box Area Ratio

- Function Description

Calculate the Mask / bounding box area ratio. Instances whose ratios are outside the minimum and maximum range are filtered out

- Application Scenario

Used to filter instances of occluded Target Objects

- Parameter Description

| Parameter | Description | Default value | Value range |

|---|---|---|---|

| Minimum area ratio | Lower limit of the Mask / bounding box area ratio range. The smaller the ratio, the more severely the instance is occluded | 0.1 | [0,1] |

| Maximum area ratio | Upper limit of the Mask / bounding box area ratio range. The closer the ratio is to 1, the less the instance is occluded | 1.0 | [0,1] |

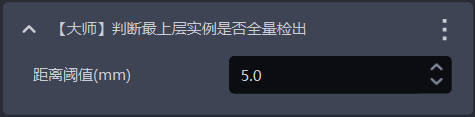
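
A minimal sketch of the ratio check, assuming the Mask is a 2D grid of 0/1 values and the bounding box is `(x_min, y_min, x_max, y_max)` in pixels with exclusive maximum edges. The function names are hypothetical.

```python
def mask_bbox_area_ratio(mask, bbox):
    """Number of Mask pixels divided by the bounding box area."""
    x_min, y_min, x_max, y_max = bbox
    bbox_area = (x_max - x_min) * (y_max - y_min)
    if bbox_area <= 0:
        return 0.0
    mask_area = sum(1 for row in mask for value in row if value)
    return mask_area / bbox_area


def keep_instance(mask, bbox, min_ratio=0.1, max_ratio=1.0):
    """Keep the instance only if its ratio lies inside [min_ratio, max_ratio]."""
    return min_ratio <= mask_bbox_area_ratio(mask, bbox) <= max_ratio


# Half of the bounding box is covered by the Mask.
half_covered = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 1, 0, 0],
]
bbox = (0, 0, 4, 4)
```

Raising the Minimum area ratio above 0.5 would filter this instance out as occluded.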

1.4.15 [Advanced] Determine Whether All Top-Layer Instances Have Been Detected

- Function Description

A foolproofing mechanism that checks whether all top-layer instances have been detected. If any top-layer instance has not been detected, an error is reported and the Workflow ends

- Application Scenario

Applicable to scenarios where one image capture is used for multiple picks or where picking must be performed in sequence, preventing missed picks caused by incomplete instance detection from affecting subsequent tasks

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit | Tuning |

|---|---|---|---|---|---|

| Distance threshold | Used to determine whether a Target Object is in the top layer. If the distance between a point and the highest point of the Target Object Point Cloud is smaller than the distance threshold, the point is considered part of the top-layer Point Cloud; otherwise, it is not considered part of the top-layer Point Cloud. | 5 | [0.1, 1000] | mm | Should be smaller than the height of the Target Object |

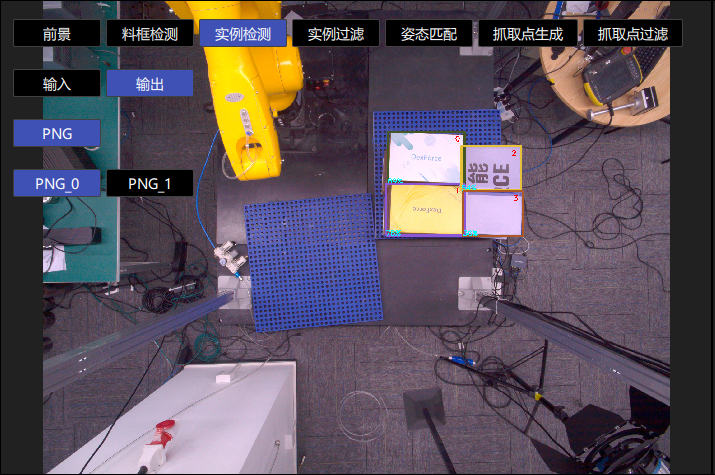
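
The way the Distance threshold selects the top-layer Point Cloud can be sketched as follows. This covers only the top-layer selection, assumes Z points upward (as in the ROI coordinate system) with values in mm, and uses a hypothetical function name.

```python
def top_layer_points(points, distance_threshold=5.0):
    """Return the points whose height is within the threshold of the highest point."""
    highest = max(p[2] for p in points)
    return [p for p in points if highest - p[2] < distance_threshold]


cloud = [(0, 0, 500.0), (10, 0, 498.0), (0, 10, 300.0)]
top = top_layer_points(cloud)
```

If the threshold were larger than the height of the Target Object, points from the layer below would also be counted as top-layer points, which is why the tuning note recommends a smaller value.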

1.5 Instance Sorting

- Function Description

Group, sort, and extract instances according to the selected strategy

- Application Scenario

Applicable to depalletizing, random picking, and ordered loading/unloading scenarios

If sorting is not required, you do not need to configure a specific strategy.

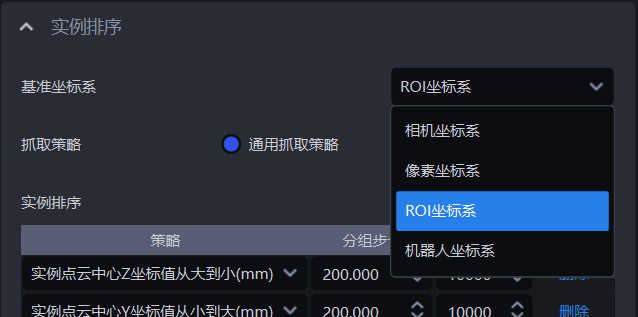

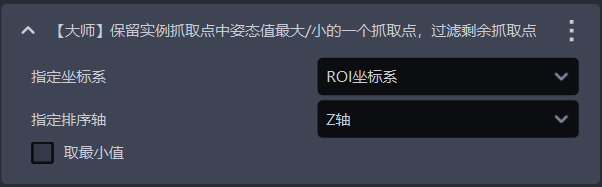

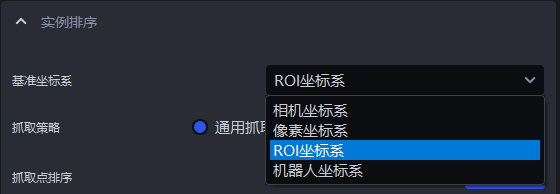

1.5.1 Reference Coordinate System

- Function Description

Set a unified coordinate system for all instances for instance grouping and sorting

- Application Scenario

Applicable to depalletizing, random picking, and ordered loading/unloading scenarios

Strategies related to coordinates require the reference coordinate system to be set first

- Parameter Description

| Parameter | Description | Illustration |

|---|---|---|

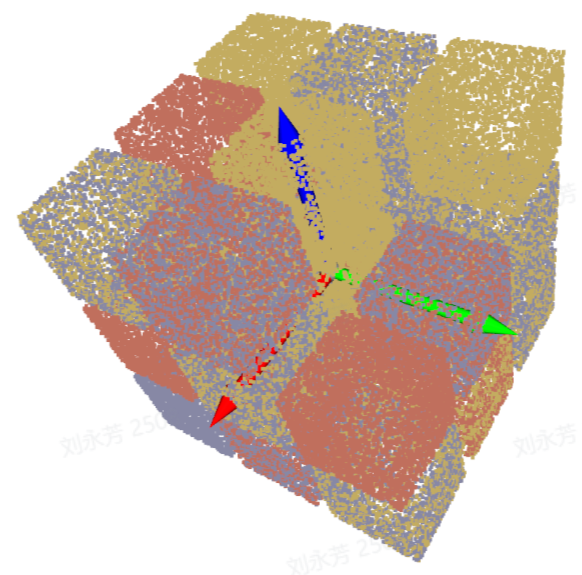

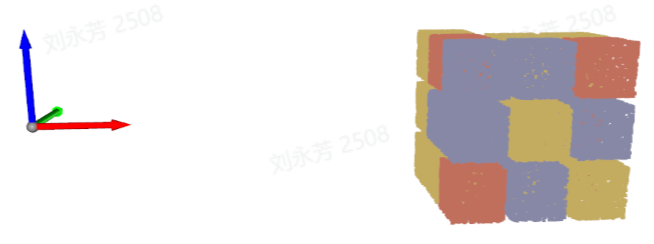

| Camera coordinate system | The coordinate system origin is above the object, and the positive Z-axis points downward; the XYZ values are the values of the object center point in this coordinate system |  |

| ROI coordinate system | The coordinate system origin is approximately at the center of the stack, and the positive Z-axis points upward; the XYZ values are the values of the object center point in this coordinate system |  |

| Robot Arm coordinate system | The coordinate system origin is on the Robot Arm itself, and the positive Z-axis generally points upward; the XYZ values are the values of the object center point in this coordinate system |  |

| Pixel coordinate system | The coordinate system origin is at the upper-left corner of the RGB image, which is a two-dimensional plane coordinate system; X and Y are the x and y values of the bbox detection box, and Z is 0 |  |

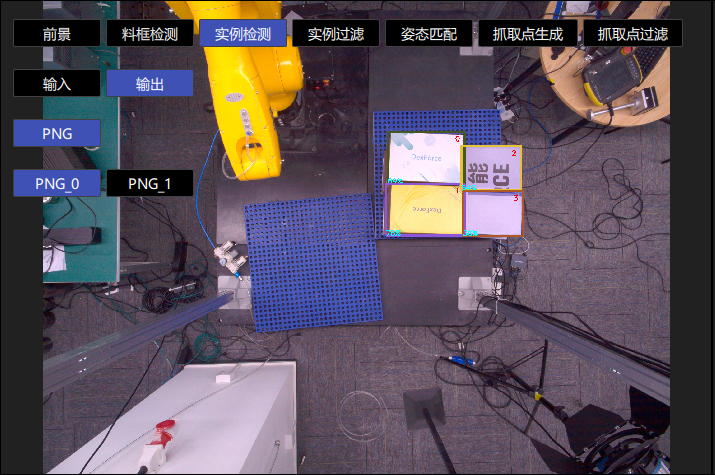

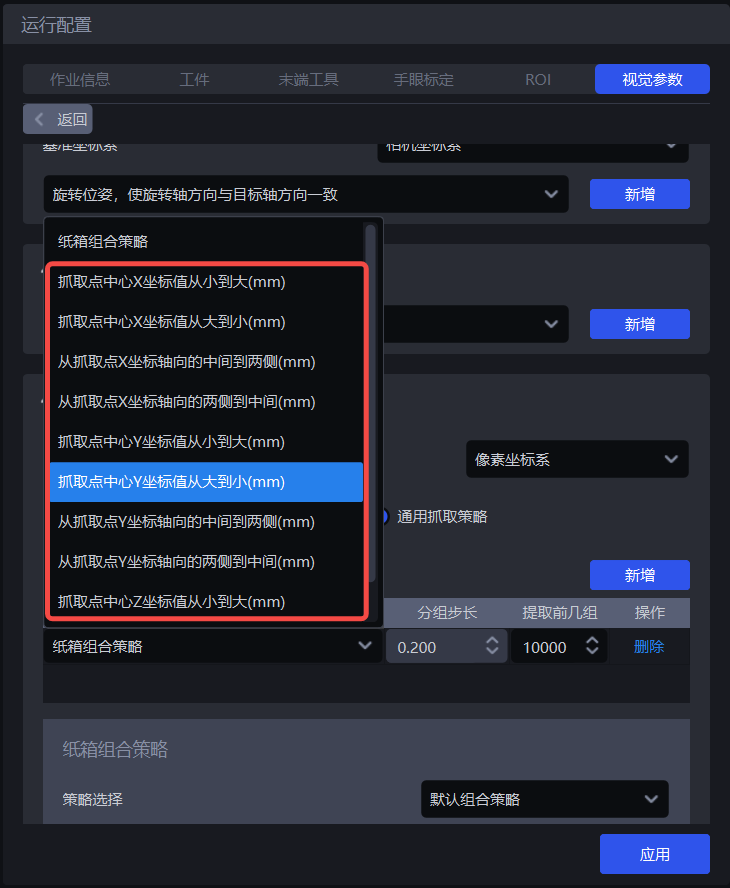

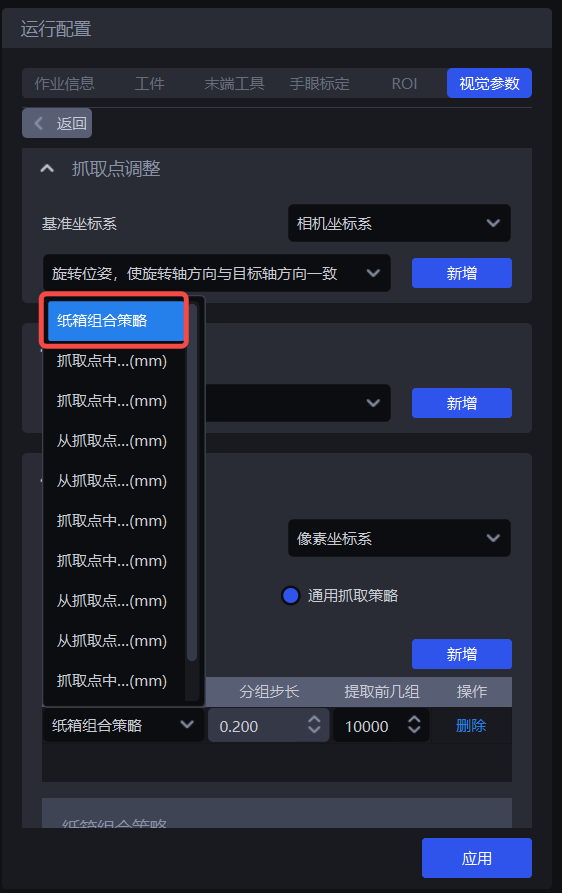

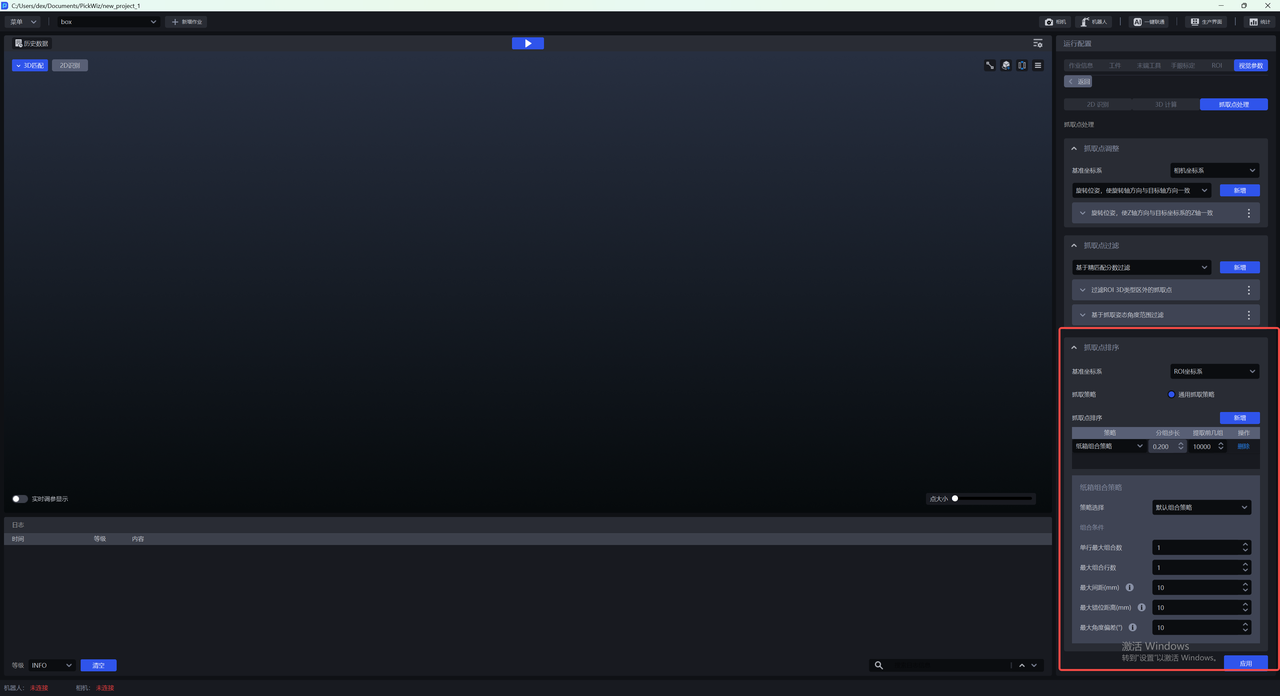

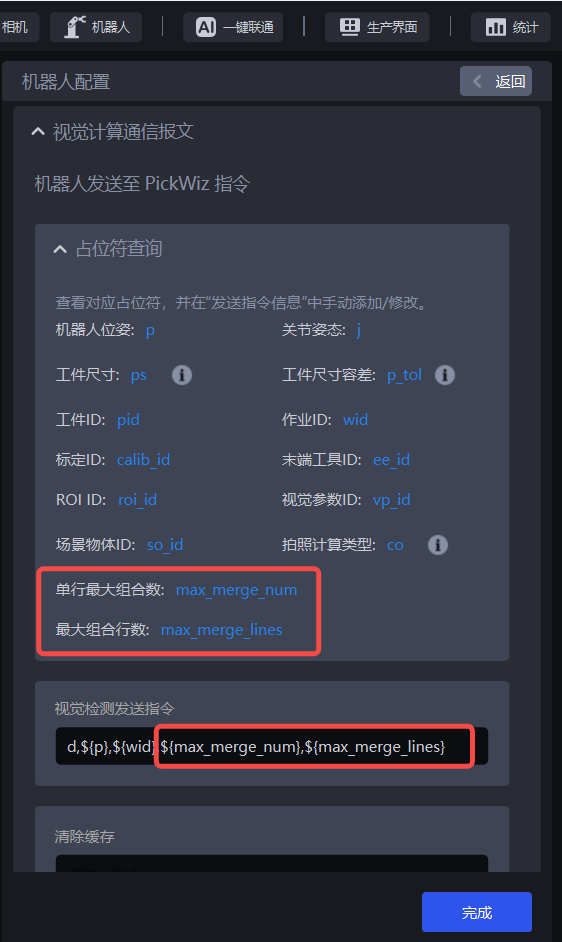

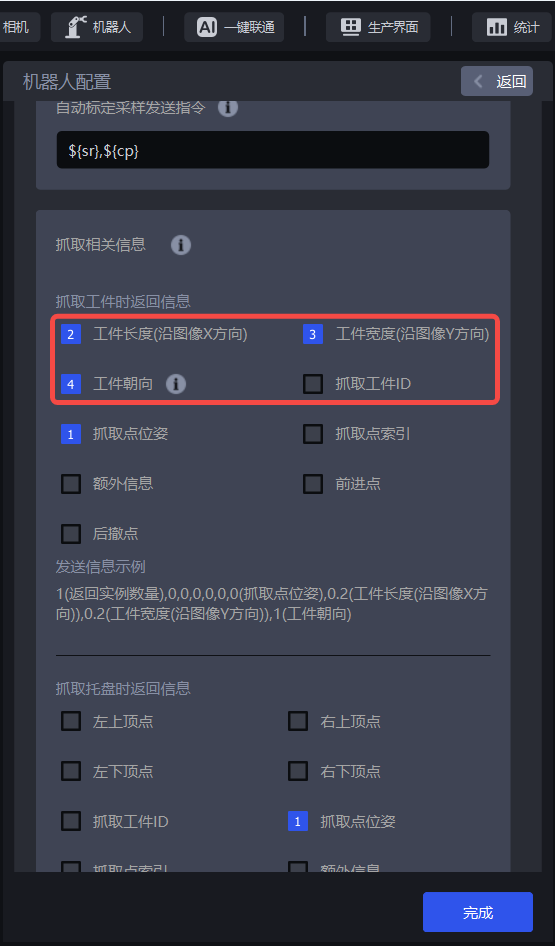

1.5.2 General Grasping Strategy

- Parameter Description

| Parameter | Description | Default value |

|---|---|---|

| Strategy | Select which value is used for grouping and sorting and how to sort, including the XYZ coordinates of the instance Point Cloud center, the bounding box aspect ratio, the distance from the instance Point Cloud center to the ROI center, and so on. Multiple strategies can be stacked and executed in sequence | Instance Point Cloud center X coordinate from small to large (mm) |

| Grouping step size | According to the selected strategy, instances are divided into several groups based on the step size, which is the spacing between two groups of instances. For example, if the strategy selected is “Instance Point Cloud center Z coordinate from large to small (mm)”, then the Z coordinates of all instance Point Cloud centers are sorted from large to small, then grouped by step size, and the corresponding instances are also divided into several groups | / |

| Extract the first N groups | How many groups of instances need to be retained after grouping and sorting | 10000 |

| Strategy name | Description | Grouping step size (default value) | Grouping step size (value range) | Extract the first N groups (default value) |

|---|---|---|---|---|

| Instance Point Cloud center XYZ coordinate from large to small / from small to large (mm) | Use the XYZ coordinates of each instance's Point Cloud center for grouping and sorting. The reference coordinate system should be set before using this strategy for sorting | 200.000 | (0, 10000000] | 10000 |

| From the middle to both sides / from both sides to the middle along the XY coordinate axes of the instance Point Cloud center (mm) | Use the XY coordinate values of each instance's Point Cloud center and perform grouping and sorting in the direction of “middle to both sides” or “both sides to middle”. The reference coordinate system should be set before using this strategy for sorting | 200.000 | (0, 10000000] | 10000 |

| Bounding box aspect ratio from large to small / from small to large | Use the ratio of the long edge to the short edge of the bounding box for grouping and sorting | 1 | (0, 10000] | 10000 |

| Mask area from large to small / from small to large | Use the Mask area of each instance for grouping and sorting | 10000 | [1, 10000000] | 10000 |

| Distance from the instance Point Cloud center to the ROI center from near to far / from far to near (mm) | Use the distance between each instance's Point Cloud center and the center of the ROI coordinate system for grouping and sorting | 200.000 | (0, 10000] | 10000 |
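
The interaction of strategy, grouping step size, and Extract the first N groups can be sketched as below. The exact grouping rule is not specified here, so this is one plausible reading: instances are sorted by the strategy value, and a new group starts whenever a value differs from the first value of the current group by more than the step size. The function name and the dictionary layout are hypothetical.

```python
def group_and_sort(instances, key, step, descending=False, first_n_groups=10000):
    """Sort instances by key, split them into groups by step size, keep the first N groups."""
    ordered = sorted(instances, key=key, reverse=descending)
    groups = []
    for instance in ordered:
        value = key(instance)
        if groups and abs(value - key(groups[-1][0])) <= step:
            groups[-1].append(instance)  # still within one step of the group's first value
        else:
            groups.append([instance])    # gap larger than the step: start a new group
    return groups[:first_n_groups]


# Three layers roughly 300 mm apart, sorted by Z from large to small.
instances = [
    {"id": 0, "z": 905.0},
    {"id": 1, "z": 1210.0},
    {"id": 2, "z": 598.0},
    {"id": 3, "z": 1195.0},
    {"id": 4, "z": 900.0},
]
layers = group_and_sort(instances, key=lambda i: i["z"], step=200.0, descending=True)
top_layer = group_and_sort(instances, key=lambda i: i["z"], step=200.0,
                           descending=True, first_n_groups=1)
```

Under this reading, setting Extract the first N groups to 1 with a Z strategy retains only the top layer, and a second stacked strategy could then order the instances inside that layer.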

- Example

1.5.3 Custom Grasping Strategy

(1) Function Description

Switch Grasping Strategy to Custom Grasping Strategy, then click Add to add a custom grasping strategy.

Customize the picking order of each Target Object. If it is difficult to achieve picking with a general grasping strategy, or if it is difficult to tune suitable parameters because of Point Cloud noise and similar issues, you can consider using a custom grasping strategy

Custom grasping strategies are applicable to depalletizing and ordered loading/unloading scenarios, but not to random picking scenarios, because the Target Objects in a custom grasping strategy must be ordered (that is, their order is fixed)

A custom grasping strategy can only be used together with a single general grasping strategy, and the strategy can only be set to Z coordinate from small to large

(2) Parameter Description

| Parameter | Description | Default value | Value range | Tuning |

|---|---|---|---|---|

| IoU threshold | Indicates the overlap threshold between the annotated bbox and the detected bbox. The overlap is used to determine which image's sorting method should be used when sorting the current Target Object instances. | 0.7 | [0,1] | The larger the threshold, the stricter the matching, but the worse the anti-interference ability. Small changes in shape or position may cause matching failure, possibly matching an incorrect custom strategy and resulting in the wrong sorting order |

| Pixel distance threshold | Represents the size difference between a matchable bbox and the detected bbox. | 100 | [0,1000] | The smaller the threshold, the stricter the matching and the better the anti-interference ability. However, if the Target Object arrangements of different layers are very similar, the custom strategy may still be mismatched, resulting in an incorrect sorting order. |
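
The overlap measured by the IoU threshold is the standard intersection over union of two boxes. A minimal sketch, assuming axis-aligned boxes given as `(x_min, y_min, x_max, y_max)` in pixels; the function name is illustrative only.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    intersection = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


annotated = (0, 0, 100, 100)
detected = (10, 0, 110, 100)   # same size, shifted 10 pixels to the right
matches = iou(annotated, detected) >= 0.7
```

A 10-pixel shift of a 100-pixel box still gives an IoU of about 0.82, so it matches at the default threshold of 0.7; a threshold close to 1 would reject it.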

(3) Select the Reference Coordinate System

When using a custom grasping strategy, only the Camera coordinate system or the Pixel coordinate system can be selected

If there are multiple layers of Target Objects, select the Camera coordinate system; if there is only one layer of Target Objects, select the Pixel coordinate system

(4) Strategy, Grouping Step Size, and Number of Groups to Extract

| Parameter | Description | Default value |

|---|---|---|

| Strategy | Only Instance Point Cloud center Z coordinate from large to small / from small to large (mm) can be selected | / |

| Grouping step size | According to the strategy of Z coordinates from small to large, sort the Z coordinates of instances from small to large and divide the instances into several groups according to the step size | 10000 |

| Extract the first N groups | How many groups of instances need to be retained after grouping and sorting | 10000 |

(5) Capture an Image / Add a Local Image

Click Capture Image to obtain an image from the currently connected Camera, or click Add Local Image to import an image locally. You need as many images as there are layers or different placement patterns of Target Objects. If every layer is the same, only one image is needed. Right-click an image to delete it.

Press and hold the left mouse button on the acquired image and drag to annotate bounding boxes (bboxes). Press the DELETE key to delete annotated bboxes one by one.

2. 3D Calculation

This section mainly explains the functions related to Pick Point generation and provides parameter tuning suggestions.

2.1 Preprocessing

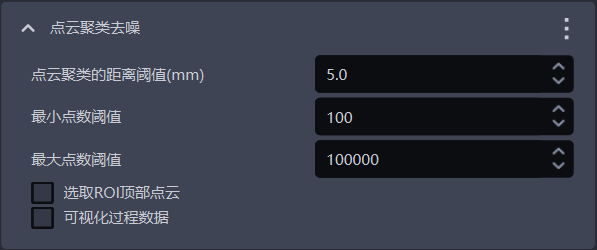

2.1.1 Point Cloud Clustering Denoising

- Function

Remove noise by Point Cloud clustering

- Application Scenario

Applicable when there is a large amount of noise in the instance Point Cloud

- Parameter Description

| Parameter name | Description | Default value | Value range | Unit | Tuning suggestion |

|---|---|---|---|---|---|

| Point Cloud clustering distance threshold (mm) | Determine whether Point Clouds in space belong to the same category. If the distance between Point Clouds is lower than this threshold, they are treated as the same category | 5 | [0.1, 1000] | mm | Generally does not need to be changed; it should be greater than the point spacing of the Target Object Point Cloud and smaller than the minimum distance between the Target Object Point Cloud and the noise Point Cloud |

| Minimum point count threshold | Point Cloud clusters with fewer points than this value are filtered out | 100 | [1,10000000] | / | Generally does not need to be changed; increase the minimum point count threshold according to the amount of noise in the instance Point Cloud |

| Maximum point count threshold | Point Cloud clusters with more points than this value are filtered out | 100000 | [1,10000000] | / | Generally does not need to be changed; if the number of Target Object Point Cloud points is greater than 100000, increase the maximum point count threshold |

| Select the top Point Cloud in the ROI | Select it to calculate and sort the average Z coordinate of Point Clouds of the same category in the ROI coordinate system, and retain the Point Cloud category with the largest average Z coordinate (top Point Cloud). Clear it to retain all eligible Point Clouds | Cleared | / | / | If the Target Object Point Cloud is above the noise Point Cloud, selecting this option retains the Target Object Point Cloud. If the Target Object Point Cloud is below the noise Point Cloud, select this option and also adjust the Z-axis of the ROI coordinate system to point downward to retain the Target Object Point Cloud |

| Visualize process data | Select it to save the denoised Point Cloud, which can be viewed in C:_data | Cleared | / | / | In debugging mode, select this if you need to save visualization data |

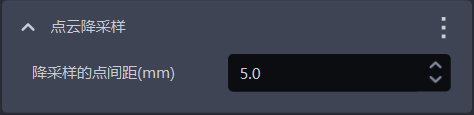
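
The parameters above map naturally onto Euclidean clustering. The sketch below is an illustration under that assumption, not the software's implementation: it uses a brute-force neighbor search that is only practical for small Point Clouds, and the function names are hypothetical.

```python
import math
from collections import deque


def euclidean_clusters(points, distance_threshold=5.0):
    """Group points so that each point is within the threshold of another point in its cluster."""
    unvisited = set(range(len(points)))
    clusters = []
    while unvisited:
        seed = unvisited.pop()
        queue = deque([seed])
        cluster = [seed]
        while queue:
            current = queue.popleft()
            neighbors = [i for i in unvisited
                         if math.dist(points[current], points[i]) < distance_threshold]
            for i in neighbors:
                unvisited.remove(i)
                queue.append(i)
                cluster.append(i)
        clusters.append([points[i] for i in cluster])
    return clusters


def denoise(points, distance_threshold=5.0, min_points=100,
            max_points=100000, select_top=False):
    """Drop clusters outside the point count limits; optionally keep only the top cluster."""
    kept = [c for c in euclidean_clusters(points, distance_threshold)
            if min_points <= len(c) <= max_points]
    if select_top and kept:
        # Assumes Z points upward: keep the cluster with the largest average Z.
        kept = [max(kept, key=lambda c: sum(p[2] for p in c) / len(c))]
    return kept


# A 10 x 10 surface patch with 1 mm spacing plus three far-away noise points.
surface = [(float(x), float(y), 0.0) for x in range(10) for y in range(10)]
noise = [(500.0, 500.0, 50.0), (501.0, 500.0, 50.0), (800.0, 0.0, 0.0)]
clean = denoise(surface + noise, distance_threshold=5.0, min_points=50)
```

The distance threshold (5 mm) is larger than the 1 mm point spacing and smaller than the gap to the noise, so the surface forms one cluster and the small noise clusters fall below the minimum point count.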

2.1.2 Point Cloud Downsampling

- Function

Sample the Point Cloud according to the specified point spacing to reduce the number of calculation points and increase model inference speed, but accuracy may decrease

- Application Scenario

Select Point Cloud Downsampling when the number of Point Cloud points in the actual scenario is too large.

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Point spacing for downsampling (mm) | Sample the Point Cloud according to the specified point spacing | 5.0 | [0.1, 1000] | mm |

- Tuning

The larger the value of point spacing for downsampling, the fewer Point Cloud points remain after downsampling, so Pick Point calculation becomes faster, but accuracy may decrease

The smaller the value of point spacing for downsampling, the more Point Cloud points remain after downsampling, so Pick Point calculation becomes slower, but accuracy improves

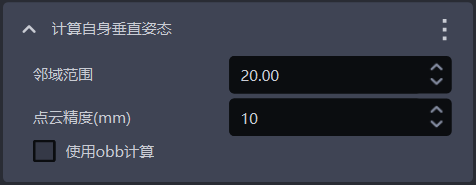
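
The trade-off between point spacing and point count can be seen in a small voxel-grid sketch. Voxel-grid averaging is one common way to downsample by spacing and is an assumption here; the manual does not state which method the software uses, and the function name is hypothetical.

```python
import math
from collections import defaultdict


def voxel_downsample(points, spacing=5.0):
    """Replace all points that fall into the same cubic cell by their centroid."""
    cells = defaultdict(list)
    for point in points:
        cell = tuple(math.floor(c / spacing) for c in point)
        cells[cell].append(point)
    return [tuple(sum(axis) / len(group) for axis in zip(*group))
            for group in cells.values()]


# 100 points along a line with 1 mm spacing.
line = [(float(x), 0.0, 0.0) for x in range(100)]
coarse = voxel_downsample(line, spacing=10.0)
fine = voxel_downsample(line, spacing=5.0)
```

Doubling the spacing from 5 mm to 10 mm halves the number of remaining points in this example, which is the speed versus accuracy trade-off described in the tuning notes.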

2.1.3 Calculate Its Own Vertical Pose

- Function

Calculate the pose of a tilted bag in the vertical direction

- Application Scenario

Applicable to depalletizing scenarios where bags are placed in a tilted manner

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Neighborhood range | Neighborhood range of the center point on the bag surface, that is, the distance from the center point to the edge. This value affects the processing and analysis of the Point Cloud around that center point | 20.00 | [0, 100] | / |

| Point Cloud precision (mm) | Distance between adjacent points in the 3D Point Cloud image collected by the Camera | 10 | [1, 1000] | mm |

| Use OBB for calculation | Select it to use the z vector of the Point Cloud OBB (oriented bounding box) as the vertical direction of the bag itself. Otherwise, traverse the Point Cloud within the neighborhood range of the center point and choose the largest axis in the projected axes as the vertical direction of the bag itself | Cleared | / | / |

- Example

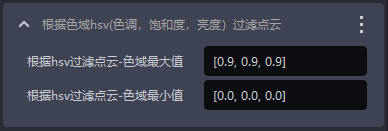

2.1.4 Filter Point Clouds According to HSV Color Range (Hue, Saturation, Value)

- Function

Filter the Point Cloud according to hue, saturation, and value in the Point Cloud image to select Point Cloud regions matching the target range

- Parameter Description

| Parameter name | Description | Default value | Value range |

|---|---|---|---|

| Filter depth by HSV - maximum color range value | Maximum color value for filtering the Point Cloud | [0.9,0.9,0.9] | [[0,0,0],[1,1,1]] |

| Filter depth by HSV - minimum color range value | Minimum color value for filtering the Point Cloud | [0.0,0.0,0.0] | [[0,0,0],[1,1,1]] |
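
A minimal sketch of range filtering in HSV space, using Python's standard `colorsys` module. It assumes each point carries an RGB color with components in [0, 1] and that points inside the range are the ones retained; the function name and data layout are hypothetical.

```python
import colorsys


def filter_by_hsv(colored_points, hsv_min=(0.0, 0.0, 0.0), hsv_max=(0.9, 0.9, 0.9)):
    """Keep points whose hue, saturation, and value all lie inside [hsv_min, hsv_max].

    colored_points is a list of ((x, y, z), (r, g, b)) pairs.
    """
    kept = []
    for xyz, rgb in colored_points:
        hsv = colorsys.rgb_to_hsv(*rgb)
        if all(low <= c <= high for c, low, high in zip(hsv, hsv_min, hsv_max)):
            kept.append((xyz, rgb))
    return kept


colored = [
    ((0.0, 0.0, 0.0), (0.5, 0.4, 0.3)),  # brownish point, value 0.5
    ((1.0, 0.0, 0.0), (1.0, 1.0, 1.0)),  # pure white point, value 1.0
]
kept = filter_by_hsv(colored)
```

With the default maximum of 0.9 for the value channel, the white point is excluded, which is how overexposed or reflective regions could be removed.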

Filter Point Clouds by Three-Channel Color

- Function

Filter the Point Cloud by three-channel color to select Point Cloud regions matching the target range

- Parameter Description

| Parameter name | Description | Default value | Value range |

|---|---|---|---|

| Filter Point Cloud by three-channel color - maximum color value | Maximum color value for filtering the Point Cloud | [0.9,0.9,0.9] | [[0,0,0],[1,1,1]] |

| Filter depth by three-channel color - minimum color value | Minimum color value for filtering the Point Cloud | [0.0,0.0,0.0] | [[0,0,0],[1,1,1]] |

2.1.5 Select Point Clouds Inside the ROI Area

- Function

Select the Point Cloud within the ROI 3D area from the instance Point Cloud. This default function cannot be deleted

2.1.6 Optimize the Mask According to the Point Cloud

- Function

Based on the Point Cloud within ROI 3D, remove Point Cloud data in the Mask that is not within ROI 3D to improve Mask precision

- Application Scenario

Applicable to depalletizing scenarios where the recognized Mask extends into areas outside ROI 3D

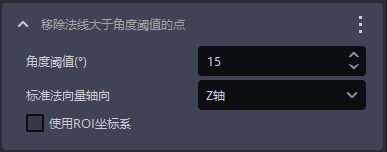

2.1.7 Remove Points Whose Normals Exceed the Angle Threshold

- Function

Remove Point Cloud points whose angle between the Normal and the reference Normal axis direction is greater than the Normal angle threshold

- Application Scenario

Applicable to loading/unloading of planar Target Objects (materials isolated from each other)

- Parameter Description

| Parameter name | Description | Default value | Value range | Unit |

|---|---|---|---|---|

| Angle threshold | Point Cloud points with angles greater than this threshold are considered to belong to different instances | 15 | [-360, 360] | |

| Reference Normal axis direction | The angle formed between the Point Cloud Normal and the reference Normal axis direction | Z-axis | X/Y/Z-axis | / |

| Whether to use the ROI coordinate system | Select it to calculate the angle between the Normal and the axes of the ROI coordinate system; clear it to calculate the angle between the Normal and the axes of the Camera coordinate system | Cleared | / | / |
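
The angle test can be sketched as follows. It assumes each point already has a Normal expressed in the chosen coordinate system and that angles are in degrees; the function names are hypothetical, and how the software treats Normals pointing in the opposite direction is not covered.

```python
import math

AXES = {"X": (1.0, 0.0, 0.0), "Y": (0.0, 1.0, 0.0), "Z": (0.0, 0.0, 1.0)}


def angle_to_axis(normal, axis="Z"):
    """Angle in degrees between a nonzero Normal vector and the reference axis."""
    reference = AXES[axis]
    length = math.sqrt(sum(c * c for c in normal))
    cosine = sum(n * a for n, a in zip(normal, reference)) / length
    cosine = max(-1.0, min(1.0, cosine))  # guard against rounding outside [-1, 1]
    return math.degrees(math.acos(cosine))


def filter_by_normal(points_with_normals, angle_threshold=15.0, axis="Z"):
    """Keep (point, normal) pairs whose Normal is within the threshold of the axis."""
    return [(p, n) for p, n in points_with_normals
            if angle_to_axis(n, axis) <= angle_threshold]


tilted = (0.0, math.sin(math.radians(10)), math.cos(math.radians(10)))
samples = [
    ((0, 0, 0), (0.0, 0.0, 1.0)),  # Normal along Z
    ((1, 0, 0), tilted),           # Normal tilted 10 degrees from Z
    ((2, 0, 0), (1.0, 0.0, 0.0)),  # Normal along X, for example a side face
]
top_face = filter_by_normal(samples)
```

With the default threshold of 15, the point on the side face is removed while the slightly tilted point is kept.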

2.1.8 Point Cloud Plane Segmentation

- Function

When fitting a plane to the Point Cloud, points whose distance to the plane is lower than the reference distance are considered points on the plane; otherwise, they are considered points outside the plane. The fitted plane can be retained or removed.

- Application Scenario

Applicable to single-carton depalletizing scenarios, but not to single-bag depalletizing scenarios

- Parameter Description

Default value: 3.000

Value range: [0.001, 10000]

Unit: mm

- Tuning

The larger the reference distance, the thicker the fitted plane; the smaller the reference distance, the thinner the fitted plane

Select Remove plane to remove the plane with the largest number of Point Cloud points; clear it to retain the plane with the largest number of Point Cloud points

- Example

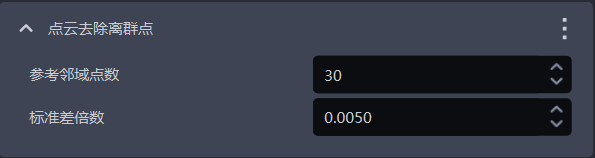

2.1.9 Remove Point Cloud Outliers

- Function

Identify and remove outlier noise within the neighboring point region (that is, the neighborhood) of each point in the Point Cloud

- Application Scenario

Applicable when the object Point Cloud contains a large amount of outlier noise

- Parameter Description

| Parameter | Description | Default value | Parameter range | Tuning suggestion |

|---|---|---|---|---|

| Reference neighborhood point count | The number of neighboring points for each point in the Point Cloud, that is, the neighborhood size. For dense Point Clouds, even a small neighborhood is sufficient to reflect the features of the object, so a smaller value can be used. For sparse Point Clouds, a larger neighborhood is needed to reflect the object features, so a larger value should be used. | 30 | [1, 10000000] | |

| Standard deviation multiplier | Used to identify outliers. If the deviation between a point's coordinates and the average coordinates of the object Point Cloud exceeds the standard deviation multiplier, the point is considered an outlier. The smaller the value, the more points are considered outliers and removed, but important object features may also be removed by mistake. The larger the value, the fewer points are considered outliers and removed, but some outliers may be retained and affect image recognition accuracy. | 0.005 | [0.0001, 2] | If the Point Cloud becomes too sparse after Remove Point Cloud Outliers, increase the standard deviation multiplier |

- Example

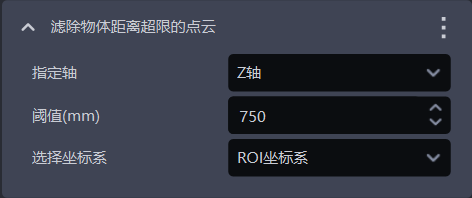
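
The two parameters resemble those of the widely used statistical outlier removal filter, in which a point is removed when its mean distance to its k nearest neighbors exceeds the global mean plus a multiple of the standard deviation. The sketch below implements that common formulation as an assumption; the software's exact criterion may differ, and the function name is hypothetical.

```python
import math
import statistics


def remove_outliers(points, neighbor_count=30, std_multiplier=0.005):
    """Drop points whose mean k-nearest-neighbor distance is unusually large."""
    k = min(neighbor_count, len(points) - 1)
    if k < 1:
        return list(points)
    mean_knn = []
    for i, p in enumerate(points):
        # Brute-force neighbor search; fine for a small illustration only.
        distances = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_knn.append(sum(distances[:k]) / k)
    limit = statistics.fmean(mean_knn) + std_multiplier * statistics.pstdev(mean_knn)
    return [p for p, d in zip(points, mean_knn) if d <= limit]


# A 5 x 5 patch with 1 mm spacing plus one isolated point far away.
patch = [(float(x), float(y), 0.0) for x in range(5) for y in range(5)]
stray = (100.0, 100.0, 100.0)
cleaned = remove_outliers(patch + [stray], neighbor_count=4, std_multiplier=1.0)
```

In this formulation a smaller multiplier lowers the limit and removes more points, which matches the tuning advice to increase the multiplier when the Point Cloud becomes too sparse.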

2.1.10 Filter Out Point Clouds Whose Object Distance Exceeds the Limit

- Function

Filter out Point Clouds in the specified direction to remove noise and improve the accuracy of Pick Point calculation

- Application Scenario

Usually used in single-bag depalletizing scenarios

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit | Tuning suggestion |

|---|---|---|---|---|---|

| Specified axis | Specified axis of the Point Cloud, used to filter out Point Clouds in the specified direction | Z-axis | X/Y/Z-axis | / | Specified axis generally does not need to be changed |

| Threshold (mm) | Along the specified axis, if the distance between the lower-layer Point Cloud and the Target Object Point Cloud is greater than this threshold, the lower-layer Point Cloud is filtered out; if the distance is less than this threshold, the lower-layer Point Cloud is retained | 750 | [0, 1000] | mm | Adjust the threshold according to the actual scenario. The larger the threshold, the fewer Point Clouds are filtered out; the smaller the threshold, the more Point Clouds are filtered out |

| Select coordinate system | Filter out Point Clouds in the selected coordinate system | ROI coordinate system | Camera coordinate system; ROI coordinate system; object's own coordinate system | / |

- Example

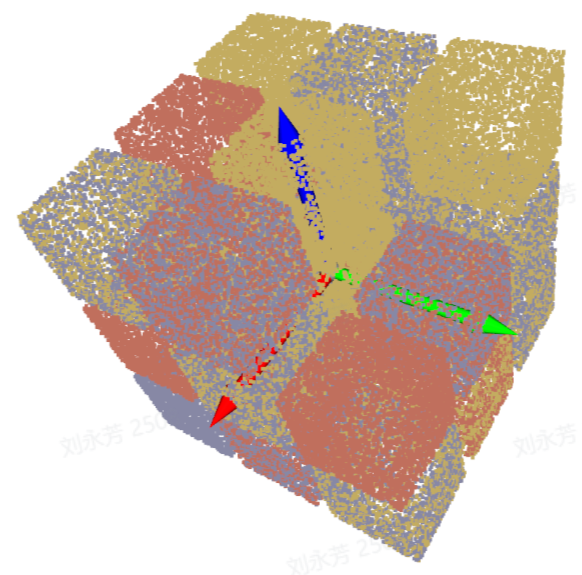

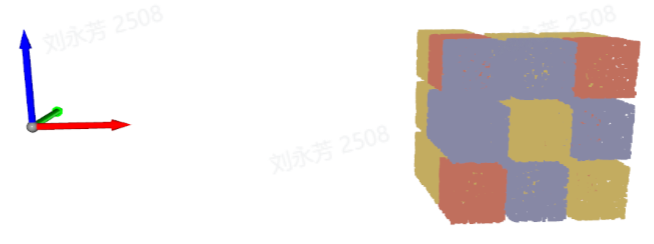

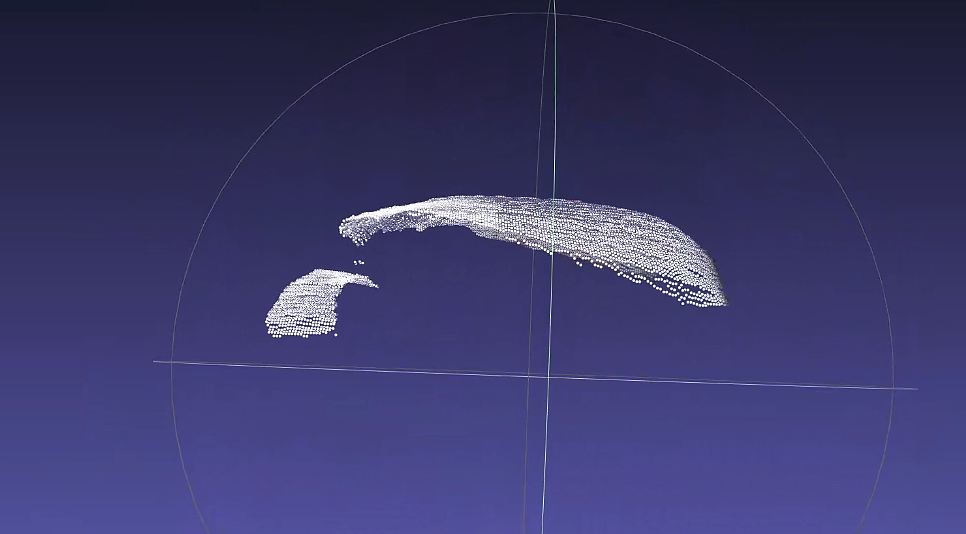

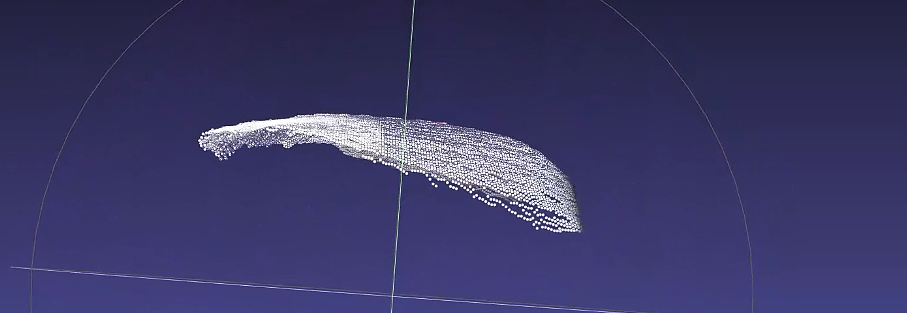

The Point Cloud containing lower-layer Point Cloud data is shown below (left). After selecting Filter Out Point Clouds Whose Object Distance Exceeds the Limit, the Point Cloud after filtering out the lower-layer Point Cloud is shown below (right)

As shown below, comparing the effect when the threshold is 100 with the detection result when the threshold is 400, the larger the threshold, the fewer Point Clouds are filtered out.

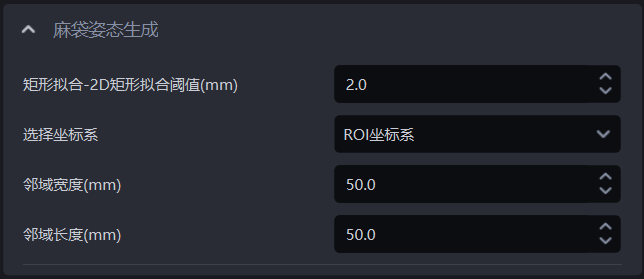

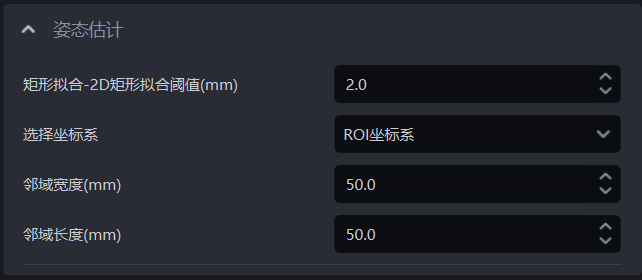

2.2 Bag Pose Generation / Pose Estimation

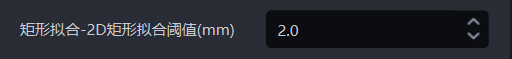

2.2.1 Rectangle Fitting - 2D Rectangle Fitting Threshold (mm)

- Function

The model calculates an ideal rectangle based on the instance Point Cloud. If the maximum distance between the ideal rectangle and the Target Object contour does not exceed the fitting threshold, rectangle fitting succeeds. Points whose distance is smaller than the fitting threshold are fitted into the rectangle; if the distance exceeds the fitting threshold, rectangle fitting fails.

- Application Scenario

Applicable to depalletizing scenarios

- Parameter Description

Default value: 2.0

Value range: [0,1000]

Unit: mm

- Tuning

Generally does not need to be adjusted. The Pick Point for a bag is generated at the contour center, so no rectangle fitting is required. The Pick Point for a carton is generated at the center of the fitted rectangle, and the fitting threshold for cartons is generally 2 mm

- Example

2.2.2 Select Coordinate System

- Function

Coordinate system used during Pick Point generation.

- Application Scenario

Applicable to depalletizing scenarios

- Parameter Description

Default value: ROI coordinate system

Value range: Camera coordinate system, ROI coordinate system, object's own coordinate system

- Tuning

The default is the ROI coordinate system, which usually does not need to be changed. In bag depalletizing scenarios with tilted stacking, select the object's own coordinate system, and also add Calculate Its Own Vertical Pose in the preprocessing of 3D Calculation

2.2.3 Neighborhood Width (mm)

- Function

Search for points within the width range near the Pick Point, and use points in the nearby neighborhood to adjust the Z coordinate value of the Pick Point, preventing abnormal noise points from affecting picking

- Application Scenario

Applicable to depalletizing scenarios; adjust when there are protrusions on the object surface

- Parameter Description

Default value: 50

Value range: [0,1000]

Unit: mm

- Tuning

The larger the value, the more accurate the Pick Point coordinates are; if the value is too large, the model may mistakenly use lower-layer or other objects to adjust the z coordinate value of the Pick Point

If the value is too small, the Pick Point coordinates are affected by noise points, reducing picking accuracy

If the object surface in the actual scenario has protrusions or depressions, increase the width to make the Pick Point more stable

If the object surface in the actual scenario is smooth, no change is needed. To improve model inference speed, the width can be reduced slightly

2.2.4 Neighborhood Length (mm)

- Function

Search for points within the length range near the Pick Point, and use points in the nearby neighborhood to adjust the Z coordinate value of the Pick Point, preventing abnormal noise points from affecting picking

- Application Scenario

Applicable to depalletizing scenarios; adjust when there are protrusions on the object surface

- Parameter Description

Default value: 50

Value range: [0,1000]

Unit: mm

- Tuning

The larger the value, the more accurate the Pick Point coordinates are; if the value is too large, the model may mistakenly use lower-layer or other objects to adjust the z coordinate value of the Pick Point

If the value is too small, the Pick Point coordinates are affected by noise points, reducing picking accuracy

If the surface of the Target Object in the actual scenario has protrusions or depressions, increase the length to make the Pick Point more stable

If the surface of the Target Object in the actual scenario is smooth, no change is needed. To improve model inference speed, the length can be reduced slightly

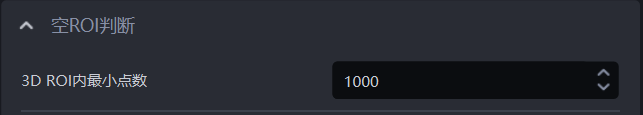
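
How a neighborhood can stabilize the Z value of a Pick Point is sketched below. Several details are assumptions made for illustration: the window is centered on the Pick Point, the length runs along X and the width along Y, and the median of the neighboring Z values is used. The manual does not state which statistic the software applies, and the function name is hypothetical.

```python
import statistics


def adjust_pick_z(pick_point, points, width=50.0, length=50.0):
    """Replace the Pick Point's Z by the median Z of points inside the length x width window."""
    px, py, pz = pick_point
    neighbor_z = [z for x, y, z in points
                  if abs(x - px) <= length / 2 and abs(y - py) <= width / 2]
    if not neighbor_z:
        return pick_point  # nothing nearby: leave the Pick Point unchanged
    return (px, py, statistics.median(neighbor_z))


# A flat surface at Z = 100 mm with a single noise spike at the Pick Point.
surface = [(float(x), float(y), 100.0)
           for x in range(-20, 21, 5) for y in range(-20, 21, 5)]
spike = (0.0, 0.0, 180.0)
adjusted = adjust_pick_z(spike, surface + [spike])
```

A window that is too large would start to include points from neighboring or lower-layer objects, which is the risk described in the tuning notes.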

2.3 Empty ROI Detection

- Function

Determine whether there are still Target Objects (Point Clouds) remaining inside ROI 3D. If the number of 3D points inside ROI 3D is less than this value, it indicates that no Target Object Point Cloud remains, and no Point Cloud is returned at this time

- Parameter Description

Default value: 1000

Value range: [0, 100000]

- Usage Procedure

Set the minimum point count threshold for ROI 3D. If the count is lower than this threshold, the Target Object Point Cloud in ROI 3D is insufficient, and it is determined that there is no Target Object in ROI 3D.

In Robot configuration, add a new vision status code to facilitate subsequent signal processing by the Robot.

3. Pick Point Processing

This section mainly explains the functions related to Pick Point filtering and adjustment and provides parameter tuning suggestions.

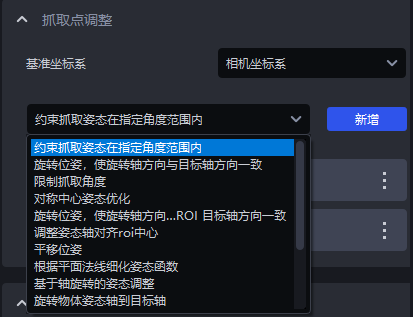

3.1 Pick Point Adjustment

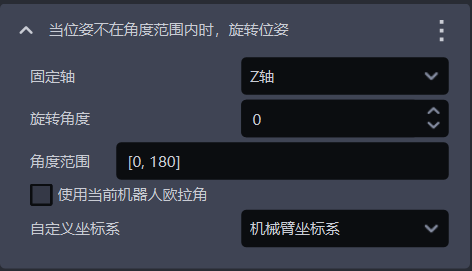

3.1.1 Rotate the Pose When It Is Outside the Angle Range

- Function Description

When the pose is outside the set angle range, rotate the pose counterclockwise around the fixed axis by a certain angle. If it is still outside the set angle range after rotation, a warning is issued.

- Application Scenario

Applicable only to depalletizing scenarios. This function keeps the Robot's picking direction stable and prevents the Tool from repeatedly rotating 180° during picking, thereby preventing cable twisting and similar abnormalities.

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Fixed axis | An axis of the grasping pose. Rotate the pose counterclockwise around this fixed axis | Z-axis | X/Y/Z-axis | / |

| Rotation angle | The angle by which the pose is rotated counterclockwise around the fixed axis. Adjust the rotation angle so that the grasping pose meets the angle range requirement | 0 | [-360,360] | ° |

| Angle range | Angle range of the grasping pose. Set the angle range according to factors such as material placement, Tool type, and Takt Time | [0,180] | [-180,180] | ° |

| Use the current Robot Euler angles | Pose calculation uses Euler angles “XYZ” by default. After selection, it uses the Euler angles configured for the current Robot, which keeps the pose consistent with the Robot teach pendant. | Cleared | / | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Robot Arm coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system | / |

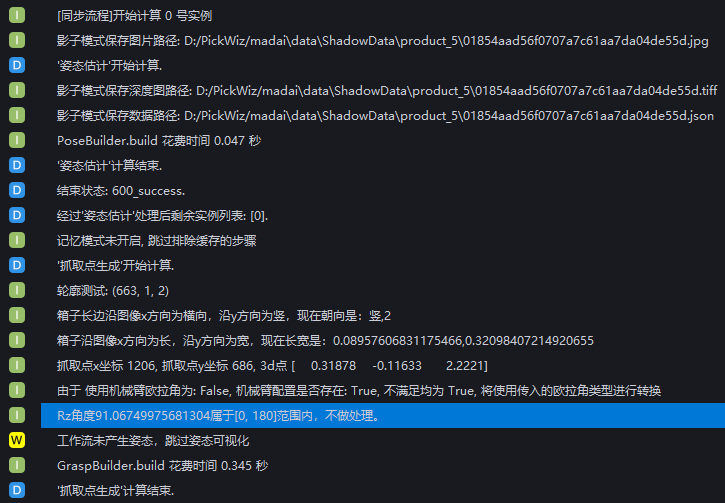

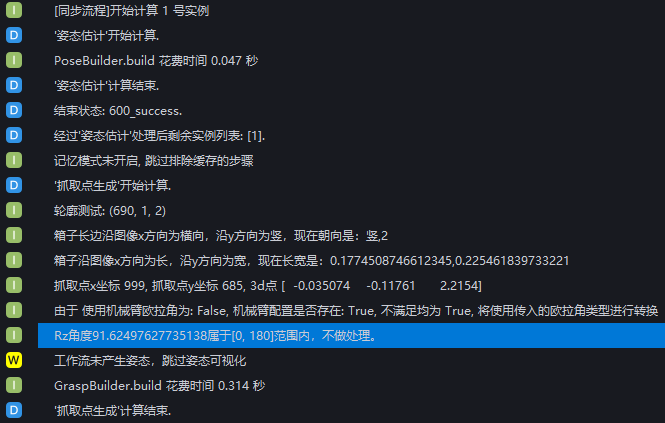

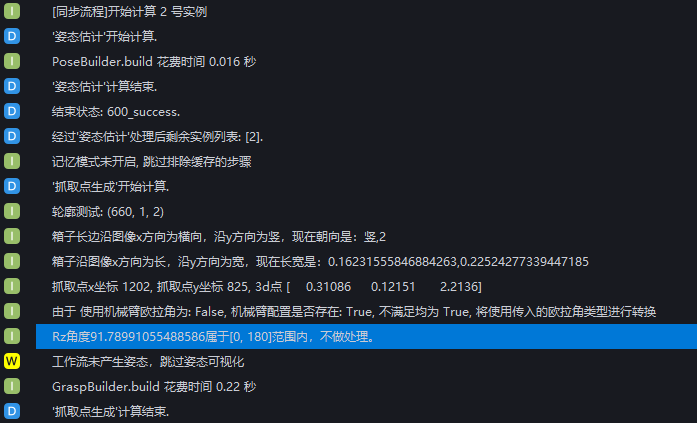

- Example

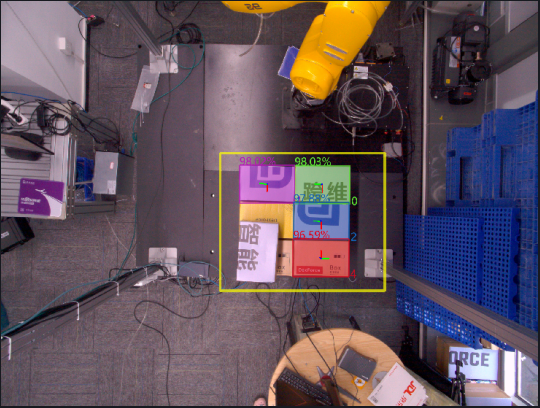

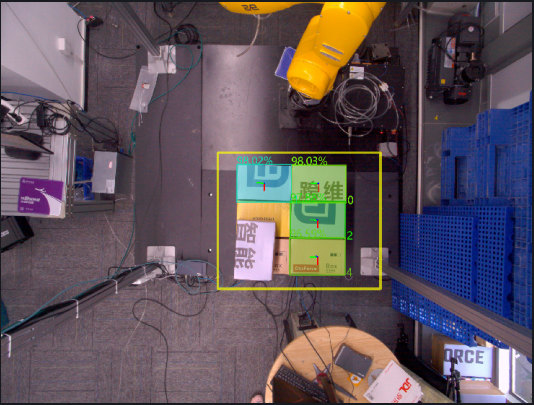

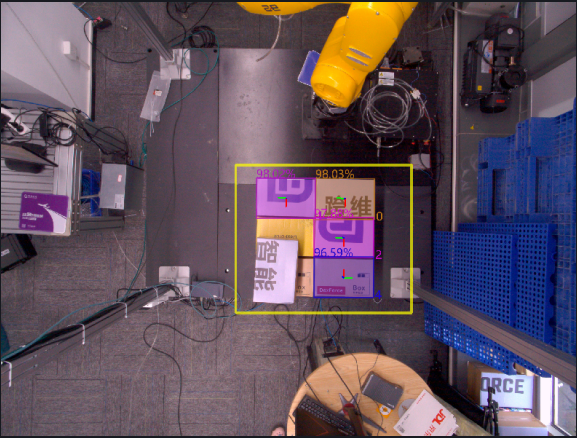

Without using this function, the generated Pick Points are shown below

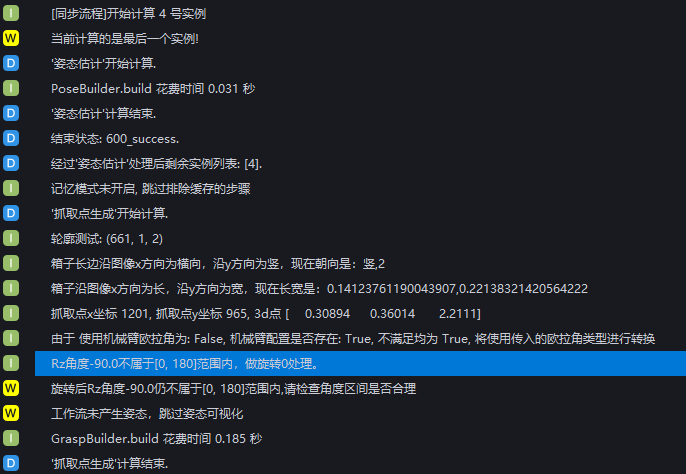

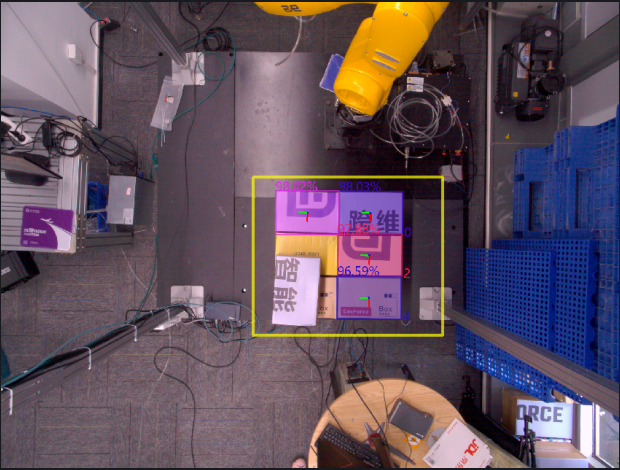

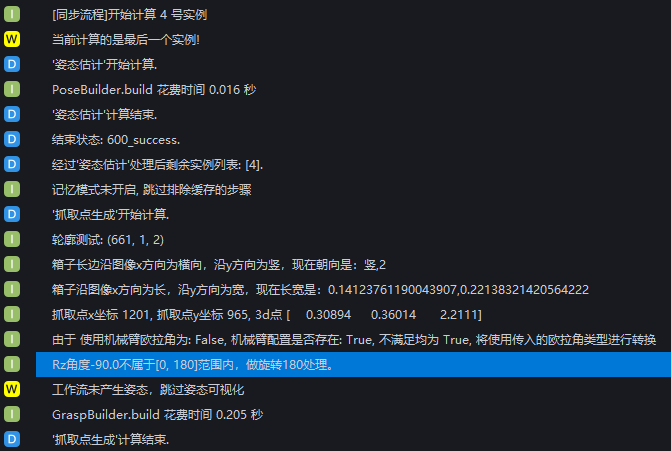

When using this function with the default values, the RZ angles of the grasping poses of instances 0, 1, and 2 are all within the angle range [0,180], so no processing is performed. The RZ angle of the grasping pose of instance 4 is -90°, which is outside the angle range [0,180], so the grasping pose of instance 4 is rotated around the fixed Z-axis by the default rotation angle of 0°, which leaves its RZ angle unchanged at -90°

If you want to adjust the RZ angle of the grasping pose of instance 4 into the angle range, set the rotation angle to 180 so that the grasping pose of instance 4 is rotated by 180° around the fixed Z-axis

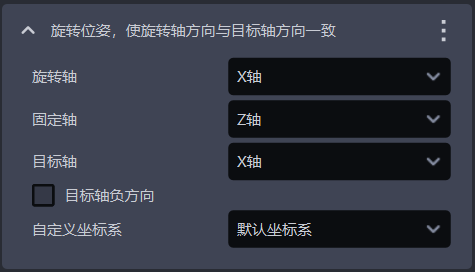
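
The arithmetic of the example can be sketched for the simplest case, where the fixed axis is Z and only the RZ angle matters. This is a simplified illustration in degrees, not a full Euler-angle pose rotation, and the function names are hypothetical.

```python
def wrap_degrees(angle):
    """Wrap an angle to the interval (-180, 180]."""
    wrapped = (angle + 180.0) % 360.0 - 180.0
    return 180.0 if wrapped == -180.0 else wrapped


def adjust_rz(rz, rotation_angle=0.0, angle_range=(0.0, 180.0)):
    """Rotate RZ by rotation_angle only when it is outside angle_range.

    Returns (new_rz, in_range); in_range is False when a warning would be needed.
    """
    low, high = angle_range
    if low <= rz <= high:
        return rz, True
    rotated = wrap_degrees(rz + rotation_angle)
    return rotated, low <= rotated <= high


default_result = adjust_rz(-90.0)                        # rotation angle 0
rotated_result = adjust_rz(-90.0, rotation_angle=180.0)  # rotation angle 180
```

With the default rotation angle of 0 the pose stays at -90° and remains outside the range, whereas a rotation angle of 180 brings it to 90°, inside [0,180].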

3.1.2 Rotate the Pose so that the Rotation Axis Direction Matches the Target Axis Direction

- Function Description

Rotate the pose once around the fixed axis, and make the direction of the rotation axis (determined according to the right-hand rule) consistent with the positive or negative direction of the target axis in the target coordinate system.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Rotation axis | An axis of the grasping pose. According to the right-hand rule, the grasping pose is rotated counterclockwise once around the fixed axis so that the direction of the rotation axis is consistent with the positive or negative direction of the target axis in the target coordinate system | X-axis | X/Y/Z-axis |

| Fixed axis | The grasping pose is rotated counterclockwise once around the fixed axis so that the direction of the rotation axis is consistent with the positive or negative direction of the target axis in the target coordinate system | Z-axis | X/Y/Z-axis |

| Target axis | An axis in the target coordinate system. The grasping pose is rotated counterclockwise once around the fixed axis so that the direction of the rotation axis is consistent with the positive or negative direction of the target axis in the target coordinate system | X-axis | X/Y/Z-axis |

| Negative direction of the target axis | Select it to make the direction of the rotation axis consistent with the negative direction of the target axis in the target coordinate system; clear it to make the direction of the rotation axis consistent with the positive direction of the target axis | Cleared | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system |

- Example

3.1.3 Rotate the Pose so that the Angle Between the Rotation Axis and the Target Axis Is Minimal

- Function Description

Rotate the pose around the fixed axis by 0°, 90°, 180°, and 270°, respectively, calculate the angle between the rotated rotation axis and the positive or negative direction of the target axis in the Camera coordinate system, and finally output the pose with the smallest angle after rotation.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Fixed axis | An axis of the grasping pose. Rotate the pose counterclockwise around this fixed axis | Z-axis | X/Y/Z-axis |

| Rotation axis | An axis of the grasping pose. When rotating the pose, calculate the angle between this rotation axis and the positive or negative direction of the target axis | X-axis | X/Y/Z-axis |

| Target axis | An axis of the Camera coordinate system. When rotating the pose, calculate the angle between the rotation axis and the positive or negative direction of this target axis | X-axis | X/Y/Z-axis |

| Negative direction of the target axis | Select it to calculate the angle between the rotation axis and the negative direction of the target axis; clear it to calculate the angle between the rotation axis and the positive direction of the target axis | Selected | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system |

- Example

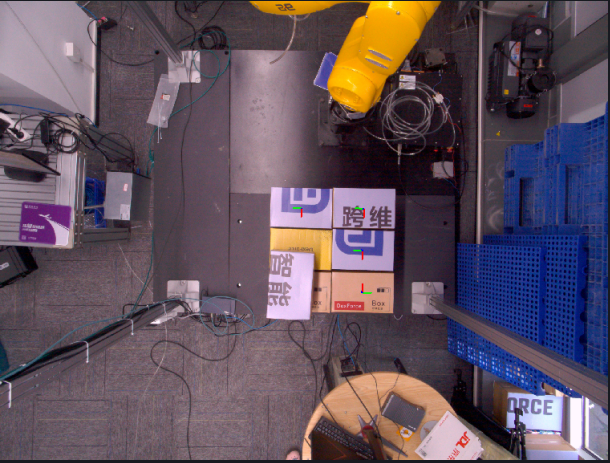
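The selection among the four candidate rotations can be illustrated with a short Python sketch. It is a hedged example under stated assumptions: the pose orientation is given as a 3x3 rotation matrix whose columns are the pose axes expressed in the Camera coordinate system, and all function names (`axis_rotation`, `best_quarter_turn`, and so on) are hypothetical.

```python
import math

AXIS_INDEX = {"x": 0, "y": 1, "z": 2}


def axis_rotation(axis, deg):
    """3x3 matrix for a counterclockwise rotation of deg degrees about one axis."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    if axis == "x":
        return [[1, 0, 0], [0, c, -s], [0, s, c]]
    if axis == "y":
        return [[c, 0, s], [0, 1, 0], [-s, 0, c]]
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]


def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]


def angle_between(u, v):
    """Angle in degrees between two unit vectors."""
    dot = sum(p * q for p, q in zip(u, v))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot))))


def best_quarter_turn(pose, fixed="z", rotating="x", target="x", negative=True):
    """Try 0/90/180/270 degrees about the pose's fixed axis and keep the
    candidate whose rotation axis is closest to the target direction."""
    goal = [0.0, 0.0, 0.0]
    goal[AXIS_INDEX[target]] = -1.0 if negative else 1.0
    best = None
    for deg in (0, 90, 180, 270):
        # Right-multiplying rotates the pose about its own fixed axis.
        candidate = matmul(pose, axis_rotation(fixed, deg))
        axis = [row[AXIS_INDEX[rotating]] for row in candidate]
        angle = angle_between(axis, goal)
        if best is None or angle < best[1]:
            best = (deg, angle, candidate)
    return best


# A pose turned 100 degrees about Z; target is the negative X direction.
deg, angle, _ = best_quarter_turn(axis_rotation("z", 100))
print(deg, round(angle, 3))
```

In this example the 90° candidate wins, because it leaves the pose X-axis only 10° away from the negative X direction of the target frame.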

3.1.4 Flip the Pose so that the Angle Between the Rotation Axis and the Target Axis Is Minimal

- Function Description

Rotate the grasping pose once around the fixed axis so that the angle formed between the rotation axis and the positive or negative direction of the target axis in the ROI coordinate system is an acute angle.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Fixed axis | An axis of the grasping pose. Rotate the pose counterclockwise around this fixed axis | Z-axis | X/Y/Z-axis |

| Rotation axis | An axis of the grasping pose. Rotate the pose so that the direction of this rotation axis is consistent with the positive or negative direction of the target axis | X-axis | X/Y/Z-axis |

| Target axis | An axis in the ROI coordinate system. Rotate the pose so that the direction of the rotation axis is consistent with the positive or negative direction of this target axis | X-axis | X/Y/Z-axis |

| Negative direction of the target axis | Select it to rotate the pose so that the direction of the rotation axis is consistent with the negative direction of the target axis; clear it to rotate the pose so that the direction of the rotation axis is consistent with the positive direction of the target axis | Selected | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system |

- Example

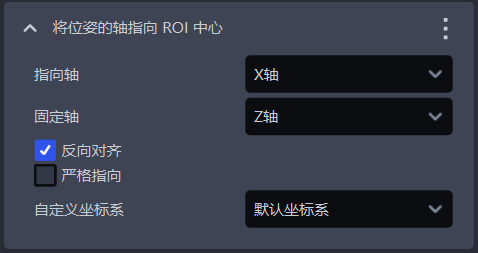
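A minimal Python sketch of the flip logic follows. It assumes that "rotating once" means a 180° turn about the fixed axis, which negates the other two pose axes, and that the pose axes are given as unit vectors in the ROI coordinate system. The function name `flip_if_obtuse` and the dictionary layout are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def flip_if_obtuse(pose_axes, target_dir, fixed="z", rotating="x"):
    """Flip the pose 180 degrees about its fixed axis when the rotation
    axis forms an obtuse angle with target_dir.

    pose_axes maps "x", "y", "z" to unit vectors in the target frame.
    Returns the (possibly flipped) axes and whether a flip happened.
    """
    if dot(pose_axes[rotating], target_dir) >= 0:
        return dict(pose_axes), False  # angle is already 90 degrees or less
    # A 180 degree turn about the fixed axis negates the two other axes.
    flipped = {
        name: vec if name == fixed else tuple(-c for c in vec)
        for name, vec in pose_axes.items()
    }
    return flipped, True


pose_axes = {"x": (1, 0, 0), "y": (0, 1, 0), "z": (0, 0, 1)}
# Target: negative X direction (the "negative direction" option selected).
flipped, changed = flip_if_obtuse(pose_axes, target_dir=(-1, 0, 0))
print(changed, flipped["x"])  # True (-1, 0, 0)
```

Because only the sign of a dot product is checked, the sketch never needs to compute the angle itself.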

3.1.5 Point an Axis of the Pose Toward the ROI Center

- Function Description

Rotate the grasping pose around the fixed axis so that the pointing axis of the grasping pose points toward the ROI center.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Pointing axis | The axis in the grasping pose that needs to be adjusted | X-axis | X/Y/Z-axis |

| Fixed axis | The axis that does not change during rotation | Z-axis | X/Y/Z-axis |

| Reverse alignment | Select it to align the pointing axis in the reverse direction toward the ROI center; clear it to align the pointing axis toward the ROI center | Selected | / |

| Strict pointing | Select it to force rotation of the grasping pose so that the pointing axis points toward the ROI center | Cleared | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system |
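As a rough illustration of pointing an axis toward the ROI center, the Python sketch below computes the yaw that the pointing axis needs. It assumes the fixed axis is the Z-axis and is parallel to the Z-axis of the frame in which the positions are given, so the problem reduces to the XY plane. The function name `yaw_toward_roi_center` is hypothetical, and the Strict pointing option is not modeled.

```python
import math


def yaw_toward_roi_center(position_xy, roi_center_xy, reverse=False):
    """Yaw in degrees that makes the pointing axis aim at the ROI center.

    With reverse=True the axis aims directly away from the center instead.
    """
    dx = roi_center_xy[0] - position_xy[0]
    dy = roi_center_xy[1] - position_xy[1]
    if dx == 0 and dy == 0:
        raise ValueError("pose is at the ROI center; direction is undefined")
    yaw = math.degrees(math.atan2(dy, dx))
    if reverse:
        # Opposite direction, wrapped into [-180, 180).
        yaw = (yaw + 360.0) % 360.0 - 180.0
    return yaw


# A Pick Point 100 mm from the ROI center along the negative Y direction.
print(yaw_toward_roi_center((0.0, -100.0), (0.0, 0.0)))                # 90.0
print(yaw_toward_roi_center((0.0, -100.0), (0.0, 0.0), reverse=True))  # -90.0
```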

- Example

3.1.6 Rotate the Pose so that the Z-Axis Direction Matches the Z-Axis of the Target Coordinate System

- Function Description

Rotate the pose so that the Z-axis direction of the pose is aligned with the Z-axis of the target coordinate system

- Application Scenario

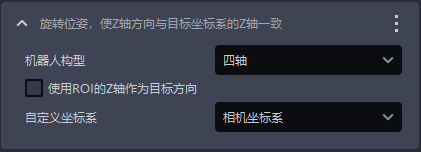

This function is normally used by default only in depalletizing scenarios and cannot be deleted. It is required so that the Z-axis of the grasping pose is perpendicular to the Z-axis of the ROI coordinate system (four-axis) or consistent with the surface direction of the Target Object (six-axis).

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Robot configuration | Set according to the on-site Robot configuration. Four-axis or six-axis can be selected. If a six-axis Robot is actually used as a four-axis Robot, it should be set to four-axis | four-axis | four-axis / six-axis |

| Use ROI Z-axis as the target direction | When the Robot configuration is set to four-axis, selecting this option rotates the pose around the X-axis so that the Z-axis direction of the rotated pose is consistent with the positive direction of the ROI Z-axis; clearing it rotates the pose around the X-axis so that the Z-axis direction of the rotated pose is consistent with the positive Z-axis direction of the Camera coordinate system. When the Robot configuration is set to six-axis, regardless of whether this option is selected, the pose is rotated around the X-axis so that the Z-axis direction of the rotated pose is consistent with the positive Z-axis direction of the object's own coordinate system | Cleared | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Camera coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system |

- Example

3.1.7 Rotate the Pose Around a Fixed Axis

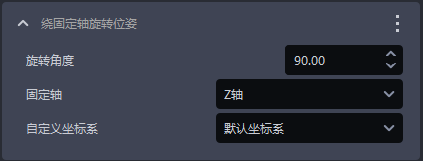

- Function Description

Rotate the pose around the fixed axis by a certain angle.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Rotation angle | The angle by which the pose is rotated counterclockwise around the fixed axis | 90 | [-360, 360] | ° |

| Fixed axis | An axis of the grasping pose. Rotate the pose counterclockwise around this fixed axis | Z-axis | X/Y/Z-axis | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system | / |

- Example

3.1.8 Translate the Pose

- Function Description

Move the pose by a certain distance along the translation axis.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Translation amount (mm) | The distance by which the pose moves along the translation axis. A positive value moves in the positive direction of the translation axis, and a negative value moves in the negative direction | 0 | [-1000, 1000] | mm |

| Translation axis | The direction in which the pose moves | X-axis | X/Y/Z-axis | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Robot Arm coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system | / |
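The translation itself is a single addition, as the Python sketch below shows. It assumes the translation axis is an axis of the selected coordinate system and that the pose is stored as a tuple `(x, y, z, rx, ry, rz)`. The function name `translate_pose` and the tuple layout are hypothetical.

```python
AXIS_INDEX = {"x": 0, "y": 1, "z": 2}


def translate_pose(pose, amount_mm, axis="x"):
    """Move a pose (x, y, z, rx, ry, rz) along one axis of its frame.

    A positive amount moves in the positive axis direction, a negative
    amount in the negative direction. The orientation is left untouched.
    """
    if not -1000 <= amount_mm <= 1000:
        raise ValueError("translation amount must be within [-1000, 1000] mm")
    moved = list(pose)
    moved[AXIS_INDEX[axis]] += amount_mm
    return tuple(moved)


pose = (500.0, 200.0, 300.0, 180.0, 0.0, 90.0)
print(translate_pose(pose, -20.0, axis="z"))
# (500.0, 200.0, 280.0, 180.0, 0.0, 90.0)
```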

- Example

3.1.9 Pick Point Teaching

- Function Description

Record the Pick Point coordinates generated by the software and the taught Pick Point coordinates for the current condition, and output the converted grasping pose by using the deviation between the two.

- Application Scenario

When the Pick Points generated by the vision system show an obvious, regular deviation, and the TCP accuracy of the Robot is limited or the TCP is difficult to calibrate, this function can map that regular deviation directly onto subsequent Pick Points, which avoids Robot TCP calibration.

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Vision pose | Pick coordinates from the detection result | / | / |

| X(mm) | X coordinate of the vision pose | 0.00 | ±10000000, meaning no limit. |

| Y(mm) | Y coordinate of the vision pose | 0.00 | ±10000000, meaning no limit. |

| Z(mm) | Z coordinate of the vision pose | 0.00 | ±10000000, meaning no limit. |

| RX(°) | X-axis rotation of the vision pose | 0.00 | ±180 |

| RY(°) | Y-axis rotation of the vision pose | 0.00 | ±180 |

| RZ(°) | Z-axis rotation of the vision pose | 0.00 | ±180 |

| Grasping pose | Manually taught Pick Point | / | / |

| X(mm) | X coordinate of the grasping pose | 0.00 | ±10000000, meaning no limit. |

| Y(mm) | Y coordinate of the grasping pose | 0.00 | ±10000000, meaning no limit. |

| Z(mm) | Z coordinate of the grasping pose | 0.00 | ±10000000, meaning no limit. |

| RX(°) | X-axis rotation of the grasping pose | 0.00 | ±180 |

| RY(°) | Y-axis rotation of the grasping pose | 0.00 | ±180 |

| RZ(°) | Z-axis rotation of the grasping pose | 0.00 | ±180 |

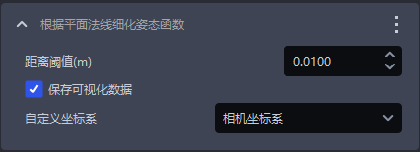
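One plausible way to turn the recorded pair of poses into a reusable correction is sketched below in Python. How the software actually computes the deviation is not stated in this guide, so this is an assumption: the offset is stored in the frame of the vision pose and replayed on later vision poses. For brevity the sketch handles only X, Y, Z, and RZ, and the names `learn_offset` and `apply_offset` are hypothetical.

```python
import math


def rotate_xy(x, y, deg):
    """Rotate the vector (x, y) counterclockwise by deg degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    return c * x - s * y, s * x + c * y


def learn_offset(vision, taught):
    """Express the taught pose relative to the vision pose.

    Poses are (x, y, z, rz) in mm and degrees.
    """
    dx, dy = rotate_xy(taught[0] - vision[0], taught[1] - vision[1], -vision[3])
    return dx, dy, taught[2] - vision[2], taught[3] - vision[3]


def apply_offset(vision, offset):
    """Apply a learned offset to a new vision pose."""
    dx, dy = rotate_xy(offset[0], offset[1], vision[3])
    return (vision[0] + dx, vision[1] + dy,
            vision[2] + offset[2], vision[3] + offset[3])


# Teaching step: one vision pose and the manually taught Pick Point.
offset = learn_offset(vision=(500.0, 200.0, 300.0, 0.0),
                      taught=(505.0, 198.0, 296.0, 2.0))

# Later detection, turned by 90 degrees: the same offset is replayed.
corrected = apply_offset((400.0, 100.0, 310.0, 90.0), offset)
print([round(v, 3) for v in corrected])  # [402.0, 105.0, 306.0, 92.0]
```

Storing the offset in the vision pose frame means the correction turns together with the Target Object, which suits a deviation caused by the Tool rather than by the Camera.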

3.1.10 Refine the Pose According to the Plane Normal Function

- Function Description

Correct the Target Object pose by fitting the Normal of a plane, so that the Z-axis direction of the Target Object pose stays consistent with the direction of the Target Object plane Normal

- Application Scenario

Use this function when the Target Object has a planar surface and the plane inclination angle deviates when the template Point Cloud is matched with the actual Point Cloud. It fine-tunes the Target Object plane and improves picking accuracy.

Not applicable to depalletizing scenarios.

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Distance threshold | Distance threshold for Point Cloud plane fitting | 10 | [-1000, 1000] | mm |

| Save visualization data | Select it to save visualization data under the historical data timestamp | Selected | / | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Camera coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system | / |

- Example

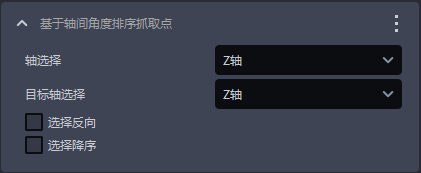
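The role of the distance threshold in plane fitting can be illustrated with a small consensus fit in Python. The fitting method used by the software is not documented here, so the exhaustive triple search below is a stand-in chosen for clarity, and it only suits tiny point sets. The function name `fit_plane_normal` is hypothetical.

```python
import itertools
import math


def sub(a, b):
    return tuple(p - q for p, q in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def fit_plane_normal(points, distance_threshold=10.0):
    """Try every point triple and keep the plane that has the most points
    within distance_threshold (mm). Returns (unit normal, inlier count)."""
    best_normal, best_count = None, 0
    for p1, p2, p3 in itertools.combinations(points, 3):
        n = cross(sub(p2, p1), sub(p3, p1))
        length = math.sqrt(dot(n, n))
        if length < 1e-9:
            continue  # collinear triple defines no plane
        n = tuple(c / length for c in n)
        count = sum(abs(dot(n, sub(p, p1))) <= distance_threshold
                    for p in points)
        if count > best_count:
            best_normal, best_count = n, count
    if best_normal is None:
        raise ValueError("no non-degenerate plane found")
    if best_normal[2] < 0:  # orient the normal toward +Z of the frame
        best_normal = tuple(-c for c in best_normal)
    return best_normal, best_count


# Nine points on a slightly tilted plane plus one far outlier.
cloud = [(x, y, 0.1 * x + 5.0) for x in (0, 100, 200) for y in (0, 100, 200)]
cloud.append((50.0, 50.0, 500.0))
normal, inliers = fit_plane_normal(cloud)
tilt = math.degrees(math.acos(normal[2]))
print(inliers, round(tilt, 2))  # 9 5.71
```

The returned normal would then serve as the new Z-axis direction of the Target Object pose; the outlier is ignored because it lies farther from the plane than the threshold.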

3.1.11 Sort Pick Points Based on the Angle Between Axes

- Function Description

Sort Pick Points according to the angle between a selected axis of the grasping pose and the target axis of the ROI coordinate system.

- Parameter Description

| Parameter | Description | Default value | Parameter range |

|---|---|---|---|

| Axis selection | A certain axis of the grasping pose | Z-axis | X/Y/Z-axis |

| Target axis selection | A certain axis of the ROI coordinate system | Z-axis | X/Y/Z-axis |

| Reverse selection | Select it to calculate the angle with the negative direction of the target axis; clear it to calculate the angle with the positive direction of the target axis | Cleared | / |

| Sort descending | Select it to sort Pick Points according to the angle from small to large; clear it to sort Pick Points according to the angle from large to small | Cleared | / |

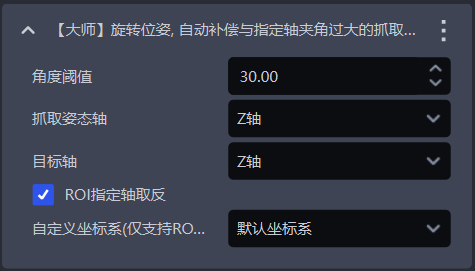
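The sorting rule can be sketched in Python as follows. The data layout (a dictionary with an `axis` vector per Pick Point) and the names `sort_pick_points`, `reverse_target`, and `largest_first` are hypothetical; in particular, verify in the software which checkbox state corresponds to which sort order.

```python
import math


def angle_deg(u, v):
    """Angle in degrees between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def sort_pick_points(picks, target_dir, reverse_target=False, largest_first=False):
    """Sort Pick Points by the angle between each pose axis and the target axis.

    reverse_target measures against the negative target direction instead.
    """
    goal = tuple(-c for c in target_dir) if reverse_target else tuple(target_dir)
    return sorted(picks, key=lambda p: angle_deg(p["axis"], goal),
                  reverse=largest_first)


def tilted_z(deg):
    """Unit vector tilted deg degrees away from +Z toward +X."""
    return (math.sin(math.radians(deg)), 0.0, math.cos(math.radians(deg)))


picks = [
    {"id": 0, "axis": tilted_z(25)},
    {"id": 1, "axis": tilted_z(5)},
    {"id": 2, "axis": tilted_z(12)},
]
roi_z = (0.0, 0.0, 1.0)
print([p["id"] for p in sort_pick_points(picks, roi_z)])  # [1, 2, 0]
```

Sorting from the smallest angle first places the Pick Points whose axis is best aligned with the ROI axis at the front of the list.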

3.1.12 [Advanced] Rotate the Pose to Automatically Compensate for Grasping Pose Angles That Form an Excessive Angle with the Specified Axis

- Function Description

Determine whether the angle formed between the specified axis of the grasping pose and the target axis is within the specified range. If it is not, rotate the grasping pose until the angle falls within the specified range.

- Application Scenario

Avoid collisions between the Robot Tool and the container

- Parameter Description

| Parameter | Description | Default value | Parameter range | Unit |

|---|---|---|---|---|

| Angle range | Adjust the grasping pose to within the angle range | 30 | [0, 180] | ° |

| Specified axis | A certain axis of the grasping pose. Adjust this axis so that it is within the angle range relative to the target axis of the ROI coordinate system | Z-axis | X/Y/Z-axis | / |

| Target axis | A certain axis of the ROI coordinate system, used to compare the angle range with the specified axis of the grasping pose | Z-axis | X/Y/Z-axis | / |

| Compare with the negative half-axis of the ROI | When cleared, compare the angle range with the positive direction of the target axis of the ROI coordinate system; when selected, compare with the negative direction of the target axis of the ROI coordinate system | Cleared | / | / |

| Custom coordinate system | The coordinate system in which the grasping pose is located | Default coordinate system | Default coordinate system; Camera coordinate system; ROI coordinate system; Robot Arm coordinate system | / |
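The compensation can be pictured as tilting the whole pose toward the target axis by exactly the excess angle. The Python sketch below does this with Rodrigues' rotation formula. It is an assumption about the behavior, not the documented algorithm: it treats the angle range as a maximum allowed angle, expects the pose axes as unit vectors in the ROI coordinate system, and uses the hypothetical names `rotate_about` and `compensate`.

```python
import math


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def angle_deg(u, v):
    """Angle in degrees between two unit vectors."""
    return math.degrees(math.acos(max(-1.0, min(1.0, dot(u, v)))))


def rotate_about(v, k, deg):
    """Rodrigues' formula: rotate v about the unit axis k by deg degrees."""
    c, s = math.cos(math.radians(deg)), math.sin(math.radians(deg))
    kxv, kv = cross(k, v), dot(k, v)
    return tuple(v[i] * c + kxv[i] * s + k[i] * kv * (1 - c) for i in range(3))


def compensate(pose_axes, target_dir, limit_deg=30.0, specified="z"):
    """Tilt the pose toward target_dir until the specified axis is within
    limit_deg of it. Poses already inside the limit are returned as is."""
    axis = pose_axes[specified]
    angle = angle_deg(axis, target_dir)
    if angle <= limit_deg:
        return dict(pose_axes)
    k = cross(axis, target_dir)  # rotating about k moves axis toward target
    length = math.sqrt(dot(k, k))
    if length < 1e-9:
        raise ValueError("axis is opposite to the target; direction is ambiguous")
    k = tuple(c / length for c in k)
    return {name: rotate_about(vec, k, angle - limit_deg)
            for name, vec in pose_axes.items()}


# Pose whose Z-axis is tilted 45 degrees away from the ROI Z-axis.
s45, c45 = math.sin(math.radians(45)), math.cos(math.radians(45))
pose = {"x": (c45, 0.0, -s45), "y": (0.0, 1.0, 0.0), "z": (s45, 0.0, c45)}
fixed = compensate(pose, target_dir=(0.0, 0.0, 1.0), limit_deg=30.0)
print(round(angle_deg(fixed["z"], (0.0, 0.0, 1.0)), 3))  # 30.0
```

All three pose axes are rotated together, so the corrected pose stays orthonormal and only the tilt changes.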