How to Use Mask Mode

1. Feature Overview

When training Deep Learning models, data augmentation can be used to increase data diversity and improve model robustness. Mask Mode is a special data augmentation method: it adds real textures to object surfaces in real or synthetic data and then trains the model on the result. This simulates different scenarios and interference conditions so that the model adapts better to real-world conditions.

Note

Models for sacks are trained using real data, while models for cartons are trained using synthetic data.
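To make the idea concrete, the following is a minimal Python sketch of texture-overlay augmentation in general. It is not PickWiz's implementation: the function `overlay_texture`, the nested-list image format, and the `(top, left, height, width)` box layout are all assumptions made for illustration.

```python
import random

def overlay_texture(image, texture, box, rng=None):
    """Return a copy of `image` with `texture` tiled into `box`.

    image, texture: 2D lists of pixel values (rows of ints).
    box: (top, left, height, width) region of the object surface.
    A random offset into the texture varies the result between calls.
    """
    rng = rng or random.Random()
    top, left, height, width = box
    tex_h, tex_w = len(texture), len(texture[0])
    off_y, off_x = rng.randrange(tex_h), rng.randrange(tex_w)
    out = [row[:] for row in image]  # copy so the input image stays unchanged
    for y in range(height):
        for x in range(width):
            # Wrap around the texture so it tiles across the whole box.
            out[top + y][left + x] = texture[(off_y + y) % tex_h][(off_x + x) % tex_w]
    return out

# Hypothetical 4x6 blank image and 2x2 texture patch.
image = [[0] * 6 for _ in range(4)]
texture = [[1, 2], [3, 4]]
augmented = overlay_texture(image, texture, box=(1, 2, 2, 3), rng=random.Random(0))
```

Calling the function repeatedly with different textures and offsets yields many variants of one source image, which is the general purpose of this kind of augmentation.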

2. Applicable Scenarios

In depalletizing scenarios, when the infeed is relatively ordered and the textures are complex or diverse (for example, medicine boxes), issues such as failed recognition, one bounding box (bbox) covering multiple instances, or a bbox not fully covering a single instance can easily occur.

Currently, Mask Mode training through PickWiz on the edge side (IPC) is supported only in depalletizing scenarios. Other scenarios are not yet supported.

3. Operating Procedure

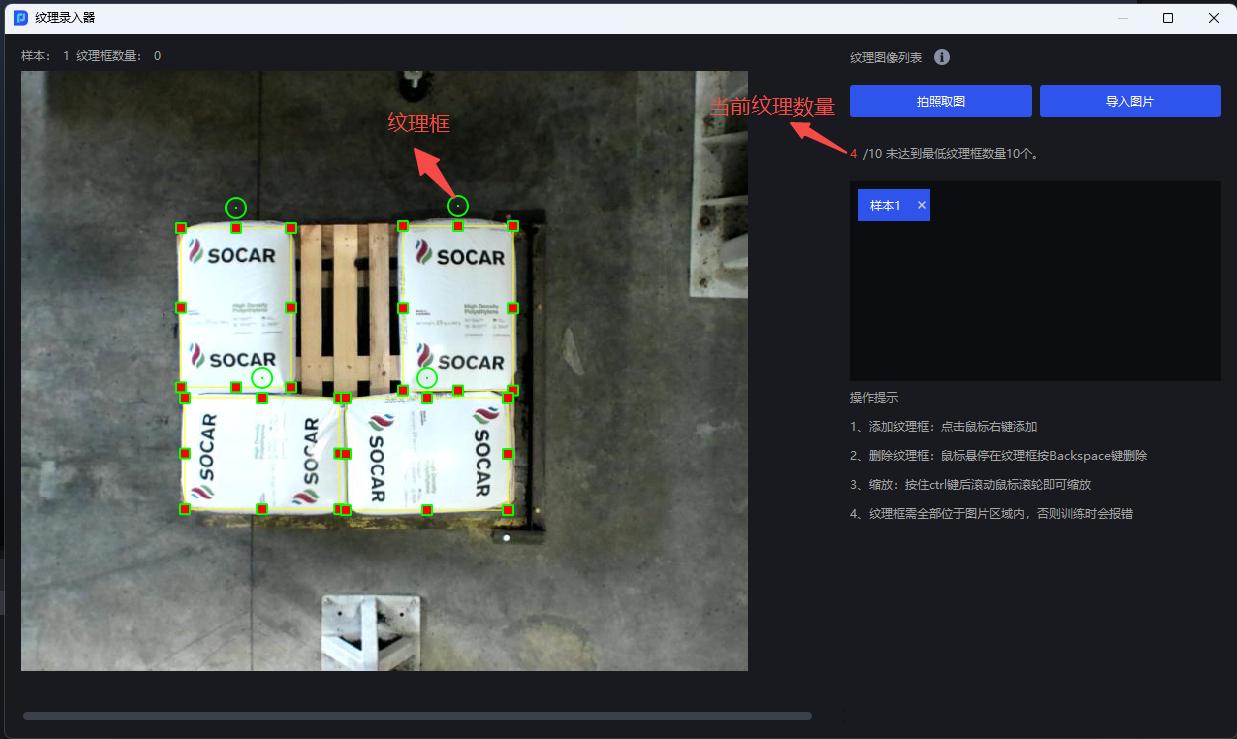

- On the Workpiece interface, click Enter Texture.

- Add images of the object to be picked. You can choose Capture Image or Import Image.

- Right-click the image of the object to be picked to add texture boxes, and draw a box around each object texture. At least 10 textures are required, and multiple texture boxes can be drawn on each image.

It is recommended to add 2-3 or more samples of each texture type in each image to help ensure effective Mask Mode training.

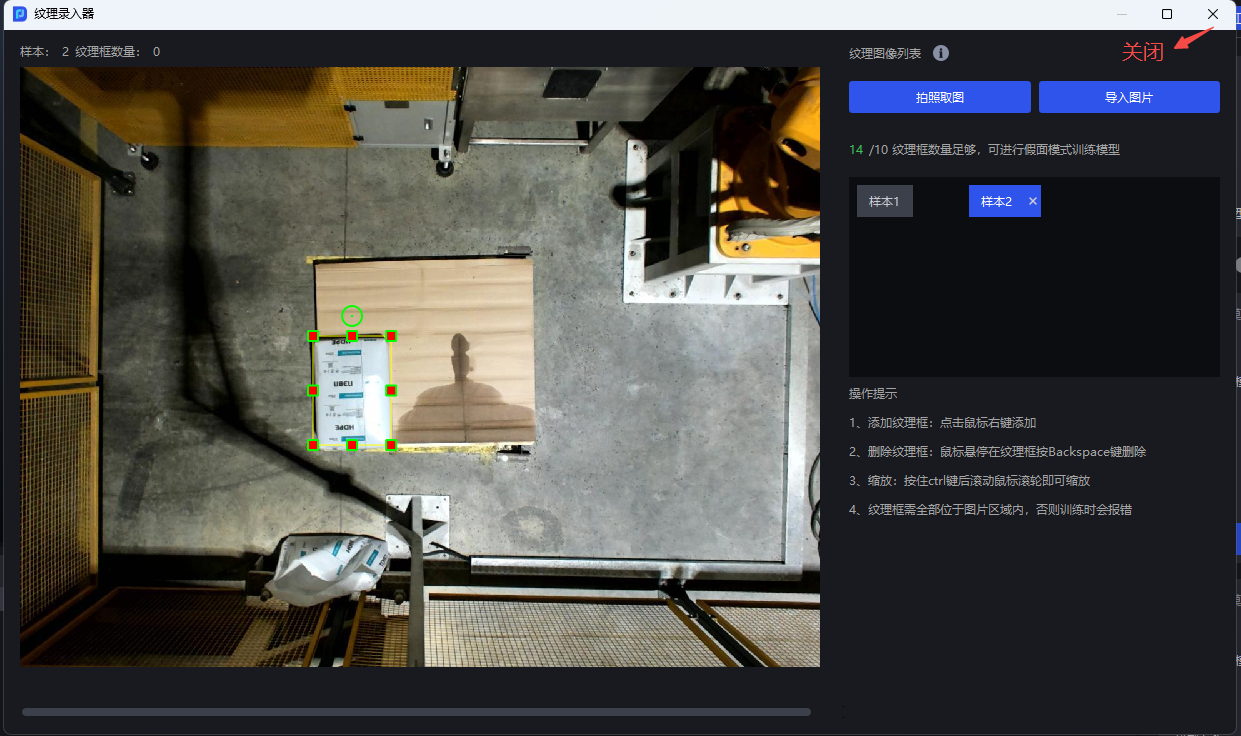

- After recording at least 10 textures of the object to be picked, close the texture recorder.

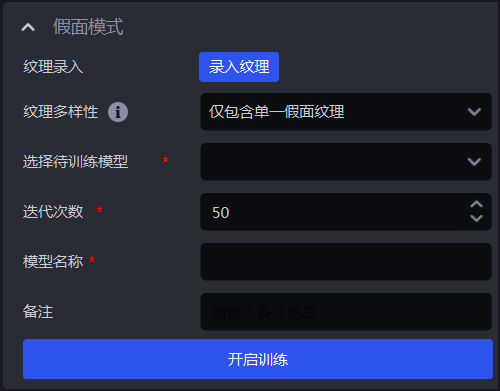

For Texture Diversity, you can select

Single Mask Texture OnlyorMultiple Mask Textures Included. If there is only one boxed texture type, selectSingle Mask Texture Only; if there are multiple boxed texture types, selectMultiple Mask Textures Included.For

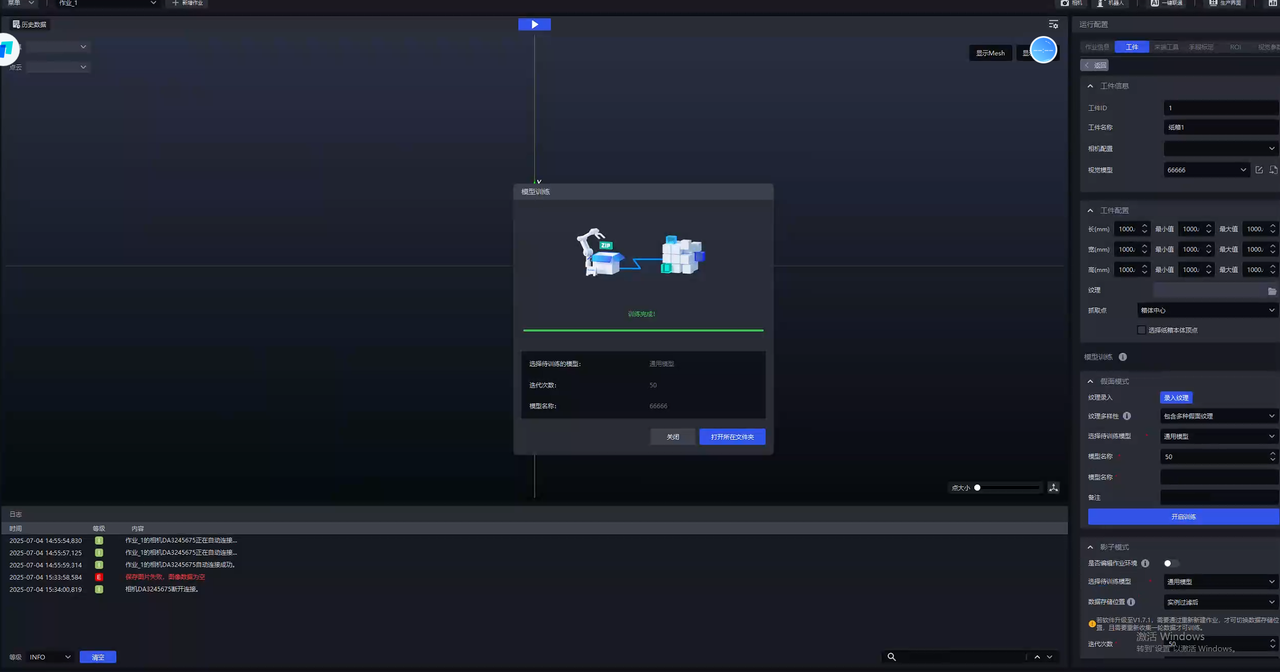

Select Model to Train, keepIterationsat the default value, setModel Name, and finally clickStart Training

- After training is complete, click Open Containing Folder and replace Workpiece Info - Vision Model with the newly trained model.
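The texture requirements in this procedure can be sketched as a small pre-training check. This is an illustrative Python sketch only: the `(image_name, texture_type)` record format and the function `check_texture_set` are hypothetical and not part of PickWiz, and the thresholds simply restate the numbers given in the steps above.

```python
from collections import Counter

MIN_TEXTURES = 10          # from the procedure: no fewer than 10 textures
MIN_SAMPLES_PER_TYPE = 2   # from the recommendation: 2-3 or more per type per image

def check_texture_set(boxes):
    """Check a list of (image_name, texture_type) pairs, one per texture box.

    Returns (problems, diversity), where `diversity` is the Texture Diversity
    option that matches the number of distinct texture types.
    """
    problems = []
    if len(boxes) < MIN_TEXTURES:
        problems.append(f"only {len(boxes)} texture boxes, need at least {MIN_TEXTURES}")
    # Count samples of each texture type within each image.
    for (image_name, texture_type), count in sorted(Counter(boxes).items()):
        if count < MIN_SAMPLES_PER_TYPE:
            problems.append(
                f"{image_name}: only {count} sample(s) of texture type '{texture_type}'"
            )
    types = {texture_type for _, texture_type in boxes}
    diversity = (
        "Single Mask Texture Only" if len(types) <= 1
        else "Multiple Mask Textures Included"
    )
    return problems, diversity

# Hypothetical example: one image with two texture types.
boxes = [("img1.png", "front")] * 6 + [("img1.png", "back")] * 4
problems, diversity = check_texture_set(boxes)
print(problems, diversity)
```

An empty `problems` list means the set meets the stated minimums; it does not guarantee that the trained model will perform well.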

4. Frequently Asked Questions

- Where can I view the texture data saved for each training session?

The texture dataset is saved under the corresponding project configuration, and the texture boxes for each image are saved separately in one directory

Note, however, that each time a new Mask Mode training session is started, the texture data exported by the previous training session is cleared.
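Because the exported texture data is cleared when a new session starts, it may be worth copying it elsewhere first. The following Python sketch shows one way to do that. The function `backup_texture_data` and both of its path arguments are hypothetical; this document does not specify the actual directory, so substitute the location used by your project configuration.

```python
import shutil
import time
from pathlib import Path

def backup_texture_data(texture_dir, backup_root):
    """Copy `texture_dir` into a new timestamped folder under `backup_root`.

    Both paths are supplied by the caller; no PickWiz path is assumed here.
    """
    src = Path(texture_dir)
    if not src.is_dir():
        raise FileNotFoundError(f"texture directory not found: {src}")
    stamp = time.strftime("%Y%m%d-%H%M%S")
    dest = Path(backup_root) / f"{src.name}-{stamp}"
    # copytree raises an error if dest already exists, so nothing is overwritten.
    shutil.copytree(src, dest)
    return dest
```

Run the backup before clicking Start Training for the next session, since that is when the previous export is cleared.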

- If the captured image of the object to be picked is distorted, can it still be used for Mask Mode training?

Slight distortion does not affect usage. When taking photos, however, keep the phone as parallel as possible to the target object.

- What is an appropriate size for the texture box?

The entire single object should be fully enclosed, while avoiding other interfering objects

- If new textures are added, does the model support iteration? Do the textures previously used for training need to be deleted?

Model iteration is supported. Textures previously used for training need to be deleted, and the initial model selected for training should be a model that has already been trained with Mask Mode

If all textures can be obtained at one time, it is recommended to use all textures together for training

- What should I do if the trained model performs poorly?

Check whether the detected material and the material used during Mask Mode training belong to the same category

On the Workpiece interface, check whether the Vision Model has been replaced with the latest trained Deep Learning model

On the Workpiece interface, click Enter Texture and check in the texture recorder whether the boxed textures cover all cases, such as the front and back sides of a medicine box

On the Vision Parameters interface, try adjusting the Scaling Ratio in 2D recognition - instance segmentation

If a single Scaling Ratio cannot meet the needs of the actual scenario (for example, in depalletizing scenarios, the optimal Scaling Ratio may differ between objects in the top layer and the bottom layer), select the Auto Enhancement function on the Vision Parameters interface and configure multiple Auto Enhancement - Scaling Ratios. For details, see the Depalletizing Vision Parameter Adjustment Guide.

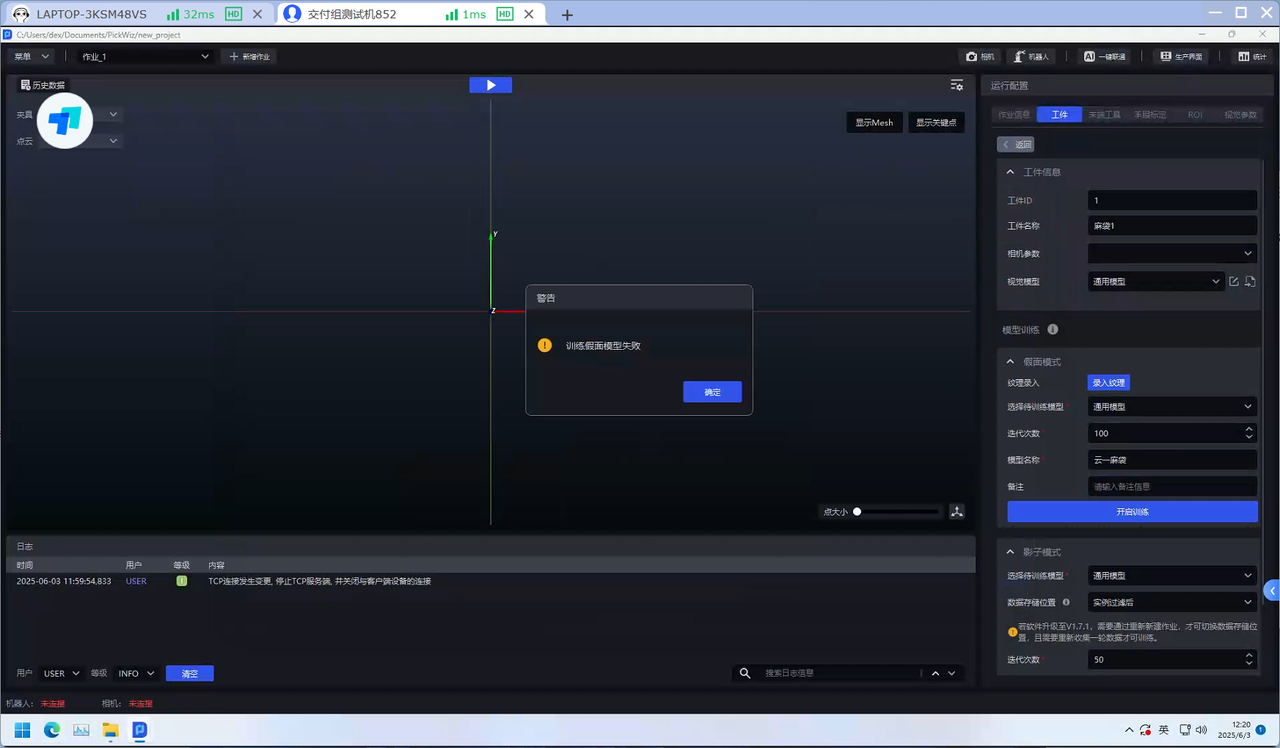

- Mask Mode training reports the error “Mask model training failed”. How can this be resolved?

The minimum GPU requirement for the IPC is an RTX 3050. With a lower-spec GPU, Mask Mode training may fail. If the error “Mask model training failed” is reported, replace the graphics card with one that meets this requirement.
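Before replacing hardware, it can help to confirm which GPU the IPC actually has. The sketch below lists GPU names using the NVIDIA `nvidia-smi` command-line tool, assuming it is installed with the driver and available on the PATH. The helper names `parse_gpu_names` and `gpu_names` are illustrative, and the script does not judge whether a card meets the minimum; compare the printed name against the requirement stated above.

```python
import subprocess

def parse_gpu_names(output):
    """Split nvidia-smi CSV output into a list of non-empty GPU names."""
    return [line.strip() for line in output.splitlines() if line.strip()]

def gpu_names():
    """Return GPU names reported by nvidia-smi, or [] if it cannot be run."""
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name", "--format=csv,noheader"],
            capture_output=True, text=True, check=True, timeout=10,
        )
    except (OSError, subprocess.SubprocessError):
        # nvidia-smi is missing, timed out, or exited with an error.
        return []
    return parse_gpu_names(result.stdout)

if __name__ == "__main__":
    names = gpu_names()
    print(names if names else "No NVIDIA GPU detected (or nvidia-smi unavailable)")
```

An empty result usually points to a missing driver or a non-NVIDIA card rather than to a problem with Mask Mode itself.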