Vision Parameter Adjustment Guide for Ordered Loading/Unloading of Planar Workpieces (Parallelized)

Getting Started

Background

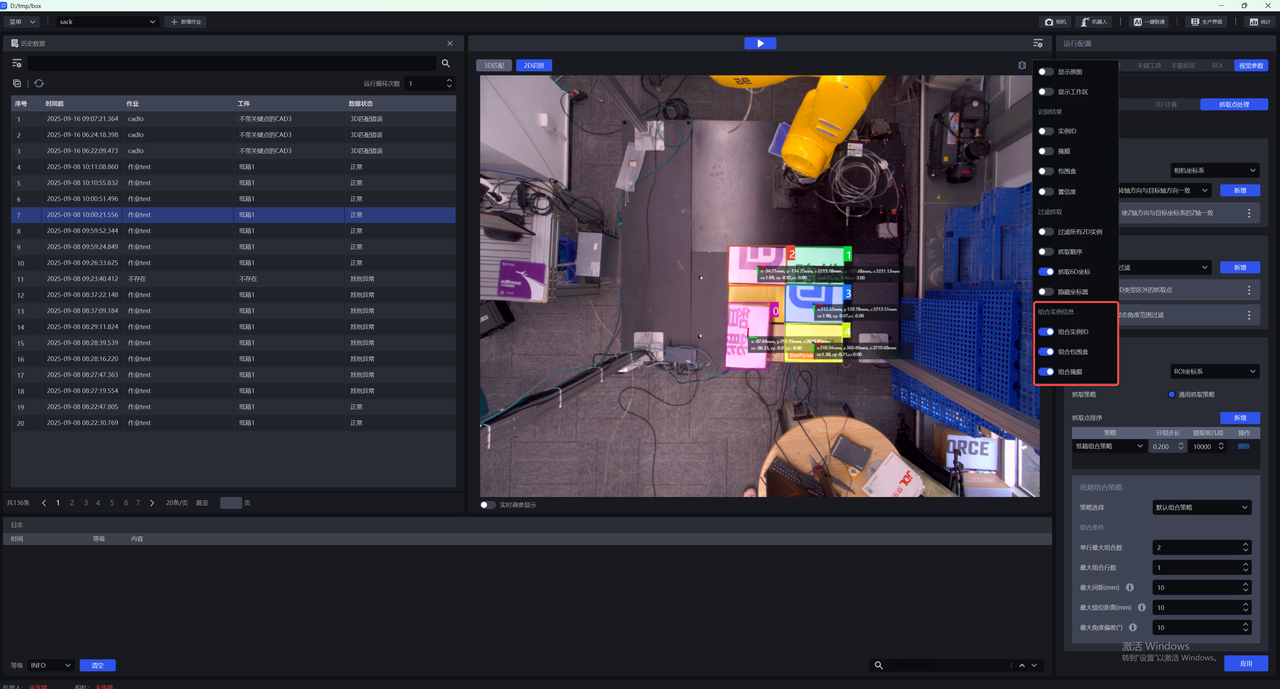

The "Planar Workpiece Ordered Loading/Unloading (Parallelized)" scene is a vision acceleration solution for ordered planar workpieces. Its key feature is a shorter vision computation cycle time: parallel computation reduces processing overhead, and grasp points are generated directly.

Unlike other scenes, where vision acceleration is enabled as a mode, the parallelized planar ordered scene is activated by creating a new job.

Job Scene Selection

In the current planar ordered scene, there are two available job scenes. A comparison is shown in the table below.

| Workflow | Planar Ordered Loading/Unloading | Planar Ordered Loading/Unloading (Parallelized) | Note |

|---|---|---|---|

| Accuracy | High — depends on point cloud density and scene point cloud consistency | Relatively high — depends on image features, point cloud density, and scene point cloud consistency | / |

| Speed | Relatively fast (single instance) | Fast (multiple instances) | Only the parallelized mode can output all valid results in a scene |

| Parameter tuning | Moderate — requires experience with matching parameter tuning | Simple — relatively straightforward and fixed (similar to general workpieces) | Some Advanced-class parameters in the parallelized mode will be hidden in future updates |

| Applicability | Strong — applicable to all planar workpieces | Relatively weak — current version supports inconsistent incoming workpiece orientations | Multi-template mode has been added for parallelized processing to support multiple incoming orientations |

| Template creation difficulty | Moderate — software-assisted | More complex — current version requires a script | Template creation will be integrated into PickWiz in a future parallelized update |

| Template characteristics | Reference | Select a complete scene instance point cloud that is relatively centered in the camera field of view | / |

Setting Up a Project

(1) Create a new Planar Workpiece Ordered Loading/Unloading (Parallelized) project (the project name and path can be customized; the project name must not contain Chinese characters)

Workpiece type: Planar workpiece (not round, cylindrical, or quadrilateral, and with minimal difference between front and back faces)

(2) Configure the camera and robot

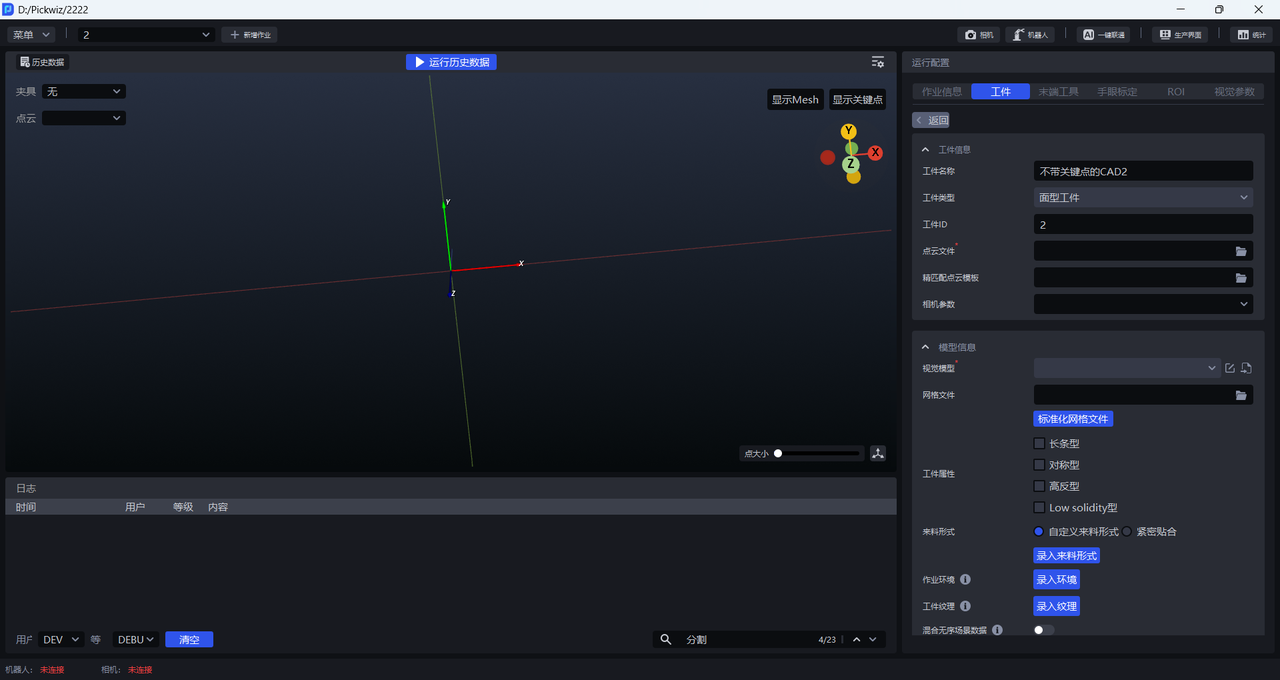

(3) Add a workpiece

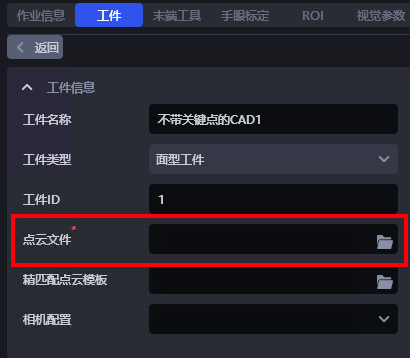

- Workpiece Information

The workpiece name can be customized. The workpiece type defaults to "Standard Workpiece" and cannot be changed. The workpiece ID can be customized and is used to automatically switch workpieces during robot grasping.

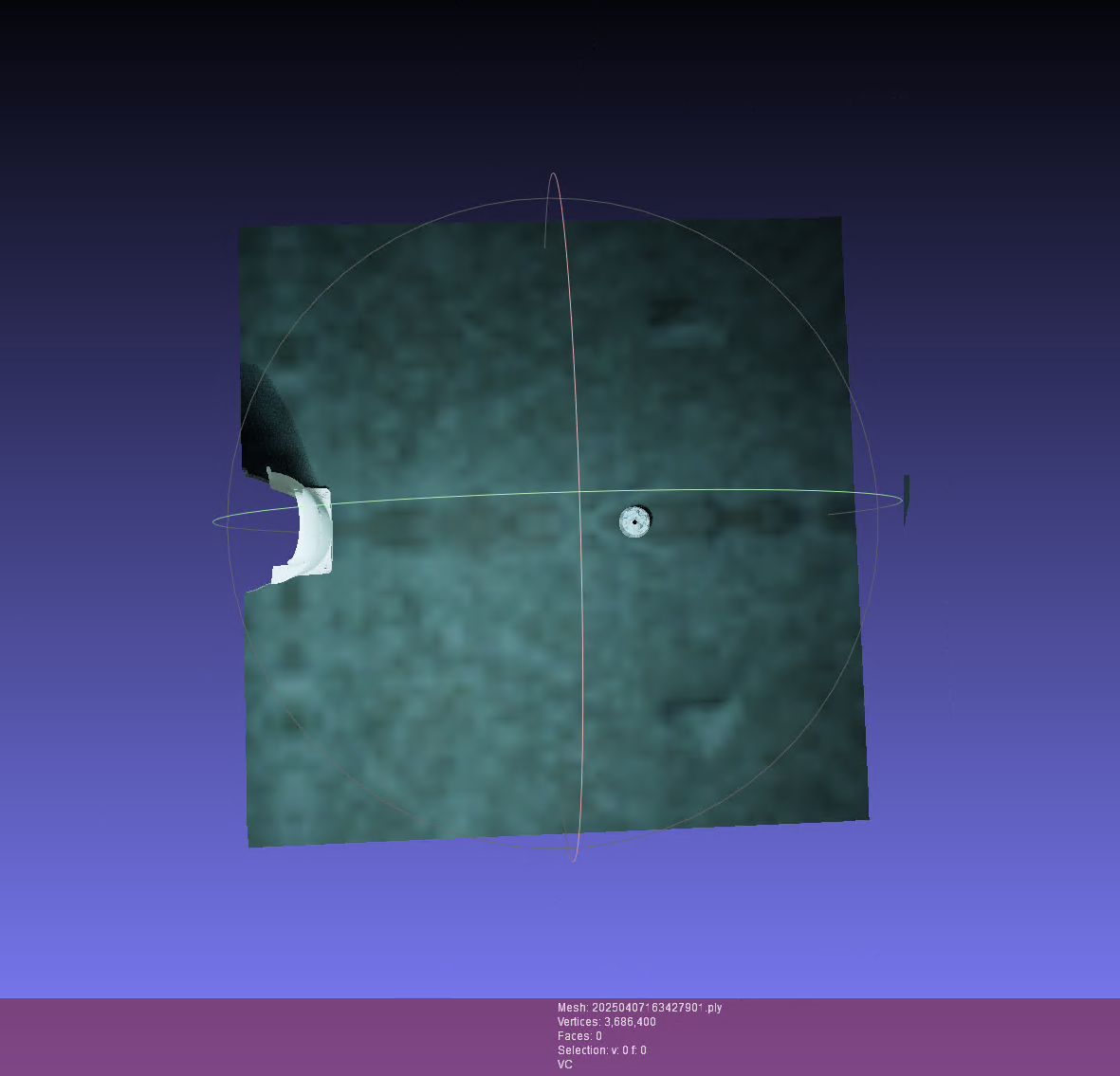

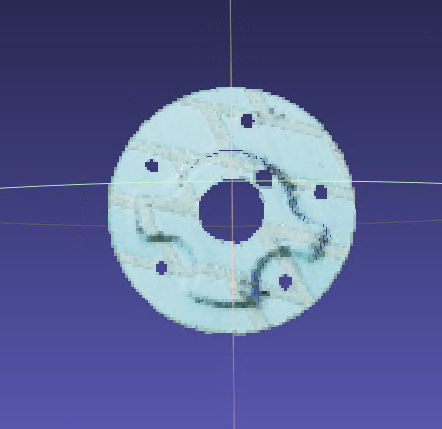

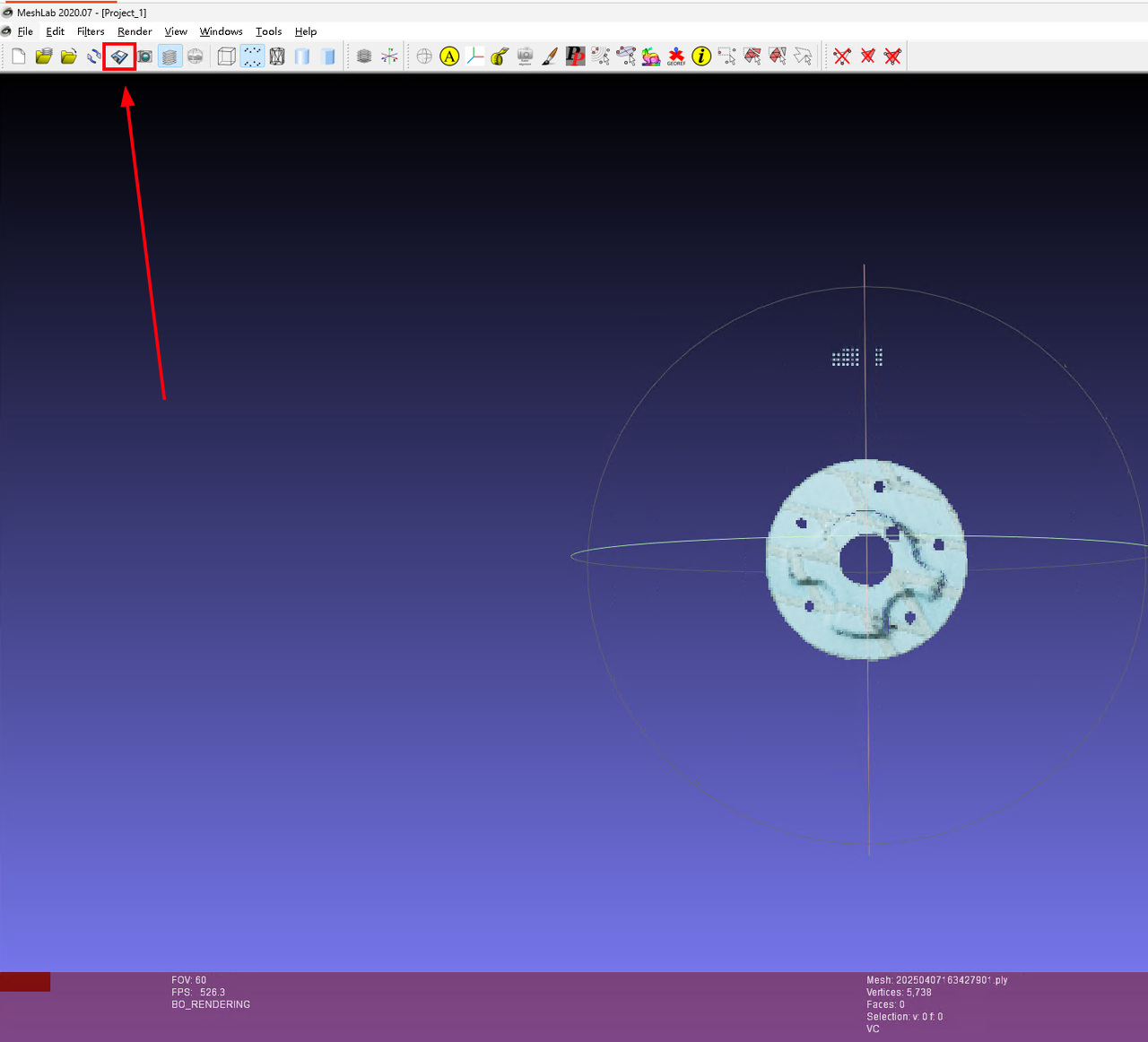

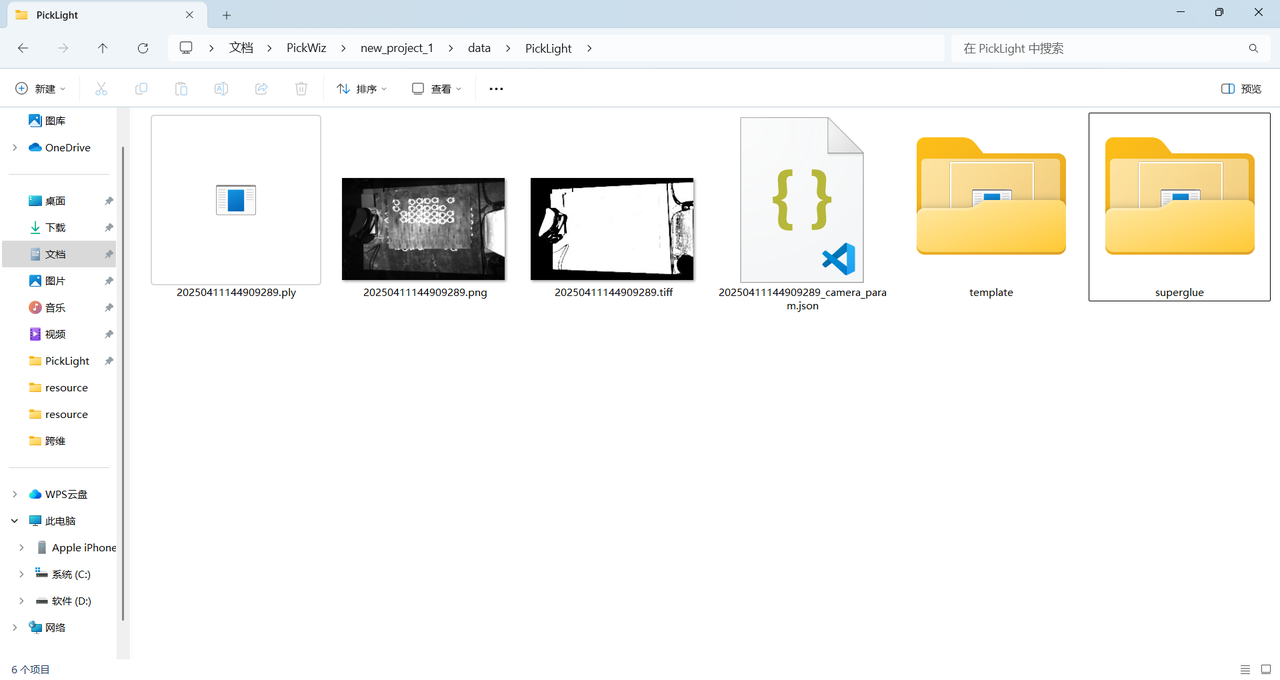

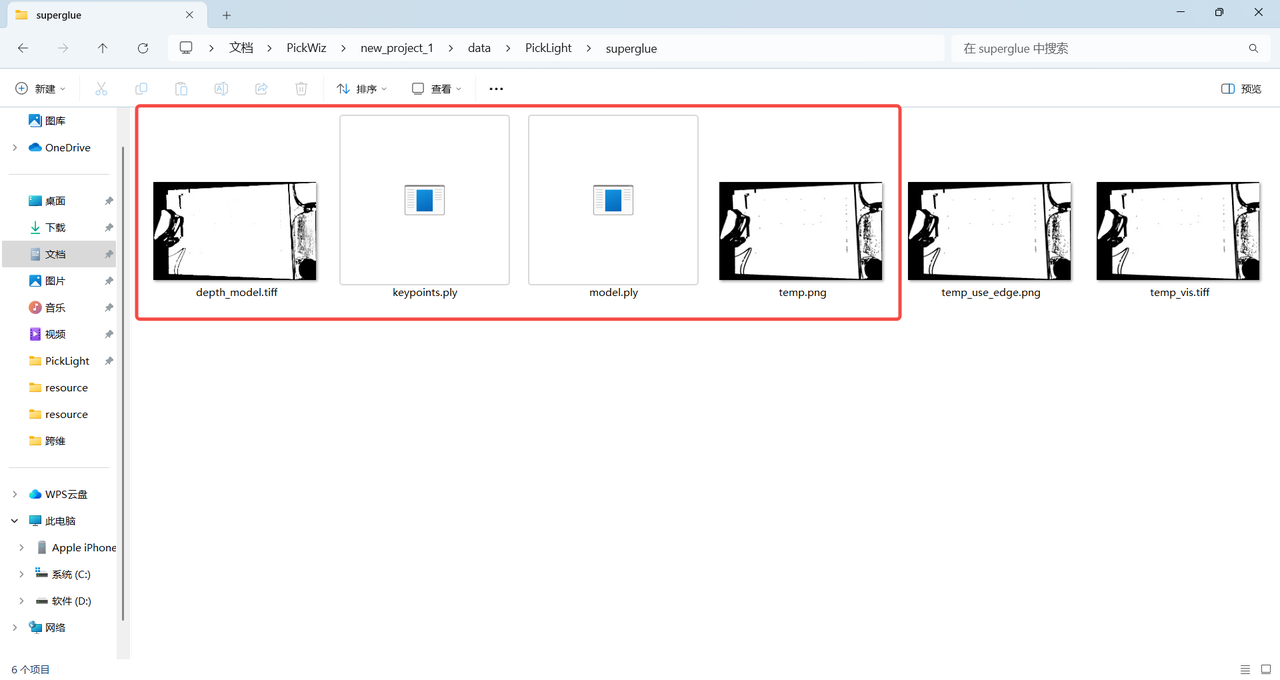

Point cloud file: The workpiece point cloud template. The point cloud file for the Planar Workpiece Ordered Loading/Unloading (Parallelized) scene is special — see 2.2.1 Template File Path for instructions on how to create it.

Fine matching point cloud template: Used for fine matching.

Camera parameters: Not required.

- Model Information

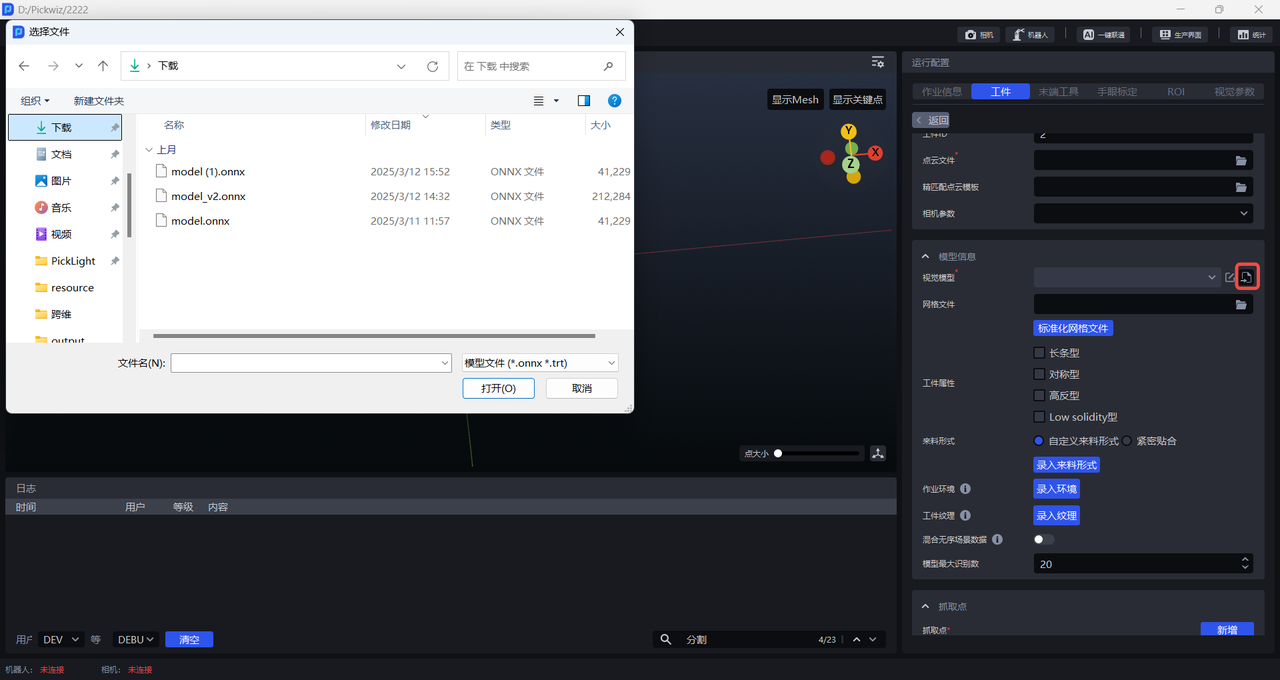

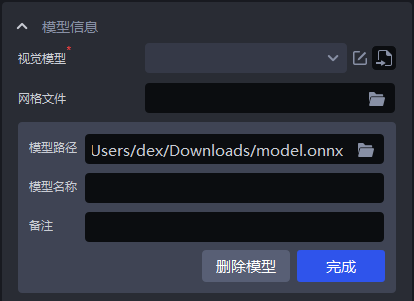

Vision model: The 2D recognition solution used for planar workpieces is CAD-based synthetic data training (One-Click Connect). The vision model for each planar workpiece application must be trained via One-Click Connect.

Mesh file: Typically the workpiece CAD file is uploaded. To eliminate noise, the mesh file must be normalized; normalization can also be done during point cloud template creation.

Workpiece properties: Elongated, symmetric, highly reflective, low solidity.

Incoming form: Custom incoming form — enter the incoming form; tightly packed — enter the number of workpieces per row and column.

Work environment: Load an environment file. The environment used for data generation in One-Click Connect will be automatically replaced with the loaded environment to improve recognition accuracy.

Workpiece texture: Load workpiece texture. During One-Click Connect model training, the loaded texture will be used for data augmentation to improve recognition accuracy.

Mix unordered scene data: When enabled, One-Click Connect training will simultaneously generate synthetic data for both unordered and ordered scenes to improve recognition accuracy.

Maximum model detection count: Default is 20; adjust according to scene requirements.

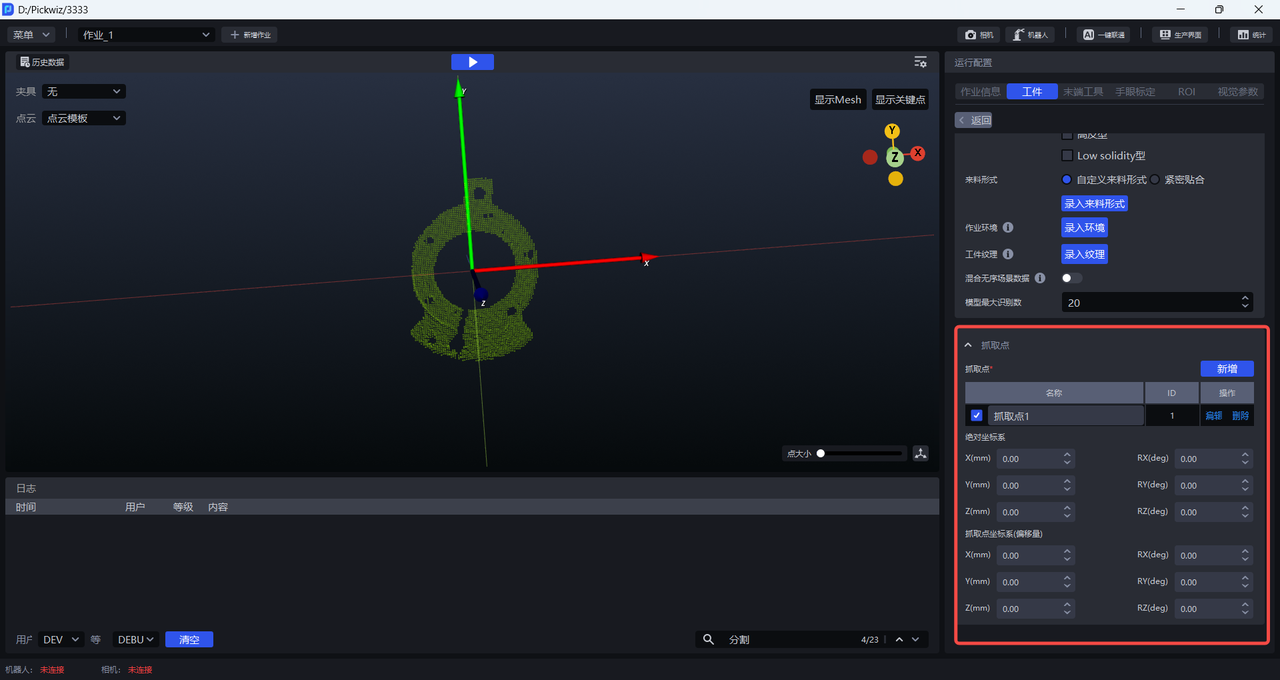

- Grasp point: Set grasp points based on the workpiece.

Absolute coordinate system: Uses the initial point as the origin; the initial point comes from the workpiece point cloud and CAD.

Grasp point coordinate system (offset): Uses the current grasp point as the origin.

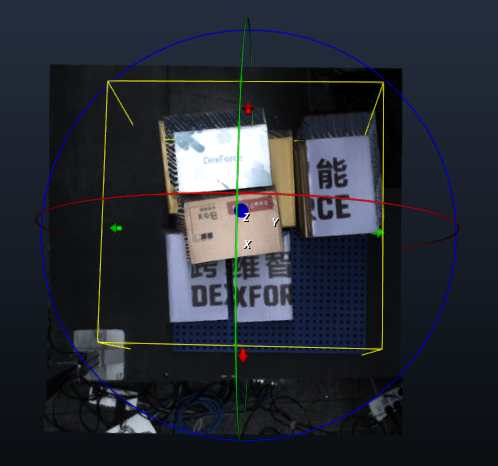

(4) Add the end-of-arm tool, hand-eye calibration, and ROI.

(5) Optional features: Instance optimization, collision detection, visual classification, point cloud noise removal, bin/workpiece collision detection.

Instance optimization: Optimizes the instances generated by the model by processing instance masks.

Collision detection: Detects collisions between the end-of-arm tool and the container, and filters out grasp poses that may cause collisions. See the Collision Detection Guide.

Visual classification: Used to recognize different textures, orientations, and other features of the same workpiece. See the Visual Classification Guide.

Point cloud noise removal: Load the workpiece's point cloud template to filter noise from instance workpiece point clouds. See the Point Cloud Noise Removal Guide.

Bin/workpiece collision detection: Detects collisions between the end-of-arm tool and the scene (bin, scene point cloud), and filters out grasp poses that may cause collisions. See the Bin/Workpiece Collision Detection Guide.

Vision Parameters

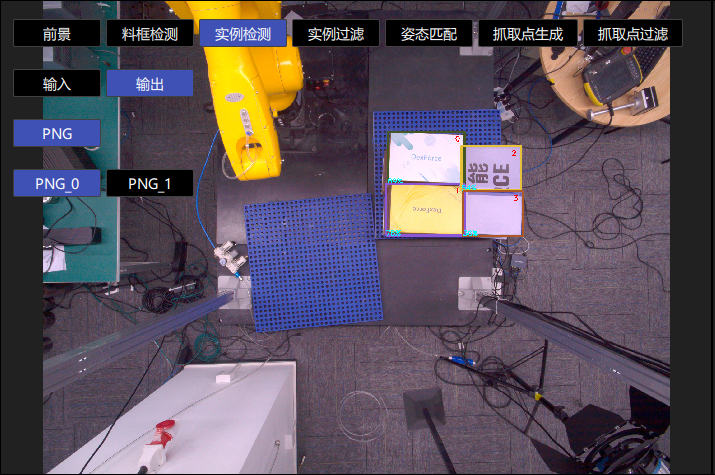

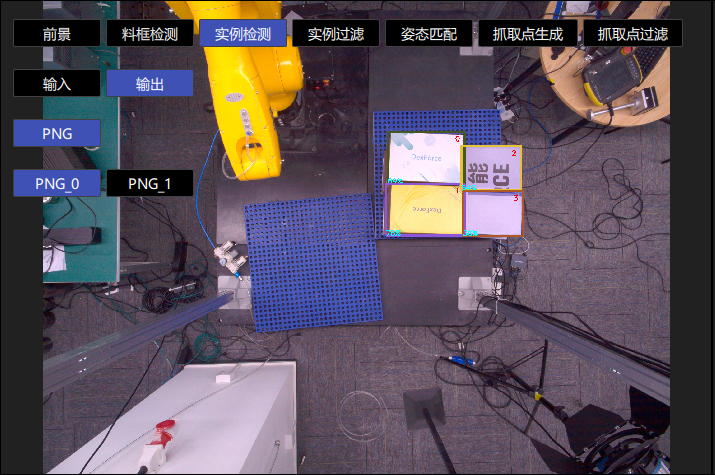

- 2D Recognition: Recognizes and segments instances from the actual scene.

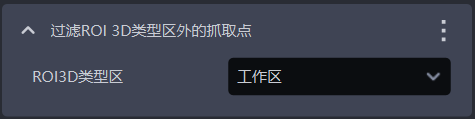

Preprocessing: Processes the 2D image before instance segmentation (commonly used: fill depth map holes & edge enhancement & extract top-layer texture & remove image background outside ROI 3D).

Instance segmentation: Segments instances (scale ratio & confidence lower threshold & auto augmentation). Uncheck to disable acceleration and return masks.

Point cloud generation: The method for generating instance point clouds — use the segmented instance mask or bounding box / use the filtered instance mask or bounding box to generate instance point clouds.

Instance filtering: Filters the segmented instances.

Instance sorting: Sorts the instances.

- 3D Computation: Computes the pose of instances in the camera coordinate system and generates grasp points.

Preprocessing: Processes 3D point clouds before computing grasp points.

Pose estimation: Computes the pose of instances in the camera coordinate system (coarse matching, fine matching) and generates grasp points.

- Grasp Point Processing: Filters, adjusts, and sorts grasp points.

Grasp point filtering: Filters grasp points.

Grasp point adjustment: Adjusts grasp points.

Grasp point sorting: Sorts grasp points.

1. 2D Recognition

This section explains the preprocessing, instance segmentation, instance filtering, and instance sorting functions that affect 2D image recognition results, and gives parameter tuning suggestions.

1.1 Preprocessing

Preprocessing for 2D recognition processes the 2D image before instance segmentation.

1.1.1 Bilateral Filtering

- Function

Image smoothing based on bilateral filtering

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Maximum Depth Difference | Maximum depth difference for bilateral filtering | 0.03 | [0.01, 1] |

| Filter Kernel Size | Convolution kernel size for bilateral filtering | 7 | [1, 3000] |

1.1.2 Depth to Normal Map

- Function

Compute pixel normals from the depth map and convert the image into a normal map

1.1.3 Image Enhancement

- Function

Common image enhancement operations such as saturation, contrast, brightness, and sharpness

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Image Enhancement Type | Enhances a specific element of the image | Contrast | Saturation, Contrast, Brightness, Sharpness |

| Image Enhancement Threshold | How much a specific element of the image is enhanced | 1.5 | [0.1, 100] |

1.1.4 Histogram Equalization

- Function

Improve image contrast

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Local Mode | Local or global histogram equalization. When selected, local histogram equalization is used; when cleared, global histogram equalization is used. | Selected | / |

| Contrast Threshold | Contrast threshold | 3 | [1,1000] |

1.1.5 Filter Depth by HSV

- Function

Filter the depth map according to color values

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Fill Kernel Size | Size of color filling | 3 | [1,99] |

| Filter Depth by HSV - Maximum Color Range Value | Maximum color value | [180,255,255] | [[0,0,0],[255,255,255]] |

| Filter Depth by HSV - Minimum Color Range Value | Minimum color value | [0,0,0] | [[0,0,0],[255,255,255]] |

| Keep Regions Within the Color Range | If selected, regions within the color range are kept; if cleared, regions outside the color range are kept. | / | / |
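
The filtering rule in the table can be sketched as follows. This is a minimal illustration, not the software's implementation: `filter_depth_by_hsv` is a hypothetical helper, removed pixels are assumed to be marked with depth 0, and the Fill Kernel Size step is not modeled.

```python
def filter_depth_by_hsv(depth, hsv, lower=(0, 0, 0), upper=(180, 255, 255),
                        keep_inside=True):
    """Zero out depth pixels depending on whether their HSV colour is in range.

    depth: 2D list of depth values; hsv: 2D list of (h, s, v) tuples.
    Assumption: a removed pixel is marked with depth 0.
    """
    filtered = []
    for depth_row, hsv_row in zip(depth, hsv):
        out_row = []
        for d, pixel in zip(depth_row, hsv_row):
            inside = all(lo <= c <= hi for c, lo, hi in zip(pixel, lower, upper))
            # keep_inside=True keeps in-range pixels, False keeps the others
            out_row.append(d if inside == keep_inside else 0)
        filtered.append(out_row)
    return filtered


depth = [[500, 510], [520, 530]]
hsv = [[(10, 200, 200), (100, 50, 50)],
       [(15, 180, 220), (90, 40, 60)]]

kept_inside = filter_depth_by_hsv(depth, hsv, lower=(0, 100, 100), upper=(20, 255, 255))
kept_outside = filter_depth_by_hsv(depth, hsv, lower=(0, 100, 100), upper=(20, 255, 255),
                                   keep_inside=False)
```

With these hypothetical values, only the left column falls inside the color range, so `kept_inside` retains the left-column depths and `kept_outside` retains the right-column depths.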

1.1.6 Gamma Correction

- Function

Gamma correction changes image brightness

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Gamma Compensation Coefficient | When this value is less than 1, the image becomes darker; when it is greater than 1, the image becomes brighter. | 1 | [0.1,100] |

| Gamma Correction Coefficient | When this value is less than 1, the image becomes darker and is suitable for images that are too bright; when it is greater than 1, the image becomes brighter and is suitable for images that are too dark. | 2.2 | [0.1,100] |
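
The direction described in the table (values above 1 brighten, values below 1 darken) is consistent with a power-law mapping whose exponent is 1/gamma. The sketch below assumes 8-bit pixel values and assumes the compensation coefficient acts as a plain multiplier; the exact formula used by the software is not given in this guide, and `gamma_correct` is a hypothetical helper.

```python
def gamma_correct(pixel, gamma=2.2, compensation=1.0):
    """Map one 8-bit pixel value through a power-law curve.

    Assumption: out = compensation * 255 * (pixel / 255) ** (1 / gamma),
    clipped to [0, 255]. This matches the behaviour described in the table
    (gamma > 1 brightens, gamma < 1 darkens) but is not taken from the
    software itself.
    """
    normalized = pixel / 255.0
    corrected = compensation * 255.0 * normalized ** (1.0 / gamma)
    return max(0, min(255, round(corrected)))


mid_gray = 128
brighter = gamma_correct(mid_gray, gamma=2.2)   # gamma > 1 lifts mid-tones
darker = gamma_correct(mid_gray, gamma=0.5)     # gamma < 1 lowers mid-tones
unchanged = gamma_correct(mid_gray, gamma=1.0)  # gamma = 1 leaves the value as is
```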

1.1.7 Fill Depth Hole

- Function

Fill hole regions in the depth map and smooth the filled depth map

- Use Case

Due to issues such as obstruction caused by the Target Object structure itself or uneven lighting, parts of the Target Object may be missing in the depth map

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Fill Kernel Size | Hole filling size | 3 | [1,99] |

The Fill Kernel Size must be an odd number.

- Parameter Tuning

Adjust according to the detection results. If filling is excessive, reduce the parameter; if filling is insufficient, increase the parameter.
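
To make the effect of the kernel size concrete, here is a minimal sketch of hole filling. It assumes that holes are stored as depth 0 and that each hole is replaced by the mean of the valid depths inside the kernel window; the software's actual filling and smoothing method is not documented here, and `fill_depth_holes` is a hypothetical helper.

```python
def fill_depth_holes(depth, kernel_size=3):
    """Fill zero-valued pixels with the mean of valid neighbours.

    Assumptions: a hole is a pixel with depth 0, and the fill value is the
    mean of the non-zero depths inside a kernel_size x kernel_size window.
    The subsequent smoothing step is omitted.
    """
    if kernel_size % 2 == 0 or not 1 <= kernel_size <= 99:
        raise ValueError("kernel_size must be an odd number in [1, 99]")
    half = kernel_size // 2
    rows, cols = len(depth), len(depth[0])
    filled = [row[:] for row in depth]
    for r in range(rows):
        for c in range(cols):
            if depth[r][c] != 0:
                continue
            neighbours = [
                depth[rr][cc]
                for rr in range(max(0, r - half), min(rows, r + half + 1))
                for cc in range(max(0, c - half), min(cols, c + half + 1))
                if depth[rr][cc] != 0
            ]
            if neighbours:
                filled[r][c] = sum(neighbours) / len(neighbours)
    return filled


depth = [[500, 500, 500],
         [500, 0, 500],
         [500, 500, 500]]
filled = fill_depth_holes(depth, kernel_size=3)
```

A larger kernel reaches farther for valid neighbours and therefore fills larger holes, which is why reducing the parameter counters excessive filling.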

- Example

1.1.8 Edge Enhancement

- Function

Set the texture edge areas in the image to the background color or to a color with a large contrast from the background color, so that the edge information of the Target Object is highlighted

- Use Case

Target Objects occlude or overlap each other, resulting in unclear edges

- Parameter Description

| Parameter | Description | Default Value | Range | Tuning Recommendation |

|---|---|---|---|---|

| Normal Z-Direction Filtering Threshold | Filtering threshold for the angle between the normal vector corresponding to each point in the depth map and the positive Z-axis direction of the camera coordinate system. If the angle between a point's normal vector and the positive Z-axis direction of the camera coordinate system is greater than this threshold, the color at the corresponding position in the 2D image is set to the Background color or to a color with a large contrast from the Background color. | 30 | [0,180] | For flat Target Object surfaces, this threshold can be stricter. For curved surfaces such as sacks, increase it appropriately according to the surface inclination of the Target Object. |

| Background | RGB color threshold of the background color | 128 | [0,255] | |

| Automatically Adjust Contrast Background | When selected, points in the 2D image whose angle is greater than the filtering threshold are set to a color with a large contrast from the Background color. When cleared, those points are set to the Background color. | Cleared | / | |
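
The angle test behind the Normal Z-Direction Filtering Threshold can be sketched as below. The helper names are hypothetical, and the sketch assumes normals are expressed in the camera coordinate system and oriented so that a surface facing the camera axis has an angle of 0 degrees.

```python
import math


def normal_angle_to_z(normal):
    """Angle in degrees between a normal vector and the camera +Z axis."""
    nx, ny, nz = normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    cos_angle = max(-1.0, min(1.0, nz / length))
    return math.degrees(math.acos(cos_angle))


def is_edge_pixel(normal, threshold_deg=30.0):
    """True when the pixel would be repainted (angle above the threshold)."""
    return normal_angle_to_z(normal) > threshold_deg


flat_surface = (0.0, 0.0, 1.0)   # aligned with +Z: 0 degrees
steep_side = (1.0, 0.0, 1.0)     # tilted 45 degrees away from +Z
```

With the default threshold of 30, the flat surface is kept and the steep side is treated as an edge region, which is why curved objects such as sacks need a larger threshold.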

- Example

In a scene with a pile of sacks, the sacks occlude each other. Enable Edge Enhancement to distinguish the edges of individual sacks, as shown below.

1.1.9 Extract Top by Depth

- Function

Extract the texture of the topmost or bottommost Target Object, while setting other regions to the background color or to a color with a large contrast from the background color.

- Use Case

Applicable to single-carton depalletizing scenarios. Factors such as poor lighting conditions, similar color textures, tight stacking, interleaved stacking, or occlusion may make it difficult for the model to distinguish texture differences between the upper and lower cartons, which easily leads to false detections.

- Parameter Description

| Parameter | Description | Default Value | Range | Unit | Tuning Recommendation |

|---|---|---|---|---|---|

| Distance Threshold (mm) | If the distance from a point to the topmost plane (or bottommost plane) is lower than this threshold, the point is regarded as lying on the topmost plane (or bottommost plane) and should be retained. Otherwise, it is regarded as a point on the lower layer (or upper layer), and the color of the lower-layer (or upper-layer) point is set to the background color or to a color with a large contrast from the background color. | 50 | [0.1, 1000] | mm | Generally set to 1/2 of the carton height |

| Cluster Point Cloud Quantity | Expected number of points participating in clustering, that is, the number of sampled point clouds within the ROI 3D area | 10000 | [1,10000000] | / | The larger the Cluster Point Cloud Quantity, the slower the model inference speed but the higher the accuracy; the smaller the Cluster Point Cloud Quantity, the faster the inference speed but the lower the accuracy. |

| Minimum Category Point Quantity | Minimum number of points used for category filtering | 1000 | [1, 10000000] | / | / |

| Automatically Compute Contrast Background | When selected, regions outside the topmost (or bottommost) layer in the 2D image are set to a color with a large contrast from the background color threshold. When cleared, those regions are set to the color corresponding to the background color threshold. | Selected | / | / | / |

| Background Color RGB Threshold | RGB color threshold of the background color | 128 | [0, 255] | / | / |
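
The role of the Distance Threshold can be illustrated with a simplified sketch. It assumes a downward-looking camera, so a smaller depth means a higher layer, and it approximates the reference plane by the extreme depth value rather than by the clustering step the parameters above refer to. `extract_top_layer` is a hypothetical helper.

```python
def extract_top_layer(depths_mm, distance_threshold_mm=50.0, topmost=True):
    """Return a keep/discard flag for each point.

    depths_mm: distance of each point from the camera along Z, in mm.
    Assumption: the reference plane is approximated by the extreme depth
    (closest point for the top layer, farthest for the bottom layer).
    """
    reference = min(depths_mm) if topmost else max(depths_mm)
    return [abs(d - reference) < distance_threshold_mm for d in depths_mm]


carton_height_mm = 100.0
depths = [800.0, 805.0, 900.0, 910.0]  # two points on top, two one layer down
keep_top = extract_top_layer(depths, distance_threshold_mm=carton_height_mm / 2)
keep_bottom = extract_top_layer(depths, distance_threshold_mm=carton_height_mm / 2,
                                topmost=False)
```

Setting the threshold to half the carton height keeps the top layer while leaving a margin before the next layer down starts.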

- Example

1.1.10 Extract ROI 3D RGB

- Function

Remove the background outside the ROI3D area in the 2D image

- Use Case

Excessive image background noise affects detection results

- Parameter Description

| Parameter Name | Description | Default Value | Range |

|---|---|---|---|

| Fill Kernel Size | Size of hole filling | 5 | [1,99] |

| Iteration Count | Number of image dilation iterations | 1 | [1,99] |

| Automatically Compute Contrast Background | When selected, the area outside the ROI in the 2D image is set to a color with a large contrast from the background color threshold. When cleared, the area outside the ROI is set to the color corresponding to the background color threshold. | Selected | / |

| Background Color Threshold | RGB color threshold of the background color | 128 | [0,255] |

The Fill Kernel Size must be an odd number.

- Parameter Tuning

To remove more background noise from the image, reduce the Fill Kernel Size.

- Example

1.2 Instance Segmentation

1.2.1 Scaling Ratio

- Function

Improve the accuracy and recall of 2D recognition by scaling the original image proportionally before inference.

- Use Case

If the detection result is poor (for example, no instances are detected, instances are missed, a bounding box covers multiple instances, or a bounding box does not fully cover an instance), this function should be adjusted.

- Parameter Description

Default Value: 1.0

Range: [0.01, 3.00]

Step Size: 0.01

- Parameter Tuning

Run with the default value and view the detection results in the visualization window. If no instances are detected, instances are missed, a bounding box covers multiple instances, or a bounding box does not fully cover an instance, adjust the scaling ratio.

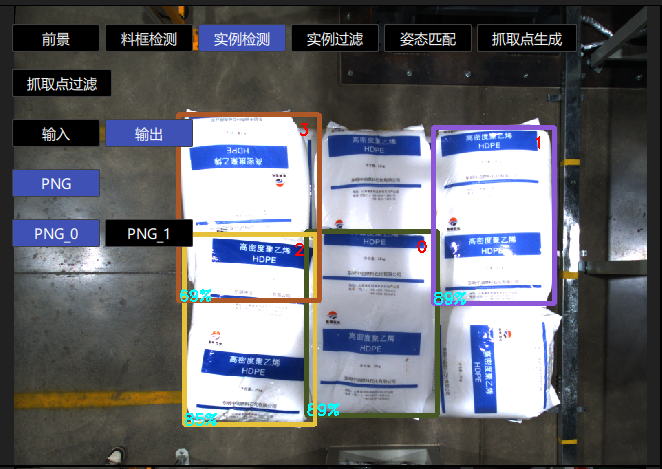

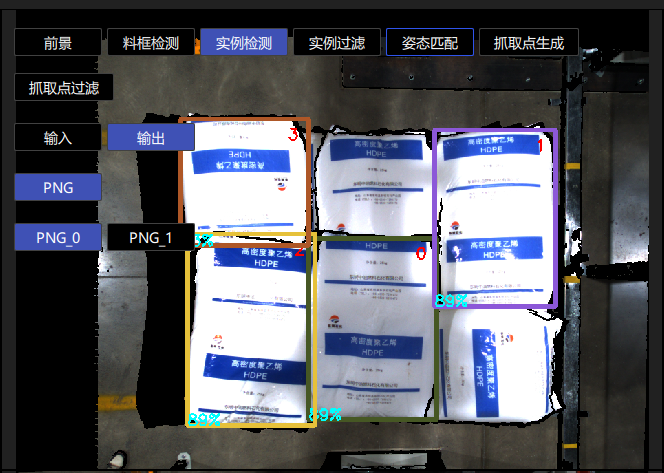

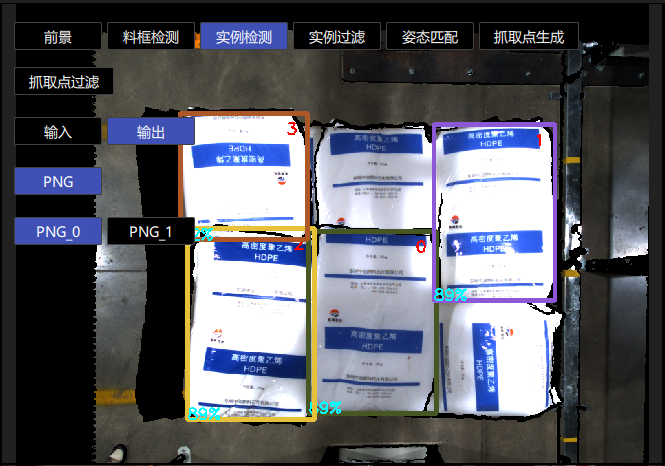

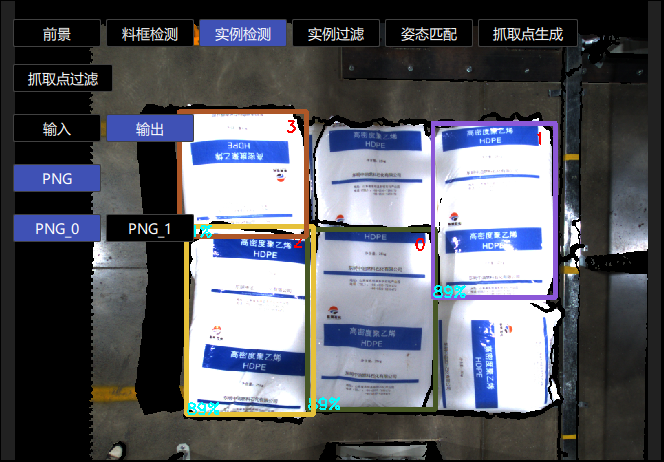

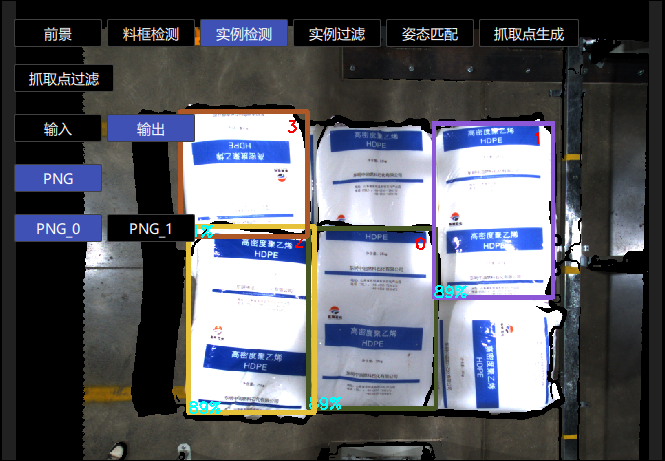

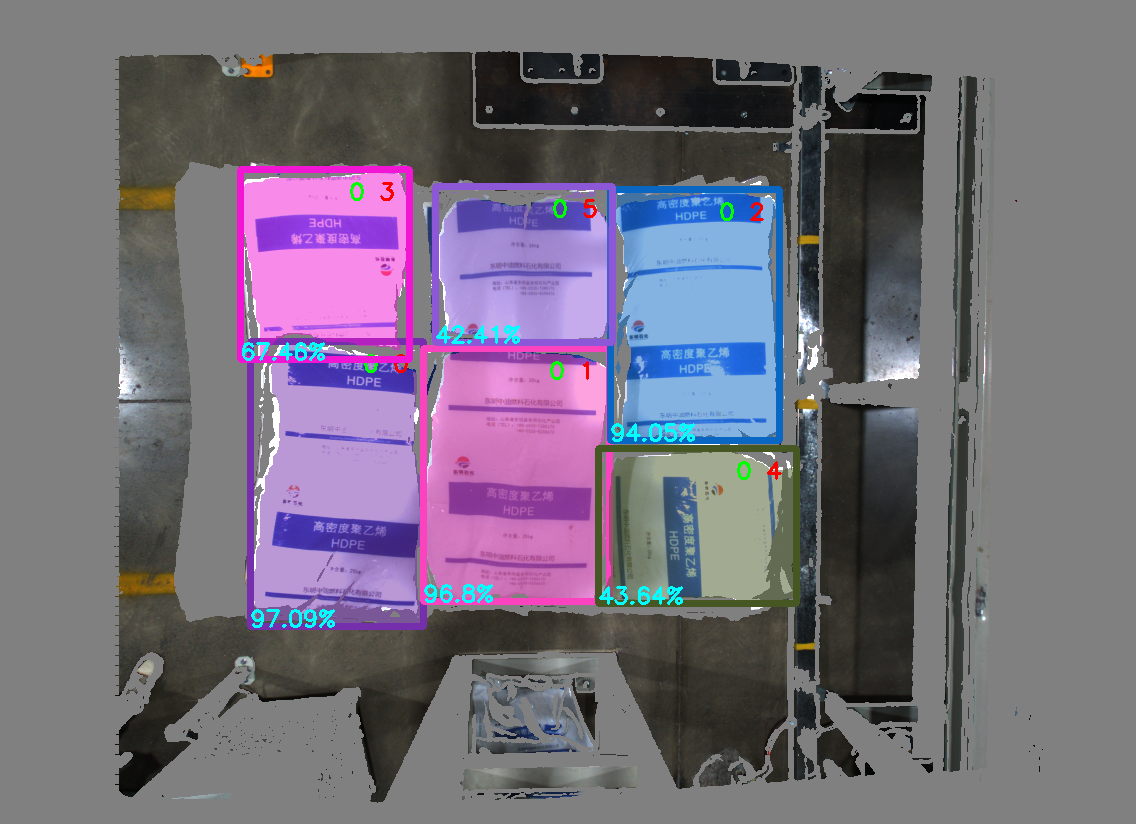

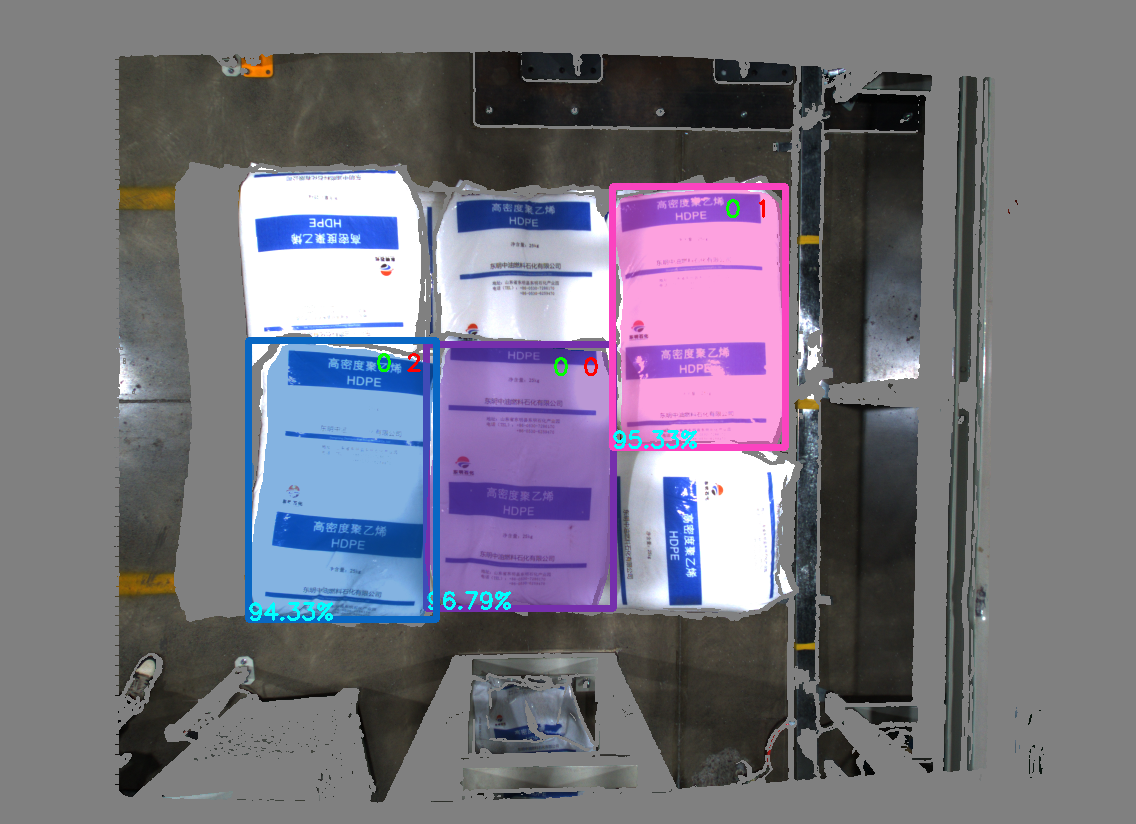

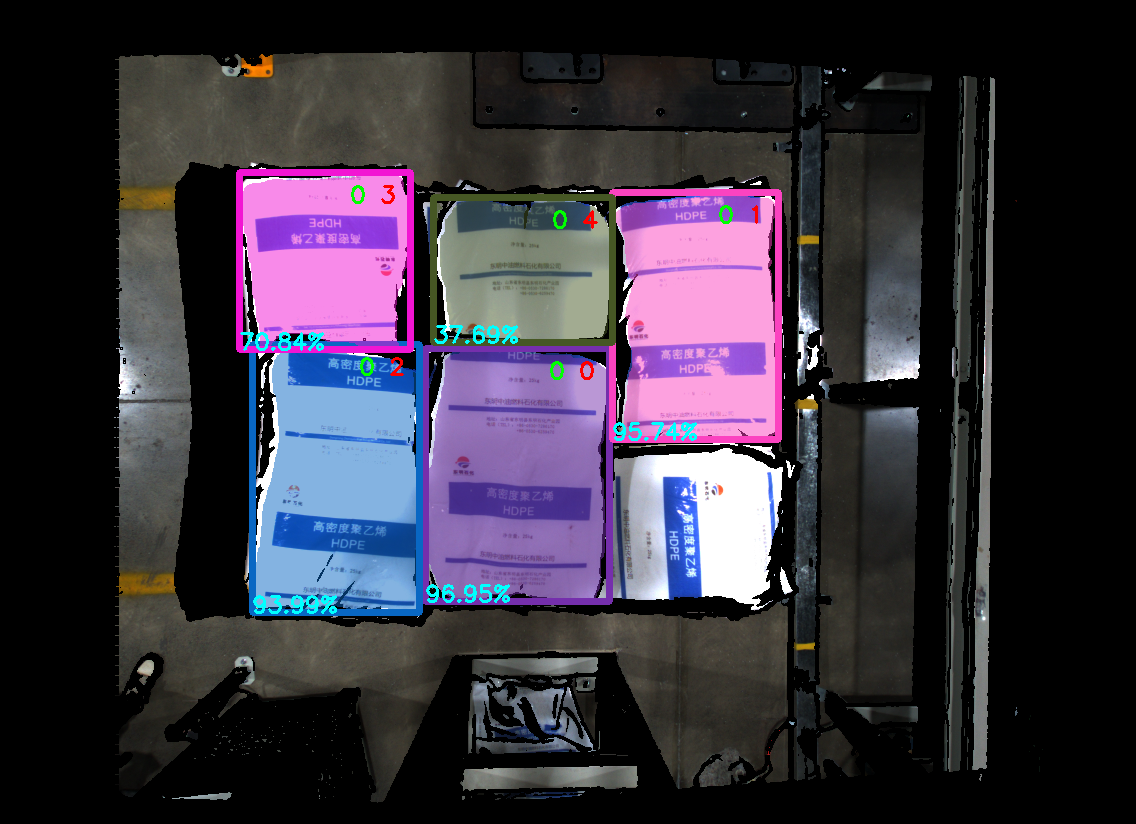

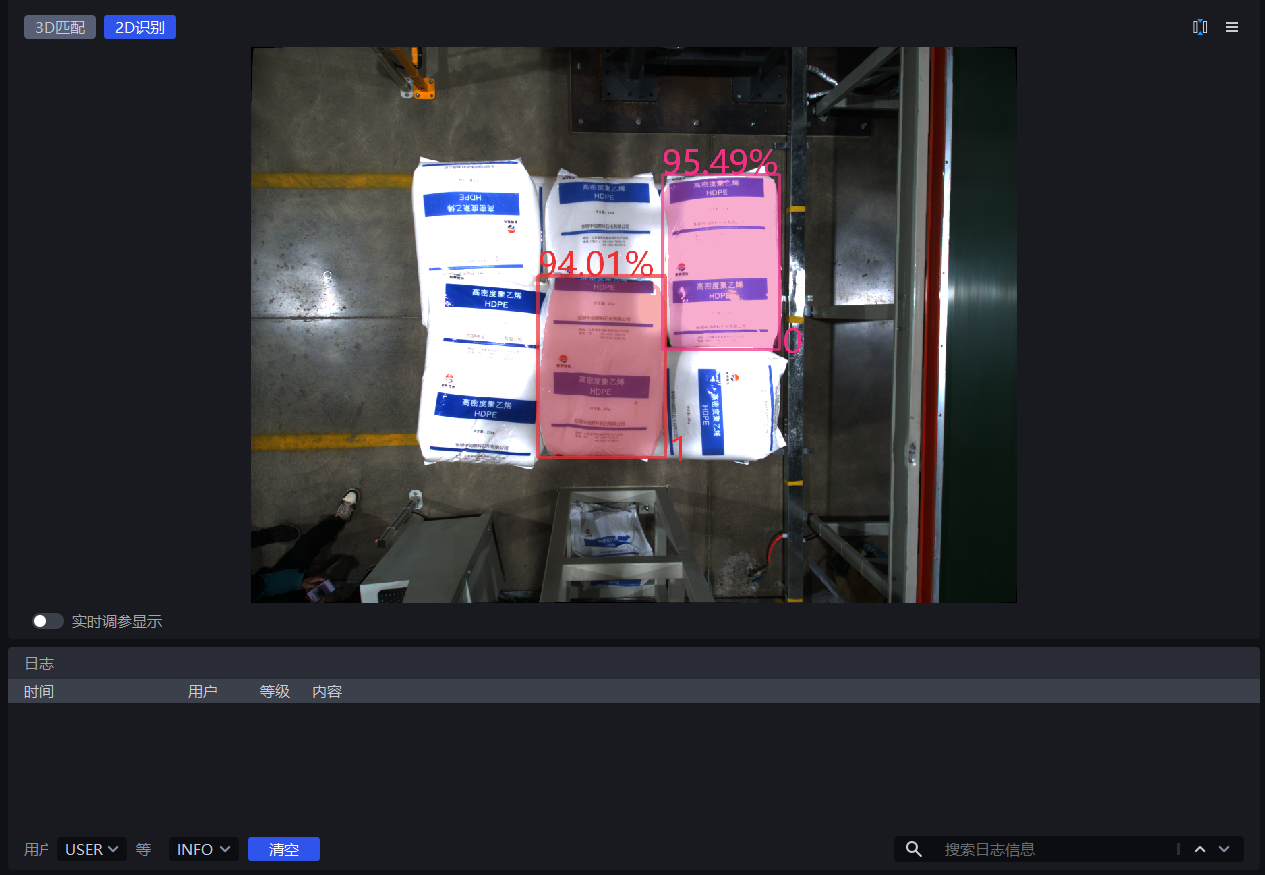

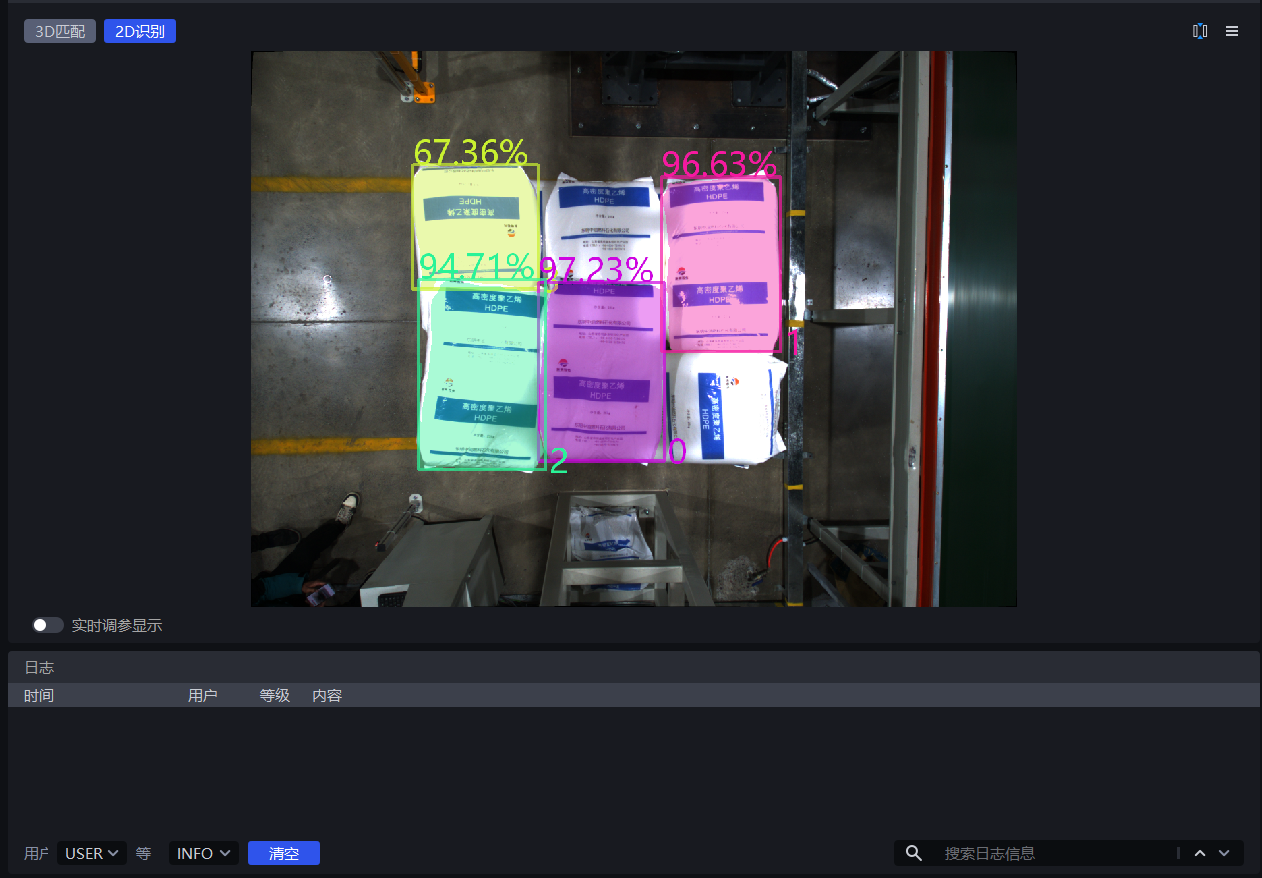

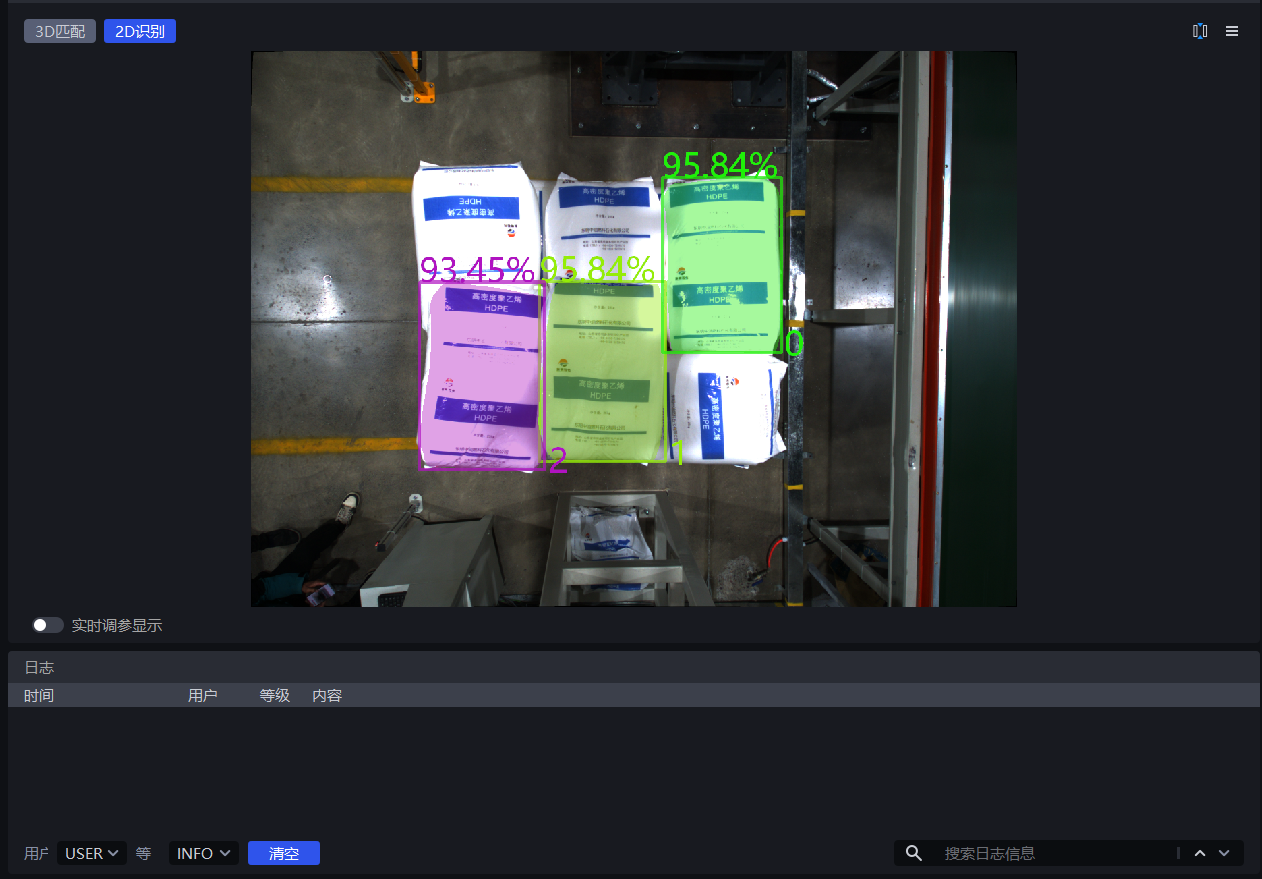

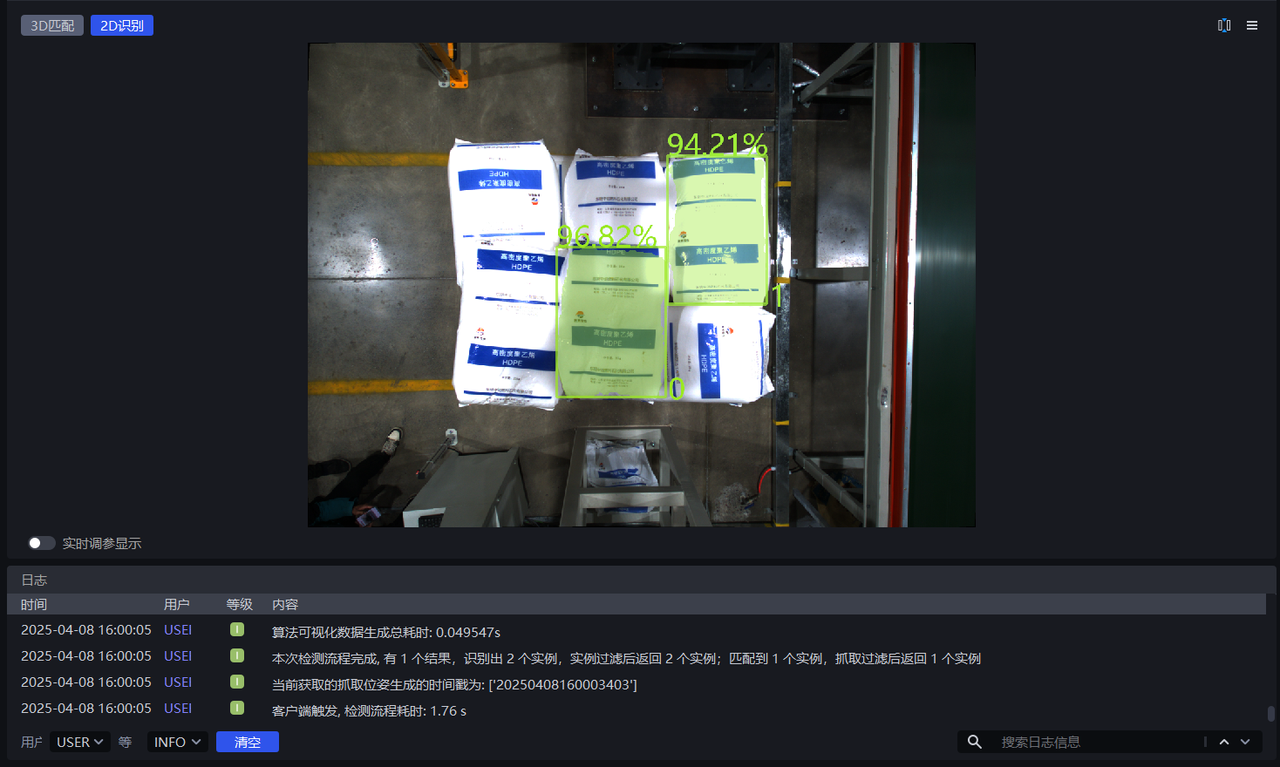

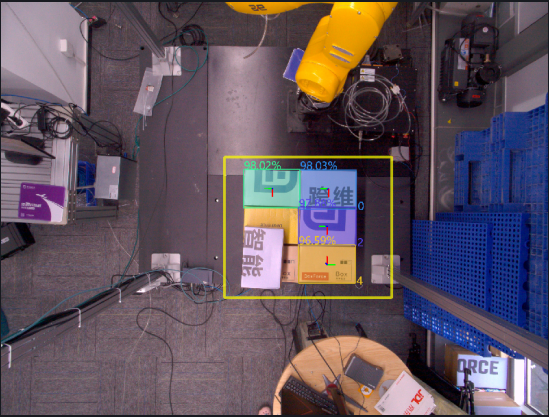

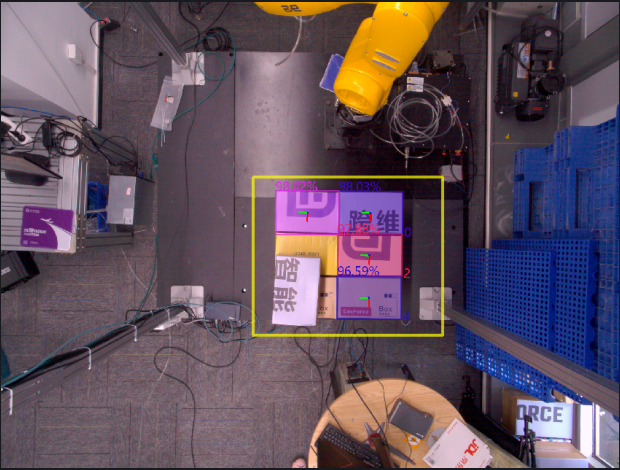

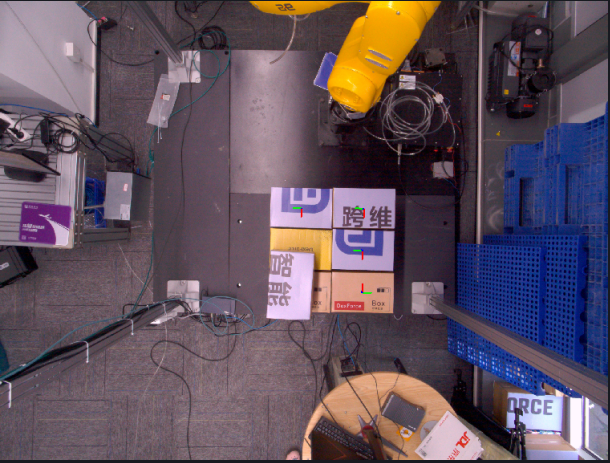

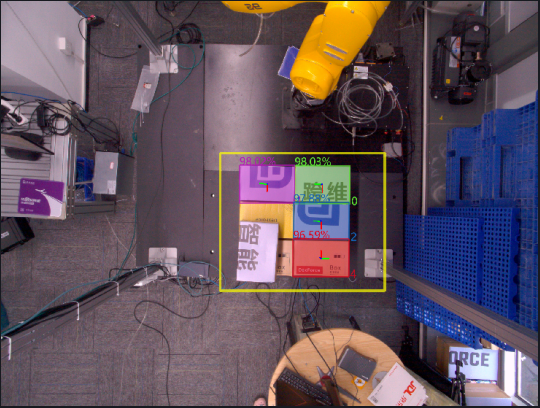

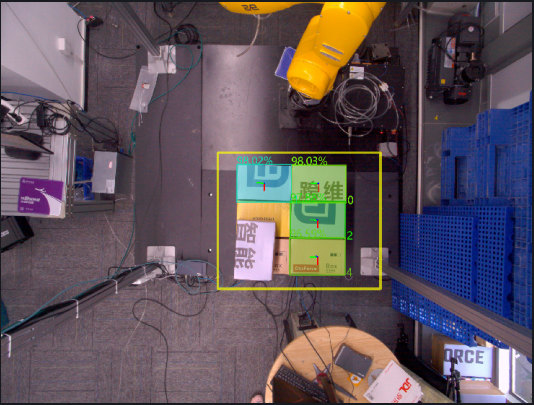

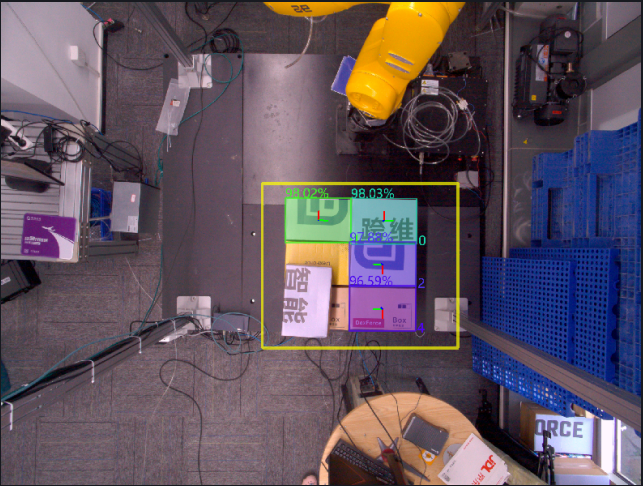

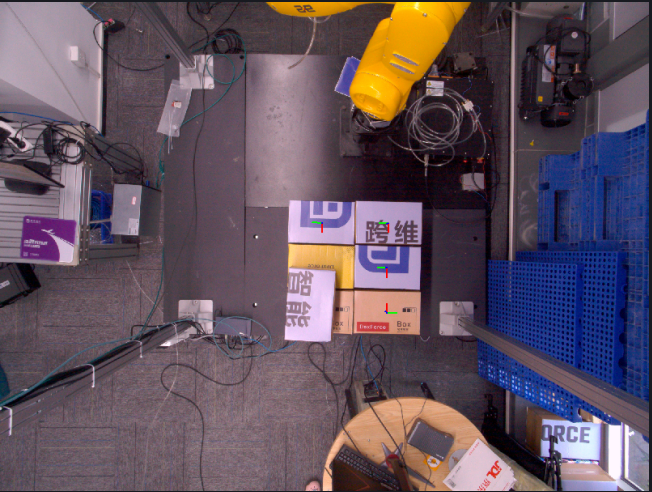

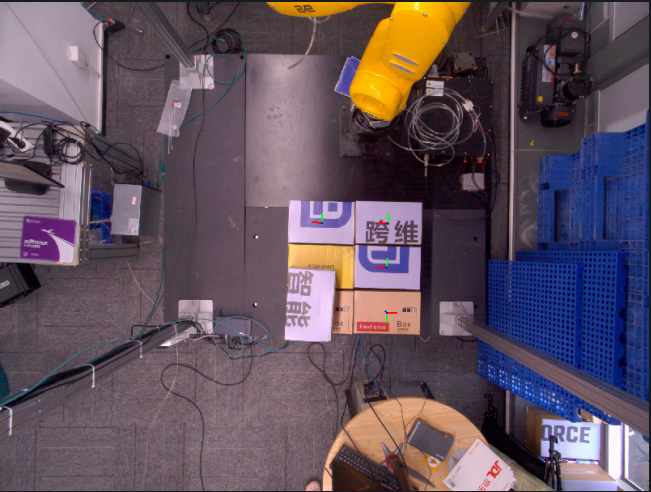

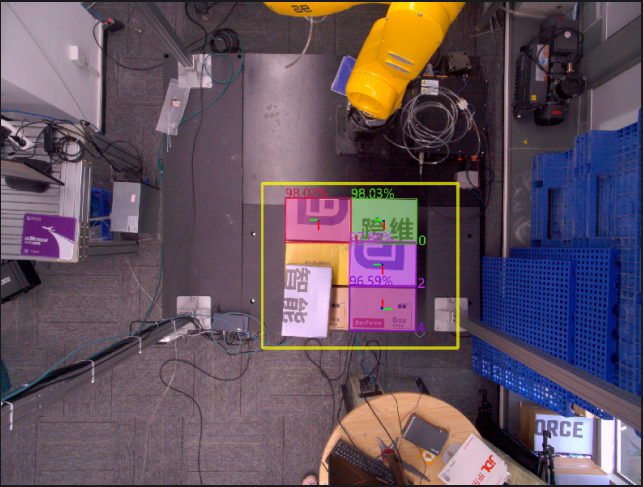

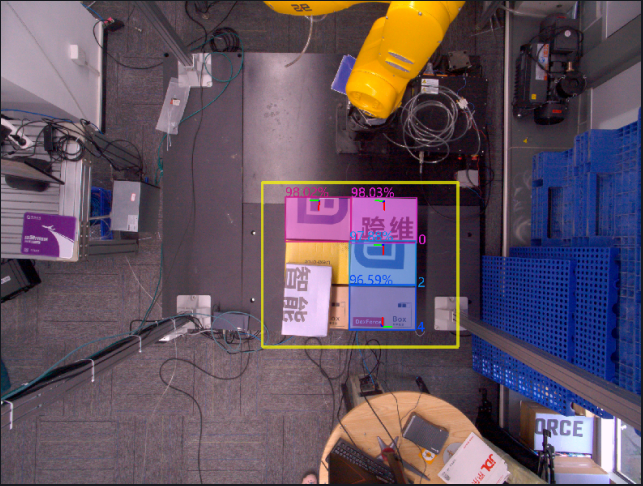

In 2D recognition, the percentage shown on an instance is the Confidence score, and the number is the Instance ID (the recognition order of the instance).

In 2D recognition, the colored shadow on an instance is the mask, and the rectangular box surrounding the instance is the bounding box.

If good detection results still cannot be obtained after trying all scaling ratios, you can adjust the ROI area.

As shown below, when the scaling ratio is 0.7, the detection result improves significantly. Therefore, 0.7 can be determined as the lower bound of the scaling ratio range.

When the scaling ratio is 1.8, the detection result becomes significantly worse. Therefore, 1.8 can be determined as the upper bound of the scaling ratio range.

1.2.2 Confidence Lower Threshold

- Function

Keep only recognition results whose deep learning model confidence scores are higher than the lower confidence threshold

- Use Case

This function can be adjusted when the instances enclosed by the detection result do not match expectations

- Parameter Description

Default Value: 0.5

Range: [0.01, 1.00]

Parameter Tuning

- If too few instances are detected, reduce this threshold. If the value is too small, the accuracy of image recognition may be affected.

- If an excessively small lower confidence threshold causes incorrect instances to be detected and those incorrect instances need to be removed, increase this threshold. If the value is too large, the retained detection results may become zero, resulting in no output.

Example

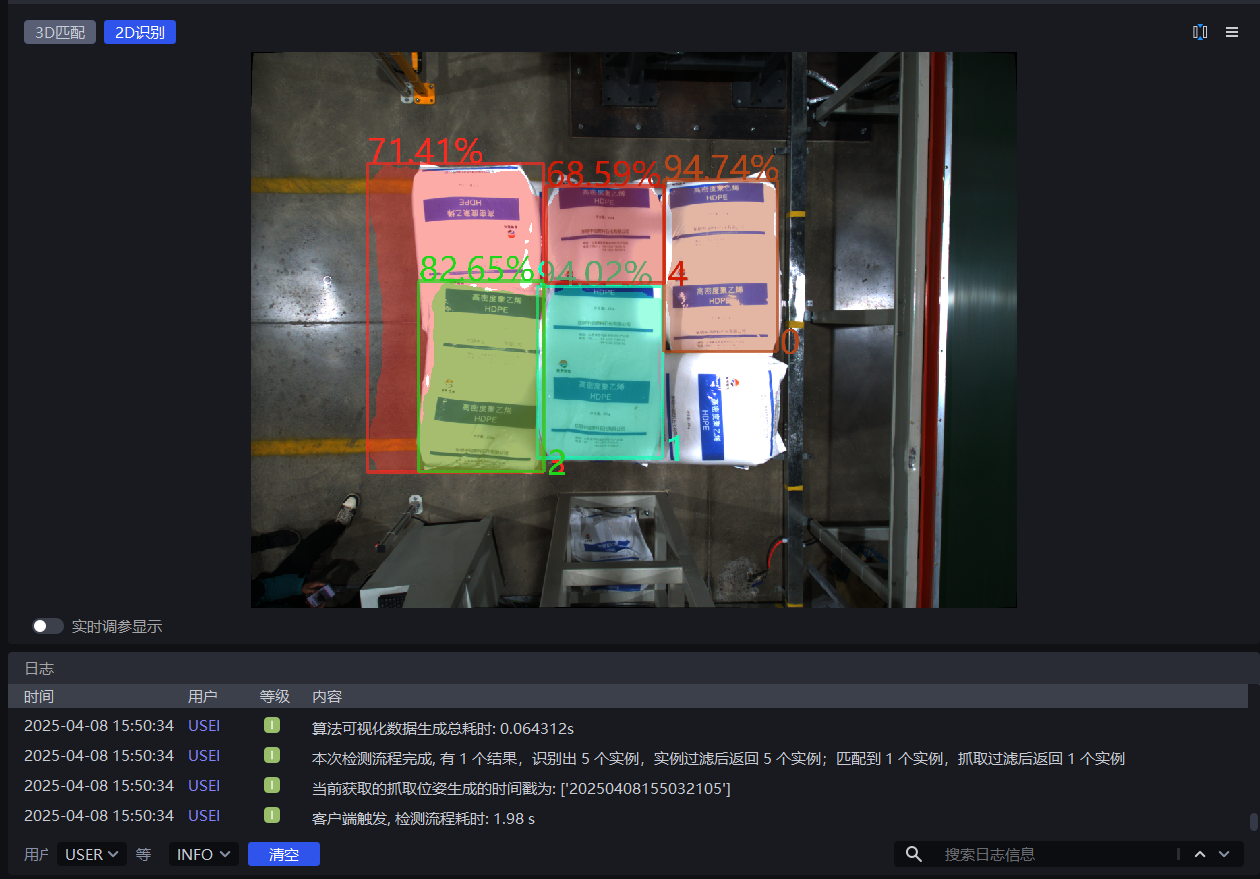

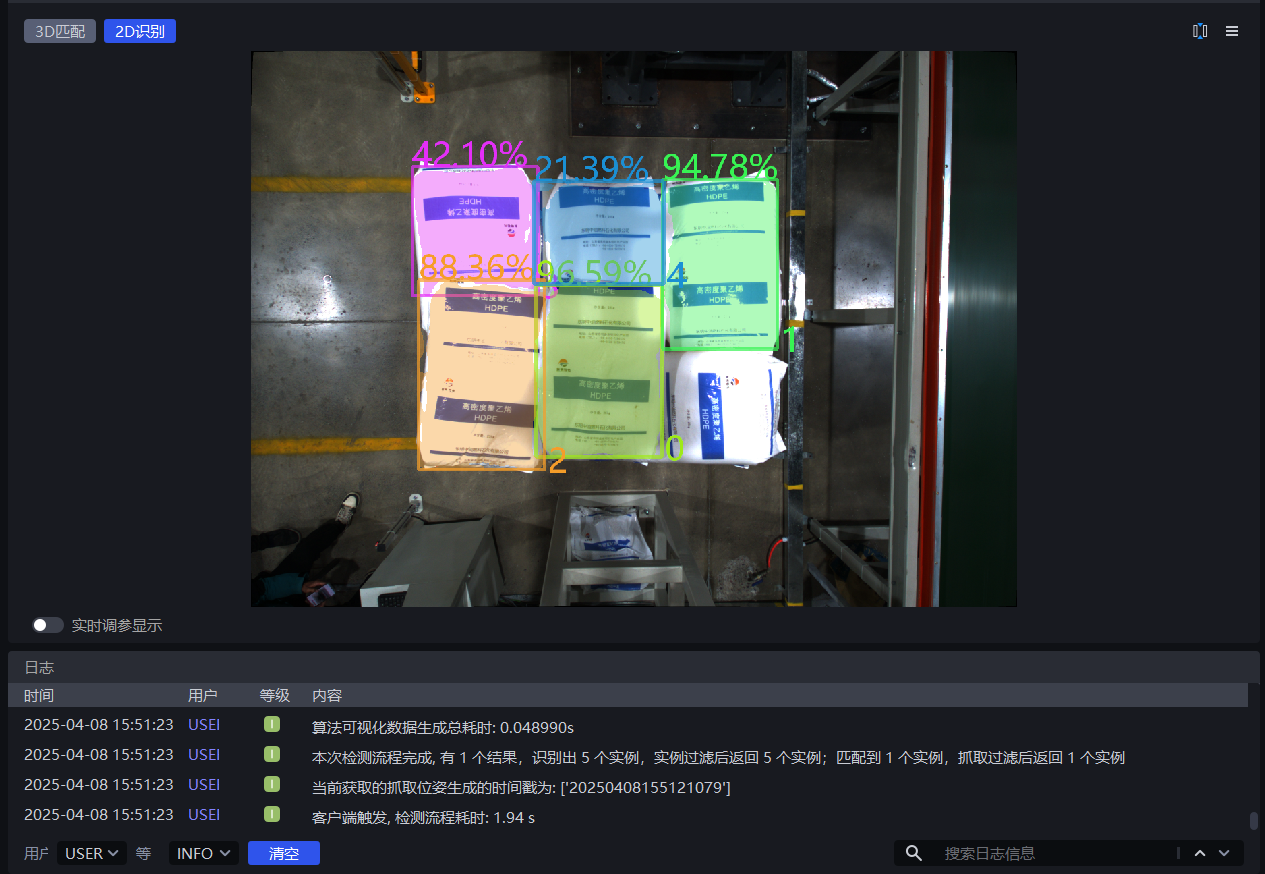

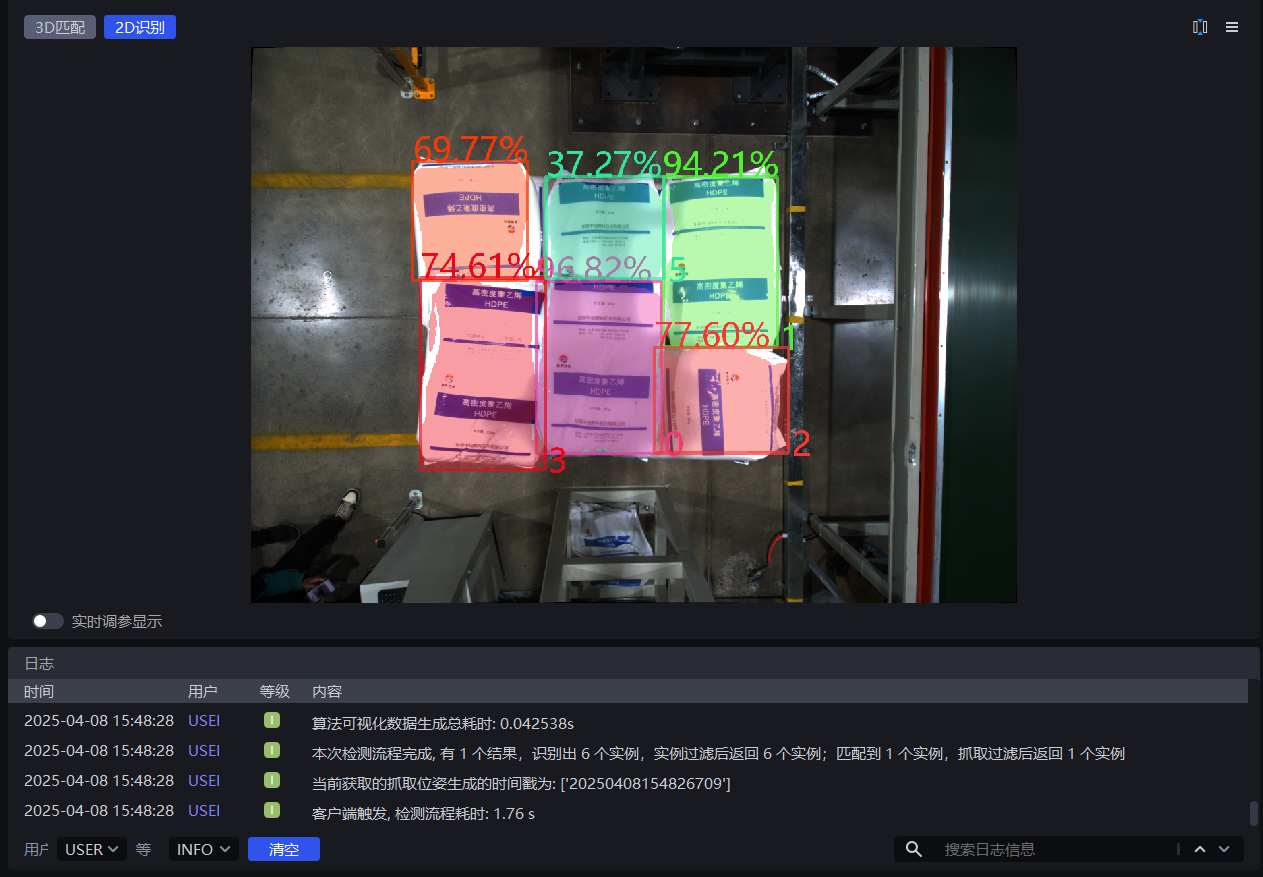

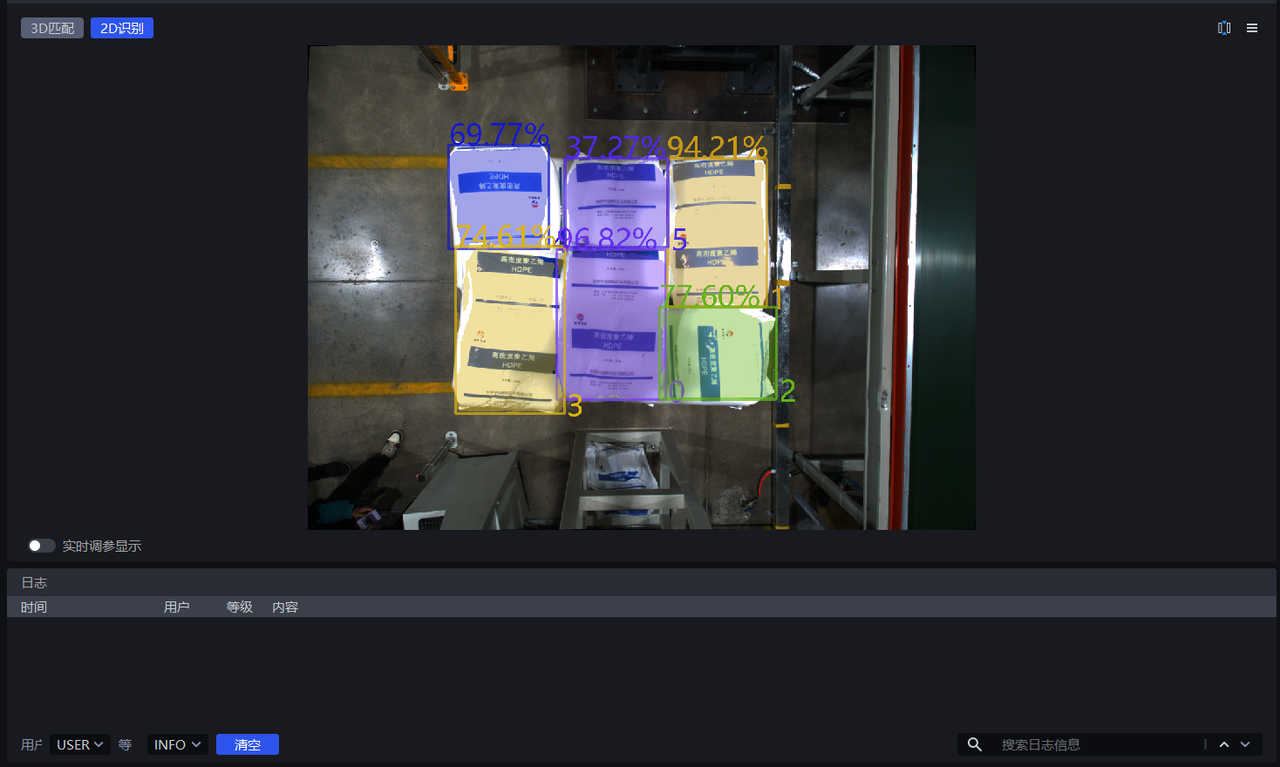

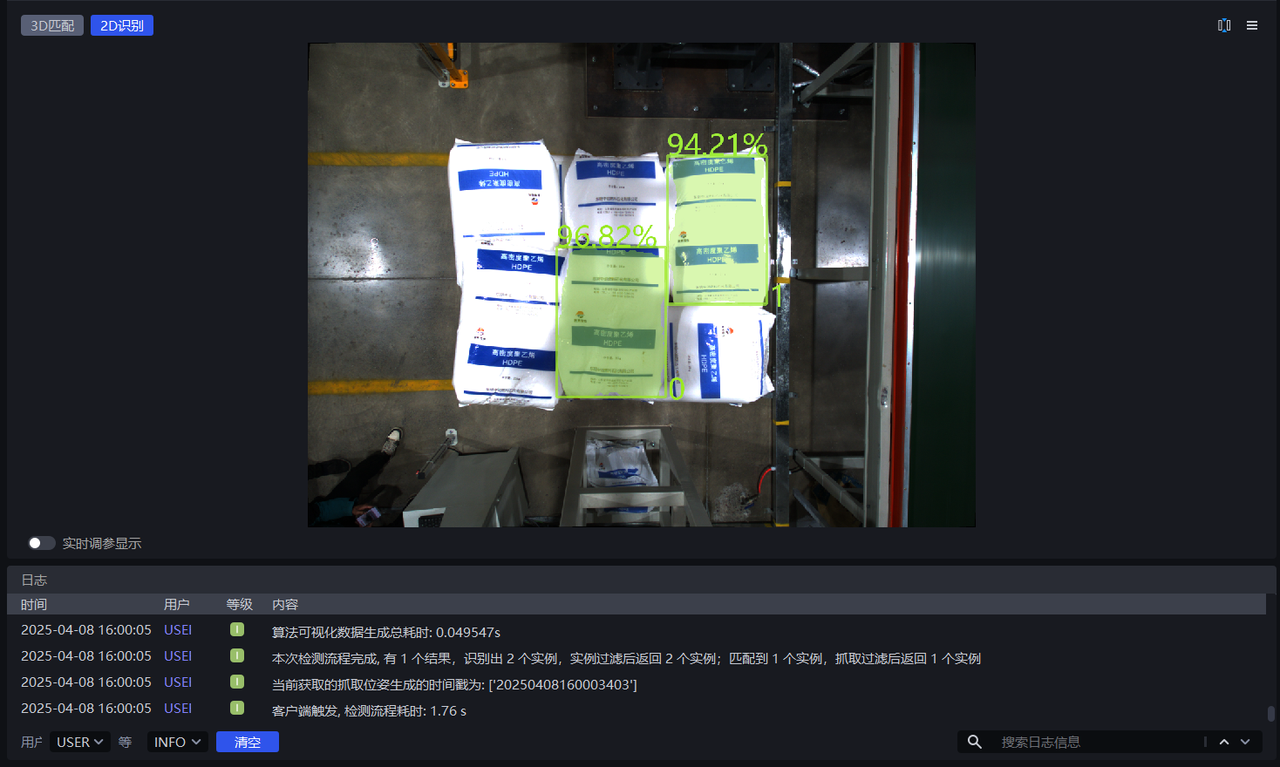

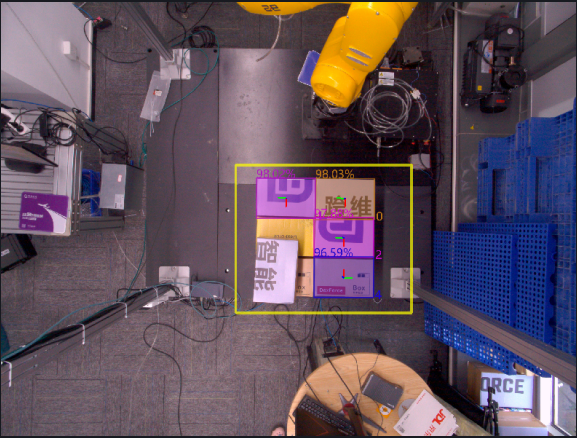

When Confidence Lower Threshold is set to 0.5, the retained instance detection results are shown in the left figure. When Confidence Lower Threshold is set to 0.8, the retained instance detection results are shown in the right figure: the instances with scores of 77.60%, 74.61%, and 69.77% (lower than 80%) are filtered out.
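
The thresholding in this example can be reproduced with a short sketch. The three lower scores come from the example above; the two higher scores and the `filter_by_confidence` helper are hypothetical and only serve the illustration.

```python
def filter_by_confidence(instances, lower_threshold=0.5):
    """Keep instances whose confidence is higher than the lower threshold."""
    return [inst for inst in instances if inst["confidence"] > lower_threshold]


instances = [
    {"id": 0, "confidence": 0.9512},  # hypothetical score
    {"id": 1, "confidence": 0.8830},  # hypothetical score
    {"id": 2, "confidence": 0.7760},
    {"id": 3, "confidence": 0.7461},
    {"id": 4, "confidence": 0.6977},
]

kept_at_05 = filter_by_confidence(instances, lower_threshold=0.5)
kept_at_08 = filter_by_confidence(instances, lower_threshold=0.8)
```

At 0.5 all five instances are retained; at 0.8 only the two hypothetical high-scoring instances remain.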

1.2.3 Auto Augment

- Function

Every combination of the entered scaling ratios and rotation angles is used for inference, and all results whose confidence is greater than the Confidence Lower Threshold are returned. This can improve model inference accuracy but increases processing time.

- Use Case

A single scaling ratio cannot meet the requirements of the actual scene, causing incomplete detection, or the object placement tilt is relatively large.

- Example

If Auto Augment - Scaling Ratio is set to [0.8, 0.9, 1.0] and Auto Augment - Rotation Angle is set to [0, 90.0], every scaling ratio is paired with every rotation angle. The model internally generates 6 images (3 scaling ratios × 2 rotation angles) for inference, merges the 6 inference results, and outputs the results greater than the lower confidence threshold.
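
The pairing of scaling ratios and rotation angles is a Cartesian product, which can be sketched as follows. The variable names are illustrative; how the software merges overlapping detections from the individual passes is not described in this guide and is not shown.

```python
from itertools import product

scaling_ratios = [0.8, 0.9, 1.0]
rotation_angles = [0, 90.0]

# One inference pass per (scaling ratio, rotation angle) pair
combinations = list(product(scaling_ratios, rotation_angles))
inference_passes = len(combinations)
```

Because the number of passes is the product of the two list lengths, each added scaling ratio or rotation angle increases the processing time accordingly.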

Auto Augment - Scaling Ratio

- Function

Scale the original image multiple times and perform inference multiple times, then output the combined inference result

- Use Case

Incomplete detection because a single scaling ratio cannot satisfy actual scene requirements

- Parameter Description

Default Value: [1.0]

Range: the range of each scaling ratio is [0.1, 3.0]

Multiple scaling ratios can be set, separated by half-width (English) commas.

- Parameter Tuning

Fill in multiple scaling ratios from 1.2.1 Scaling Ratio that produce good detection results

Auto Augment - Rotation Angle

- Function

Rotate the original image multiple times and perform inference multiple times, then output the combined inference result

- Use Case

Used when the object placement deviates significantly from the coordinate axes

- Parameter Description

Default Value: [0.0]

Range: the range of each rotation angle is [0, 360]

Multiple rotation angles can be set, separated by half-width (English) commas.

- Parameter Tuning

Adjust Auto Augment - Rotation Angle according to the object angle in the actual scene. The tilt angle can be judged from the sack pattern and bag opening shape, or from the carton edges and brand logo.

1.3 Point Cloud Generation

In depalletizing scenarios, instance point clouds are generally generated using Mask (Segmented) or Mask (Filtered).

| Instance Point Cloud Generation Mode | Sub-parameter | Description |

|---|---|---|

| Mask (Segmented) | / | Use the segmented instance mask to generate the point cloud |

| Bounding Box (Segmented) | Bounding Box Scaling Ratio (Segmented) | Use the segmented instance bounding box to generate the point cloud |

| Bounding Box (Segmented) | Whether Color Is Required When Generating the Point Cloud (Segmented) | Whether the generated instance point cloud needs attached color |

| Mask (Filtered) | / | Use the filtered instance mask to generate the point cloud |

| Bounding Box (Filtered) | Bounding Box Scaling Ratio (Filtered) | Use the filtered instance bounding box to generate the point cloud |

| Bounding Box (Filtered) | Whether Color Is Required When Generating the Point Cloud (Filtered) | Whether the generated instance point cloud needs attached color |

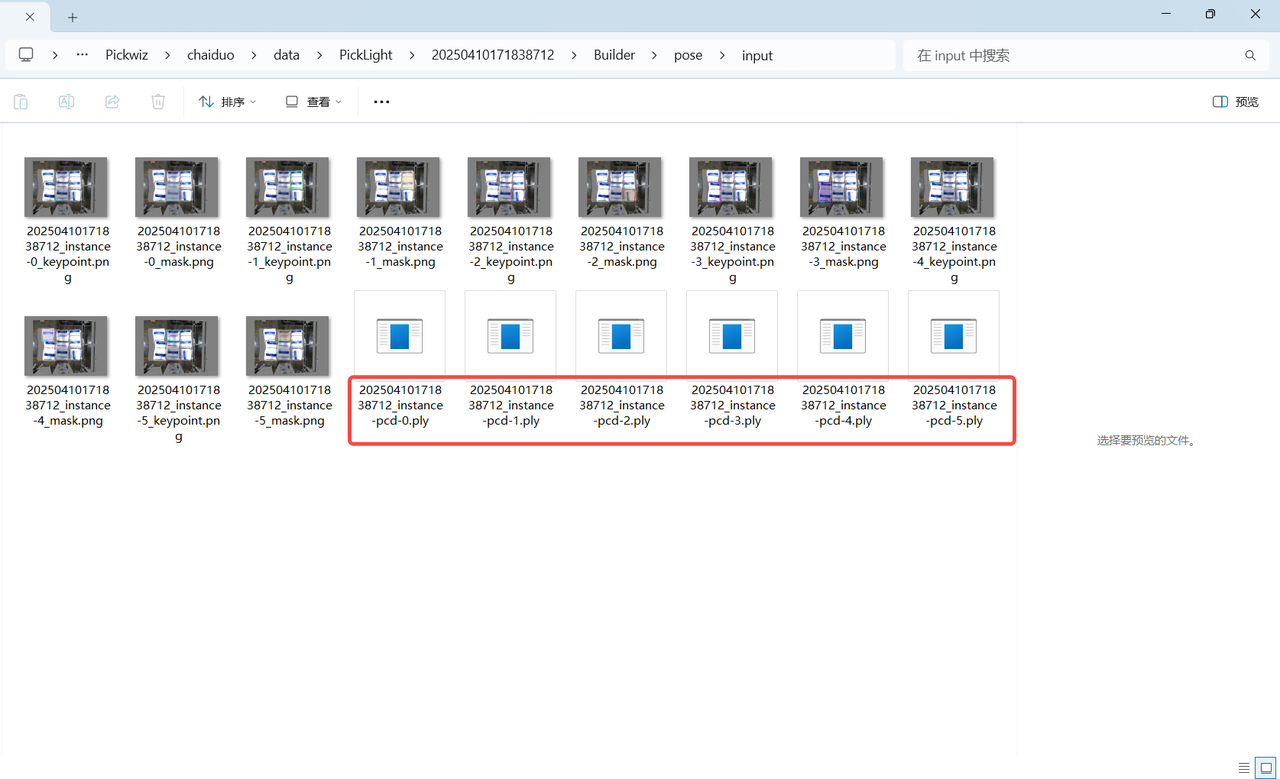

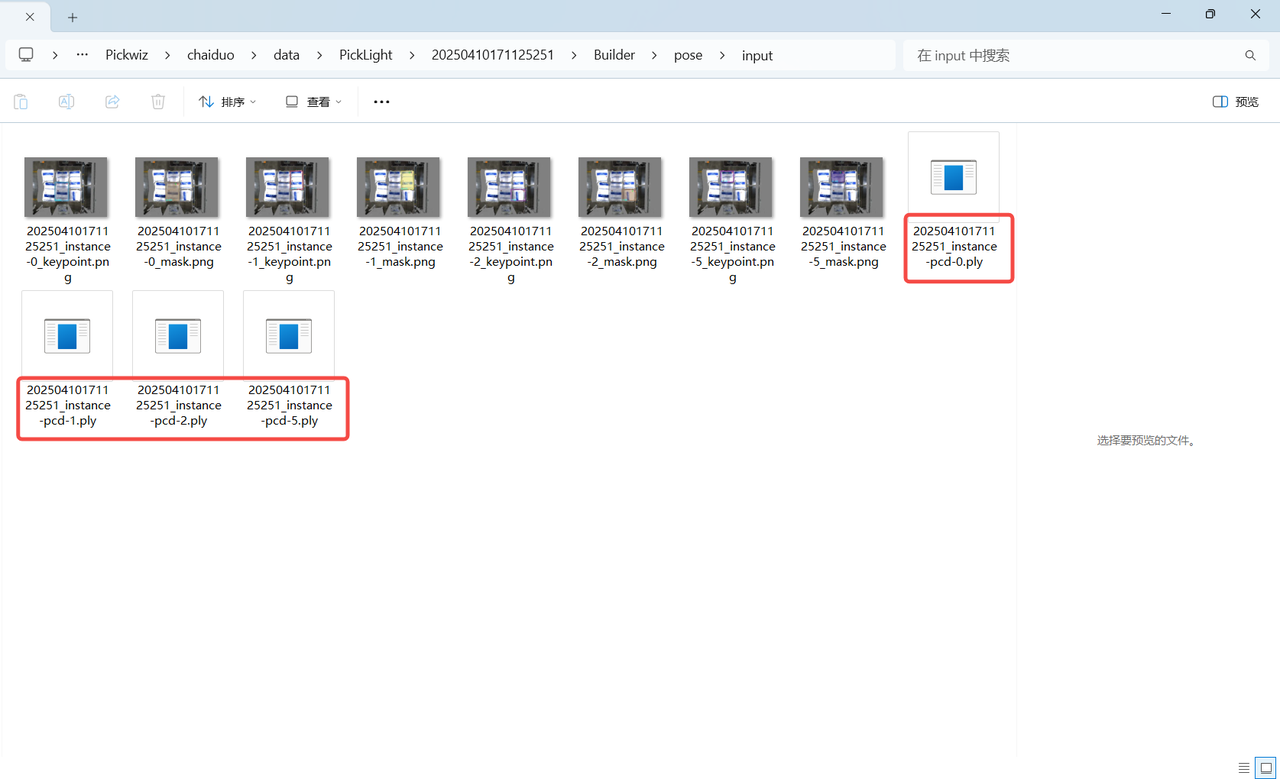

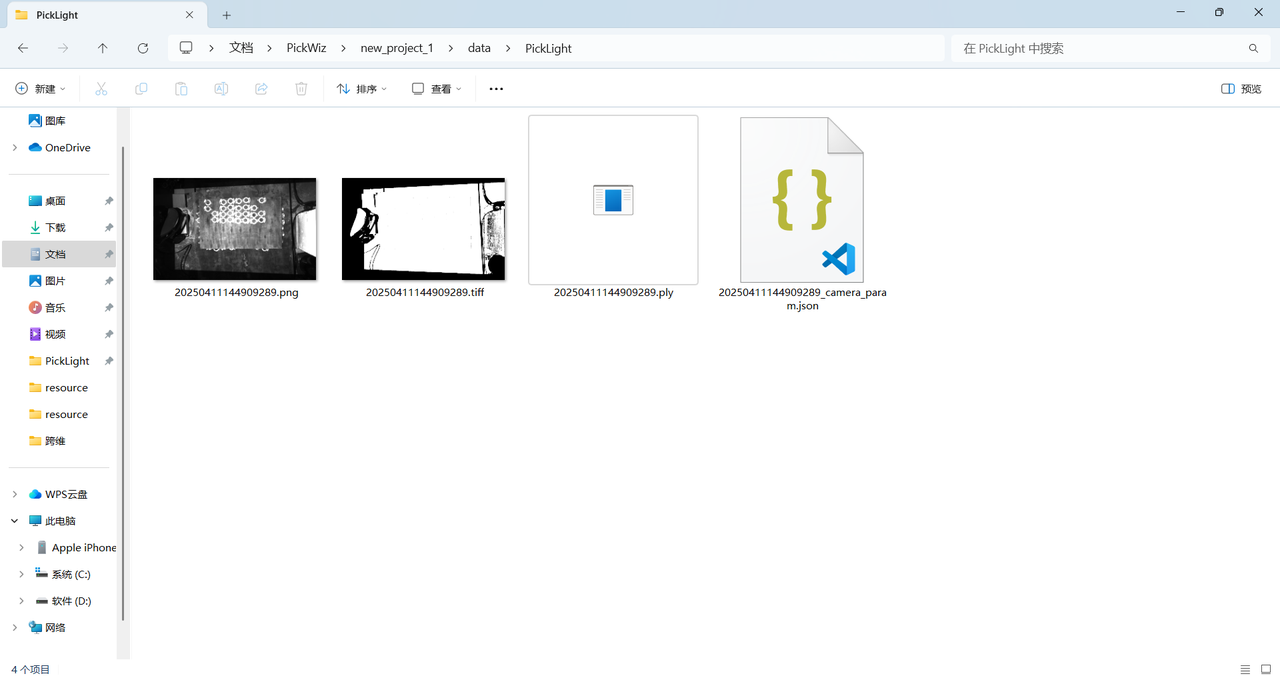

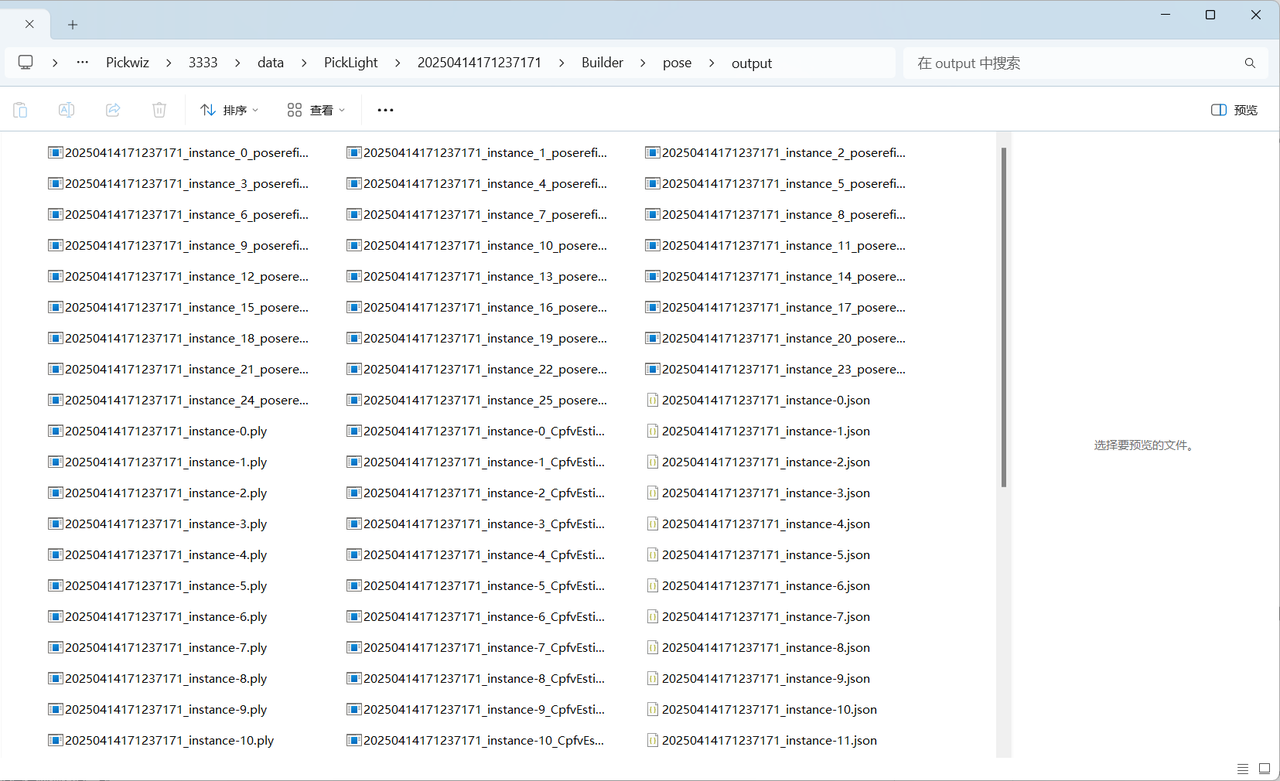

If acceleration is not required, there is no need to use the Instance Filtering function. Use Mask (Segmented) to generate the instance point cloud. The generated instance point cloud can be viewed in the \Project Name\data\PickLight\Historical Data Timestamp\Builder\pose\input folder under the project storage folder.

If acceleration is required, use the Instance Filtering function to filter instances and use Mask (Filtered) to generate the instance point cloud. The generated instance point cloud can be viewed in the same \Project Name\data\PickLight\Historical Data Timestamp\Builder\pose\input folder.
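
As background for the mask-based modes, the sketch below shows one common way to turn a mask and a depth map into an instance point cloud: pinhole back-projection. It is an assumption that the software works this way; the function name and the intrinsic values (`fx`, `fy`, `cx`, `cy`) are hypothetical.

```python
def mask_to_point_cloud(depth, mask, fx, fy, cx, cy):
    """Back-project masked depth pixels into camera-frame 3D points.

    depth: 2D list of Z values (output uses the same unit); 0 means no data.
    mask: 2D list of 0/1 flags for one instance.
    fx, fy, cx, cy: pinhole intrinsics (hypothetical values in the usage below).
    """
    points = []
    for v, (depth_row, mask_row) in enumerate(zip(depth, mask)):
        for u, (z, inside) in enumerate(zip(depth_row, mask_row)):
            if inside and z > 0:
                points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


depth = [[0, 1000],
         [1000, 1000]]
mask = [[1, 1],
        [0, 1]]
points = mask_to_point_cloud(depth, mask, fx=1000, fy=1000, cx=0.5, cy=0.5)
```

Only pixels that are inside the mask and have valid depth contribute points, so a tighter mask (for example after instance filtering) yields a smaller instance point cloud.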

1.4 Instance Filtering

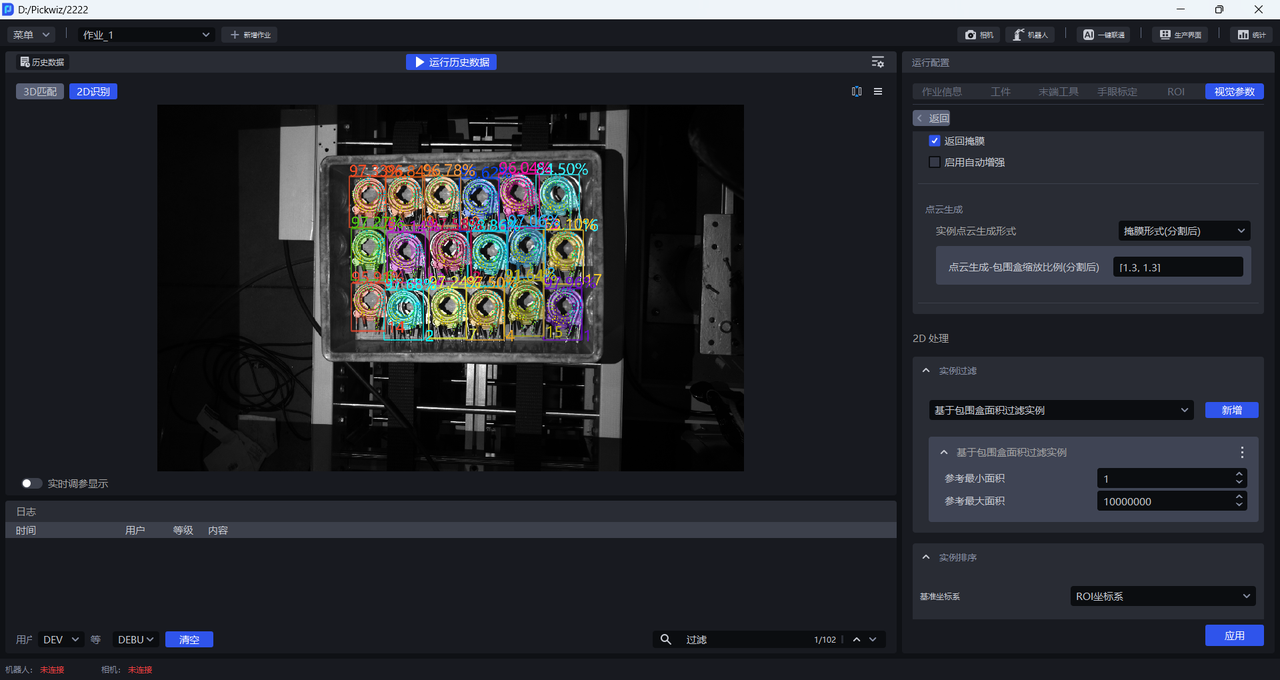

1.4.1 Filter by BBox Area

- Function Introduction

Filter according to the pixel area of the bounding box of the detected instance.

- Use Case

Applicable to scenarios where instance bounding box areas differ greatly. By setting upper and lower limits for the bounding box area, image noise can be filtered out to improve image recognition accuracy and avoid extra processing time caused by noise in subsequent processing.

- Parameter Description

| Parameter | Description | Default Value | Range | Unit |

|---|---|---|---|---|

| Minimum Area (Pixels) | This parameter sets the minimum filtering area for the bounding box. Instances whose bounding box area is lower than this value are filtered out. | 1 | [1, 10000000] | pixels |

| Maximum Area (Pixels) | This parameter sets the maximum filtering area for the bounding box. Instances whose bounding box area is higher than this value are filtered out. | 10000000 | [2, 10000000] | pixels |

- Example

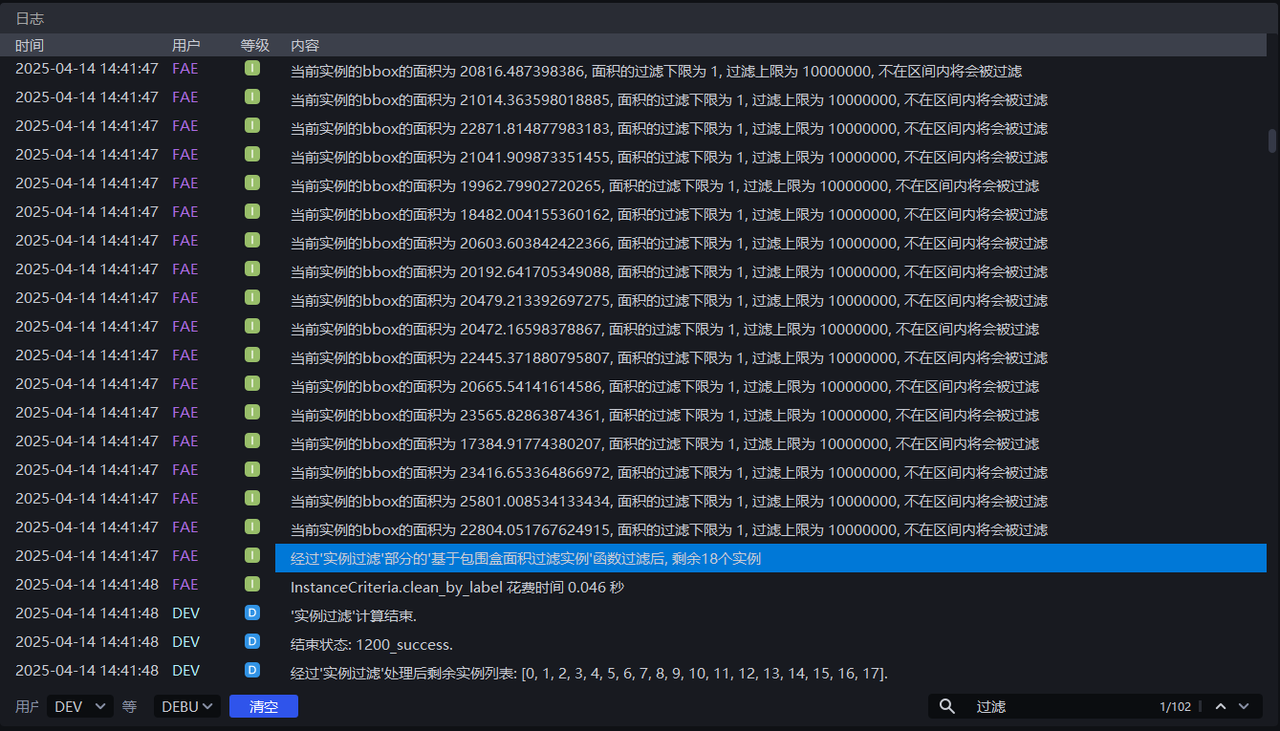

Run with the default values. You can view the bounding box area of each instance in the log, as shown below.

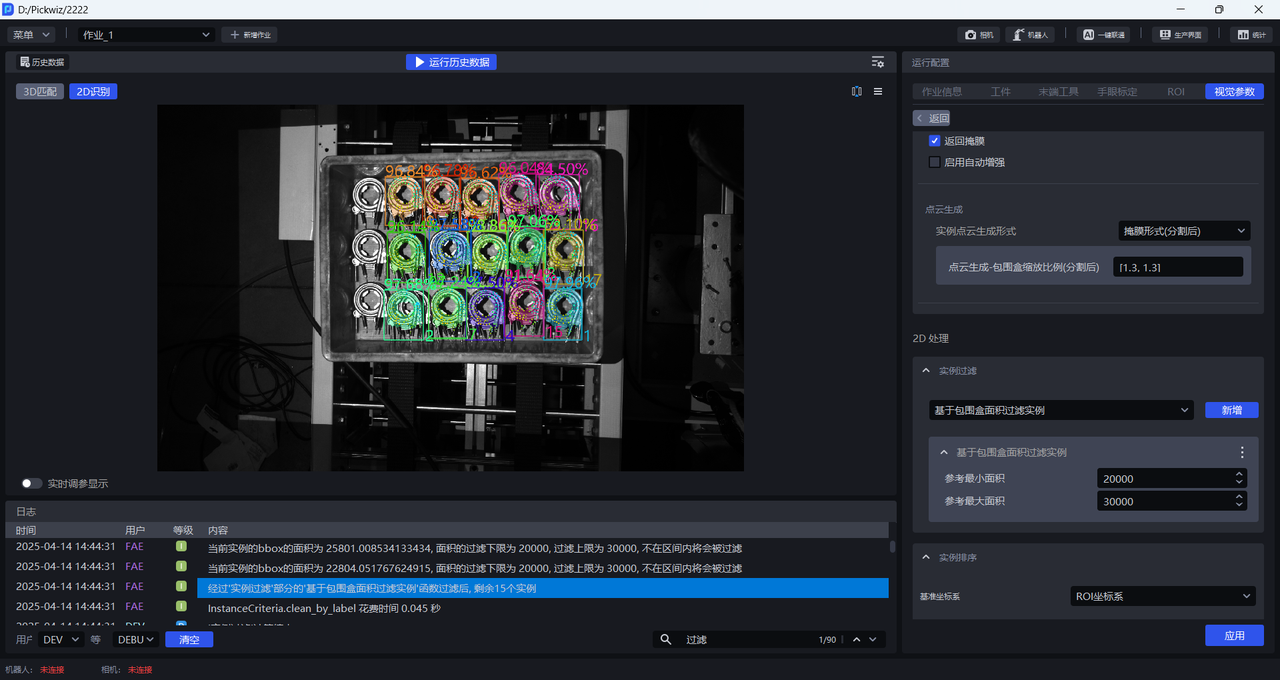

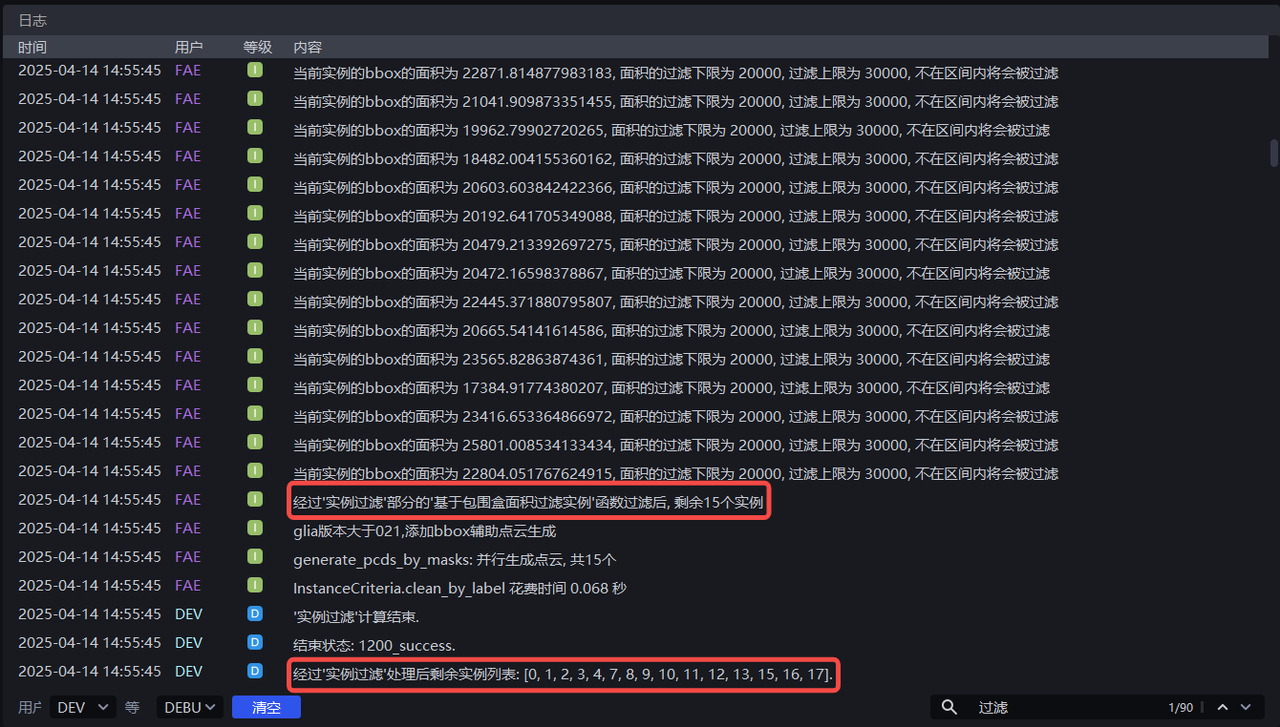

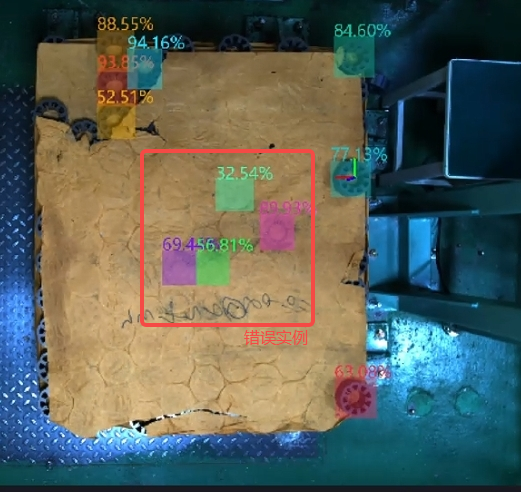

Adjust Minimum Area and Maximum Area according to the bounding box area of each instance. For example, setting Minimum Area to 20000 and Maximum Area to 30000 will filter out instances whose pixel area is less than 20000 or greater than 30000. The instance filtering process can be viewed in the log.
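
The area check in this example can be sketched as follows. The bounding boxes are hypothetical and given as (x_min, y_min, x_max, y_max) in pixels; treating the limits as inclusive is an assumption.

```python
def bbox_area(bbox):
    """Pixel area of a bounding box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = bbox
    return (x_max - x_min) * (y_max - y_min)


def filter_by_bbox_area(bboxes, min_area=1, max_area=10_000_000):
    """Keep boxes whose area lies within [min_area, max_area]."""
    return [b for b in bboxes if min_area <= bbox_area(b) <= max_area]


bboxes = [
    (0, 0, 100, 100),   # area 10000: below the minimum
    (0, 0, 150, 160),   # area 24000: kept
    (0, 0, 200, 200),   # area 40000: above the maximum
]
kept = filter_by_bbox_area(bboxes, min_area=20000, max_area=30000)
```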

1.4.2 Filter Based on Bounding Box Aspect Ratio

- Function Introduction

Instances whose bounding box aspect ratios are outside the specified range are filtered out

- Use Case

Applicable to scenarios where instance bounding box aspect ratios differ greatly

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Minimum Aspect Ratio | Minimum bounding box aspect ratio. Instances whose bounding box aspect ratio is lower than this value are filtered out. | 0 | [0, 10000000] |

| Maximum Aspect Ratio | Maximum bounding box aspect ratio. Instances whose bounding box aspect ratio is higher than this value are filtered out. | 10000000 | [0, 10000000] |

| Use X/Y Axis Side Length as the Aspect Ratio | By default, this option is cleared, and the ratio of the longer side to the shorter side of the bounding box is used as the aspect ratio, which is suitable when the lengths of the longer and shorter sides differ greatly. After selection, the ratio of the side length on the X-axis to the side length on the Y-axis in the pixel coordinate system is used as the aspect ratio, which is suitable when the ratios of the longer side to the shorter side of most normal instance bounding boxes are similar, but some abnormal recognized instance bounding boxes differ greatly in their X-axis length / Y-axis length ratio. | Cleared | / |
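
The two aspect ratio definitions behave differently for boxes that are rotated by 90 degrees, as this sketch shows. The helper names and box sizes are hypothetical; box sizes are given as (X-axis length, Y-axis length) in pixels.

```python
def bbox_aspect_ratio(width_x, height_y, use_xy_axes=False):
    """Aspect ratio as long/short side (default) or X length / Y length."""
    if use_xy_axes:
        return width_x / height_y
    return max(width_x, height_y) / min(width_x, height_y)


def filter_by_aspect_ratio(sizes, min_ratio=0, max_ratio=10_000_000,
                           use_xy_axes=False):
    """Keep box sizes whose aspect ratio lies within [min_ratio, max_ratio]."""
    return [
        (w, h) for w, h in sizes
        if min_ratio <= bbox_aspect_ratio(w, h, use_xy_axes) <= max_ratio
    ]


sizes = [(200, 50), (50, 200), (100, 90)]
kept_long_short = filter_by_aspect_ratio(sizes, min_ratio=3, max_ratio=5)
kept_xy = filter_by_aspect_ratio(sizes, min_ratio=3, max_ratio=5, use_xy_axes=True)
```

In the default mode both elongated boxes have a ratio of 4 and are kept; with the X/Y option only the box that is elongated along the X-axis passes, because the other one has a ratio of 0.25.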

1.4.3 Filter by Category ID

- Function Introduction

Filter according to the instance category

- Use Case

Applicable to scenarios where multiple types of Target Objects are supplied

- Parameter Description

| Parameter | Description | Default Value |

|---|---|---|

| Retained Category IDs | Retain instances whose category IDs are in the list. Instances whose category IDs are not in the list are filtered out. | [0] |

- Example

1.4.4 Filter Instance Edge

- Function Introduction

Filter according to the long side and short side of the instance point cloud

- Use Case

Applicable to scenarios where the distances of the instance point cloud on the X-axis or Y-axis differ greatly. By setting the distance range of the instance point cloud, image noise can be filtered out, image recognition accuracy can be improved, and extra processing time caused by noise in subsequent processing can be avoided.

- Parameter Description

| Parameter | Description | Default Value | Range | Unit |

|---|---|---|---|---|

| Short Side Length Range (mm) | Side length range of the short side of the point cloud | [0, 10000] | [0, 10000] | mm |

| Long Side Length Range (mm) | Side length range of the long side of the point cloud | [0, 10000] | [0, 10000] | mm |

| Lower Edge Denoising Limit (%) | Extract the lower percentage limit of X/Y values (camera coordinate system) in the instance point cloud, and remove point clouds outside the upper and lower limits to avoid noise affecting length calculation | 5 | [0, 100] | / |

| Upper Edge Denoising Limit (%) | Extract the upper percentage limit of X/Y values (camera coordinate system) in the instance point cloud, and remove point clouds outside the upper and lower limits to avoid noise affecting length calculation | 95 | [0, 100] | / |

| Side Length Type | Filter according to the long side and short side of the instance point cloud. Instances whose long side or short side lengths are outside the range are filtered out. | Instance Point Cloud Short Side | Instance Point Cloud Short Side; Instance Point Cloud Long Side; Instance Point Cloud Long Side and Short Side | / |
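
The interaction between the edge denoising limits and the side length calculation can be sketched as below. The sketch assumes nearest-rank percentiles and side lengths measured along the camera X and Y axes; the software's exact method is not documented here, and both helpers are hypothetical.

```python
def trimmed_extent(values, lower_pct=5, upper_pct=95):
    """Extent of the values between the lower and upper percentile limits.

    Assumption: nearest-rank percentiles on the sorted values.
    """
    ordered = sorted(values)
    last = len(ordered) - 1
    low = ordered[round(last * lower_pct / 100)]
    high = ordered[round(last * upper_pct / 100)]
    return high - low


def side_lengths(points_xy, lower_pct=5, upper_pct=95):
    """Return (short side, long side) of an instance point cloud in mm."""
    xs = [p[0] for p in points_xy]
    ys = [p[1] for p in points_xy]
    extents = (trimmed_extent(xs, lower_pct, upper_pct),
               trimmed_extent(ys, lower_pct, upper_pct))
    return min(extents), max(extents)


# 100 regular points plus one far-away noise point
points = [(i, i / 2) for i in range(100)] + [(1000.0, 500.0)]
short_side, long_side = side_lengths(points)
```

Without the percentile limits the single noise point would stretch the measured long side to 1000 mm; with the 5 % and 95 % limits it has no effect on the result.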

- Example

1.4.5 Filter Based on Classifier Category ID

- Function Introduction

Filter instances based on the category ID from the classifier. Instances not in the reference categories are filtered out.

- Use Case

In multi-category Target Object scenarios, the vision model may detect multiple types of Target Objects, but the actual task may require only one specific category. In this case, this function can be used to filter out unnecessary Target Objects.

- Parameter Description

The default value is [0], which means that instances with category ID 0 are retained by default. Instances whose category IDs are not in the list are filtered out.

1.4.6 Filter by Color Range

- Function Introduction

Instances can be filtered out by three-channel color thresholds (HSV or RGB).

- Use Case

Cases where incorrect instances and correct instances have obvious color differences.

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Maximum Color Range Value | Maximum color value | [180,255,255] | [[0,0,0],[255,255,255]] |

| Minimum Color Range Value | Minimum color value | [0,0,0] | [[0,0,0],[255,255,255]] |

| Filtering Percentage Threshold | Color pass-rate threshold | 0.05 | [0,1] |

| Reverse Filtering | If selected, instances whose proportion outside the color range is lower than the threshold are removed. If cleared, instances whose proportion inside the color range in the instance image is lower than the threshold are removed. | Cleared | / |

| Color Mode | Color space selected for color filtering | HSV Color Space | RGB Color Space; HSV Color Space |
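
The relationship between the color range, the Filtering Percentage Threshold, and Reverse Filtering can be sketched as follows. The helper names and pixel values are hypothetical, and treating a proportion equal to the threshold as passing is an assumption.

```python
def in_range_fraction(pixels, lower, upper):
    """Fraction of pixels whose three channels all lie within [lower, upper]."""
    inside = sum(
        all(lo <= c <= hi for c, lo, hi in zip(pixel, lower, upper))
        for pixel in pixels
    )
    return inside / len(pixels)


def keep_instance(pixels, lower=(0, 0, 0), upper=(180, 255, 255),
                  threshold=0.05, reverse=False):
    """Apply the pass-rate rule; reverse checks the out-of-range proportion."""
    fraction_inside = in_range_fraction(pixels, lower, upper)
    fraction = 1.0 - fraction_inside if reverse else fraction_inside
    return fraction >= threshold


# One saturated pixel and nine gray pixels (HSV triples)
pixels = [(5, 200, 200)] + [(0, 0, 128)] * 9
lower, upper = (0, 100, 100), (10, 255, 255)

kept_default = keep_instance(pixels, lower, upper, threshold=0.05)
kept_strict = keep_instance(pixels, lower, upper, threshold=0.2)
kept_reverse = keep_instance(pixels, lower, upper, threshold=0.05, reverse=True)
```

Here 10 % of the pixels fall inside the range, so the instance passes a threshold of 0.05 but is removed at 0.2.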

- Example

1.4.7 Filter by Confidence

- Function Introduction

Filter according to the confidence score of the instance

- Use Case

Applicable to scenarios where confidence scores of instances differ greatly

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Reference Confidence | Retain instances whose confidence is greater than the threshold, and filter out instances whose confidence is less than the threshold. | 0.5 | [0,1] |

| Reverse Filtering Result | After reversal, retain instances whose confidence is less than the threshold, and filter out instances whose confidence is greater than the threshold. | Cleared | / |

- Example

1.4.8 Filter by Instance PCD Quantity

- Function Introduction

Filter according to the number of points in the downsampled instance point cloud

- Use Case

The instance point cloud contains a large amount of noise

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Minimum Point Cloud Quantity | Minimum point cloud quantity | 3500 | [1, 10000000] |

| Maximum Point Cloud Quantity | Maximum point cloud quantity | 8500 | [2, 10000000] |

| Filter Instances Whose Quantity Falls Within the Interval | If selected, instances whose point cloud quantity is between the minimum and maximum values are filtered out. If cleared, instances whose point cloud quantity is not within the interval are filtered out. | Cleared | / |
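
The interval logic, including the option that inverts it, can be sketched as follows. The point counts and the helper name are hypothetical, and treating the interval as inclusive is an assumption.

```python
def filter_by_point_count(counts, min_count=3500, max_count=8500,
                          filter_inside=False):
    """Return the point counts of the instances that are retained.

    filter_inside=False removes instances outside [min_count, max_count];
    filter_inside=True removes the instances inside the interval instead.
    """
    kept = []
    for count in counts:
        inside = min_count <= count <= max_count
        if inside != filter_inside:
            kept.append(count)
    return kept


counts = [1200, 4000, 8000, 12000]
kept_default = filter_by_point_count(counts)
kept_inverted = filter_by_point_count(counts, filter_inside=True)
```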

1.4.9 Filter by Mask Area

- Function Introduction

Filter image masks according to the sum of mask pixels (that is, the pixel area) of the detected instances.

- Use Case

Applicable to scenarios where instance mask areas differ greatly. By setting upper and lower limits for mask area, noise in image masks can be filtered out to improve image recognition accuracy and avoid extra processing time caused by noise in subsequent processing.

- Parameter Setting Description

| Parameter Name | Description | Default Value | Range | Unit |

|---|---|---|---|---|

| Reference Minimum Area | This parameter sets the minimum filtering area for the mask. Instances whose mask area is lower than this value are filtered out. | 1 | [1, 10000000] | pixels |

| Reference Maximum Area | This parameter sets the maximum filtering area for the mask. Instances whose mask area is higher than this value are filtered out. | 10000000 | [2, 10000000] | pixels |

- Example

1.4.10 Filter Based on Visibility

- Function Introduction

Filter according to the visibility score of the instance

- Use Case

Applicable to scenarios where instance visibility differs greatly

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Reference Visibility Threshold | Retain instances whose visibility is greater than the threshold, and filter out instances whose visibility is less than the threshold. Visibility is used to determine how visible an instance is in the image. The more the Target Object is occluded, the lower the visibility. | 0.5 | [0,1] |

| Reverse Filtering Result | After reversal, retain instances whose visibility is less than the threshold, and filter out instances whose visibility is greater than the threshold. | Cleared | / |
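The threshold and reversal behavior described in the table (and in the confidence filter, which uses the same two parameters) can be sketched as below. The function name `filter_by_threshold` is hypothetical, and how a score exactly equal to the threshold is treated is not stated in this guide; the sketch drops it in both modes as an assumption.

```python
def filter_by_threshold(scores, threshold=0.5, reverse=False):
    """Return the indices of the instances that pass the threshold filter."""
    kept = []
    for index, score in enumerate(scores):
        # Normal mode keeps scores above the threshold;
        # reversed mode keeps scores below it.
        passed = score < threshold if reverse else score > threshold
        if passed:
            kept.append(index)
    return kept


visibility = [0.2, 0.5, 0.9]
print(filter_by_threshold(visibility))                # [2]
print(filter_by_threshold(visibility, reverse=True))  # [0]
```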

1.4.11 Remove Overlapping Instances

- Function Introduction

Filter instances whose bounding boxes intersect and overlap

- Use Case

Applicable to scenarios where instance bounding boxes intersect each other

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Bounding Box Overlap Ratio Threshold | Threshold for the ratio of the intersecting area of bounding boxes to the area of the instance bounding box | 0.05 | [0, 1] |

| Filter the Instance with the Larger Bounding Box Area | If selected, the instance with the larger area among two intersecting bounding boxes is filtered out. If cleared, the instance with the smaller area among two intersecting bounding boxes is filtered out. | Selected | / |

- Example

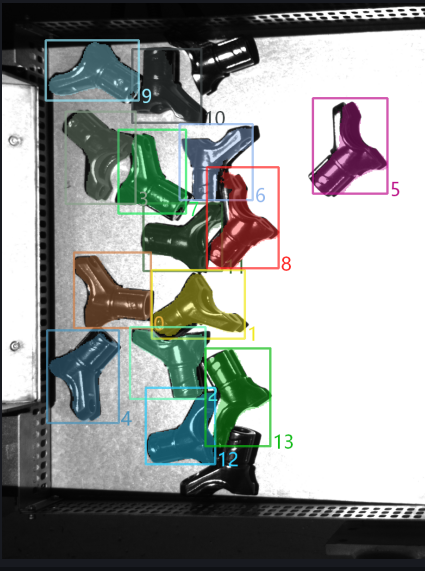

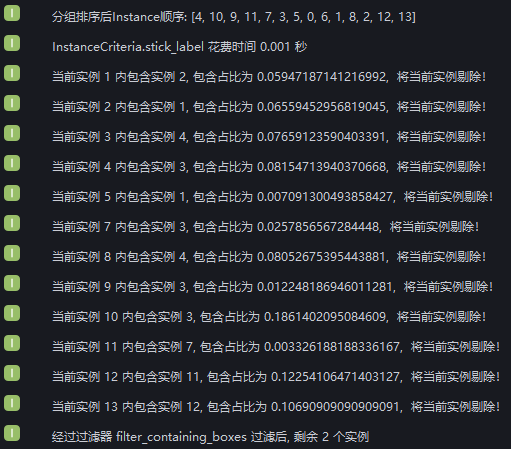

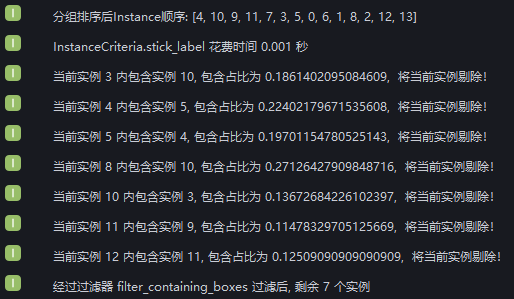

Add this function and run it with the default values. The log shows the bounding box intersections between instances. After instance filtering, 2 instances remain.

According to the log, 12 instances are filtered out because their bounding boxes intersect, leaving 2 instances whose bounding boxes do not intersect.

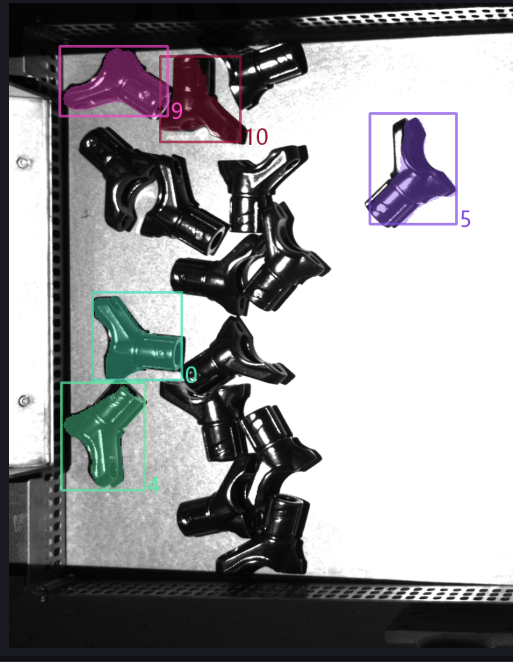

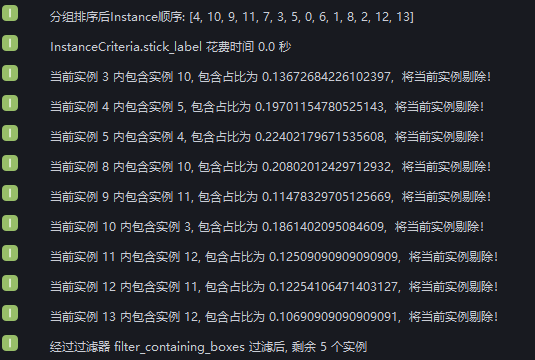

Set Bounding Box Overlap Ratio Threshold to 0.1 and select Filter the Instance with the Larger Bounding Box Area. View the instance filtering process in the log. Nine instances are filtered out because the ratio of the bounding box intersection area to the instance bounding box area is greater than 0.1. Three instances are retained because this ratio is less than 0.1. Two instances have non-intersecting bounding boxes.

Set Bounding Box Overlap Ratio Threshold to 0.1 and clear Filter the Instance with the Larger Bounding Box Area. View the instance filtering process in the log. For 9 instances, the ratio of the bounding box intersection area to the instance bounding box area is greater than 0.1, but 2 of them are retained because their bounding box areas are smaller than those of the instances they intersect. Therefore, 7 instances are filtered out. Three instances are retained because this ratio is less than 0.1. Two instances have non-intersecting bounding boxes.
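The overlap ratio used above (intersection area divided by the area of the instance's own bounding box) can be sketched as follows. The function names are hypothetical and boxes are assumed to be axis-aligned `(x_min, y_min, x_max, y_max)` tuples in pixels. The sketch only flags instances whose ratio exceeds the threshold; the larger/smaller selection rule is deliberately not modeled.

```python
def overlap_ratio(box, other):
    """Intersection area of two boxes divided by the area of `box`."""
    inter_w = min(box[2], other[2]) - max(box[0], other[0])
    inter_h = min(box[3], other[3]) - max(box[1], other[1])
    if inter_w <= 0 or inter_h <= 0:
        return 0.0  # the boxes do not intersect
    area = (box[2] - box[0]) * (box[3] - box[1])
    return (inter_w * inter_h) / area if area > 0 else 0.0


def instances_over_threshold(boxes, threshold=0.05):
    """Indices of instances whose largest overlap ratio exceeds the threshold."""
    flagged = []
    for i, box in enumerate(boxes):
        ratios = [overlap_ratio(box, other) for j, other in enumerate(boxes) if j != i]
        if ratios and max(ratios) > threshold:
            flagged.append(i)
    return flagged


boxes = [(0, 0, 10, 10), (5, 5, 9, 9), (20, 20, 30, 30)]
print(instances_over_threshold(boxes))                 # [0, 1]
print(instances_over_threshold(boxes, threshold=0.5))  # [1]
```

Because the ratio is taken relative to each instance's own box, the small box nested in the large one has a ratio of 1.0, while the large box has a ratio of only 0.16.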

1.4.12 [Master] Filter Instances with Concave/Convex Masks Based on Mask / Mask Bounding Polygon Area Ratio

- Function Introduction

Calculate the area ratio of the mask to the circumscribed polygon of the mask. If it is smaller than the set threshold, the instance is filtered out.

- Use Case

Applicable when the Target Object mask has jagged or concave/convex shapes.

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Area Ratio Threshold | Mask / convex hull area ratio threshold. If it is less than the set threshold, the instance is filtered out. | 0.1 | [0,1] |
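The mask / convex hull area ratio can be illustrated with a small pure-Python sketch. It is a stand-in for whatever image library the software actually uses: the mask is assumed to be a nested list of 0/1 values, the hull is built over pixel corners with the monotone chain algorithm, and all function names are hypothetical.

```python
def _cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain; returns the hull vertices in order."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and _cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and _cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(polygon):
    """Shoelace formula."""
    total = 0
    for i in range(len(polygon)):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % len(polygon)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def mask_hull_ratio(mask):
    """Mask pixel count divided by the area of the mask's convex hull."""
    corners, pixel_count = [], 0
    for r, row in enumerate(mask):
        for c, value in enumerate(row):
            if value:
                pixel_count += 1
                # Use the four pixel corners so a filled rectangle gives ratio 1.
                corners += [(c, r), (c + 1, r), (c, r + 1), (c + 1, r + 1)]
    hull_area = polygon_area(convex_hull(corners))
    return pixel_count / hull_area if hull_area > 0 else 0.0


l_shape = [[1, 0, 0],
           [1, 0, 0],
           [1, 1, 1]]
print(round(mask_hull_ratio(l_shape), 3))  # 0.714
```

A compact, convex mask gives a ratio close to 1, while jagged or concave masks give lower values; an instance is filtered out when its ratio falls below Area Ratio Threshold.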

1.4.13 [Master] Filter Based on Point Cloud Average Distance

- Function Introduction

Filter based on the average distance from points in the point cloud to the fitted plane, removing uneven instance point clouds

- Use Case

Applicable to scenarios where planar Target Object point clouds are bent

- Parameter Description

| Parameter | Description | Default Value | Range | Unit |

|---|---|---|---|---|

| Plane Segmentation Distance Threshold (mm) | Extract a plane from the bent instance point cloud. Points whose distance to the plane is less than this threshold are regarded as points on the plane. | 10 | [-1000, 1000] | mm |

| Average Distance Threshold (mm) | Average distance from points in the instance point cloud to the extracted plane | 20 | [-1000, 1000] | mm |

| Remove Instances Whose Average Distance Is Smaller Than the Threshold | If selected, instances whose average distance from points to the extracted plane is less than the average distance threshold are filtered out. If cleared, instances whose average distance from points to the extracted plane is greater than the average distance threshold are filtered out. | Cleared | / | / |
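The average-distance test can be sketched as below. The sketch assumes the plane has already been extracted from the instance point cloud (for example by a plane segmentation step that uses the Plane Segmentation Distance Threshold) and is given as coefficients `(a, b, c, d)` of `ax + by + cz + d = 0`. The function names, and keeping instances whose average distance equals the threshold, are assumptions.

```python
import math


def mean_distance_to_plane(points, plane):
    """Mean absolute point-to-plane distance, in the units of the points (mm)."""
    a, b, c, d = plane
    norm = math.sqrt(a * a + b * b + c * c)
    distances = [abs(a * x + b * y + c * z + d) / norm for x, y, z in points]
    return sum(distances) / len(distances)


def keep_instance(points, plane, avg_threshold=20.0, remove_smaller=False):
    """Apply the Average Distance Threshold to one instance."""
    mean_distance = mean_distance_to_plane(points, plane)
    if remove_smaller:
        # Option selected: instances flatter than the threshold are filtered out.
        return mean_distance >= avg_threshold
    # Option cleared: instances more uneven than the threshold are filtered out.
    return mean_distance <= avg_threshold


plane_z0 = (0.0, 0.0, 1.0, 0.0)  # the plane z = 0
bent = [(0, 0, 0), (1, 0, 10), (0, 1, -20)]
print(mean_distance_to_plane(bent, plane_z0))  # 10.0
print(keep_instance(bent, plane_z0))           # True
```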

1.4.14 [Master] Filter Occluded Instances Based on Mask / Bounding Box Area Ratio

- Function Introduction

Calculate the area ratio of the mask to the bounding box. Instances whose ratios are outside the minimum and maximum range are filtered out.

- Use Case

Used to filter instances of occluded Target Objects

- Parameter Description

| Parameter | Description | Default Value | Range |

|---|---|---|---|

| Minimum Area Ratio | Lower limit of the mask / bounding box area ratio range. The smaller the ratio, the more severely the instance is occluded. | 0.1 | [0,1] |

| Maximum Area Ratio | Upper limit of the mask / bounding box area ratio range. The closer the ratio is to 1, the less the instance is occluded. | 1.0 | [0,1] |
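A minimal sketch of the mask / bounding box ratio check follows. The function names are hypothetical; the mask area is assumed to be a pixel count and the bounding box an axis-aligned `(x_min, y_min, x_max, y_max)` tuple in pixels.

```python
def mask_bbox_ratio(mask_area, bbox):
    """Mask pixel area divided by the bounding box area."""
    width = bbox[2] - bbox[0]
    height = bbox[3] - bbox[1]
    bbox_area = width * height
    return mask_area / bbox_area if bbox_area > 0 else 0.0


def keep_by_area_ratio(mask_area, bbox, min_ratio=0.1, max_ratio=1.0):
    """Keep the instance only if its ratio lies inside [min_ratio, max_ratio]."""
    return min_ratio <= mask_bbox_ratio(mask_area, bbox) <= max_ratio


print(mask_bbox_ratio(50, (0, 0, 10, 10)))                    # 0.5
print(keep_by_area_ratio(50, (0, 0, 10, 10)))                 # True
print(keep_by_area_ratio(50, (0, 0, 10, 10), min_ratio=0.6))  # False
```

Raising Minimum Area Ratio makes the filter stricter, because instances whose masks cover only a small part of their bounding box are the ones most likely to be occluded.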

1.4.15 [Master] Determine if Top-Layer Instances Are Fully Detected

- Function Introduction

A fail-safe mechanism that checks whether every instance on the topmost layer has been detected. If any top-layer instance has not been detected, an error is reported and the workflow ends.

- Use Case

Applicable to scenarios where one image is taken and multiple picks are performed, or where picking must be done in sequence, to prevent missed picks from affecting subsequent operations due to incomplete instance detection

- Parameter Description

| Parameter | Description | Default Value | Range | Unit | Parameter Tuning |

|---|---|---|---|---|---|

| Distance Threshold | Used to determine the topmost Target Object. If the distance between a point and the highest point of the Target Object point cloud is smaller than the distance threshold, the point is regarded as belonging to the topmost point cloud. Otherwise, it is regarded as not belonging to the topmost point cloud. | 5 | [0.1, 1000] | mm | It should be smaller than the height of the Target Object |
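The role of Distance Threshold can be sketched as follows. The top-layer selection follows the table. The completeness check is an assumption made for illustration, since this guide does not describe how the software decides that a top-layer point belongs to a detected instance. Heights are assumed to grow upward (in the camera coordinate system the sign would be flipped), and all names are hypothetical.

```python
def top_layer_indices(heights, distance_threshold=5.0):
    """Indices of points within `distance_threshold` (mm) of the highest point."""
    highest = max(heights)
    return [i for i, h in enumerate(heights) if highest - h < distance_threshold]


def top_layer_fully_detected(heights, instance_ids, distance_threshold=5.0):
    """True if every top-layer point is assigned to a detected instance.

    instance_ids[i] is the instance that point i belongs to, or None if the
    point was not covered by any detection (an assumption of this sketch).
    """
    top = top_layer_indices(heights, distance_threshold)
    return all(instance_ids[i] is not None for i in top)


heights = [100.0, 98.0, 97.0, 60.0, 58.0]
print(top_layer_indices(heights))                                   # [0, 1, 2]
print(top_layer_fully_detected(heights, [0, 0, None, 1, 1]))        # False
print(top_layer_fully_detected(heights, [0, 0, 1, None, None]))     # True
```

If the threshold were larger than the height of the Target Object, points from the layer below would also count as top-layer points, which is why the tuning note asks for a smaller value.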

1.5 Instance Sequence

- Function Introduction

Group, sort, and extract instances according to the selected strategy

- Use Case

Common to depalletizing, random picking, and ordered loading/unloading scenarios

If sorting is not required, there is no need to configure a specific strategy.

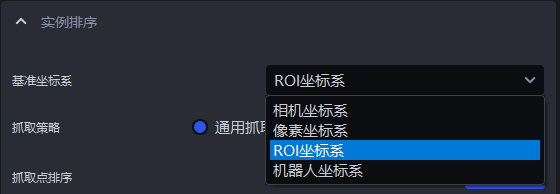

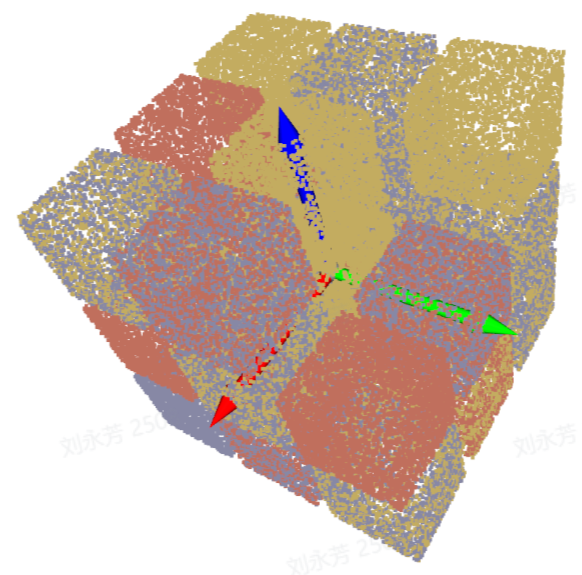

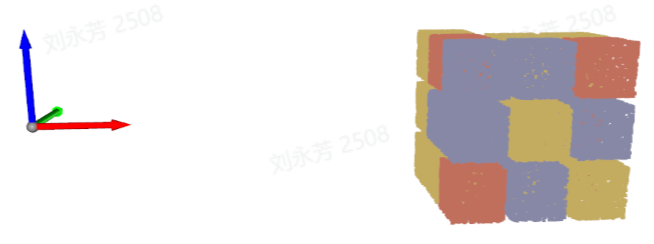

1.5.1 Base Coords

- Function Introduction

Set a unified coordinate system for all instances to group and sort instances

- Use Case

Common to depalletizing, random picking, and ordered loading/unloading scenarios

A reference coordinate system should be set before using coordinate-related strategies

- Parameter Description

| Parameter | Description | Illustration |

|---|---|---|

| Camera Coords | The origin of the coordinate system is above the object, and the positive Z-axis points downward; XYZ values are the values of the object center point in this coordinate system. |  |

| ROI Coords | The origin of the coordinate system is approximately at the center of the stack, and the positive Z-axis points upward; XYZ values are the values of the object center point in this coordinate system. |  |

| Robot Coords | The origin of the coordinate system is on the Robot itself, and the positive Z-axis generally points upward; XYZ values are the values of the object center point in this coordinate system. |  |

| Pixel Coords | The origin of the coordinate system is at the top-left vertex of the RGB image, and it is a 2D plane coordinate system; X and Y are the x value and y value of the bbox detection box, and Z is 0. |  |

1.5.2 Picking Strategy

- Parameter Description

| Parameter | Description | Default Value |

|---|---|---|

| Strategy | Select which value is used for grouping and sorting, and how to sort. Multiple criteria can be stacked, including XYZ coordinates of the instance point cloud center, bounding box aspect ratio, distance between the instance point cloud center and the ROI center, and so on. They are executed in sequence. | Instance Point Cloud Center X Coordinate from Small to Large (mm) |

| Grouping Step Size | According to the selected strategy, instances are divided into several groups based on the step size. The grouping step size is the interval between two groups. For example, if the strategy is "Instance Point Cloud Center Z Coordinate from Large to Small (mm)", then the Z coordinates of all instance point cloud centers are first sorted from large to small and then grouped by the step size. The corresponding instances are also divided into several groups. | / |

| Extract First Several Groups | After grouping and sorting, how many groups of instances need to be retained | 10000 |

| Strategy Name | Description | Grouping Step Size (Default Value) | Grouping Step Size (Range) | Extract First Several Groups (Default Value) |

|---|---|---|---|---|

| Instance Point Cloud Center XYZ Coordinate from Large to Small / from Small to Large (mm) | Use the XYZ coordinates of each instance point cloud center for grouping and sorting. The reference coordinate system should be set before using this strategy for sorting. | 200.000 | (0, 10000000] | 10000 |

| From the Middle to Both Sides of the XY Coordinate Axis of the Instance Point Cloud Center / from Both Sides to the Middle of the XY Coordinate Axis of the Instance Point Cloud Center (mm) | Use the XY coordinate values of each instance point cloud center and perform grouping and sorting in the direction of "from the middle to both sides" or "from both sides to the middle". The reference coordinate system should be set before using this strategy for sorting. | 200.000 | (0, 10000000] | 10000 |

| Bounding Box Aspect Ratio from Large to Small / from Small to Large | Use the ratio of the longer side to the shorter side of the bounding box for grouping and sorting | 1 | (0, 10000] | 10000 |

| Mask Area from Large to Small / from Small to Large | Use the mask area of each instance for grouping and sorting | 10000 | [1, 10000000] | 10000 |

| Distance from the Instance Point Cloud Center to the ROI Center from Near to Far / from Far to Near (mm) | Use the distance from each instance point cloud center to the center of the ROI coordinate system for grouping and sorting | 200.000 | (0, 10000] | 10000 |

- Example

1.5.3 Custom Grasping Strategy

(1) Function Description

Switch Grasping Strategy to Custom Grasping Strategy, then click Add to add a custom grasping strategy.

Customize the picking order for each Target Object. If it is difficult to achieve picking with the General Grasping Strategy, or if it is difficult to tune suitable parameters because of point cloud noise and other issues, you can consider using the Custom Grasping Strategy.

The Custom Grasping Strategy is applicable to depalletizing and ordered loading/unloading scenarios, but not to random picking scenarios, because the Target Objects used with the Custom Grasping Strategy must be ordered (that is, the order of the Target Objects is fixed).

The Custom Grasping Strategy can only be combined with a single General Grasping Strategy, and the strategy can only be selected as Z coordinate from small to large.

(2) Parameter Description

| Parameter | Description | Default Value | Range | Parameter Tuning |

|---|---|---|---|---|

| IOU Threshold | Represents the overlap threshold between the annotated bbox and the detected bbox. The overlap is used to determine which image's sorting method should be selected for the current Target Object instance sorting. | 0.7 | [0,1] | The larger the threshold, the stricter the matching and the worse the anti-interference capability. Minor shape or position changes may lead to matching failure, or the wrong custom strategy may be matched, resulting in sorting in the wrong order. |

| Pixel Distance Threshold | Represents the size difference between the matchable bbox and the detected bbox. | 100 | [0,1000] | The smaller the threshold, the stricter the matching and the better the anti-interference capability. If the placement of Target Objects between different layers is relatively similar, the wrong custom strategy may still be matched, resulting in an incorrect sorting order. |

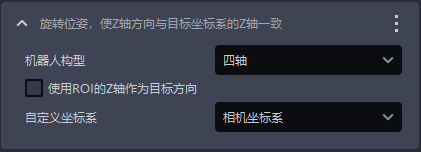
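For reference, the overlap measured by IOU Threshold is the standard intersection-over-union of two boxes. The sketch below assumes axis-aligned `(x_min, y_min, x_max, y_max)` boxes in pixels; the helper `matches_annotation` and its inclusive comparison are hypothetical.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes."""
    inter_w = min(box_a[2], box_b[2]) - max(box_a[0], box_b[0])
    inter_h = min(box_a[3], box_b[3]) - max(box_a[1], box_b[1])
    inter = max(0, inter_w) * max(0, inter_h)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def matches_annotation(annotated_box, detected_box, iou_threshold=0.7):
    """True if the detected bbox overlaps the annotated bbox strongly enough."""
    return iou(annotated_box, detected_box) >= iou_threshold


annotated = (0, 0, 10, 10)
print(matches_annotation(annotated, (1, 0, 11, 10)))  # True  (IoU = 90 / 110)
print(matches_annotation(annotated, (5, 0, 15, 10)))  # False (IoU = 50 / 150)
```

A shift of one tenth of the box width already lowers the IoU to about 0.82, which illustrates why a high threshold makes matching sensitive to small position changes.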

(3) Select the Reference Coordinate System

When using the Custom Grasping Strategy, only the camera coordinate system or the pixel coordinate system can be selected

If there are multiple layers of Target Objects, select the camera coordinate system; if there is only one layer of Target Objects, select the pixel coordinate system

(4) Strategy, Grouping Step Size, Extract First Several Groups

| Parameter | Description | Default Value |

|---|---|---|

| Strategy | Only Instance Point Cloud Center Z Coordinate from Large to Small / from Small to Large (mm) can be selected | / |

| Grouping Step Size | According to the strategy of sorting Z coordinates from small to large, the Z coordinates of instances are sorted from small to large, and the instances are divided into several groups according to the step size | 10000 |

| Extract First Several Groups | After grouping and sorting, how many groups of instances need to be retained | 10000 |

(5) Take Photo / Add Local Image

Click Take Photo to acquire an image from the currently connected camera, or click Add Local Image to import an image locally. For each layer or each different placement form of Target Objects, you need to take a photo or add a local image to obtain one corresponding image. If every layer is the same, only one image is needed. Right-click the image to delete it.

On the acquired image, click and hold the left mouse button while dragging to annotate a bbox. The DELETE key can be used to delete annotated bboxes one by one.

2. 3D Computation

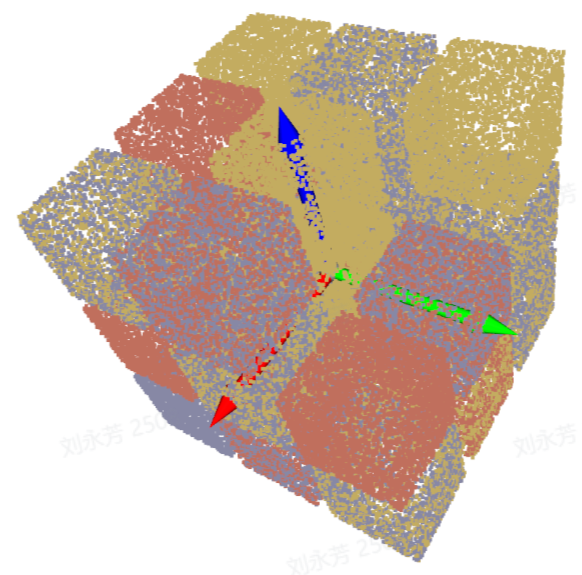

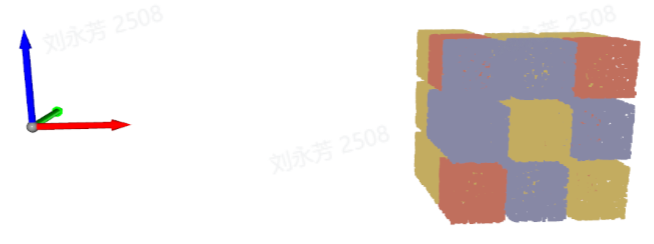

2.1 Preprocessing

The Preprocessing step for 3D computation processes the 3D point cloud before performing pose estimation on instances and generating grasp points. The Planar Workpiece Ordered Loading/Unloading (Parallelized) scene does not require 3D point cloud processing.

2.2 Point Cloud Matching Pose Estimation

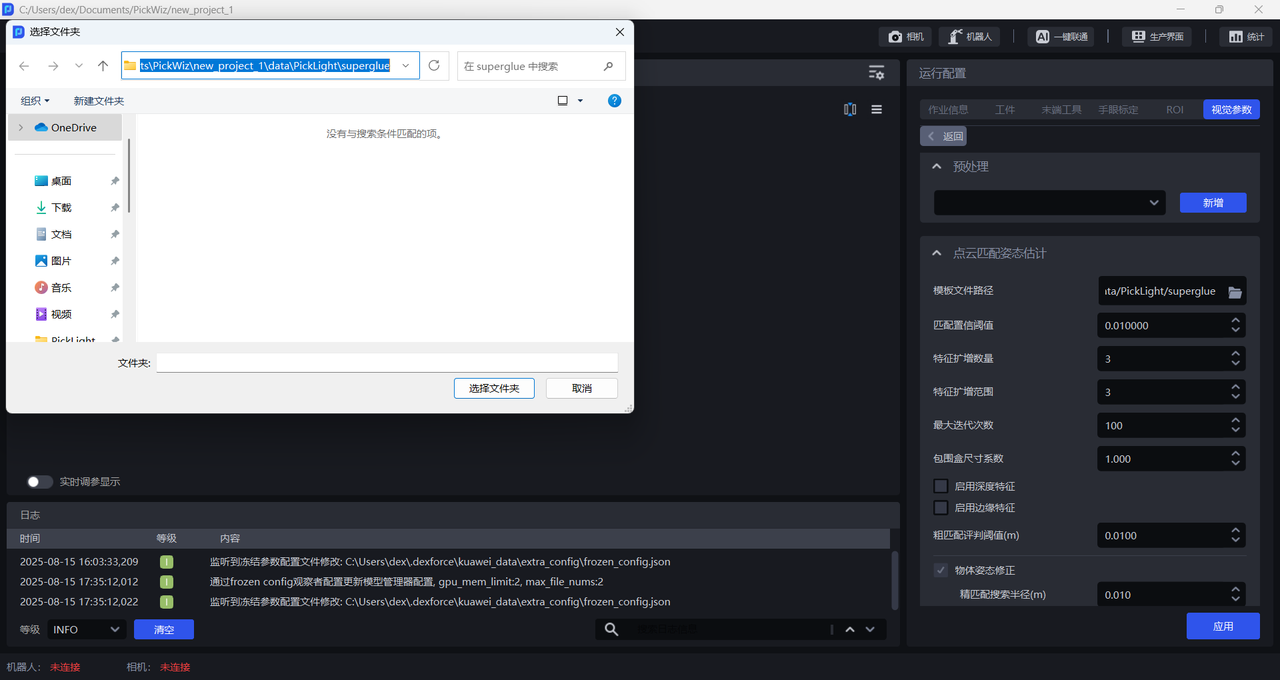

2.2.1 Template File Path

- Function

Upload the point cloud template to match against the instance point cloud from the scene

- Applicable Scenarios

Planar Workpiece Ordered Loading/Unloading (Parallelized) scene

- Parameter Tuning Instructions

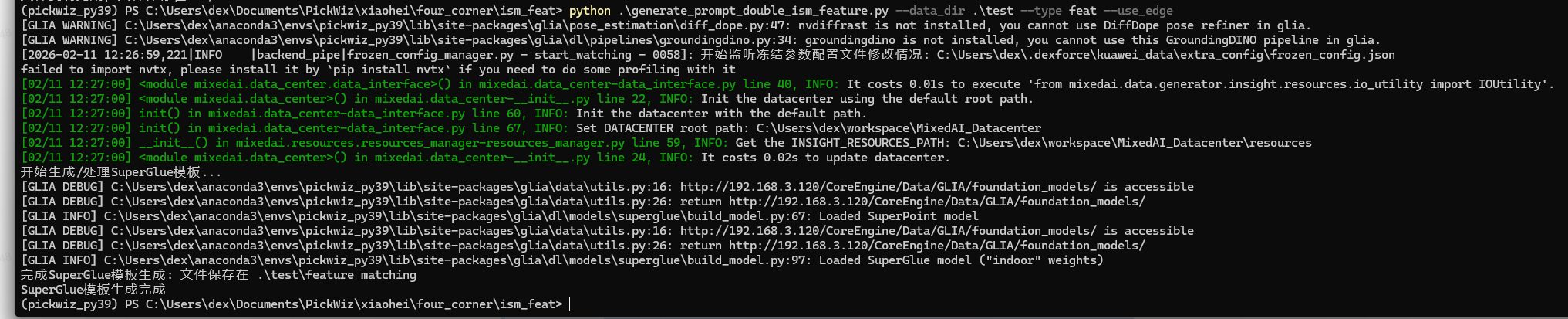

The point cloud template must be created using the template generation script. The procedure is as follows:

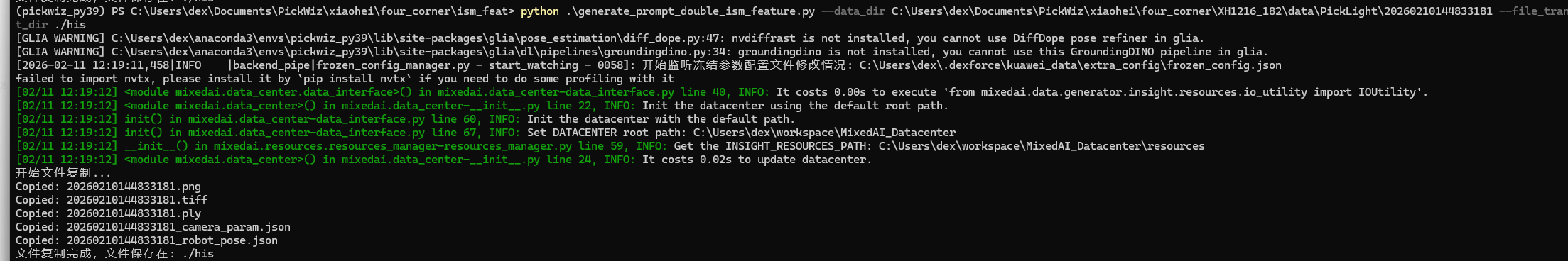

- Copy the Scene's 2D Image, Depth Map, and Point Cloud

(1) Select the timestamp of the historical data to copy

Select a timestamp folder from the PickLight historical data folder (e.g. /home/xxx/PickLight/20240718201333036), and copy its full path for later use.

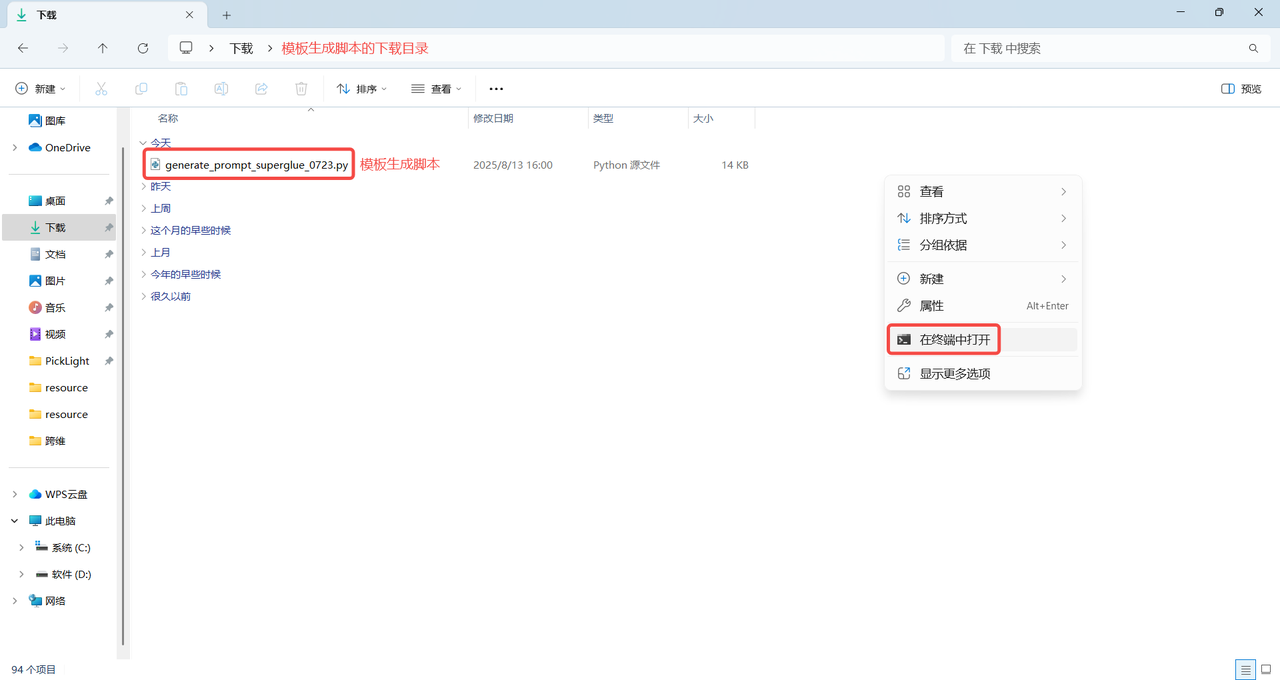

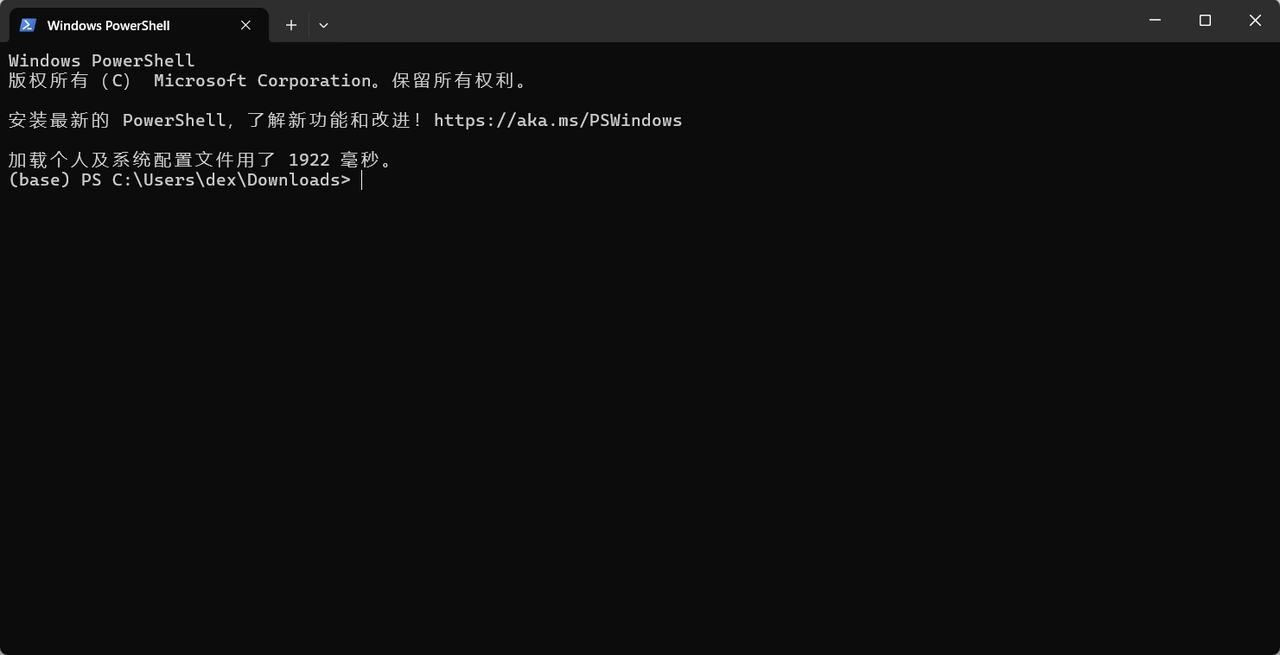

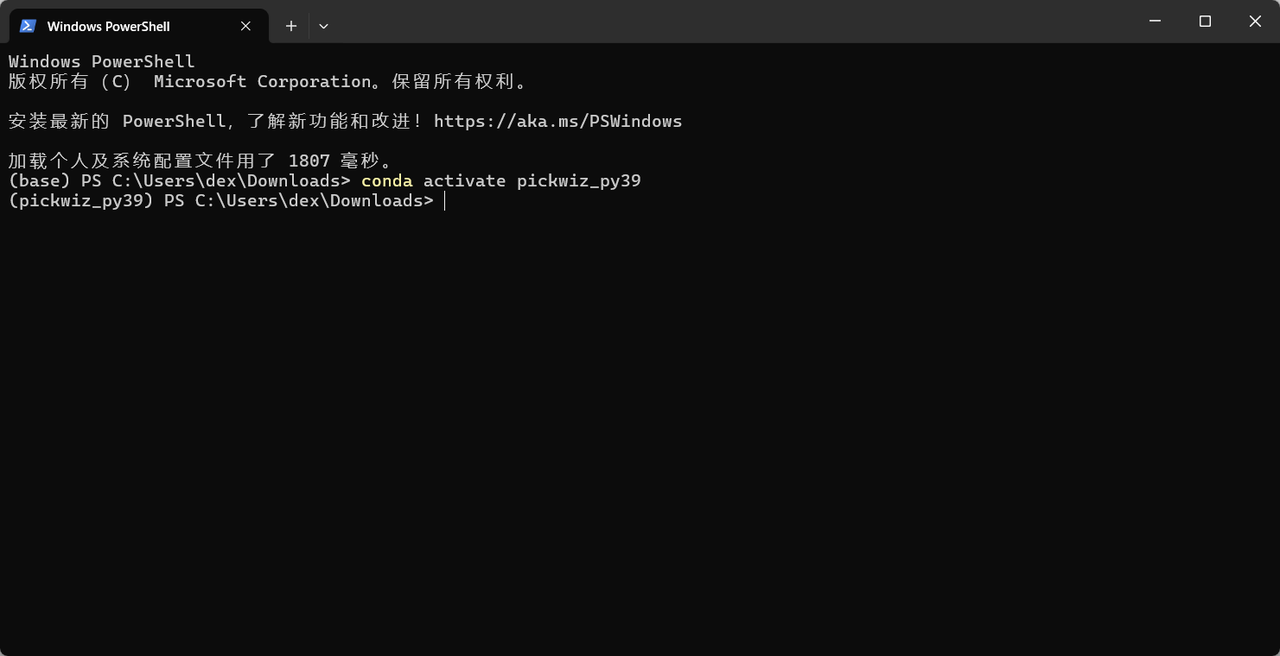

(2) Download the template generation script

Note:

The template generation script must be downloaded in the version corresponding to the software version; otherwise, version incompatibility will cause point cloud template generation to fail.

The download directory for the template generation script must not contain Chinese characters or special characters. It is recommended to store it in the default download directory C:\Users\dex\Downloads
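A quick way to check a candidate directory before downloading is sketched below. The guide does not define "special characters", so the allowed set here (ASCII letters, digits, `_`, `.`, `-`, path separators, and the drive colon) is an assumption that can be adjusted, and the function name is hypothetical.

```python
import re

# Assumption: only these characters are considered safe in the script path.
_SAFE_PATH = re.compile(r"[A-Za-z0-9_.\-\\/:]+")


def is_safe_script_dir(path):
    """True if the path contains only characters from the assumed safe set."""
    return _SAFE_PATH.fullmatch(path) is not None


print(is_safe_script_dir(r"C:\Users\dex\Downloads"))  # True
print(is_safe_script_dir("C:\\Users\\dex\\下载"))      # False
```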

- Template Generation Script

import argparse
import json
import math
import os
import shutil
from copy import deepcopy
import re

import cv2
import numpy as np
import open3d as o3d
from tqdm import tqdm

from PickLight.Utils.Convertor import generate_mask_from_points
from PickLight.Utils.Convertor import get_ratio_from_mask
from PickLight.Utils.Utility import FileOperation

try:
    import glia

    # Note: this is a plain string comparison of the version numbers.
    if not glia.__version__ >= "0.2.4":
        raise RuntimeError(
            "Please upgrade glia to version 0.2.4 or later. Current version: {}".format(glia.__version__)
        )
    from glia.dl.models.superglue import SuperGlueMatcher
except ImportError as e:
    print(f"Warning: {e}")
    SuperGlueMatcher = None

try:
    import torch
except ImportError:
    torch = None

def file_transfer(args):
    """Copy the required files (logic merged from two scripts)."""
    input_dir = args.data_dir
    output_dir = args.output_dir
    os.makedirs(output_dir, exist_ok=True)

    # RGB and depth images
    try:
        for root, dirs, files in os.walk(input_dir):
            target_path = os.path.join(root, 'Builder', 'foreground', 'input')
            if os.path.exists(target_path):
                for file in os.listdir(target_path):
                    if file.endswith('.png') or file.endswith('.tiff'):
                        full_file_path = os.path.join(target_path, file)
                        shutil.copy(full_file_path, output_dir)
                        print(f'Copied: {file}')
    except Exception as e:
        raise ValueError(f"Check whether the Builder/foreground/input folder exists under {input_dir}, {e}")

    # PCD point cloud files
    try:
        for root, dirs, files in os.walk(input_dir):
            target_path = os.path.join(root, 'Builder', 'foreground', 'output')
            if os.path.exists(target_path):
                for file in os.listdir(target_path):
                    if file.endswith('.ply'):
                        full_file_path = os.path.join(target_path, file)
                        shutil.copy(full_file_path, output_dir)
                        print(f'Copied: {file}')
    except Exception as e:
        # Try the path used by the second script
        try:
            for root, dirs, files in os.walk(input_dir):
                target_path = os.path.join(root, 'Builder', 'foreground', 'input')
                if os.path.exists(target_path):
                    for file in os.listdir(target_path):
                        if file.endswith('.ply'):
                            full_file_path = os.path.join(target_path, file)
                            shutil.copy(full_file_path, output_dir)
                            print(f'Copied: {file}')
        except Exception as e2:
            raise ValueError(
                f"Check whether the Builder/foreground/output or input folder exists under {input_dir}, {e2}"
            )

    # JSON configuration files
    try:
        for root, dirs, files in os.walk(input_dir):
            target_path = os.path.join(root, 'Builder', 'foreground', 'input')
            if os.path.exists(target_path):
                for file in os.listdir(target_path):
                    # Accommodate the different requirements of the two scripts
                    if file.endswith('.json') and (args.type in ['superglue', 'both'] or 'camera_param' in file):
                        full_file_path = os.path.join(target_path, file)
                        shutil.copy(full_file_path, output_dir)
                        print(f'Copied: {file}')
    except Exception as e:
        raise ValueError(f"Check whether JSON files exist under {input_dir}, {e}")

def _list_indices_by_json(dir_path):
    """List the indices of the existing model_info_{i}.json files in the directory."""
    if not os.path.isdir(dir_path):
        return []
    pat = re.compile(r"model_info_(\d+)\.json$")
    idxs = []
    for f in os.listdir(dir_path):
        m = pat.match(f)
        if m:
            idxs.append(int(m.group(1)))
    return sorted(idxs)

def _get_next_index(dir_path):
    """Return the next available index."""
    idxs = _list_indices_by_json(dir_path)
    if not idxs:
        return 0
    return max(idxs) + 1

def _ensure_template_id_in_dir(dir_path, template_id_value):
    """Write template_id into every model_info_{i}.json in the directory (only where it is missing)."""
    for i in _list_indices_by_json(dir_path):
        p = os.path.join(dir_path, f"model_info_{i}.json")
        try:
            d = json.load(open(p, "r", encoding="utf-8"))
        except Exception:
            continue
        if "template_id" not in d:
            d["template_id"] = int(template_id_value)
            with open(p, "w", encoding="utf-8") as fw:
                json.dump(d, fw, ensure_ascii=False, indent=4)

def _is_superglue_template_dir(dir_path):
    """Roughly check whether the directory is a SuperGlue template directory."""
    if not os.path.isdir(dir_path):
        return False
    js = _list_indices_by_json(dir_path)
    if not js:
        return False
    # Must contain at least temp_0.png or depth_model_0.tiff
    has_temp = any(os.path.exists(os.path.join(dir_path, f"temp_{i}.png")) for i in js)
    has_depth = any(os.path.exists(os.path.join(dir_path, f"depth_model_{i}.tiff")) for i in js)
    return has_temp or has_depth

def _copy_one_template_block(src_dir, src_idx, dst_dir, dst_idx, template_id):
    """Copy the template files for one index from src to dst, rename them to the new index, and write template_id."""
    patterns = [
        ("temp_{i}.png", "temp_{j}.png"),
        ("depth_model_{i}.tiff", "depth_model_{j}.tiff"),
        ("model_{i}.ply", "model_{j}.ply"),
        ("model_{i}_downsampled.ply", "model_{j}_downsampled.ply"),
        ("keypoints_{i}.ply", "keypoints_{j}.ply"),
        ("temp_vis_{i}.jpg", "temp_vis_{j}.jpg"),
        ("depth_mask_uint8_{i}.png", "depth_mask_uint8_{j}.png"),
    ]
    os.makedirs(dst_dir, exist_ok=True)
    for src_pat, dst_pat in patterns:
        s = os.path.join(src_dir, src_pat.format(i=src_idx))
        d = os.path.join(dst_dir, dst_pat.format(j=dst_idx))
        if os.path.exists(s):
            shutil.copy(s, d)

    # Handle model_info
    src_info = os.path.join(src_dir, f"model_info_{src_idx}.json")
    dst_info = os.path.join(dst_dir, f"model_info_{dst_idx}.json")
    if os.path.exists(src_info):
        try:
            data = json.load(open(src_info, "r", encoding="utf-8"))
        except Exception:
            data = {}
        data["template_id"] = int(template_id)
        with open(dst_info, "w", encoding="utf-8") as f:
            json.dump(data, f, ensure_ascii=False, indent=4)

def merge_superglue_folders(target_dir, source_dirs):
    """Function 1: merge multiple SuperGlue template directories into target_dir, appending in order and writing template_id."""
    if not _is_superglue_template_dir(target_dir):
        raise RuntimeError(f"The target path is not a valid SuperGlue template directory: {target_dir}")
    for s in source_dirs:
        if not _is_superglue_template_dir(s):
            raise RuntimeError(f"The source path is not a valid SuperGlue template directory: {s}")

    # Write template_id=0 to the templates already in the target (if missing)
    _ensure_template_id_in_dir(target_dir, 0)
    next_idx = _get_next_index(target_dir)

    # Assign template_id values to the source directories, starting from 1
    for folder_tid, src in enumerate(source_dirs, start=1):
        src_indices = _list_indices_by_json(src)
        for i in src_indices:
            _copy_one_template_block(src, i, target_dir, next_idx, folder_tid)
            next_idx += 1
    print(f"Merge complete. Output: {target_dir}")

def _detect_group_dirs(root_dir):
    """Function 2: detect whether root_dir contains multiple data groups (one group per subdirectory)."""
    group_dirs = []
    for name in sorted(os.listdir(root_dir)):
        p = os.path.join(root_dir, name)
        if not os.path.isdir(p):
            continue
        # A group must contain a ply file, any RGB image, a tiff file, and a JSON file with camera_param
        has_ply = any(fn.endswith(".ply") for fn in os.listdir(p))
        has_img = any(fn.lower().endswith((".png", ".jpg", ".jpeg", ".bmp")) for fn in os.listdir(p))
        has_tiff = any(fn.lower().endswith(".tiff") for fn in os.listdir(p))
        has_json = False
        for fn in os.listdir(p):
            if fn.lower().endswith(".json"):
                try:
                    d = json.load(open(os.path.join(p, fn), "r", encoding="utf-8"))
                    if "camera_param" in d:
                        has_json = True
                        break
                except Exception:
                    pass
        if has_ply and has_img and has_tiff and has_json:
            group_dirs.append(p)
    return group_dirs

def search_depth_values(indices, depth_mask, search_radius=3):
    """
    Search the neighborhood of each keypoint for a non-zero depth value.

    Parameters:
    - indices: array of keypoint (x, y) indices, shape (N, 2)
    - depth_mask: initial depth mask, shape (H, W)
    - search_radius: search radius in pixels, default 3

    Returns:
    - depth_values: depth value found for each keypoint (-1 if none was found)
    """
    depth_values = np.full(indices.shape[0], -1, dtype=np.float32)
    for idx, (x, y) in enumerate(indices):
        if depth_mask[y, x] == 0:
            xmin, xmax = max(0, x - search_radius), min(depth_mask.shape[1], x + search_radius + 1)
            ymin, ymax = max(0, y - search_radius), min(depth_mask.shape[0], y + search_radius + 1)
            search_area = depth_mask[ymin:ymax, xmin:xmax]
            non_zero = search_area[search_area != 0]
            if non_zero.size > 0:
                depth_values[idx] = non_zero[0]
        else:
            depth_values[idx] = depth_mask[y, x]
    return depth_values

def rotate_pcd(pcd, angle, original_center=None):
    """Rotate the point cloud about the Z axis by `angle` degrees around `original_center`."""
    if original_center is None:
        original_center = pcd.get_center()
    # Translate the center to the origin
    t_1 = np.array(
        [[1, 0, 0, -original_center[0]], [0, 1, 0, -original_center[1]], [0, 0, 1, -original_center[2]], [0, 0, 0, 1]]
    )
    theta = np.radians(angle)
    cos_theta = np.cos(theta)
    sin_theta = np.sin(theta)
    # Rotate about the Z axis
    t_2 = np.array([[cos_theta, -sin_theta, 0, 0], [sin_theta, cos_theta, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1]])
    # Translate back to the original center
    t_3 = np.array(
        [[1, 0, 0, original_center[0]], [0, 1, 0, original_center[1]], [0, 0, 1, original_center[2]], [0, 0, 0, 1]]
    )
    transform_all = np.dot(t_3, np.dot(t_2, t_1))
    pcd_final = deepcopy(pcd).transform(transform_all)
    return pcd_final, transform_all

def project_pcd_to_rgb(

input_dir,

output_dir,

angle,

auto_scale,

mask_kernel_size,

edge_kernel_size,

index=1,

use_edge=False,

background_color=0,

foreground_color=255,

template_id=None,

base_index=0,

):

"""将点云投影到RGB图像(用于SuperGlue模板)"""

os.makedirs(output_dir, exist_ok=True)

# 使用 base_index 读取基准模板

cam_k_path = os.path.join(input_dir, f"model_info_{base_index}.json")

cam_k = json.load(open(cam_k_path))

cam_k = cam_k["camera_param"]

cam_k = np.asarray(cam_k).reshape(3, 3).astype(np.float32)

pcd_path = os.path.join(input_dir, f"model_{base_index}.ply")

pcd = o3d.io.read_point_cloud(pcd_path)

temp_img_path = os.path.join(input_dir, f"temp_{base_index}.png")

temp_img = cv2.imread(temp_img_path)

project_img = np.zeros(temp_img.shape, dtype=np.uint8)

project_depth = np.zeros(temp_img.shape[:2], dtype=np.float32)

aabb = pcd.get_axis_aligned_bounding_box()

aabb_center = (aabb.get_min_bound() + aabb.get_max_bound()) / 2

pcd_transformed, transform_all = rotate_pcd(pcd, angle, aabb_center)

o3d.io.write_point_cloud(os.path.join(output_dir, f"model_{index}.ply"), pcd_transformed)

f_json_data = open(os.path.join(output_dir, f"model_info_{index}.json"), "w+")

json_saved_data = {}

json_saved_data["camera_param"] = cam_k.tolist()

json_saved_data["transform"] = transform_all.tolist()

json_saved_data["angle"] = angle

json_saved_data["mask_kernel_size"] = mask_kernel_size

json_saved_data["edge_kernel_size"] = edge_kernel_size

json_saved_data["use_edge"] = use_edge

if template_id is not None:

json_saved_data["template_id"] = int(template_id)

json.dump(json_saved_data, f_json_data, indent=4)

f_json_data.close()

rvec = np.array([0.0, 0.0, 0.0])

tvec = np.array([0.0, 0.0, 0.0])

distortion_zeros = np.zeros((5, 1), dtype=np.float32)

pcd_np = np.array(pcd_transformed.points)

points_2d, _ = cv2.projectPoints(pcd_np, rvec, tvec, cam_k, distortion_zeros)

points_2d = points_2d.squeeze(1).reshape(-1, 2)

color_bgr = np.asarray(pcd_transformed.colors)[:, ::-1] * 255

for i, pt in enumerate(points_2d):

project_img[round(pt[1]), round(pt[0]), :] = color_bgr[i]

project_depth[round(pt[1]), round(pt[0])] = pcd_np[i][2]

mask = np.any(project_img != 0, axis=2)

project_img = cv2.cvtColor(project_img, cv2.COLOR_BGR2GRAY)

project_img[project_img == 0] = background_color

if use_edge:

project_img[mask] = foreground_color

project_img = cv2.morphologyEx(

project_img, cv2.MORPH_CLOSE, np.ones((mask_kernel_size, mask_kernel_size), np.uint8)

)

project_img = cv2.cvtColor(project_img, cv2.COLOR_GRAY2BGR)

cv2.imwrite(os.path.join(output_dir, f"depth_model_{index}.tiff"), project_depth)

cv2.imwrite(os.path.join(output_dir, f"temp_{index}.png"), project_img)

concat_img = cv2.hconcat([project_img, temp_img])

cv2.imwrite(os.path.join(output_dir, f"project_img_concat_{index}.jpg"), concat_img)

# 输出 keypoints.ply

if SuperGlueMatcher is not None and torch is not None:

device = 'cuda' if torch.cuda.is_available() else 'cpu'

matching = SuperGlueMatcher().eval().to(device)

matching.set_edge_mode(use_edge)

matching.set_edge_kernel_size(edge_kernel_size)

matching.register(project_img, resolution=(project_img.shape[0], project_img.shape[1]))

keypoints_model_2d = matching.temp_data['keypoints0'][0].cpu().numpy().astype(np.int32)

Keypoint_3D_model = np.zeros((len(keypoints_model_2d), 3), dtype=np.float32)

depth_values = search_depth_values(keypoints_model_2d, project_depth, 5)

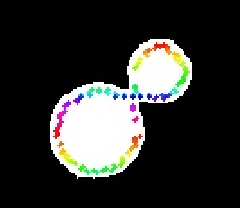

def get_rainbow_color(index, total_points):

hsv = np.array([[[int(255 * index / total_points), 255, 255]]], dtype=np.uint8)

bgr = cv2.cvtColor(hsv, cv2.COLOR_HSV2BGR)[0][0]

return tuple(map(int, bgr))

temp_vis = deepcopy(project_img)

for i in range(len(keypoints_model_2d)):

x, y = keypoints_model_2d[i]

z = depth_values[i]

if z == -1:

Keypoint_3D_model[i] = [0, 0, 0]

continue

Keypoint_3D_model[i] = np.linalg.inv(cam_k) @ (np.array([x, y, 1]) * z)

color = get_rainbow_color(i, len(keypoints_model_2d))

center = (int(round(x)), int(round(y)))

cv2.circle(temp_vis, center, radius=1, color=color, thickness=-1)

cv2.imwrite(os.path.join(output_dir, f"temp_vis_{index}.jpg"), temp_vis)

rotated_model_kpts = o3d.geometry.PointCloud()

rotated_model_kpts.points = o3d.utility.Vector3dVector(Keypoint_3D_model)

rotated_model_kpts.colors = o3d.utility.Vector3dVector([[0, 1, 0] for _ in range(Keypoint_3D_model.shape[0])])

o3d.io.write_point_cloud(os.path.join(output_dir, f"keypoints_{index}.ply"), rotated_model_kpts)

if torch.cuda.is_available():

torch.cuda.empty_cache()

return True

def generate_ism_template(args):

"""生成ISM模板(第一个脚本的功能)"""

data_dir = args.data_dir

rotation_range = args.rot_range

rotation_interval = args.rot_interval

rotation_range_xy = args.rot_range_xy

depth_range = args.depth_range

depth_interval = args.depth_interval

template_path = None

ply_path = None

pcd = None

# 查找所需文件

for f in os.listdir(data_dir):

template_path = os.path.join(data_dir, "template")

os.makedirs(template_path, exist_ok=True)

f_path = os.path.join(data_dir, f)

if f.endswith('.ply'):

ply_path = f_path

pcd = o3d.io.read_point_cloud(ply_path)

pcd.paint_uniform_color([0, 1.0, 0])

o3d.io.write_point_cloud(f"{template_path}/model.ply", pcd)

if f.endswith('.png') or f.endswith('.jpg') or f.endswith('.bmp'):

rgb = cv2.imread(f_path)

if len(rgb.shape) == 2:

rgb = cv2.cvtColor(rgb, cv2.COLOR_GRAY2RGB)

h, w, _ = rgb.shape

if f.endswith('.tiff'):

depth = cv2.imread(f_path, -1)

if f.endswith('.json'):

resource_manager = FileOperation.load_json(f_path)

cam_k = resource_manager["camera_param"]

cam_k = np.asarray(cam_k).reshape(3, 3).astype(np.float32)

# 检查文件是否存在

if ply_path is None:

raise ValueError(f"错误! 未在 {data_dir} 路径下找到 点云 文件, 支持后缀为 ply.\n")

if pcd is None:

raise ValueError(f"错误! {ply_path} 路径的点云文件读取失败.\n")

try:

rgb

except NameError:

raise ValueError(f"错误! 未能在 {data_dir} 路径下正确读取 彩色图像 文件, 支持后缀为 png/jpg/bmp.\n")

try:

depth

except NameError:

raise ValueError(f"错误! 未能在 {data_dir} 路径下正确读取 深度图像 文件, 支持后缀为 tiff.\n")

try:

cam_k

except NameError:

raise ValueError(f"错误! 未在 {data_dir} 路径下找到 配置参数 文件, 支持后缀为 json.\n")

# ISM prompt

pcd_center = pcd.get_center()

angles_z = range(-rotation_range, rotation_range, rotation_interval)

mask = generate_mask_from_points(np.array(pcd.points), cam_k, h, w, auto_scale=1)

# ISM prompt

mask_uint8 = cv2.normalize(mask, None, 0, 255, cv2.NORM_MINMAX).astype(np.uint8)

rgb_mask = deepcopy(rgb)

rgb_mask[mask == 0] = 0

# 将 mask 与 rgb 的前景质心平移到图像中心

coords = np.column_stack(np.where(mask_uint8 > 0))

if coords.size > 0:

cy, cx = coords.mean(axis=0)

center_x = w / 2.0

center_y = h / 2.0

dx = center_x - cx

dy = center_y - cy

T = np.float32([[1, 0, dx], [0, 1, dy]])

rgb_mask = cv2.warpAffine(

rgb_mask, T, (w, h), flags=cv2.INTER_LINEAR, borderMode=cv2.BORDER_CONSTANT, borderValue=(0, 0, 0)

)

mask_uint8 = cv2.warpAffine(

mask_uint8, T, (w, h), flags=cv2.INTER_NEAREST, borderMode=cv2.BORDER_CONSTANT, borderValue=0

)

if template_path is not None:

center = (w / 2, h / 2)

new_w = int(math.sqrt(w**2 + h**2))

new_h = new_w

main_i = 0

for angle in tqdm(angles_z, desc="生成ISM旋转模板"):

M = cv2.getRotationMatrix2D(center, angle, 1.0)

M[0, 2] += (new_w - w) / 2

M[1, 2] += (new_h - h) / 2

# 生成rgb

rotated_rgb = cv2.warpAffine(

rgb_mask, M, (new_w, new_h), borderMode=cv2.BORDER_REPLICATE, borderValue=(127, 127, 127)

)

save_name_rgb = f"rgb_{main_i}.png"

save_path_rgb = os.path.join(template_path, save_name_rgb)

cv2.imwrite(save_path_rgb, rotated_rgb)

# Generate the rotated mask

rotated_mask = cv2.warpAffine(

mask_uint8, M, (new_w, new_h), borderMode=cv2.BORDER_REPLICATE, borderValue=(127, 127, 127)

)

save_name_mask = f"mask_{main_i}.png"

save_path_mask = os.path.join(template_path, save_name_mask)

cv2.imwrite(save_path_mask, rotated_mask)

main_i += 1

angles_xy = range(-rotation_range_xy, rotation_range_xy + 1, rotation_range_xy) if rotation_range_xy > 0 else [0]

# Set the depth shift range (mm to meters)

if depth_interval > 0:

depth_shifts = np.arange(-depth_range, depth_range + depth_interval, depth_interval) / 1000.0

else:

depth_shifts = [0]

geometric_features_model = []

variants_meta = []

i = 0

for angle_z in tqdm(angles_z, desc="Generating ISM geometric features"):

for angle_x in angles_xy:

for angle_y in angles_xy:

pcd_rotated = deepcopy(pcd)

# Rotate about the Z axis

Rz = pcd_rotated.get_rotation_matrix_from_xyz((0, 0, np.deg2rad(angle_z)))

pcd_rotated.rotate(Rz, center=pcd_center)

# Small rotation about the X axis

if angle_x != 0:

Rx = pcd_rotated.get_rotation_matrix_from_xyz((np.deg2rad(angle_x), 0, 0))

pcd_rotated.rotate(Rx, center=pcd_center)

# Small rotation about the Y axis

if angle_y != 0:

Ry = pcd_rotated.get_rotation_matrix_from_xyz((0, np.deg2rad(angle_y), 0))

pcd_rotated.rotate(Ry, center=pcd_center)

for depth_shift in depth_shifts:

pcd_final = deepcopy(pcd_rotated)

if depth_shift != 0:

pcd_final.translate((0, 0, depth_shift))

# Generate the mask from the rotated and translated point cloud

mask = generate_mask_from_points(np.array(pcd_final.points), cam_k, h, w, auto_scale=2)

mask_uint8 = cv2.normalize(mask, None, 0, 255, cv2.NORM_MINMAX).astype(np.uint8)

obb_ratio = get_ratio_from_mask(mask_uint8)

mask_area = int(np.count_nonzero(mask_uint8 > 0))

if obb_ratio > 0 and mask_area > 0:

geometric_features_model.append([float(obb_ratio), float(mask_area)])

variants_meta.append(

{

"idx": i,

"angle_z": angle_z,

"angle_x": angle_x,

"angle_y": angle_y,

"depth_shift_m": float(depth_shift),

"obb_ratio": float(obb_ratio),

"mask_area": mask_area,

}

)

i += 1

meta = {"geometric_features_model": geometric_features_model, "variants": variants_meta, "num_variants": i}

with open(os.path.join(template_path, "prompt_meta.json"), "w", encoding="utf-8") as f:

json.dump(meta, f, ensure_ascii=False, indent=2)

print(f"ISM template generation complete: metadata written to {template_path}/prompt_meta.json")

return template_path
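
The loops above sample a grid of Z rotations, small X/Y tilts, and depth shifts. As a rough sketch, assuming the function receives the argparse defaults from `main()` (`--rot_range 60`, `--rot_interval 10`, `--rot_range_xy 5`, `--depth_range 200`, `--depth_interval 200`), the grid can be enumerated as follows. The helper name `ism_variant_grid` is hypothetical and not part of the script.

```python
import itertools

import numpy as np


def ism_variant_grid(rot_range=60, rot_interval=10, rot_range_xy=5,
                     depth_range=200, depth_interval=200):
    """Enumerate (angle_z, angle_x, angle_y, depth_shift_m) in loop order.

    Hypothetical helper: it mirrors the sampling expressions in the listing,
    with defaults assumed to match the argparse defaults.
    """
    # range() excludes the upper bound, so +rot_range itself is not sampled
    angles_z = range(-rot_range, rot_range, rot_interval)
    if rot_range_xy > 0:
        angles_xy = range(-rot_range_xy, rot_range_xy + 1, rot_range_xy)
    else:
        angles_xy = [0]
    if depth_interval > 0:
        # Millimeters converted to meters
        depth_shifts = np.arange(-depth_range, depth_range + depth_interval, depth_interval) / 1000.0
    else:
        depth_shifts = [0]
    return list(itertools.product(angles_z, angles_xy, angles_xy, depth_shifts))


grid = ism_variant_grid()
print(len(grid))
```

With these defaults the grid has 12 × 3 × 3 × 3 = 324 candidates. The `num_variants` value written to `prompt_meta.json` can be smaller, because variants whose `obb_ratio` or `mask_area` is not positive are skipped. Since `range` excludes its upper bound, the largest Z angle sampled is 50 degrees rather than 60.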

def generate_superglue_template(args, start_index=0, template_id=None):

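"""Generate the SuperGlue template (the functionality of the second script)."""

if SuperGlueMatcher is None:

raise ImportError("Failed to import SuperGlueMatcher; make sure the glia version is correct")

if torch is None:

raise ImportError("Failed to import torch; please install PyTorch")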

data_dir = args.data_dir

auto_scale = args.auto_scale

mask_kernel_size = args.mask_kernel_size

edge_kernel_size = args.edge_kernel_size

background_color = args.background_color

foreground_color = args.foreground_color

voxel_size = args.down_sample

ply_path = None

pcd = None

# Locate the required files

for f in os.listdir(data_dir):

f_path = os.path.join(data_dir, f)

if f.endswith('.ply'):

ply_path = f_path

pcd = o3d.io.read_point_cloud(ply_path)

if f.endswith('.png') or f.endswith('.jpg') or f.endswith('.bmp'):

rgb = cv2.imread(f_path)

h, w = rgb.shape[0], rgb.shape[1]

if f.endswith('.tiff'):

depth = cv2.imread(f_path, -1)

if f.endswith('.json'):

with open(f_path) as f_json:

resource_manager = json.load(f_json)

cam_k = resource_manager["camera_param"]

cam_k = np.asarray(cam_k).reshape(3, 3).astype(np.float32)

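# Check that the required files exist

if ply_path is None:

raise ValueError(f"Error: no point cloud file found under {data_dir}; the supported extension is ply.\n")

# o3d.io.read_point_cloud returns an empty cloud rather than None on failure

if pcd is None or len(pcd.points) == 0:

raise ValueError(f"Error: failed to read the point cloud file at {ply_path}.\n")

try:

rgb

except NameError:

raise ValueError(f"Error: failed to read a color image under {data_dir}; supported extensions are png/jpg/bmp.\n")

try:

depth

except NameError:

raise ValueError(f"Error: failed to read a depth image under {data_dir}; the supported extension is tiff.\n")

try:

cam_k

except NameError:

raise ValueError(f"Error: no configuration file found under {data_dir}; the supported extension is json.\n")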

mask = generate_mask_from_points(

np.array(pcd.points), cam_k, h, w, kernel_size=mask_kernel_size, auto_scale=auto_scale

)

mask = cv2.morphologyEx(mask, cv2.MORPH_CLOSE, np.ones((mask_kernel_size, mask_kernel_size), np.uint8))

if rgb.shape[-1] == 3:

rgb = cv2.cvtColor(rgb, cv2.COLOR_BGR2GRAY)

rgb_mask = deepcopy(rgb)

rgb_mask[mask == 0] = background_color

if args.use_edge:

rgb_mask[mask != 0] = foreground_color

depth_mask = deepcopy(depth)

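# Crop the image to the mask bounding box, expanded by a small margin

expand_pixels = 10

coords = cv2.findNonZero(mask)

if coords is None or len(coords) == 0:

raise ValueError("The mask contains no non-zero pixels, so it cannot be cropped")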

x_bbox, y_bbox, w_bbox, h_bbox = cv2.boundingRect(coords)

x_expanded = max(0, x_bbox - expand_pixels)

y_expanded = max(0, y_bbox - expand_pixels)

w_expanded = min(w - x_expanded, w_bbox + 2 * expand_pixels)

h_expanded = min(h - y_expanded, h_bbox + 2 * expand_pixels)

rgb_mask = rgb_mask[y_expanded : y_expanded + h_expanded, x_expanded : x_expanded + w_expanded]

depth_mask = depth_mask[y_expanded : y_expanded + h_expanded, x_expanded : x_expanded + w_expanded]

diameter = math.ceil(np.sqrt(w_expanded * w_expanded + h_expanded * h_expanded))

x_pad = (diameter - w_expanded) // 2

y_pad = (diameter - h_expanded) // 2

rgb_mask_padded = np.zeros([diameter, diameter], dtype=np.uint8)

depth_mask_padded = np.zeros([diameter, diameter], dtype=np.float32)

rgb_mask_padded[y_pad : y_pad + h_expanded, x_pad : x_pad + w_expanded] = rgb_mask

depth_mask_padded[y_pad : y_pad + h_expanded, x_pad : x_pad + w_expanded] = depth_mask

rgb_mask = deepcopy(rgb_mask_padded)

depth_mask = deepcopy(depth_mask_padded)

# Update the camera intrinsic matrix: shift the principal point to account for the crop and padding

cam_k[0, 2] = cam_k[0, 2] - x_expanded + x_pad

cam_k[1, 2] = cam_k[1, 2] - y_expanded + y_pad

# Unified output directory: prefer args.output_superglue_dir, otherwise data_dir/superglue

superglue_path = getattr(args, "output_superglue_dir", None) or os.path.join(data_dir, "superglue")

os.makedirs(superglue_path, exist_ok=True)

index = int(start_index)

base_index = index

use_depth = args.use_depth

use_edge = args.use_edge

# Write model.ply

o3d.io.write_point_cloud(f"{superglue_path}/model_{index}.ply", pcd)

if voxel_size is not None:

pcd_down_sampled = deepcopy(pcd).voxel_down_sample(voxel_size=voxel_size)

o3d.io.write_point_cloud(f"{superglue_path}/model_{index}_downsampled.ply", pcd_down_sampled)

# Register the template and write temp.png and keypoints.ply

temp = deepcopy(rgb_mask)

if use_depth:

temp = deepcopy(depth_mask)

temp = cv2.normalize(temp, None, 0, 255, cv2.NORM_MINMAX).astype(np.uint8)

cv2.imwrite(f"{superglue_path}/depth_mask_uint8_{index}.png", temp)

cv2.imwrite(f"{superglue_path}/depth_model_{index}.tiff", depth_mask)

cv2.imwrite(f"{superglue_path}/temp_{index}.png", temp)

# Write keypoints.ply

device = 'cuda' if torch.cuda.is_available() else 'cpu'

matching = SuperGlueMatcher().eval().to(device)

if use_edge:

matching.set_edge_mode(True)

matching.set_edge_kernel_size(edge_kernel_size)

matching.register(temp, resolution=(temp.shape[0], temp.shape[1]))

keypoints_model_2d = matching.temp_data['keypoints0'][0].cpu().numpy().astype(np.int32)

Keypoint_3D_model = np.zeros((len(keypoints_model_2d), 3), dtype=np.float32)

depth_values = search_depth_values(keypoints_model_2d, depth_mask, 5)

temp_vis = deepcopy(rgb_mask)

temp_vis = cv2.cvtColor(temp_vis, cv2.COLOR_GRAY2BGR)

def get_rainbow_color(index, total_points):

# OpenCV 8-bit HSV hue spans 0-179, so scale the index into that range

hsv = np.array([[[int(179 * index / total_points), 255, 255]]], dtype=np.uint8)

bgr = cv2.cvtColor(hsv, cv2.COLOR_HSV2BGR)[0][0]

return tuple(map(int, bgr))

for i in range(len(keypoints_model_2d)):

x, y = keypoints_model_2d[i]

z = depth_values[i]

if z == -1:

Keypoint_3D_model[i] = [0, 0, 0]

continue

Keypoint_3D_model[i] = np.linalg.inv(cam_k) @ (np.array([x, y, 1]) * z)

color = get_rainbow_color(i, len(keypoints_model_2d))

center = (int(round(x)), int(round(y)))

cv2.circle(temp_vis, center, radius=1, color=color, thickness=-1)

cv2.imwrite(f"{superglue_path}/temp_vis_{index}.jpg", temp_vis)

pcd_model_keypoints = o3d.geometry.PointCloud()

pcd_model_keypoints.points = o3d.utility.Vector3dVector(Keypoint_3D_model)

pcd_model_keypoints.colors = o3d.utility.Vector3dVector([[0, 1, 0] for _ in range(Keypoint_3D_model.shape[0])])

o3d.io.write_point_cloud(f"{superglue_path}/keypoints_{index}.ply", pcd_model_keypoints)

f_json_data = open(os.path.join(superglue_path, f"model_info_{index}.json"), "w+")

json_saved_data = {}

json_saved_data["camera_param"] = cam_k.tolist()

json_saved_data["transform"] = np.eye(4).tolist()

json_saved_data["angle"] = 0.0

json_saved_data["mask_kernel_size"] = mask_kernel_size

json_saved_data["edge_kernel_size"] = edge_kernel_size

json_saved_data["use_edge"] = args.use_edge

if template_id is not None:

json_saved_data["template_id"] = int(template_id)

json.dump(json_saved_data, f_json_data, indent=4)

f_json_data.close()

if args.multi_temp:

for k, angle in enumerate(args.angle):

project_pcd_to_rgb(

superglue_path,

superglue_path,

angle,

args.auto_scale,

mask_kernel_size,

edge_kernel_size,

index=base_index + 1 + k,

use_edge=args.use_edge,

background_color=background_color,

foreground_color=foreground_color,

template_id=template_id,

base_index=base_index,

)

# Save the point cloud of the group's template 0 as multi_model_{template_id}.ply (if multi-template mode is enabled)

if template_id is not None:

src = os.path.join(superglue_path, f"model_{base_index}.ply")

dst = os.path.join(superglue_path, f"multi_model_{template_id}.ply")

if os.path.exists(src):

shutil.copy(src, dst)

if voxel_size is not None:

# Generate multi_model_{template_id}_downsampled.ply

pcd0 = o3d.io.read_point_cloud(src)

pcd0_ds = deepcopy(pcd0).voxel_down_sample(voxel_size=voxel_size)

o3d.io.write_point_cloud(

os.path.join(superglue_path, f"multi_model_{template_id}_downsampled.ply"), pcd0_ds

)

if torch.cuda.is_available():

torch.cuda.empty_cache()

print(f"SuperGlue template generation complete: files saved to {superglue_path}")

# Return the next available index (computed from base_index)

end_next_index = base_index + (len(args.angle) + 1 if args.multi_temp else 1)

return superglue_path, end_next_index
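
In `generate_superglue_template`, the crop-and-pad step shifts the principal point by `(x_pad - x_expanded, y_pad - y_expanded)`, and keypoints are then back-projected with the inverse intrinsics. The sketch below checks that a pixel in the original image and its counterpart in the cropped and padded image map to the same 3D point. The helper names and all numeric values are made up for illustration and do not come from the script.

```python
import numpy as np


def shift_principal_point(cam_k, x_crop, y_crop, x_pad, y_pad):
    """Return a copy of cam_k whose principal point follows a crop, then a pad."""
    k = cam_k.copy()
    k[0, 2] = k[0, 2] - x_crop + x_pad
    k[1, 2] = k[1, 2] - y_crop + y_pad
    return k


def backproject(cam_k, u, v, z):
    """Back-project pixel (u, v) at depth z to a 3D point in the camera frame."""
    return np.linalg.inv(cam_k) @ (np.array([u, v, 1.0]) * z)


# Illustrative intrinsics and offsets (assumed values)
cam_k = np.array([[1000.0, 0.0, 640.0],
                  [0.0, 1000.0, 360.0],
                  [0.0, 0.0, 1.0]])
x_crop, y_crop, x_pad, y_pad = 200, 100, 30, 45
k_new = shift_principal_point(cam_k, x_crop, y_crop, x_pad, y_pad)

# A pixel in the original image and the same pixel after crop and pad
u, v, z = 500.0, 300.0, 0.8
p_orig = backproject(cam_k, u, v, z)
p_new = backproject(k_new, u - x_crop + x_pad, v - y_crop + y_pad, z)

print(np.allclose(p_orig, p_new))
```

Because only the pixel offsets change, the focal lengths stay as they are. A resize, by contrast, would also require scaling `fx` and `fy`.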

def main():

parser = argparse.ArgumentParser(description="Combined script: generate ISM and/or SuperGlue templates")

parser.add_argument(

"--output_superglue_dir",

type=str,

default=None,

help="Unified output directory for SuperGlue templates; defaults to data_dir/superglue if not specified",

)

# Basic parameters

parser.add_argument("data_dir", help="Input data directory")

parser.add_argument(

"--type",

choices=["ism", "superglue", "both"],

default="both",

help="Template type to generate: ism, superglue, or both",

)

parser.add_argument("--file_transfer", action="store_true", default=False, help="Perform the file copy operation")

parser.add_argument("--output_dir", help="Output directory for the file copy")

# ISM parameters

parser.add_argument("--rot_range", type=int, default=60, help="Z-axis rotation range (degrees)")

parser.add_argument("--rot_interval", type=int, default=10, help="Z-axis rotation interval (degrees)")

parser.add_argument("--rot_range_xy", type=int, default=5, help="XY small rotation range (degrees)")

parser.add_argument("--depth_range", type=int, default=200, help="Z translation range (mm)")

parser.add_argument("--depth_interval", type=int, default=200, help="Z translation interval (mm)")

# SuperGlue parameters

parser.add_argument("--auto_scale", type=float, default=0.1, help="Scale factor from point cloud to mask")

parser.add_argument("--use_depth", action="store_true", default=False, help="Use the depth image")

parser.add_argument("--use_edge", action="store_true", default=False, help="Use edge mode")

parser.add_argument("--edge_kernel_size", type=int, default=3, help="Edge kernel size")

parser.add_argument("--mask_kernel_size", type=int, default=5, help="Kernel size for morphological operations")

parser.add_argument("--multi_temp", action="store_true", default=False, help="Generate multi-angle templates")

parser.add_argument("--angle", type=float, nargs="+", default=[90, 180, 270], help="Rotation angles")

parser.add_argument("--down_sample", type=float, default=None, help="Voxel size for point cloud downsampling (meters); omit to disable (default None)")

parser.add_argument("--background_color", type=int, default=0, help="Background color in edge mode")

parser.add_argument("--foreground_color", type=int, default=255, help="Foreground color in edge mode")

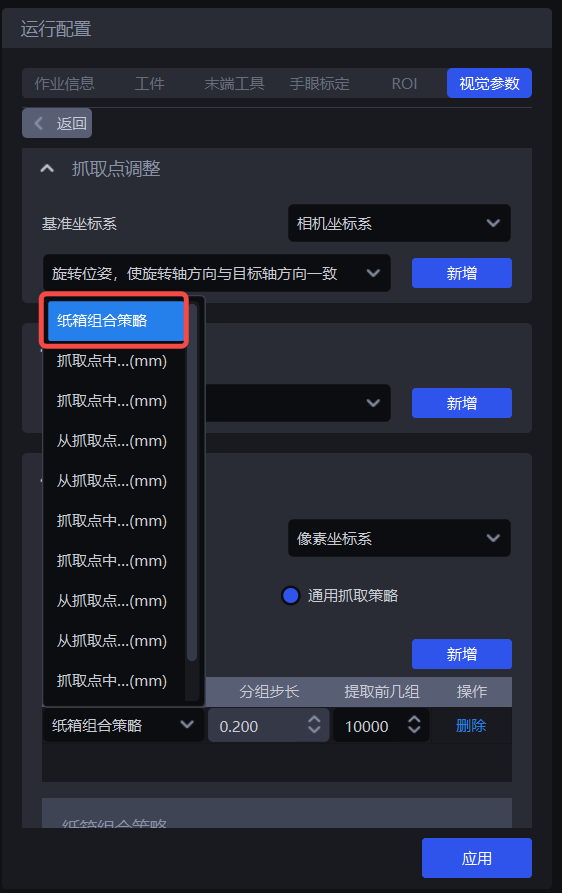

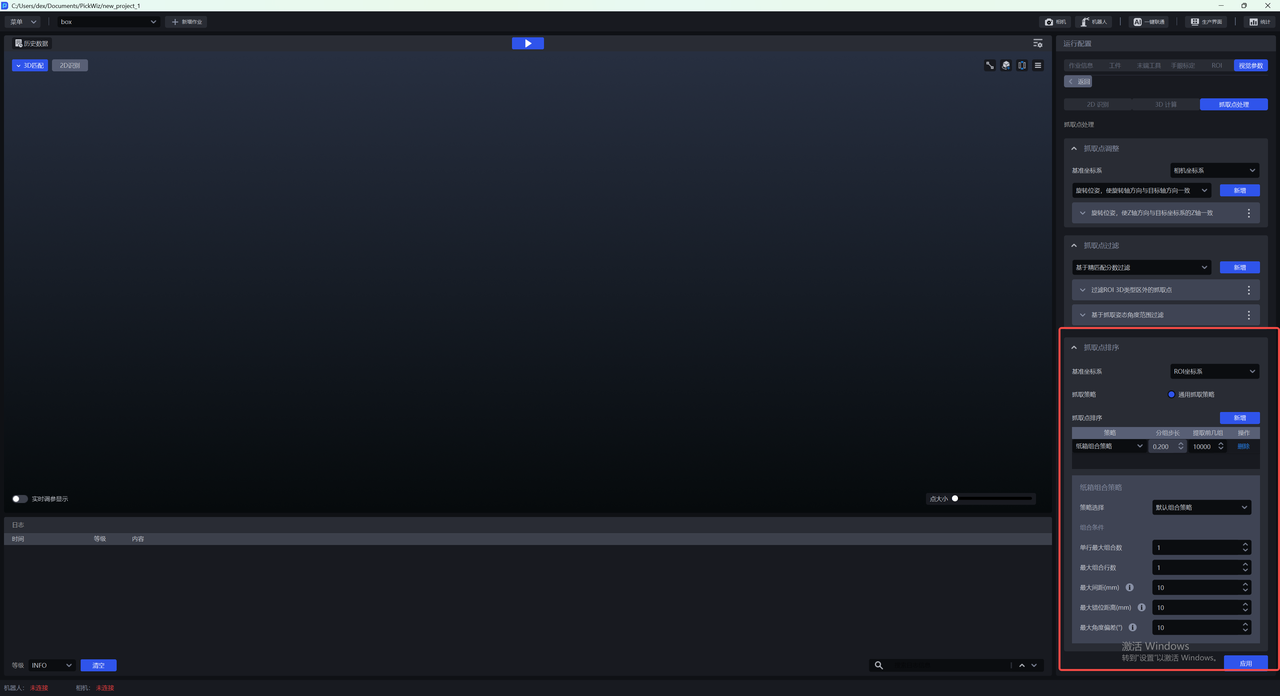

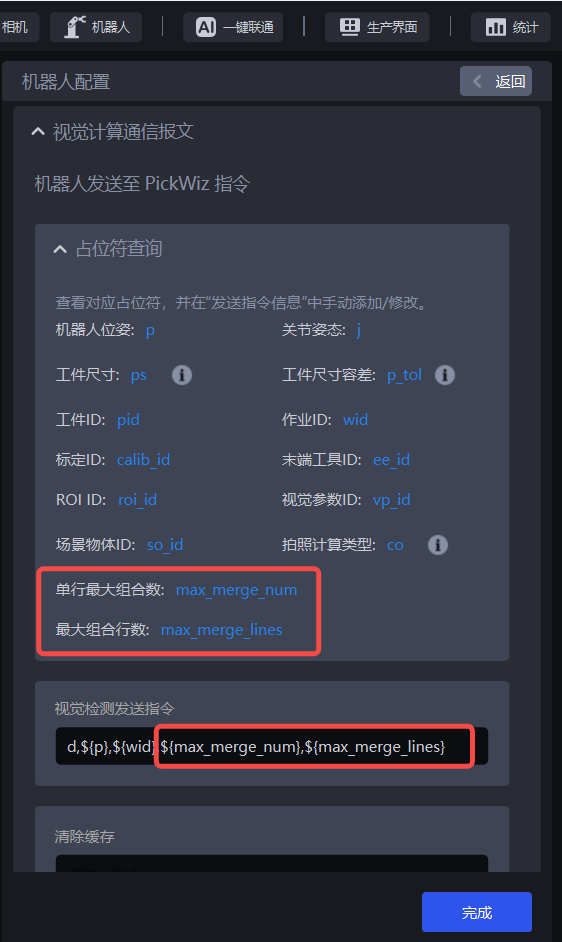

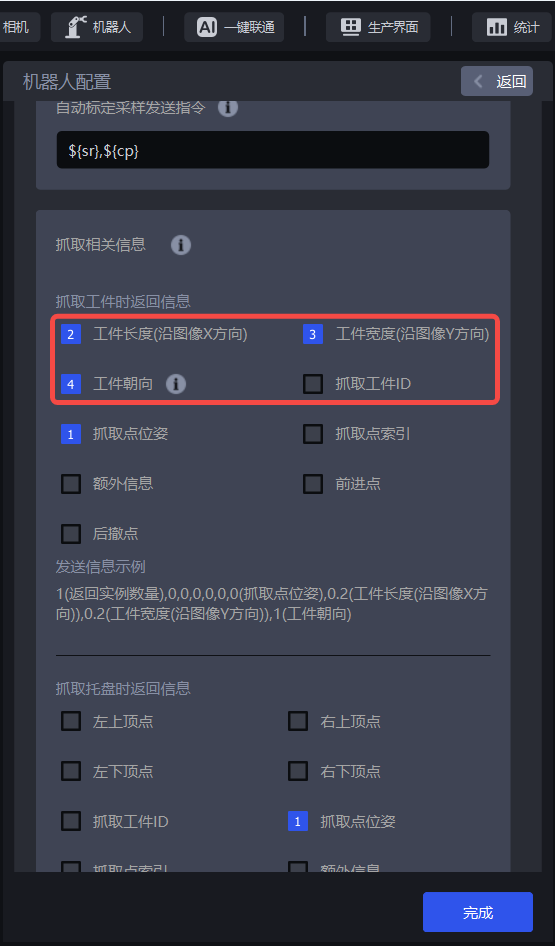

# New: multi-template mode